NAS/Server/Desktop Case

The case costs $44.99.

PINE64 Community

PINE64 is a large, vibrant and diverse community and creates software, documentation and projects. Founded in 2015, it is known for affordable devices that promote user freedom.

PINE64 (www.pine64.org)

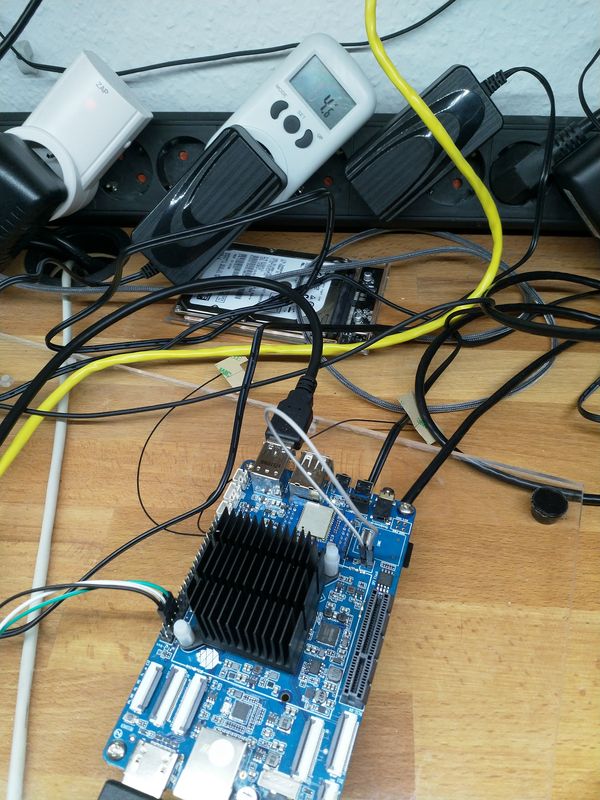

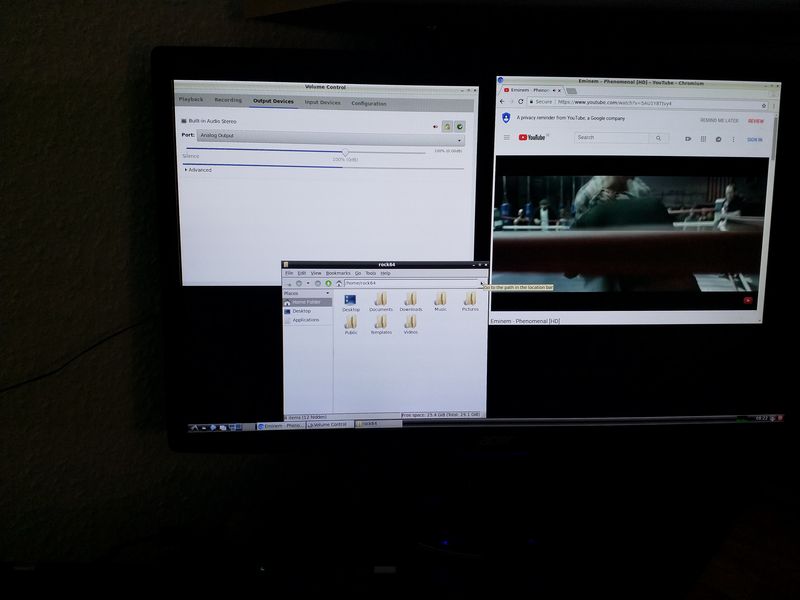

A few pictures from rookieone.
