Benchmarks

-

Hier möchte ich alle Benchmarks usw. sammeln. Bitte unbedingt das vorher lesen! Ich werde die Version des Images dabei schreiben. Einsetzen werde ich ausschließlich folgendes Image.

https://frank-mankel.org/topic/69/bionic-minimal-rockpro64-0-7-x-228-arm64-img-xz

Macht bis jetzt für mich den stabilsten Eindruck.

-

USB2/3 (Version 0.7.3)

Ich benutze eine SAN Disk 240GB SSD an einem Inateck USB 3.0 2,5 Zoll Adapter.

Info zum USB-Adapter

lsusb Bus 004 Device 002: ID 174c:55aa ASMedia Technology Inc. ASM1051E SATA 6Gb/s bridge, ASM1053E SATA 6Gb/s bridge, ASM1153 SATA 3Gb/s bridge2,5 Zoll SSD am USB2-Port

sudo dd if=/dev/zero of=sd.img bs=1M count=4096 conv=fdatasync 4096+0 records in 4096+0 records out 4294967296 bytes (4.3 GB, 4.0 GiB) copied, 160.058 s, **26.8 MB/s**2,5 Zoll SSD am USB3 Port

sudo dd if=/dev/zero of=sd.img bs=1M count=4096 conv=fdatasync 4096+0 records in 4096+0 records out 4294967296 bytes (4.3 GB, 4.0 GiB) copied, 36.2588 s, **118 MB/s**Der @tkaiser erreicht deutlich höhere Geschwindigkeiten. Bis zu 400 MB/s. Hier nachzulesen.

Ich habe mich mit @tkaiser noch mal unterhalten. Scheint sehr deutlich ein Problem des Adapters zu sein. Da müsste eigentlich mehr gehen. Mal sehen....

-

7-zip (Version 0.7.3)

Kleiner Stresstest für die CPU

Installation

sudo apt-get install p7zip p7zip-full p7zip-rarTest

7zr b 7-Zip (a) [64] 16.02 : Copyright (c) 1999-2016 Igor Pavlov : 2016-05-21 p7zip Version 16.02 (locale=de_DE.UTF-8,Utf16=on,HugeFiles=on,64 bits,6 CPUs LE) LE CPU Freq: 904 1276 1530 1721 1794 1793 1793 1794 1794 RAM size: 3876 MB, # CPU hardware threads: 6 RAM usage: 1323 MB, # Benchmark threads: 6 Compressing | Decompressing Dict Speed Usage R/U Rating | Speed Usage R/U Rating KiB/s % MIPS MIPS | KiB/s % MIPS MIPS 22: 4400 502 852 4281 | 92530 522 1513 7891 23: 4227 513 840 4307 | 90668 523 1500 7845 24: 4180 534 842 4495 | 88868 525 1486 7800 25: 4207 564 852 4804 | 86102 526 1457 7663 ---------------------------------- | ------------------------------ Avr: 528 847 4472 | 524 1489 7800 Tot: 526 1168 6136 -

LAN (Version 0.7.3)

Geschwindigkeit der Schnittstelle

iperf3 -c 192.168.3.213 Connecting to host 192.168.3.213, port 5201 [ 4] local 192.168.3.12 port 42350 connected to 192.168.3.213 port 5201 [ ID] Interval Transfer Bandwidth Retr Cwnd [ 4] 0.00-1.00 sec 116 MBytes 971 Mbits/sec 0 921 KBytes [ 4] 1.00-2.00 sec 112 MBytes 941 Mbits/sec 11 460 KBytes [ 4] 2.00-3.00 sec 112 MBytes 941 Mbits/sec 11 339 KBytes [ 4] 3.00-4.00 sec 112 MBytes 941 Mbits/sec 10 355 KBytes [ 4] 4.00-5.00 sec 112 MBytes 942 Mbits/sec 11 339 KBytes [ 4] 5.00-6.00 sec 112 MBytes 941 Mbits/sec 0 382 KBytes [ 4] 6.00-7.00 sec 112 MBytes 941 Mbits/sec 11 324 KBytes [ 4] 7.00-8.00 sec 112 MBytes 942 Mbits/sec 11 243 KBytes [ 4] 8.00-9.00 sec 112 MBytes 941 Mbits/sec 10 315 KBytes [ 4] 9.00-10.00 sec 112 MBytes 942 Mbits/sec 11 308 KBytes - - - - - - - - - - - - - - - - - - - - - - - - - [ ID] Interval Transfer Bandwidth Retr [ 4] 0.00-10.00 sec 1.10 GBytes 944 Mbits/sec 86 sender [ 4] 0.00-10.00 sec 1.10 GBytes 941 Mbits/sec receiver iperf Done. rock64@rockpro64:~$ iperf3 -s ----------------------------------------------------------- Server listening on 5201 ----------------------------------------------------------- Accepted connection from 192.168.3.213, port 35834 [ 5] local 192.168.3.12 port 5201 connected to 192.168.3.213 port 35836 [ ID] Interval Transfer Bandwidth [ 5] 0.00-1.00 sec 108 MBytes 908 Mbits/sec [ 5] 1.00-2.00 sec 112 MBytes 941 Mbits/sec [ 5] 2.00-3.00 sec 112 MBytes 941 Mbits/sec [ 5] 3.00-4.00 sec 112 MBytes 941 Mbits/sec [ 5] 4.00-5.00 sec 112 MBytes 941 Mbits/sec [ 5] 5.00-6.00 sec 112 MBytes 941 Mbits/sec [ 5] 6.00-7.00 sec 112 MBytes 941 Mbits/sec [ 5] 7.00-8.00 sec 112 MBytes 941 Mbits/sec [ 5] 8.00-9.00 sec 112 MBytes 941 Mbits/sec [ 5] 9.00-10.00 sec 112 MBytes 941 Mbits/sec [ 5] 10.00-10.02 sec 1.85 MBytes 930 Mbits/sec - - - - - - - - - - - - - - - - - - - - - - - - - [ ID] Interval Transfer Bandwidth [ 5] 0.00-10.02 sec 0.00 Bytes 0.00 bits/sec sender [ 5] 0.00-10.02 sec 1.09 GBytes 938 Mbits/sec receiver ----------------------------------------------------------- Server listening on 5201 ----------------------------------------------------------- ^Ciperf3: interrupt - the server has terminated -

Speichertest (Version 0.7.3)

Mit dem Tool tinymembench die Geschwindigkeit des Speichers testen.

./tinymembench tinymembench v0.4.9 (simple benchmark for memory throughput and latency) ========================================================================== == Memory bandwidth tests == == == == Note 1: 1MB = 1000000 bytes == == Note 2: Results for 'copy' tests show how many bytes can be == == copied per second (adding together read and writen == == bytes would have provided twice higher numbers) == == Note 3: 2-pass copy means that we are using a small temporary buffer == == to first fetch data into it, and only then write it to the == == destination (source -> L1 cache, L1 cache -> destination) == == Note 4: If sample standard deviation exceeds 0.1%, it is shown in == == brackets == ========================================================================== C copy backwards : 2868.1 MB/s (0.3%) C copy backwards (32 byte blocks) : 2860.8 MB/s C copy backwards (64 byte blocks) : 2851.0 MB/s C copy : 2724.3 MB/s (0.1%) C copy prefetched (32 bytes step) : 2775.6 MB/s C copy prefetched (64 bytes step) : 2778.9 MB/s C 2-pass copy : 2546.9 MB/s C 2-pass copy prefetched (32 bytes step) : 2577.6 MB/s C 2-pass copy prefetched (64 bytes step) : 2577.3 MB/s C fill : 4897.9 MB/s (0.4%) C fill (shuffle within 16 byte blocks) : 4895.2 MB/s C fill (shuffle within 32 byte blocks) : 4896.9 MB/s C fill (shuffle within 64 byte blocks) : 4898.0 MB/s --- standard memcpy : 2841.6 MB/s standard memset : 4897.1 MB/s (0.4%) --- NEON LDP/STP copy : 2842.3 MB/s NEON LDP/STP copy pldl2strm (32 bytes step) : 2863.3 MB/s (0.3%) NEON LDP/STP copy pldl2strm (64 bytes step) : 2863.2 MB/s NEON LDP/STP copy pldl1keep (32 bytes step) : 2784.3 MB/s NEON LDP/STP copy pldl1keep (64 bytes step) : 2777.8 MB/s NEON LD1/ST1 copy : 2839.5 MB/s NEON STP fill : 4896.0 MB/s (0.4%) NEON STNP fill : 4862.2 MB/s ARM LDP/STP copy : 2841.1 MB/s ARM STP fill : 4896.6 MB/s (0.4%) ARM STNP fill : 4861.4 MB/s ========================================================================== == Framebuffer read tests. == == == == Many ARM devices use a part of the system memory as the framebuffer, == == typically mapped as uncached but with write-combining enabled. == == Writes to such framebuffers are quite fast, but reads are much == == slower and very sensitive to the alignment and the selection of == == CPU instructions which are used for accessing memory. == == == == Many x86 systems allocate the framebuffer in the GPU memory, == == accessible for the CPU via a relatively slow PCI-E bus. Moreover, == == PCI-E is asymmetric and handles reads a lot worse than writes. == == == == If uncached framebuffer reads are reasonably fast (at least 100 MB/s == == or preferably >300 MB/s), then using the shadow framebuffer layer == == is not necessary in Xorg DDX drivers, resulting in a nice overall == == performance improvement. For example, the xf86-video-fbturbo DDX == == uses this trick. == ========================================================================== NEON LDP/STP copy (from framebuffer) : 606.2 MB/s NEON LDP/STP 2-pass copy (from framebuffer) : 560.0 MB/s NEON LD1/ST1 copy (from framebuffer) : 672.9 MB/s NEON LD1/ST1 2-pass copy (from framebuffer) : 614.2 MB/s ARM LDP/STP copy (from framebuffer) : 451.0 MB/s ARM LDP/STP 2-pass copy (from framebuffer) : 433.7 MB/s ========================================================================== == Memory latency test == == == == Average time is measured for random memory accesses in the buffers == == of different sizes. The larger is the buffer, the more significant == == are relative contributions of TLB, L1/L2 cache misses and SDRAM == == accesses. For extremely large buffer sizes we are expecting to see == == page table walk with several requests to SDRAM for almost every == == memory access (though 64MiB is not nearly large enough to experience == == this effect to its fullest). == == == == Note 1: All the numbers are representing extra time, which needs to == == be added to L1 cache latency. The cycle timings for L1 cache == == latency can be usually found in the processor documentation. == == Note 2: Dual random read means that we are simultaneously performing == == two independent memory accesses at a time. In the case if == == the memory subsystem can't handle multiple outstanding == == requests, dual random read has the same timings as two == == single reads performed one after another. == ========================================================================== block size : single random read / dual random read 1024 : 0.0 ns / 0.0 ns 2048 : 0.0 ns / 0.0 ns 4096 : 0.0 ns / 0.0 ns 8192 : 0.0 ns / 0.0 ns 16384 : 0.0 ns / 0.0 ns 32768 : 0.0 ns / 0.0 ns 65536 : 4.5 ns / 7.2 ns 131072 : 6.8 ns / 9.7 ns 262144 : 9.8 ns / 12.8 ns 524288 : 11.4 ns / 14.7 ns 1048576 : 16.3 ns / 22.8 ns 2097152 : 110.8 ns / 169.8 ns 4194304 : 157.2 ns / 213.9 ns 8388608 : 185.0 ns / 234.5 ns 16777216 : 198.8 ns / 244.2 ns 33554432 : 206.9 ns / 249.3 ns 67108864 : 218.7 ns / 261.9 nsVergleichsergebnisse findet man hier.

Speichertest (Version 0.7.5)

Nachdem die Version 0.7.4 unstabil lief, hier die Ergebnisse von 0.7.5

rock64@rockpro64:~/tinymembench$ ./tinymembench tinymembench v0.4.9 (simple benchmark for memory throughput and latency) ========================================================================== == Memory bandwidth tests == == == == Note 1: 1MB = 1000000 bytes == == Note 2: Results for 'copy' tests show how many bytes can be == == copied per second (adding together read and writen == == bytes would have provided twice higher numbers) == == Note 3: 2-pass copy means that we are using a small temporary buffer == == to first fetch data into it, and only then write it to the == == destination (source -> L1 cache, L1 cache -> destination) == == Note 4: If sample standard deviation exceeds 0.1%, it is shown in == == brackets == ========================================================================== C copy backwards : 2668.2 MB/s C copy backwards (32 byte blocks) : 2662.3 MB/s C copy backwards (64 byte blocks) : 2659.3 MB/s C copy : 2673.1 MB/s C copy prefetched (32 bytes step) : 2648.6 MB/s C copy prefetched (64 bytes step) : 2653.3 MB/s C 2-pass copy : 2404.3 MB/s C 2-pass copy prefetched (32 bytes step) : 2441.8 MB/s C 2-pass copy prefetched (64 bytes step) : 2442.8 MB/s (1.1%) C fill : 4808.3 MB/s (0.4%) C fill (shuffle within 16 byte blocks) : 4793.4 MB/s C fill (shuffle within 32 byte blocks) : 4801.1 MB/s (0.4%) C fill (shuffle within 64 byte blocks) : 4810.3 MB/s (0.2%) --- standard memcpy : 2677.8 MB/s standard memset : 4809.4 MB/s (0.4%) --- NEON LDP/STP copy : 2673.6 MB/s NEON LDP/STP copy pldl2strm (32 bytes step) : 2691.4 MB/s (0.9%) NEON LDP/STP copy pldl2strm (64 bytes step) : 2690.8 MB/s NEON LDP/STP copy pldl1keep (32 bytes step) : 2743.8 MB/s (1.1%) NEON LDP/STP copy pldl1keep (64 bytes step) : 2741.6 MB/s NEON LD1/ST1 copy : 2793.6 MB/s NEON STP fill : 4897.8 MB/s (0.6%) NEON STNP fill : 4864.0 MB/s (0.2%) ARM LDP/STP copy : 2802.0 MB/s ARM STP fill : 4898.0 MB/s (0.4%) ARM STNP fill : 4863.8 MB/s (0.2%) ========================================================================== == Framebuffer read tests. == == == == Many ARM devices use a part of the system memory as the framebuffer, == == typically mapped as uncached but with write-combining enabled. == == Writes to such framebuffers are quite fast, but reads are much == == slower and very sensitive to the alignment and the selection of == == CPU instructions which are used for accessing memory. == == == == Many x86 systems allocate the framebuffer in the GPU memory, == == accessible for the CPU via a relatively slow PCI-E bus. Moreover, == == PCI-E is asymmetric and handles reads a lot worse than writes. == == == == If uncached framebuffer reads are reasonably fast (at least 100 MB/s == == or preferably >300 MB/s), then using the shadow framebuffer layer == == is not necessary in Xorg DDX drivers, resulting in a nice overall == == performance improvement. For example, the xf86-video-fbturbo DDX == == uses this trick. == ========================================================================== NEON LDP/STP copy (from framebuffer) : 539.1 MB/s (1.8%) NEON LDP/STP 2-pass copy (from framebuffer) : 522.1 MB/s NEON LD1/ST1 copy (from framebuffer) : 583.0 MB/s NEON LD1/ST1 2-pass copy (from framebuffer) : 564.2 MB/s ARM LDP/STP copy (from framebuffer) : 373.2 MB/s (0.1%) ARM LDP/STP 2-pass copy (from framebuffer) : 418.2 MB/s ========================================================================== == Memory latency test == == == == Average time is measured for random memory accesses in the buffers == == of different sizes. The larger is the buffer, the more significant == == are relative contributions of TLB, L1/L2 cache misses and SDRAM == == accesses. For extremely large buffer sizes we are expecting to see == == page table walk with several requests to SDRAM for almost every == == memory access (though 64MiB is not nearly large enough to experience == == this effect to its fullest). == == == == Note 1: All the numbers are representing extra time, which needs to == == be added to L1 cache latency. The cycle timings for L1 cache == == latency can be usually found in the processor documentation. == == Note 2: Dual random read means that we are simultaneously performing == == two independent memory accesses at a time. In the case if == == the memory subsystem can't handle multiple outstanding == == requests, dual random read has the same timings as two == == single reads performed one after another. == ========================================================================== block size : single random read / dual random read 1024 : 0.0 ns / 0.0 ns 2048 : 0.0 ns / 0.0 ns 4096 : 0.0 ns / 0.0 ns 8192 : 0.0 ns / 0.0 ns 16384 : 0.0 ns / 0.0 ns 32768 : 0.0 ns / 0.0 ns 65536 : 4.1 ns / 6.5 ns 131072 : 6.2 ns / 8.7 ns 262144 : 8.9 ns / 11.6 ns 524288 : 10.3 ns / 13.3 ns 1048576 : 15.0 ns / 21.3 ns 2097152 : 112.3 ns / 173.0 ns 4194304 : 159.5 ns / 217.2 ns 8388608 : 187.3 ns / 237.9 ns 16777216 : 201.0 ns / 246.2 ns 33554432 : 208.5 ns / 250.8 ns 67108864 : 219.7 ns / 264.1 ns -

Cpu Sysbench (Version 0.7.3)

sysbench --test=cpu --cpu-max-prime=20000 run WARNING: the --test option is deprecated. You can pass a script name or path on the command line without any options. sysbench 1.0.11 (using system LuaJIT 2.1.0-beta3) Running the test with following options: Number of threads: 1 Initializing random number generator from current time Prime numbers limit: 20000 Initializing worker threads... Threads started! CPU speed: events per second: 697.34 General statistics: total time: 10.0006s total number of events: 6983 Latency (ms): min: 1.42 avg: 1.43 max: 6.58 95th percentile: 1.42 sum: 9993.83 Threads fairness: events (avg/stddev): 6983.0000/0.00 execution time (avg/stddev): 9.9938/0.00 -

Memtester (Version 0.7.5)

Installation

rock64@rockpro64:~$ sudo apt-get install memtesterTest

Im Beispiel testen wir 3072 MB und zwar einmal. Bei 1024 5 würde man 1024 MB fünfmal testen.

rock64@rockpro64:~$ sudo memtester 3072 1 memtester version 4.3.0 (64-bit) Copyright (C) 2001-2012 Charles Cazabon. Licensed under the GNU General Public License version 2 (only). pagesize is 4096 pagesizemask is 0xfffffffffffff000 want 3072MB (3221225472 bytes) got 3072MB (3221225472 bytes), trying mlock ...locked. Loop 1/1: Stuck Address : ok Random Value : ok Compare XOR : ok Compare SUB : ok Compare MUL : ok Compare DIV : ok Compare OR : ok Compare AND : ok Sequential Increment: ok Solid Bits : ok Block Sequential : ok Checkerboard : ok Bit Spread : ok Bit Flip : ok Walking Ones : ok Walking Zeroes : ok 8-bit Writes : ok 16-bit Writes : ok Done. -

cryptsetup benchmark (v.0.6.44)

Dient dem Test des verbauten Speichers.

Installation

sudo apt-get install cryptsetupTest

rock64@rockpro64:/usr/local/sbin$ cryptsetup benchmark # Tests are approximate using memory only (no storage IO). PBKDF2-sha1 793173 iterations per second for 256-bit key PBKDF2-sha256 1483134 iterations per second for 256-bit key PBKDF2-sha512 499321 iterations per second for 256-bit key PBKDF2-ripemd160 381023 iterations per second for 256-bit key PBKDF2-whirlpool 172463 iterations per second for 256-bit key argon2i 4 iterations, 387040 memory, 4 parallel threads (CPUs) for 256-bit key (requested 2000 ms time) argon2id 4 iterations, 374949 memory, 4 parallel threads (CPUs) for 256-bit key (requested 2000 ms time) # Algorithm | Key | Encryption | Decryption aes-cbc 128b 621.7 MiB/s 851.2 MiB/s serpent-cbc 128b N/A N/A twofish-cbc 128b 80.7 MiB/s 82.7 MiB/s aes-cbc 256b 536.2 MiB/s 759.3 MiB/s serpent-cbc 256b N/A N/A twofish-cbc 256b 81.0 MiB/s 82.7 MiB/s aes-xts 256b 686.9 MiB/s 691.4 MiB/s serpent-xts 256b N/A N/A twofish-xts 256b N/A N/A aes-xts 512b 637.8 MiB/s 638.4 MiB/s serpent-xts 512b N/A N/A twofish-xts 512b N/A N/AZum Vergleich die Ergebnisse meines Haupt-PC's

frank@frank-MS-7A34 ~ $ cryptsetup benchmark # Die Tests sind nur annähernd genau, da sie nicht auf die Festplatte zugreifen. PBKDF2-sha1 1106092 iterations per second PBKDF2-sha256 740519 iterations per second PBKDF2-sha512 555389 iterations per second PBKDF2-ripemd160 668734 iterations per second PBKDF2-whirlpool 262144 iterations per second # Algorithm | Key | Encryption | Decryption aes-cbc 128b 1022,9 MiB/s 3369,1 MiB/s serpent-cbc 128b 94,4 MiB/s 345,8 MiB/s twofish-cbc 128b 189,5 MiB/s 342,5 MiB/s aes-cbc 256b 779,6 MiB/s 2751,3 MiB/s serpent-cbc 256b 96,9 MiB/s 343,8 MiB/s twofish-cbc 256b 195,0 MiB/s 335,0 MiB/s aes-xts 256b 2653,5 MiB/s 2619,4 MiB/s serpent-xts 256b 339,4 MiB/s 339,3 MiB/s twofish-xts 256b 340,5 MiB/s 338,3 MiB/s aes-xts 512b 2294,2 MiB/s 2329,1 MiB/s serpent-xts 512b 327,4 MiB/s 337,8 MiB/s twofish-xts 512b 351,5 MiB/s 343,3 MiB/s -

Gestern mal was praxistaugliches aufgebaut. Auf dem ROCKPro64 einen NFS-Server installiert. Die Freigabe lag auf der NVMe SSD. Das ganze dann auf meinem Haupt-PC gemountet und mal einen Star Wars Film kopiert. 8,5 GB

Konstant 97 MB/s

Das sieht doch schon mal sehr erfreulich aus!

-

iozone Test (0.6.52)

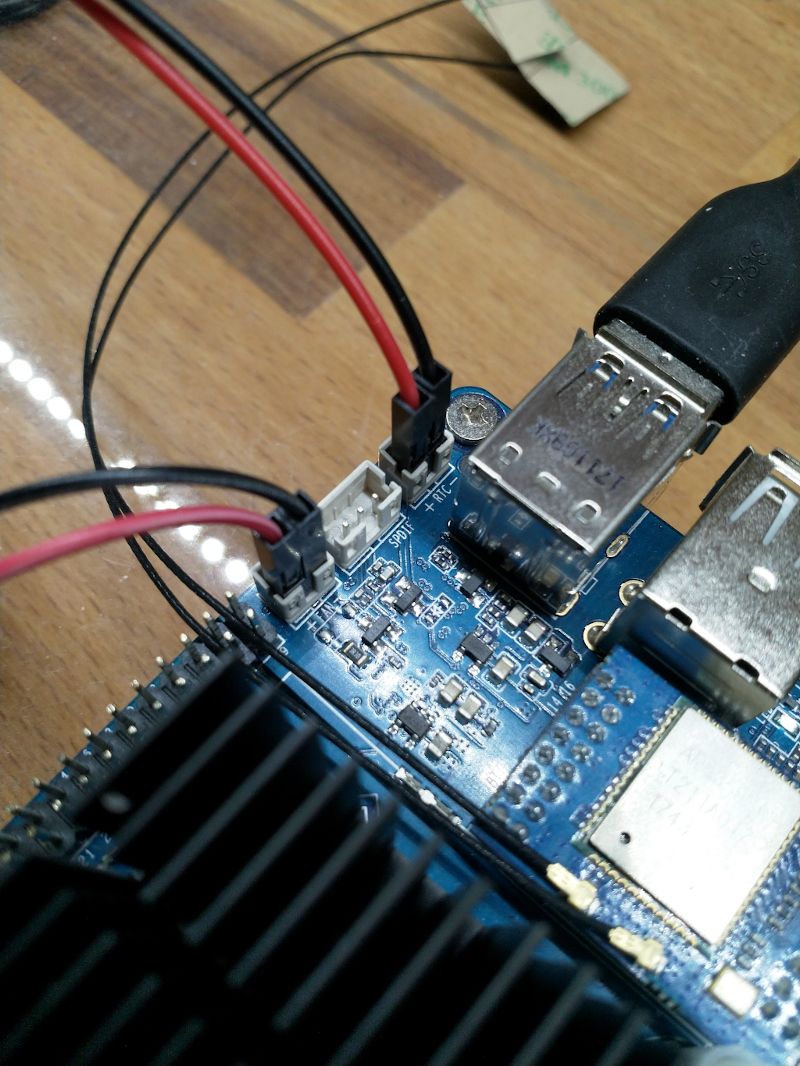

Hardware

Hardware ist eine Samsung EVO 960 m.2 mit 250GB

Eingabe

sudo iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2Ausgabe

Run began: Thu Jun 14 12:04:01 2018 Include fsync in write timing O_DIRECT feature enabled Auto Mode File size set to 102400 kB Record Size 4 kB Record Size 16 kB Record Size 512 kB Record Size 1024 kB Record Size 16384 kB Command line used: iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2 Output is in kBytes/sec Time Resolution = 0.000001 seconds. Processor cache size set to 1024 kBytes. Processor cache line size set to 32 bytes. File stride size set to 17 * record size. random random bkwd record stride kB reclen write rewrite read reread read write read rewrite read fwrite frewrite fread freread 102400 4 40859 79542 101334 101666 31721 60459 102400 16 113215 202566 234307 233091 108334 154750 102400 512 362864 412548 359279 362810 340235 412626 102400 1024 400478 453205 381115 385746 372378 453548 102400 16384 583762 598047 595752 596251 590950 604690Zum direkten Vergleich hier heute mal mit 4.17.0-rc6-1019

rock64@rockpro64:/mnt$ uname -a Linux rockpro64 4.17.0-rc6-1019-ayufan-gfafc3e1c913f #1 SMP PREEMPT Tue Jun 12 19:06:59 UTC 2018 aarch64 aarch64 aarch64 GNU/Linuxiozone Test

rock64@rockpro64:/mnt$ sudo iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2 Iozone: Performance Test of File I/O Version $Revision: 3.429 $ Compiled for 64 bit mode. Build: linux Contributors:William Norcott, Don Capps, Isom Crawford, Kirby Collins Al Slater, Scott Rhine, Mike Wisner, Ken Goss Steve Landherr, Brad Smith, Mark Kelly, Dr. Alain CYR, Randy Dunlap, Mark Montague, Dan Million, Gavin Brebner, Jean-Marc Zucconi, Jeff Blomberg, Benny Halevy, Dave Boone, Erik Habbinga, Kris Strecker, Walter Wong, Joshua Root, Fabrice Bacchella, Zhenghua Xue, Qin Li, Darren Sawyer, Vangel Bojaxhi, Ben England, Vikentsi Lapa. Run began: Sat Jun 16 06:34:43 2018 Include fsync in write timing O_DIRECT feature enabled Auto Mode File size set to 102400 kB Record Size 4 kB Record Size 16 kB Record Size 512 kB Record Size 1024 kB Record Size 16384 kB Command line used: iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2 Output is in kBytes/sec Time Resolution = 0.000001 seconds. Processor cache size set to 1024 kBytes. Processor cache line size set to 32 bytes. File stride size set to 17 * record size. random random bkwd record stride kB reclen write rewrite read reread read write read rewrite read fwrite frewrite fread freread 102400 4 48672 104754 115838 116803 47894 103606 102400 16 168084 276437 292660 295458 162550 273703 102400 512 566572 597648 580005 589209 534508 597007 102400 1024 585621 624443 590545 599177 569452 630098 102400 16384 504871 754710 765558 780592 777696 753426 iozone test complete.

-

-

-

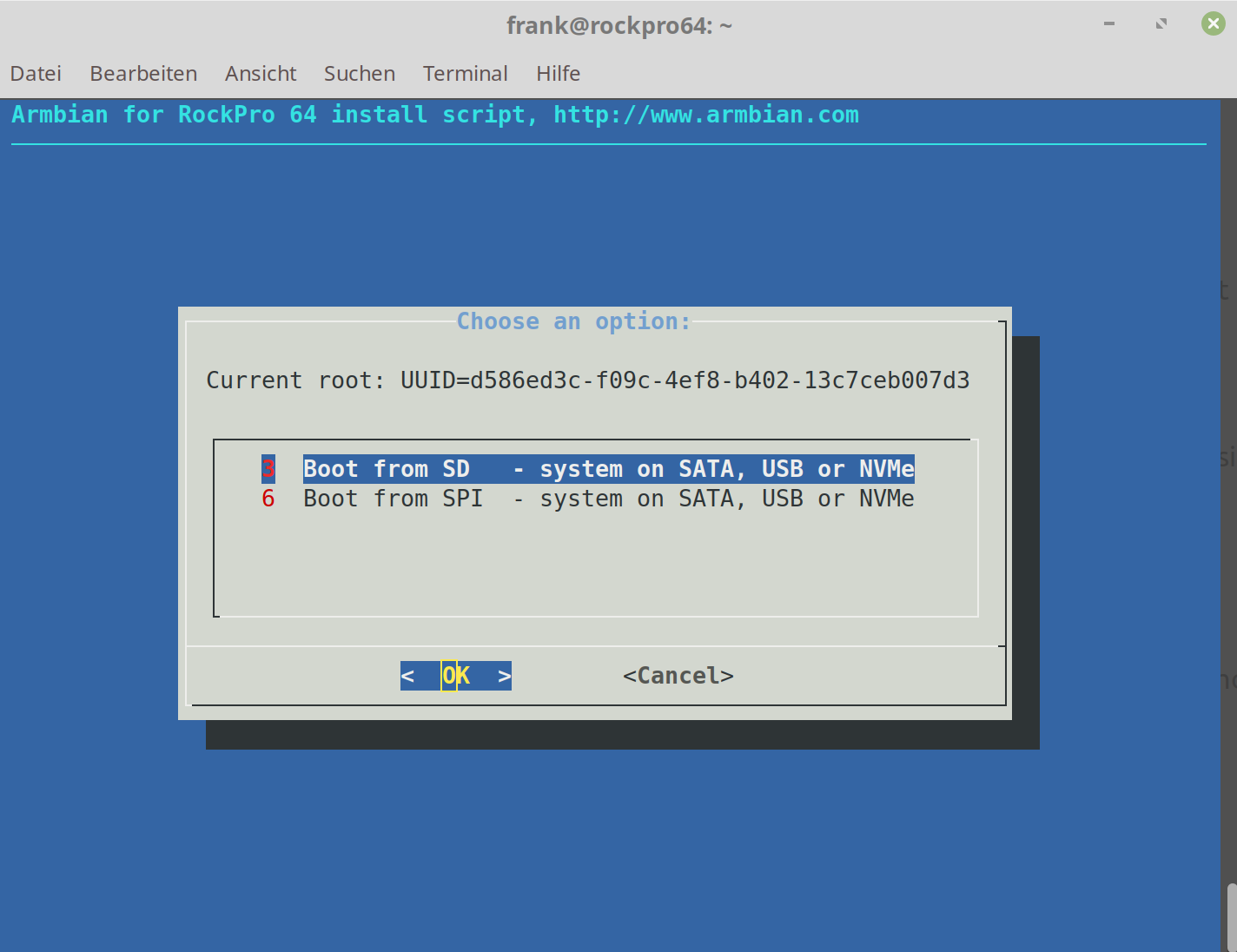

ROCKPro64 - Kernel switchen

Verschoben ROCKPro64 -

-

-

0.6.59 released

Verschoben Archiv -

Image 0.6.57 - NVMe paar Notizen

Verschoben Archiv -

u-boot-erase-spi-rockpro64.img.xz

Verschoben Tools