u-boot-erase-spi-rockpro64.img.xz

Info

- Image name: u-boot-erase-spi-rockpro64.img.xz

- Contents: Tool for erasing the SPI flash

- Download link: https://github.com/ayufan-rock64/linux-u-boot/releases

- Author: Ayufan

Usage

Write the file to an SD card and boot the ROCKPro64 from it. The tool erases the SPI flash so that the board can boot normally from an SD card or eMMC module again.

When the erase has finished, the white LED on the board blinks.
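
For anyone who prefers to script the first step instead of using an imaging tool, here is a minimal sketch in Python. It is not part of Ayufan's release: the function name `write_xz_image` is made up for illustration, and `/dev/sdX` in the usage comment is a placeholder for your SD card device. It decompresses the `.img.xz` on the fly and writes the raw image to the target. Writing to a block device normally needs root and destroys whatever is on the card, so double-check the device path first.

```python
import lzma
import os


def write_xz_image(image_path: str, target_path: str,
                   chunk_size: int = 4 * 1024 * 1024) -> int:
    """Decompress an .xz image and write the raw bytes to target_path.

    Hypothetical helper for illustration. Returns the number of
    decompressed bytes written.
    """
    written = 0
    with lzma.open(image_path, "rb") as src, open(target_path, "wb") as dst:
        while True:
            chunk = src.read(chunk_size)
            if not chunk:
                break
            dst.write(chunk)
            written += len(chunk)
        # Make sure everything has reached the card before it is removed.
        dst.flush()
        os.fsync(dst.fileno())
    return written


# Example call (placeholder device path, replace with your SD card):
# write_xz_image("u-boot-erase-spi-rockpro64.img.xz", "/dev/sdX")
```

After the function returns, the card can be removed and put into the ROCKPro64.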

Status

- August 2018: Works without problems.

On the Fediverse -> @FrankM@nrw.social

- NanoPi R5S

- Quartz64 Model B, 4GB RAM

- Quartz64 Model A, 4GB RAM

- RockPro64 v2.1

-

ROCKPro64 - Debian Bullseye Teil 2

Beobachtet Ignoriert Geplant Angeheftet Gesperrt Verschoben ROCKPro64 debian linux rockpro640 Stimmen3 Beiträge553 Aufrufe -

ROCKPro64 - PCIe NVMe SSD installieren

Beobachtet Ignoriert Geplant Angeheftet Gesperrt Verschoben Hardware linux rockpro640 Stimmen1 Beiträge377 Aufrufe -

ROCKPro64 - Reset per SSH funktioniert nicht (Kernel 4.4.x)

Beobachtet Ignoriert Geplant Angeheftet Gesperrt Verschoben ROCKPro64 rockpro640 Stimmen14 Beiträge2k Aufrufe -

OMV Images

Beobachtet Ignoriert Geplant Angeheftet Gesperrt Verschoben ROCKPro64 rockpro640 Stimmen3 Beiträge1k Aufrufe -

Mainline Kernel 4.20.x

Beobachtet Ignoriert Geplant Angeheftet Gesperrt Verschoben Images rockpro640 Stimmen26 Beiträge5k Aufrufe -

stretch-minimal-rockpro64

Beobachtet Ignoriert Geplant Angeheftet Gesperrt Verschoben Linux rockpro640 Stimmen3 Beiträge1k Aufrufe -

bionic-minimal-rockpro64

Beobachtet Ignoriert Geplant Angeheftet Gesperrt Verschoben Linux rockpro640 Stimmen4 Beiträge1k Aufrufe -

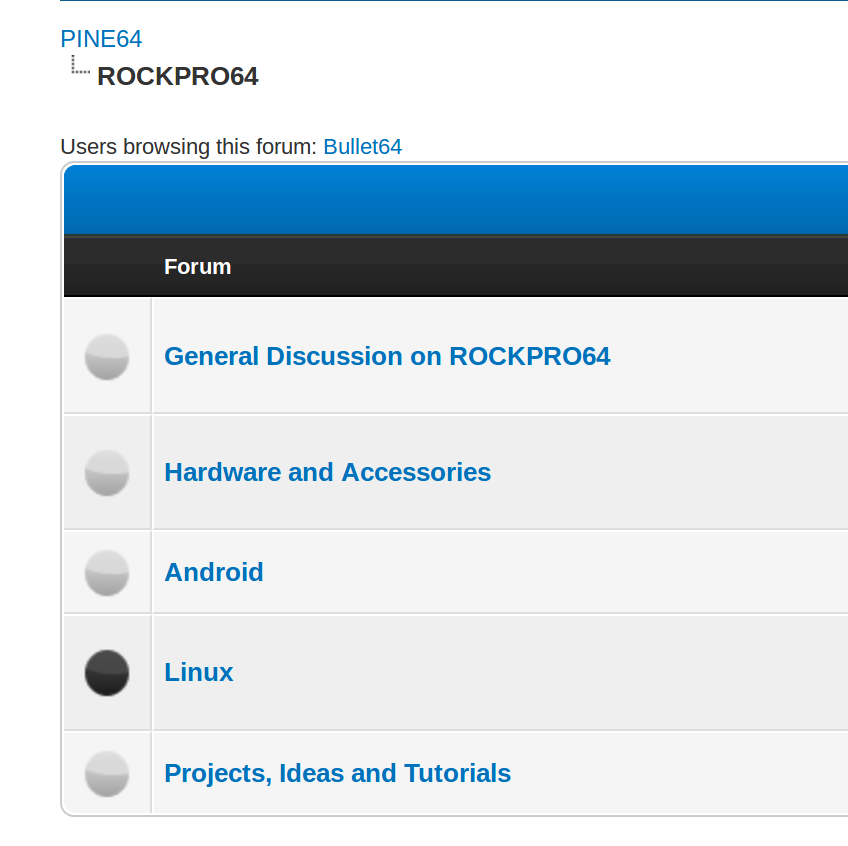

ROCKPro64 Forum

Beobachtet Ignoriert Geplant Angeheftet Gesperrt Verschoben ROCKPro64 rockpro64 1

0 Stimmen1 Beiträge695 Aufrufe

1

0 Stimmen1 Beiträge695 Aufrufe