Kernel 4.4.x

- 4.4.167-1146-rockchip-ayufan

- 4.4.167-1148-rockchip-ayufan

- 4.4.167-1151-rockchip-ayufan

- 4.4.167-1153-rockchip-ayufan

Changes:

- ayufan: rockchip-vpu: fix compilation errors

- ayufan: dts: rockpro64: fix es8316 support

- ayufan: dts: rockpro64: add missing gpu_power_model for MALI

- ayufan: dts: pinebook-pro: fix support for sound-out

Ayufan is preparing the images for the upcoming Pinebook Pro.

- 4.4.167-1155-rockchip-ayufan

- 4.4.167-1157-rockchip-ayufan

- 4.4.167-1159-rockchip-ayufan

- 4.4.167-1161-rockchip-ayufan

Changes:

- ayufan: dts: pinebook-pro: change bt/audio supply according to Android changes

- ayufan: dts: rock64: remove unused ir-receiver

- ayufan: dts: pinebook-pro: fix display port output

4.4.167-1169-rockchip-ayufan released

- nuumio: dts/c: rockpro64: add pcie scan sleep and enable it for rockpro64 (#45)

4.4.167-1175-rockchip-ayufan released

- Old driver is rockchip-drm-rga

4.4.167-1184-rockchip-ayufan released

4.4.167-1185-rockchip-ayufan released

- Revert "PCI: rockchip: Add Rockchip DW PCIe controller support"

- Revert "nuumio: dts/c: rockpro64: add pcie scan sleep and enable it for rockpro64 (#45)"

and many other changes. https://gitlab.com/ayufan-repos/rock64/linux-kernel/commits/release-4.4

4.4.167-1201-rockchip-ayufan released

Our Kamil is quite active at the moment.

You can read up on it here -> https://gitlab.com/ayufan-repos/rock64/linux-kernel/commits/release-4.4

4.4.167-1217-rockchip-ayufan released

Kamil has made many changes over the last few days, mainly for the upcoming Pinebook Pro.

You can read up on it here: https://gitlab.com/ayufan-repos/rock64/linux-kernel/commits/release-4.4

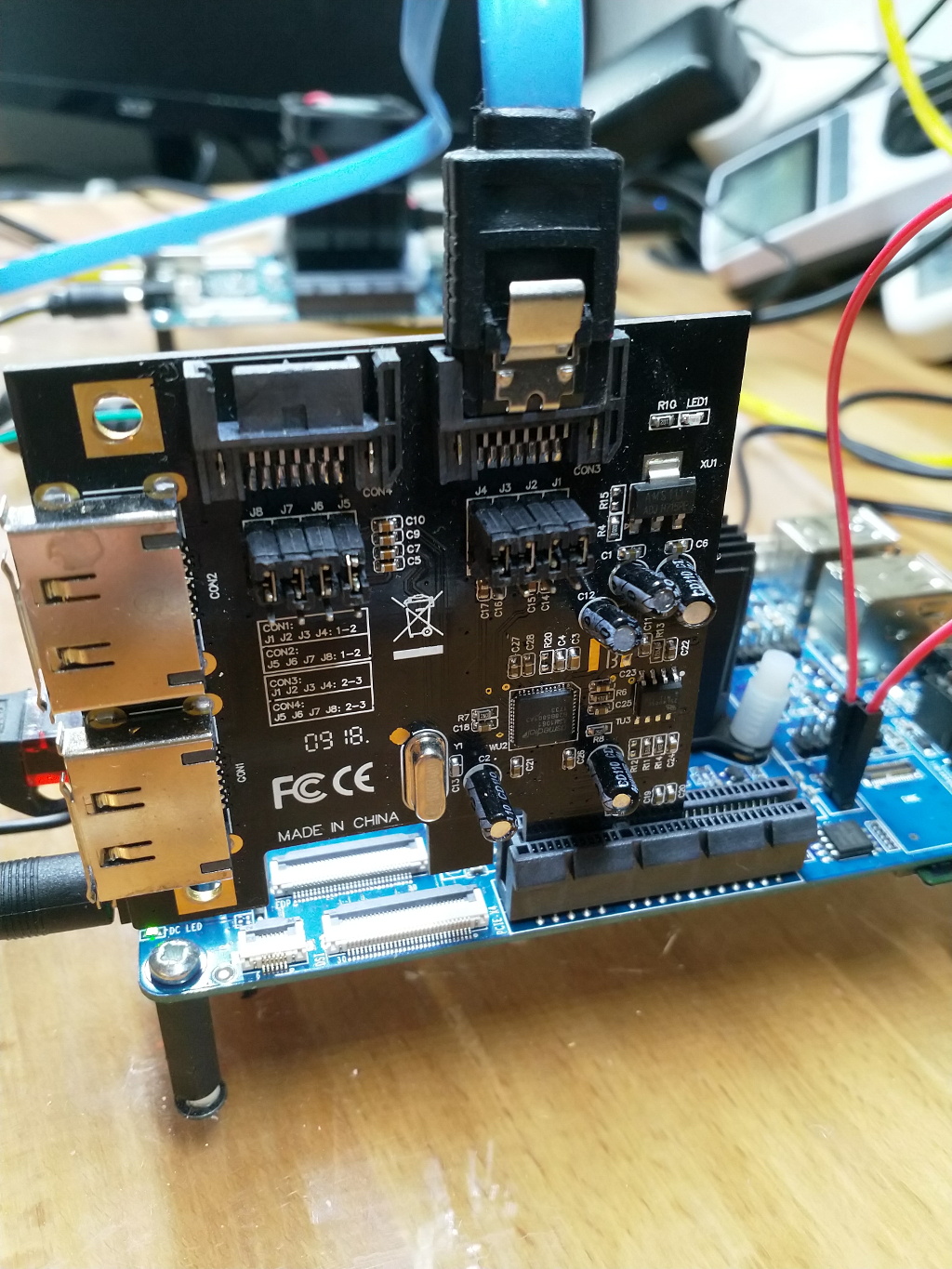

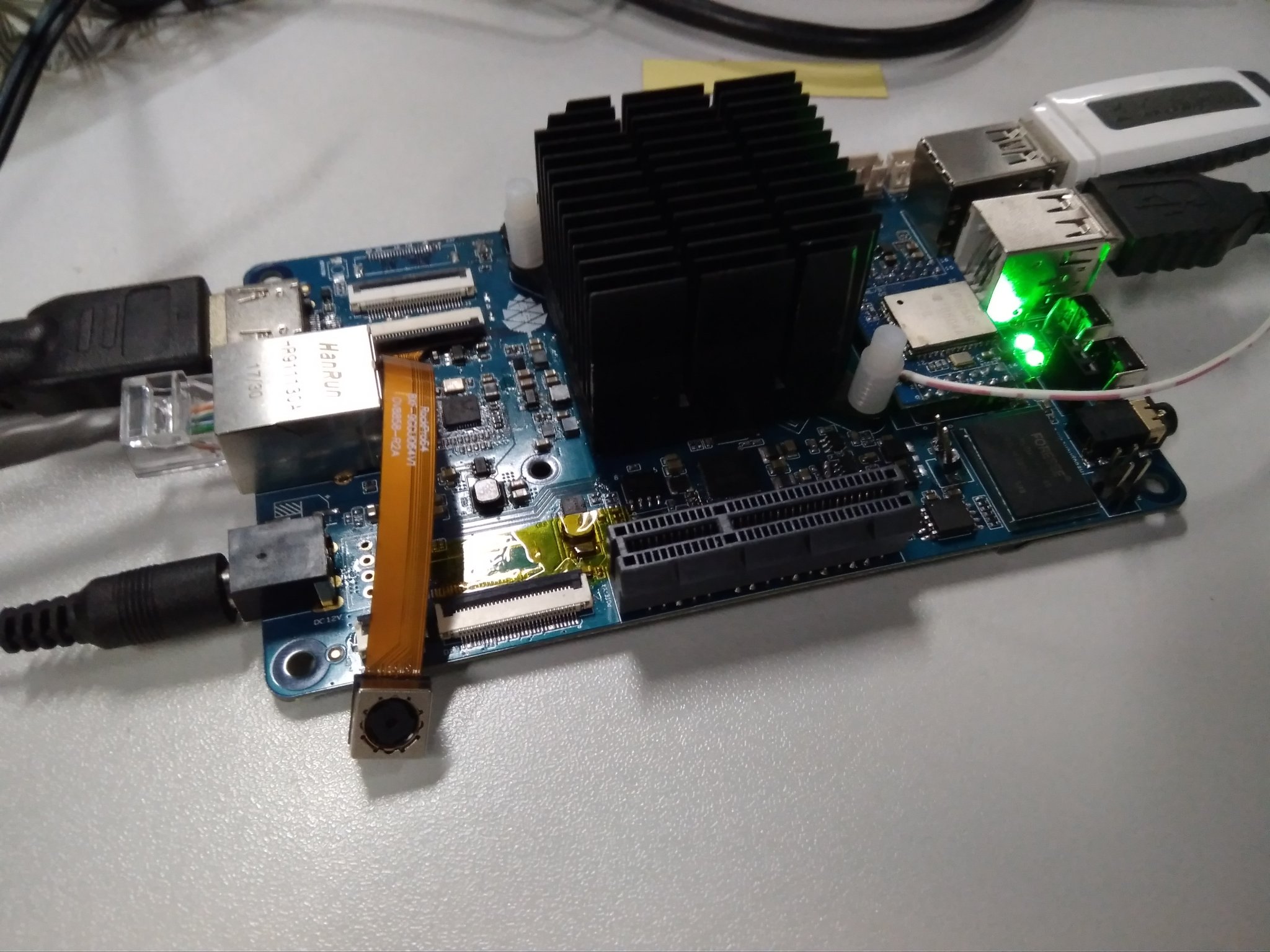

stretch-openmediavault-rockpro64