ROCKPro64 - Ayufan's Images vs. Armbian

What do we have?

Ayufan

Kamil offers a lot of different images. You can find the overview here.

Since Kamil develops on bionic-minimal, that is the most stable one in my view.

He also offers two kernel versions.

Please do not use 4.20; the dts file in it is broken. I have no idea why Kamil publishes it when nothing works!?

Armbian

Armbian currently offers two images.

- Armbian Bionic (an Ubuntu desktop) 4.4.y

- Armbian Stretch (Debian server version) 4.4.y

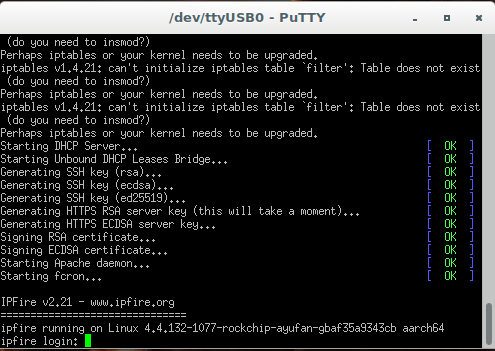

So one desktop version and one server version. The nightly version prints the following.

    Welcome to ARMBIAN 5.67.181213 nightly Debian GNU/Linux 9 (stretch) 4.4.166-rockchip64

Comparison

Can the two be compared at all? Difficult, but I will try from a user's point of view.

Armbian currently has two very interesting advantages: two scripts that make things easier, especially for beginners.

The first is for configuring all sorts of functions on the board. The second moves the installation to a USB HDD, a SATA HDD, or a PCIe NVMe SSD.

I currently have two ROCKPro64 boards running Armbian.

- ROCKPro64 v2.1 2GB with PCIe NVMe SSD (root), SD card (boot), USB HDD (data) [Sys 1]

- ROCKPro64 v2.0 4GB with USB 3.1 stick (root), SD card (boot) [Sys 2]

Both run absolutely stable on the latest nightly version. The first one handles backups at night, which are written to a USB HDD on the USB3 port. Under Kamil's images I constantly got errors in dmesg (endpoint...) when doing this, so that setup was unfortunately not usable. Under Armbian it runs without problems.
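To see whether a backup run produces such messages, the kernel log can be filtered for the keyword quoted above. This is only a sketch: the full message text is cut off in the post, so the pattern is just the word "endpoint", and `filter_endpoint_lines` is a hypothetical helper name.

```shell
# Hypothetical helper: keep only log lines that mention "endpoint".
# It reads log text on stdin, so it works with dmesg or a saved log file.
filter_endpoint_lines() {
    # -i: case-insensitive match; "|| true" keeps the exit status at 0
    # when nothing matches, which is the good case here.
    grep -i 'endpoint' || true
}

# Usage (reading the kernel log may require root on some systems):
#   dmesg | filter_endpoint_lines
```

An empty result after a nightly backup would suggest the USB3 port got through the run without that class of error.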

Sys 1

USB3 HDD (2.5 inch, mechanical, pine64 adapter)

    frank@armbian:/mnt/backup$ sudo dd if=/dev/zero of=sd.img bs=1M count=4096 conv=fdatasync
    [sudo] Passwort für frank:
    4096+0 Datensätze ein
    4096+0 Datensätze aus
    4294967296 Bytes (4,3 GB, 4,0 GiB) kopiert, 38,4846 s, 112 MB/s

Root system 960 EVO 250GB

    frank@armbian:~$ dd if=/dev/zero of=sd.img bs=1M count=4096 conv=fdatasync
    4096+0 Datensätze ein
    4096+0 Datensätze aus
    4294967296 Bytes (4,3 GB, 4,0 GiB) kopiert, 10,4067 s, 413 MB/s

And with iozone

    frank@armbian:~$ sudo iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2
    Iozone: Performance Test of File I/O
            Version $Revision: 3.429 $
            Compiled for 64 bit mode.
            Build: linux

    Contributors:William Norcott, Don Capps, Isom Crawford, Kirby Collins
                 Al Slater, Scott Rhine, Mike Wisner, Ken Goss
                 Steve Landherr, Brad Smith, Mark Kelly, Dr. Alain CYR,
                 Randy Dunlap, Mark Montague, Dan Million, Gavin Brebner,
                 Jean-Marc Zucconi, Jeff Blomberg, Benny Halevy, Dave Boone,
                 Erik Habbinga, Kris Strecker, Walter Wong, Joshua Root,
                 Fabrice Bacchella, Zhenghua Xue, Qin Li, Darren Sawyer,
                 Vangel Bojaxhi, Ben England, Vikentsi Lapa.

    Run began: Mon Dec 17 09:39:59 2018

    Include fsync in write timing
    O_DIRECT feature enabled
    Auto Mode
    File size set to 102400 kB
    Record Size 4 kB
    Record Size 16 kB
    Record Size 512 kB
    Record Size 1024 kB
    Record Size 16384 kB
    Command line used: iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2
    Output is in kBytes/sec
    Time Resolution = 0.000001 seconds.
    Processor cache size set to 1024 kBytes.
    Processor cache line size set to 32 bytes.
    File stride size set to 17 * record size.
                                                                random    random      bkwd    record    stride
              kB  reclen     write   rewrite      read    reread      read     write      read   rewrite      read    fwrite  frewrite     fread   freread
          102400       4     81607    116616    103474    116901     47254     88551
          102400      16    194153    277302    325816    326089    170661    274580
          102400     512    946236    976213    884237    867914    737332    998820
          102400    1024   1007972   1066045    907937    908226    825566   1045686
          102400   16384   1164681   1222640   1160792   1161918   1148409   1216002

    iozone test complete.

Sys 2

USB 3.1 stick Corsair GTX on the USB3 port

First a write test with dd; the iozone run follows further down.

    frank@armbian_v2:~$ dd if=/dev/zero of=sd.img bs=1M count=4096 conv=fdatasync
    4096+0 Datensätze ein
    4096+0 Datensätze aus
    4294967296 Bytes (4,3 GB, 4,0 GiB) kopiert, 13,067 s, 329 MB/s
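The MB/s figure dd prints can be reproduced from the byte count and the elapsed time in its summary line. A minimal sketch, assuming a POSIX shell with awk; `throughput_mbs` is a helper name made up for illustration, and the seconds have to be passed with a decimal point even though the German-locale dd output uses a comma.

```shell
# Hypothetical helper: bytes and seconds in, MB/s (1 MB = 10^6 bytes) out.
throughput_mbs() {
    # LC_ALL=C forces a decimal point in awk's output regardless of locale.
    LC_ALL=C awk -v bytes="$1" -v secs="$2" \
        'BEGIN { printf "%.1f\n", bytes / secs / 1000000 }'
}

throughput_mbs 4294967296 38.4846   # USB3 HDD on Sys 1:       prints 111.6
throughput_mbs 4294967296 10.4067   # NVMe root on Sys 1:      prints 412.7
throughput_mbs 4294967296 13.067    # USB 3.1 stick on Sys 2:  prints 328.7
```

dd rounds these to whole numbers, which gives the 112, 413 and 329 MB/s seen in the three runs.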

    frank@armbian_v2:~$ sudo iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2
    [sudo] Passwort für frank:
    Iozone: Performance Test of File I/O
            Version $Revision: 3.429 $
            Compiled for 64 bit mode.
            Build: linux

    Contributors:William Norcott, Don Capps, Isom Crawford, Kirby Collins
                 Al Slater, Scott Rhine, Mike Wisner, Ken Goss
                 Steve Landherr, Brad Smith, Mark Kelly, Dr. Alain CYR,
                 Randy Dunlap, Mark Montague, Dan Million, Gavin Brebner,
                 Jean-Marc Zucconi, Jeff Blomberg, Benny Halevy, Dave Boone,
                 Erik Habbinga, Kris Strecker, Walter Wong, Joshua Root,
                 Fabrice Bacchella, Zhenghua Xue, Qin Li, Darren Sawyer,
                 Vangel Bojaxhi, Ben England, Vikentsi Lapa.

    Run began: Mon Dec 17 09:47:37 2018

    Include fsync in write timing
    O_DIRECT feature enabled
    Auto Mode
    File size set to 102400 kB
    Record Size 4 kB
    Record Size 16 kB
    Record Size 512 kB
    Record Size 1024 kB
    Record Size 16384 kB
    Command line used: iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2
    Output is in kBytes/sec
    Time Resolution = 0.000001 seconds.
    Processor cache size set to 1024 kBytes.
    Processor cache line size set to 32 bytes.
    File stride size set to 17 * record size.
                                                                random    random      bkwd    record    stride
              kB  reclen     write   rewrite      read    reread      read     write      read   rewrite      read    fwrite  frewrite     fread   freread
          102400       4     26194     30367     35838     35633     19020     14192
          102400      16     82127     93832    121197    121433     69124     39366
          102400     512    286335    319648    316854    320232    231133    297216
          102400    1024    280997    335673    352453    352479    273345    325084
          102400   16384    301802    359713    330482    330071    333978    361110

    iozone test complete.

Conclusion

Since I currently have no problems with Armbian on the USB3 port, it is my first choice at the moment. Anyone who needs a recent kernel (4.19.y) will of course have to use Kamil's image. The nightly versions are also not recommended for normal users; it is better to stay on stable there.
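Whether a running system is already on a kernel of at least 4.19 can be checked from `uname -r`. A rough sketch, assuming GNU sort with version sorting (`-V`); `kernel_at_least` is a hypothetical helper and not part of either image.

```shell
# Hypothetical helper: succeeds if the kernel version is >= the required one.
# $1 = required version (e.g. 4.19), $2 = version to check (default: uname -r)
kernel_at_least() {
    kal_required="$1"
    kal_current="${2:-$(uname -r)}"
    # Drop a suffix such as "-rockchip64" before comparing.
    kal_current="${kal_current%%-*}"
    # With version sort, the required version comes first (or ties)
    # exactly when the current version is at least as new.
    [ "$(printf '%s\n%s\n' "$kal_required" "$kal_current" | sort -V | head -n 1)" = "$kal_required" ]
}

# The nightly banner shown earlier reports 4.4.166-rockchip64:
kernel_at_least 4.19 4.4.166-rockchip64 || echo "older than 4.19"
```

Called without a second argument, the helper checks the kernel of the machine it runs on.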

The nice thing right now is that we have a choice! Kamil has not been very active for a while, and then he releases kernel versions (4.20) that only make things worse (PCIe).

One thing I almost forgot: Armbian boots cleanly from USB3, whereas Kamil's images have the odd problem there.

So from me, for the moment, a clear recommendation for Armbian!
