Hello everyone,

Since I know this article is quite popular, let's bring it up to date today. Much of what the earlier posts describe still applies, but a few small adjustments are needed.

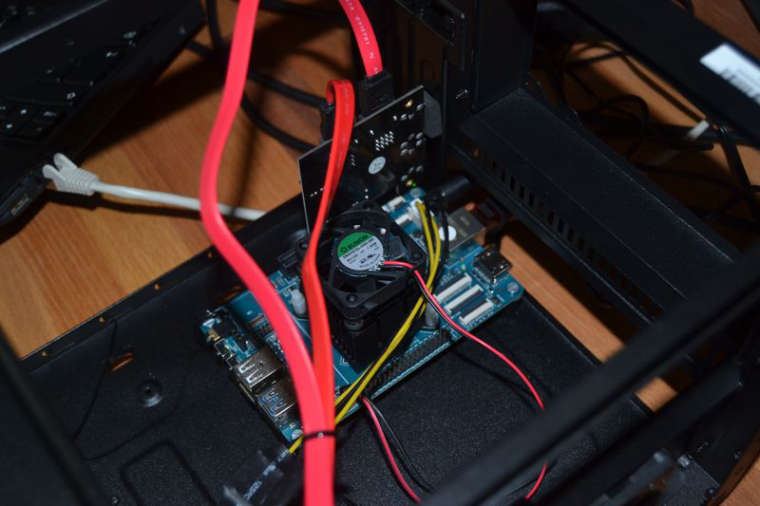

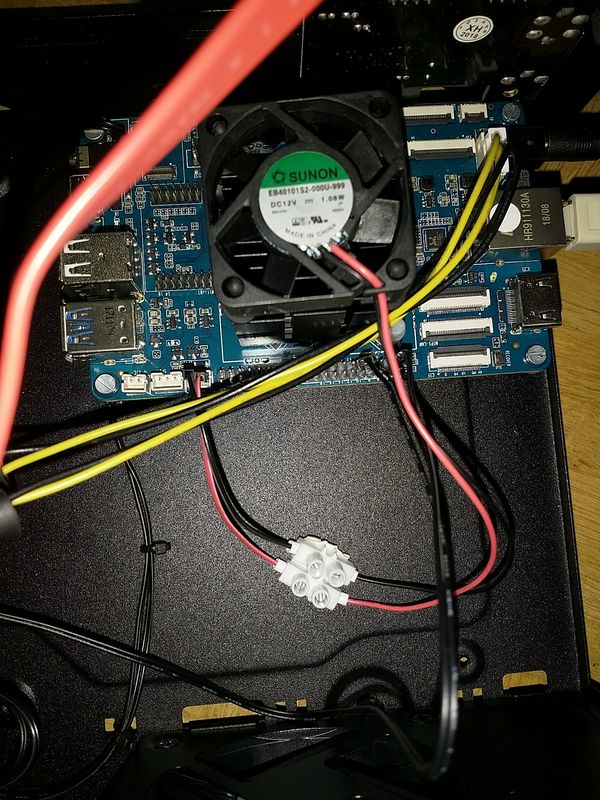

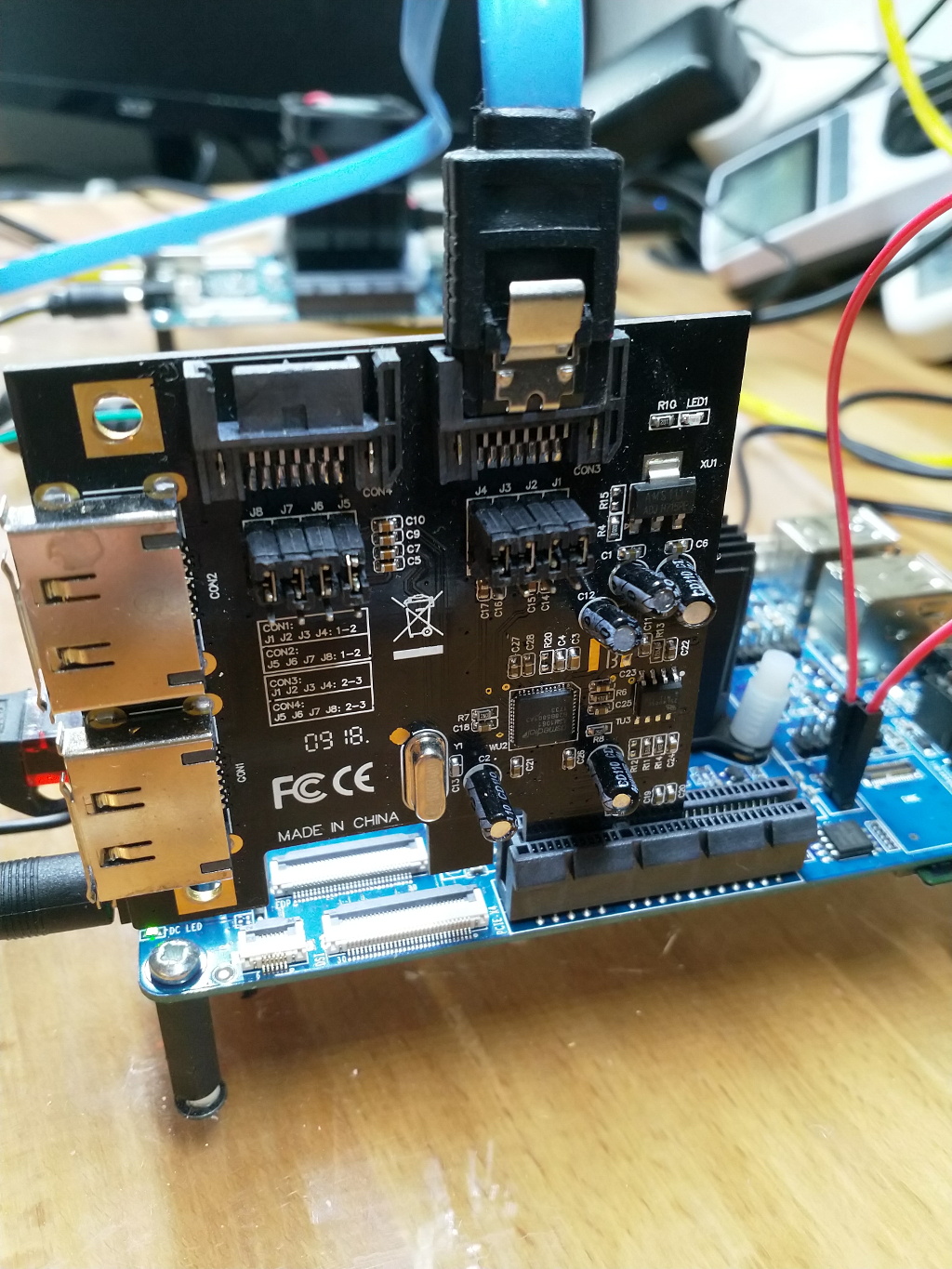

Hardware

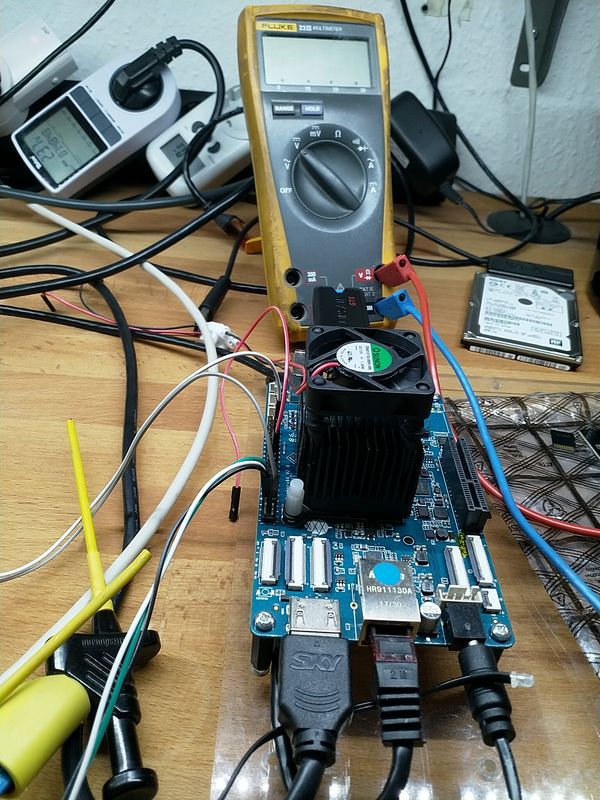

ROCKPro64 v2.1, 2 GB RAM

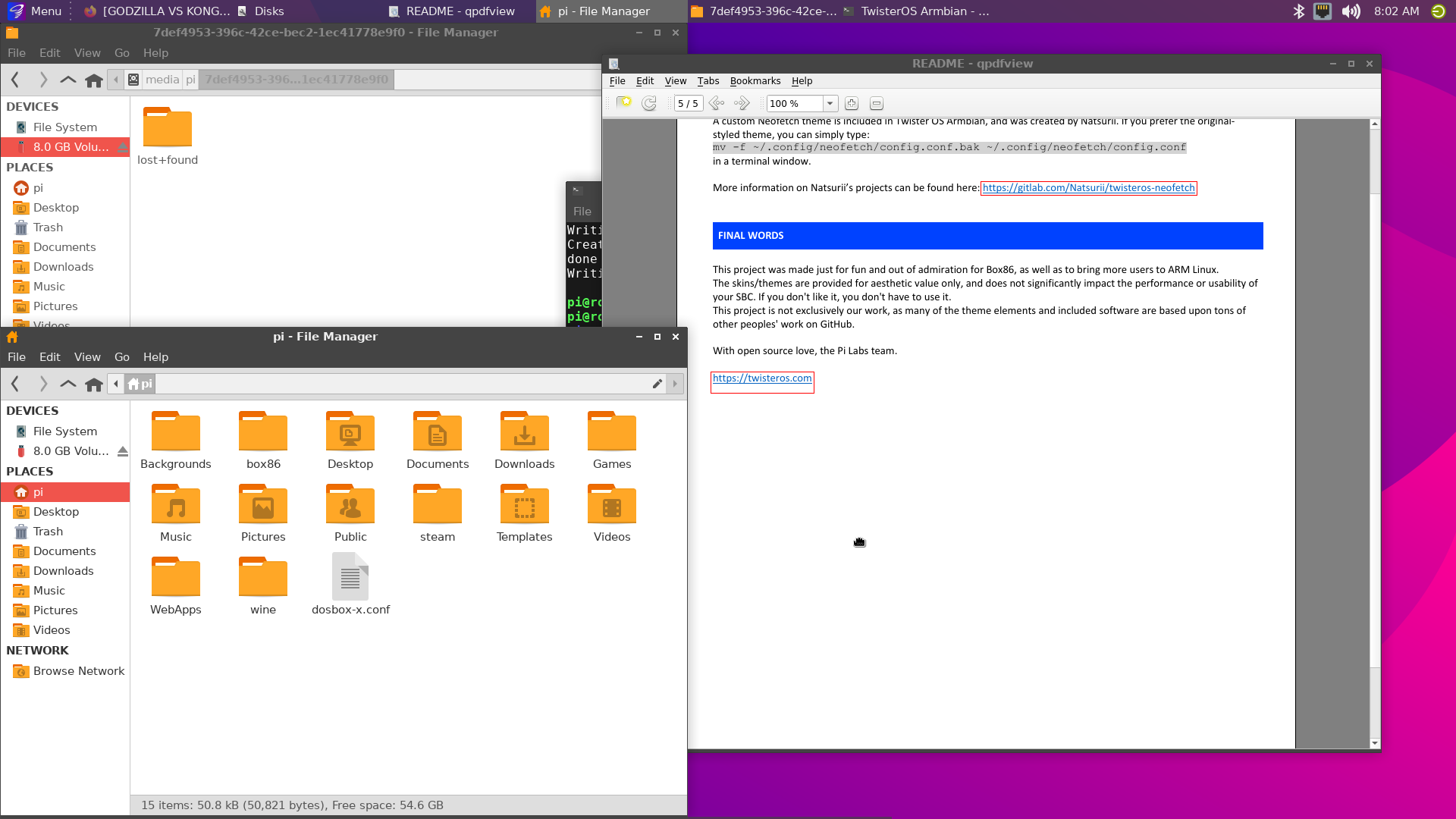

Software

Kamil's release 0.10.9

Linux rockpro64 5.6.0-1132-ayufan-g81043e6e109a #ayufan SMP Tue Apr 7 10:07:35 UTC 2020 aarch64 GNU/Linux

Installation

apt install python

Next, we clone the project

git clone https://github.com/Leapo/Rock64-R64.GPIO

Adjust the pin numbers

cd Rock64-R64.GPIO/R64

nano _GPIO.py

Edit the file: comment out the existing Rock64 arrays and add the ROCKPro64 arrays below them

# Define GPIO arrays

#ROCK_valid_channels = [27, 32, 33, 34, 35, 36, 37, 38, 64, 65, 67, 68, 69, 76, 79, 80, 81, 82, 83, 84, 85, 86, 87, 88, 89, 96, 97, 98, 100, 101, 102, 103, 104]

#BOARD_to_ROCK = [0, 0, 0, 89, 0, 88, 0, 0, 64, 0, 65, 0, 67, 0, 0, 100, 101, 0, 102, 97, 0, 98, 103, 96, 104, 0, 76, 68, 69, 0, 0, 0, 38, 32, 0, 33, 37, 34, 36, 0, 35, 0, 0, 81, 82, 87, 83, 0, 0, 80, 79, 85, 84, 27, 86, 0, 0, 0, 0, 0, 0, 89, 88]

#BCM_to_ROCK = [68, 69, 89, 88, 81, 87, 83, 76, 104, 98, 97, 96, 38, 32, 64, 65, 37, 80, 67, 33, 36, 35, 100, 101, 102, 103, 34, 82]

ROCK_valid_channels = [52,53,152,54,50,33,48,39,41,43,155,156,125,122,121,148,147,120,36,149,153,42,45,44,124,126,123,127]

BOARD_to_ROCK = [0,0,0,52,0,53,0,152,148,0,147,54,120,50,0,33,36,0,149,48,0,39,153,41,42,0,45,43,44,155,0,156,124,125,0,122,126,121,123,0,127]

BCM_to_ROCK = [43,44,52,53,152,155,156,45,42,39,48,41,124,125,148,147,126,54,120,122,123,127,33,36,149,153,121,50]

Save the file.
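A quick sanity check of the new tables can catch typos before they cause confusing behaviour. The following is only a minimal sketch and not part of R64.GPIO: it copies `ROCK_valid_channels` and `BOARD_to_ROCK` from above, checks that the two agree, and derives a BCM table from the BOARD table for comparison. The `BCM_TO_BOARD` list and the helper names `check_tables` and `derive_bcm` are assumptions made for illustration, based on the standard Raspberry Pi 40-pin header layout.

```python
# Sanity check for the ROCKPro64 tables; plain Python, no hardware needed.
ROCK_valid_channels = [52, 53, 152, 54, 50, 33, 48, 39, 41, 43, 155, 156,
                       125, 122, 121, 148, 147, 120, 36, 149, 153, 42, 45,
                       44, 124, 126, 123, 127]

BOARD_to_ROCK = [0, 0, 0, 52, 0, 53, 0, 152, 148, 0, 147, 54, 120, 50, 0,
                 33, 36, 0, 149, 48, 0, 39, 153, 41, 42, 0, 45, 43, 44, 155,
                 0, 156, 124, 125, 0, 122, 126, 121, 123, 0, 127]

# Assumption: physical header pin for BCM 0..27 on the standard
# Raspberry Pi 40-pin layout (not taken from R64.GPIO itself).
BCM_TO_BOARD = [27, 28, 3, 5, 7, 29, 31, 26, 24, 21, 19, 23, 32, 33,
                8, 10, 36, 11, 12, 35, 38, 40, 15, 16, 18, 22, 37, 13]


def check_tables(valid, board):
    """Return a list of problems; an empty list means the tables agree."""
    problems = []
    used = [number for number in board if number != 0]
    if len(set(used)) != len(used):
        problems.append("a GPIO number appears twice in BOARD_to_ROCK")
    if set(used) != set(valid):
        problems.append("BOARD_to_ROCK and ROCK_valid_channels differ")
    return problems


def derive_bcm(board):
    """Build a BCM-indexed table by looking up each BCM pin's header position."""
    return [board[pin] for pin in BCM_TO_BOARD]


problems = check_tables(ROCK_valid_channels, BOARD_to_ROCK)
derived_bcm = derive_bcm(BOARD_to_ROCK)
print(problems or "tables are consistent")
print("BCM table derived from BOARD_to_ROCK:", derived_bcm)
```

Comparing the printed list with the `BCM_to_ROCK` line in `_GPIO.py` is an easy way to spot a mistyped entry.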

Create the file test.py

nano test.py

Contents

#!/usr/bin/env python

# Frank Mankel, 2018, LGPLv3 License
# Rock 64 GPIO Library for Python
# Thanks Allison! Thanks smartdave!

import R64.GPIO as GPIO
from time import sleep

print("Output Test R64.GPIO Module...")

# Set Variables
var_gpio_out = 156
var_gpio_in = 155

# GPIO Setup
GPIO.setwarnings(True)
GPIO.setmode(GPIO.ROCK)
GPIO.setup(var_gpio_out, GPIO.OUT, initial=GPIO.HIGH)       # Set up GPIO as an output, with an initial state of HIGH
GPIO.setup(var_gpio_in, GPIO.IN, pull_up_down=GPIO.PUD_UP)  # Set up GPIO as an input, pullup enabled

# Test Output
print("")
print("Testing GPIO Input/Output:")

while True:
    var_gpio_state_in = GPIO.input(var_gpio_in)
    var_gpio_state = GPIO.input(var_gpio_out)               # Return State of GPIO
    if var_gpio_state == 0 and var_gpio_state_in == 1:
        GPIO.output(var_gpio_out, GPIO.HIGH)                # Set GPIO to HIGH
        print("Input State: " + str(var_gpio_state_in))     # Print results
        print("Output State IF : " + str(var_gpio_state))   # Print results
    else:
        GPIO.output(var_gpio_out, GPIO.LOW)                 # Set GPIO to LOW
        print("Input State: " + str(var_gpio_state_in))     # Print results
        print("Output State ELSE: " + str(var_gpio_state))  # Print results
    sleep(0.5)

exit()
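To see why the loop in test.py makes the LED blink while the button is held, it can help to model the if/else branch without any hardware. The following is only a sketch: `next_output` and `simulate` are hypothetical helper names, and it assumes that reading the output pin returns the level it was last driven to.

```python
def next_output(output_state, input_state):
    """Mirror the if/else in test.py: return the level the output is driven to."""
    if output_state == 0 and input_state == 1:
        return 1  # button pressed and LED currently off: switch it on
    return 0      # otherwise: switch the LED off (or leave it off)


def simulate(inputs, initial_output=1):
    """Run one loop iteration per input sample and collect the output levels."""
    states = []
    output = initial_output  # test.py sets the output up with initial=HIGH
    for input_state in inputs:
        output = next_output(output, input_state)
        states.append(output)
    return states


held = simulate([1, 1, 1, 1])   # button held for four iterations
released = simulate([0, 0, 0])  # button never pressed
print("held:    ", held)
print("released:", released)
```

With the button held, the output alternates on every pass, so with `sleep(0.5)` the LED changes state every half second. With the button released, the output stays low.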

Example

[image: 1537522070243-input_ergebnis.jpg]

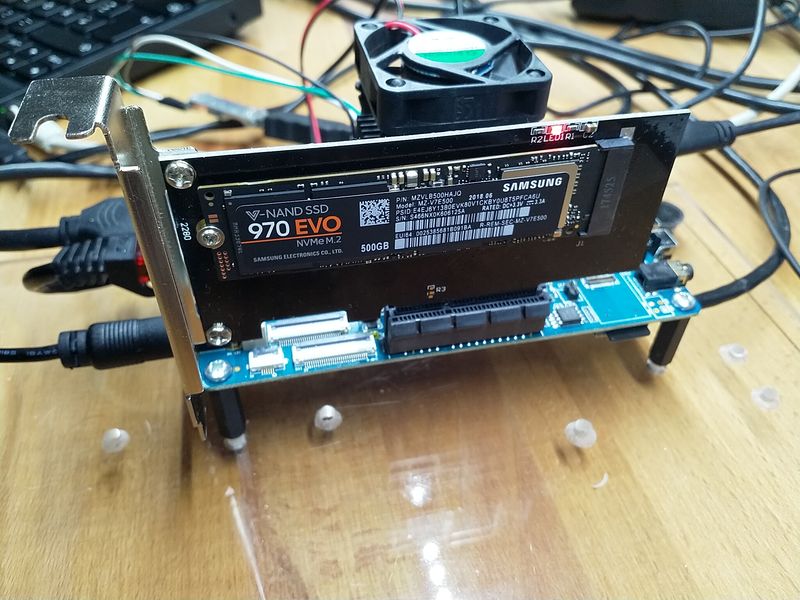

When the push button in the picture is pressed, the LED should blink.

We use the following inputs and outputs of the ROCKPro64.

# Set Variables

var_gpio_out = 156

var_gpio_in = 155

That means:

Pin 1 (3.3 V): one lead of the push button

Pin 29 (input): the other lead of the push button

Pin 31 (output): the positive lead of the LED

Pin 39 (GND): the negative lead of the LED

Pressing the button therefore applies 3.3 V to the input (pin 29), which is then read as high (1). The LED is driven by the output (pin 31).
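The wiring refers to physical header pins (29 and 31), while the script uses the numbers 155 and 156. The link between the two is the `BOARD_to_ROCK` table from `_GPIO.py`. As a minimal sketch, assuming the table shown earlier and treating a `0` entry as "not a usable GPIO", the lookup could look like this. `WIRING` and `rock_number` are hypothetical names used only for illustration.

```python
# Table copied from the edited _GPIO.py; the index is the physical header pin.
BOARD_to_ROCK = [0, 0, 0, 52, 0, 53, 0, 152, 148, 0, 147, 54, 120, 50, 0,
                 33, 36, 0, 149, 48, 0, 39, 153, 41, 42, 0, 45, 43, 44, 155,
                 0, 156, 124, 125, 0, 122, 126, 121, 123, 0, 127]

# Physical header pins taken from the wiring description.
WIRING = {"input": 29, "output": 31}


def rock_number(board_pin):
    """Translate a physical header pin into the number used in ROCK mode."""
    if not 1 <= board_pin < len(BOARD_to_ROCK):
        raise ValueError("pin %d is outside the header" % board_pin)
    number = BOARD_to_ROCK[board_pin]
    if number == 0:
        # Assumption: 0 marks power, ground or otherwise unusable pins.
        raise ValueError("pin %d is not a usable GPIO" % board_pin)
    return number


var_gpio_in = rock_number(WIRING["input"])
var_gpio_out = rock_number(WIRING["output"])
print("input:", var_gpio_in, "output:", var_gpio_out)
```

Pins 1 (3.3 V) and 39 (GND) map to `0` in the table, so `rock_number` rejects them instead of returning a GPIO number.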

The script can be started with

python test.py

https://www.youtube.com/watch?v=aPSC0Q0xInw