ROCKPro64 v2.1 - Once again one of the first? ;)


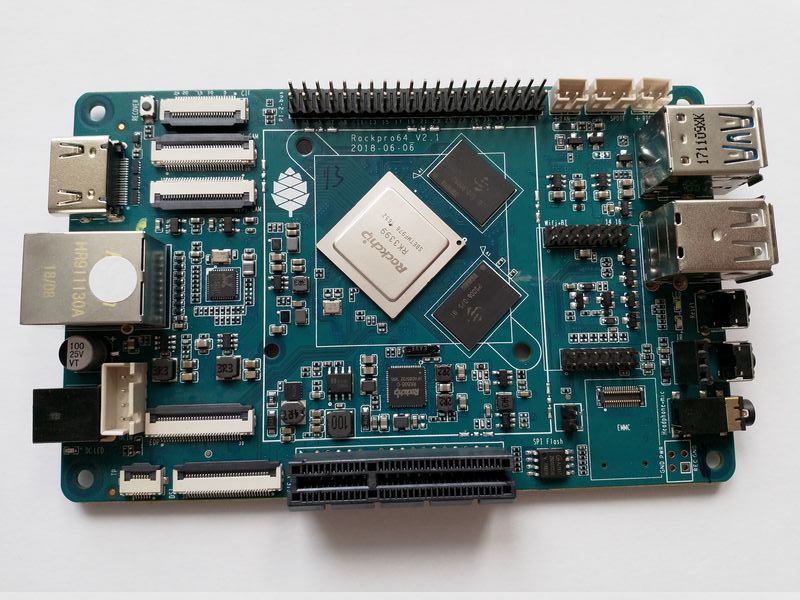

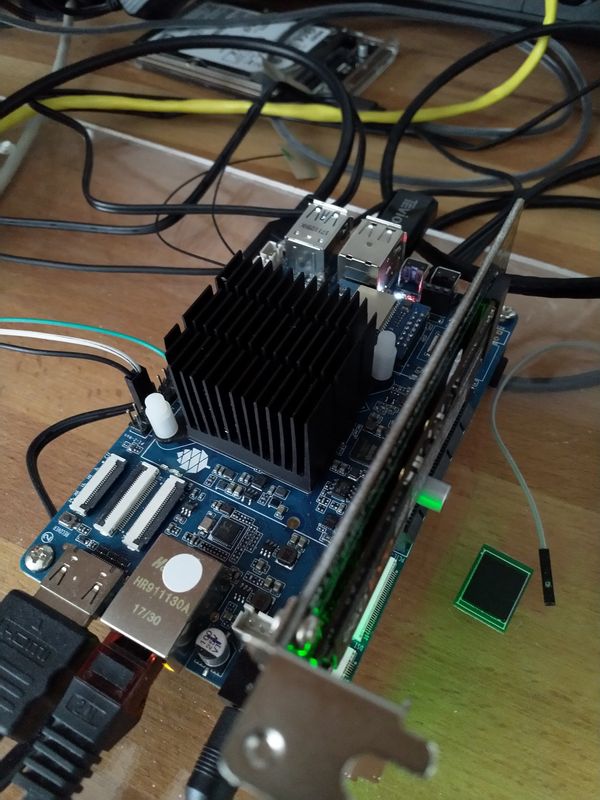

Front

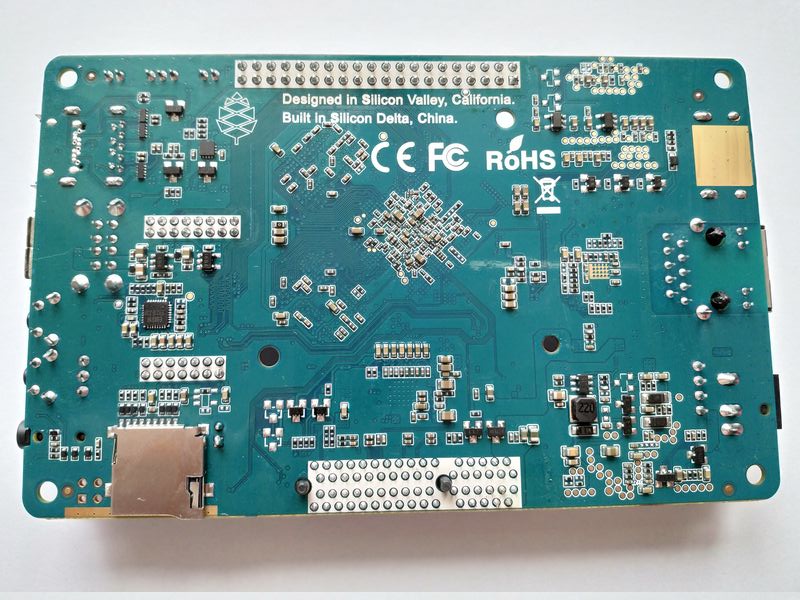

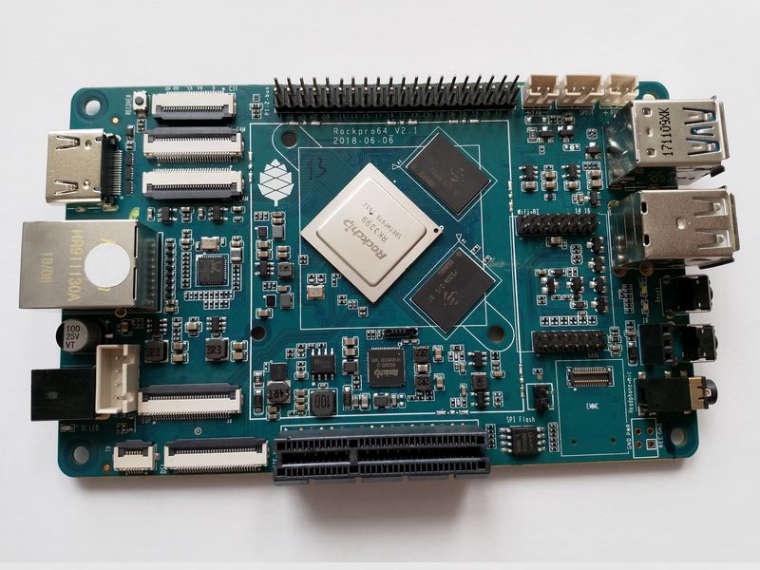

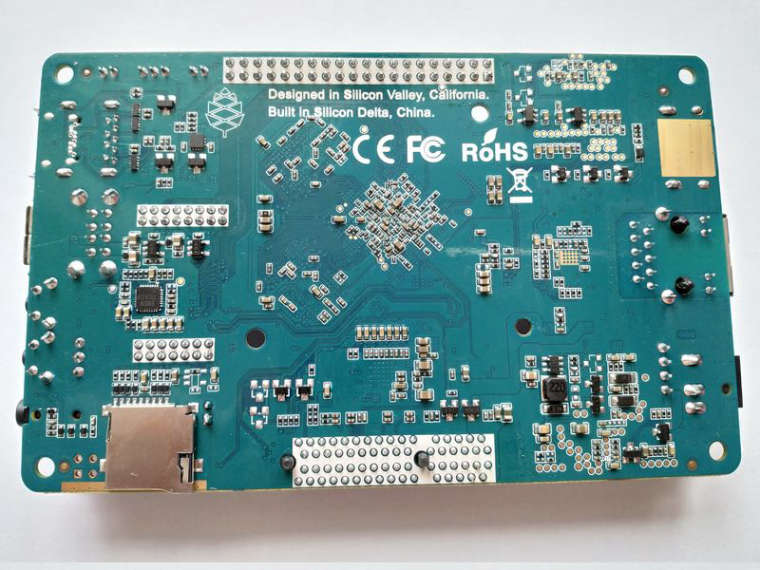

Back

Just opened the box and took a quick look over the board.

- The resistor that caused the PCIe problem is gone, of course

- The yellow film is gone

- The Wi-Fi area looks reworked, as expected

- I can't find the IR receiver

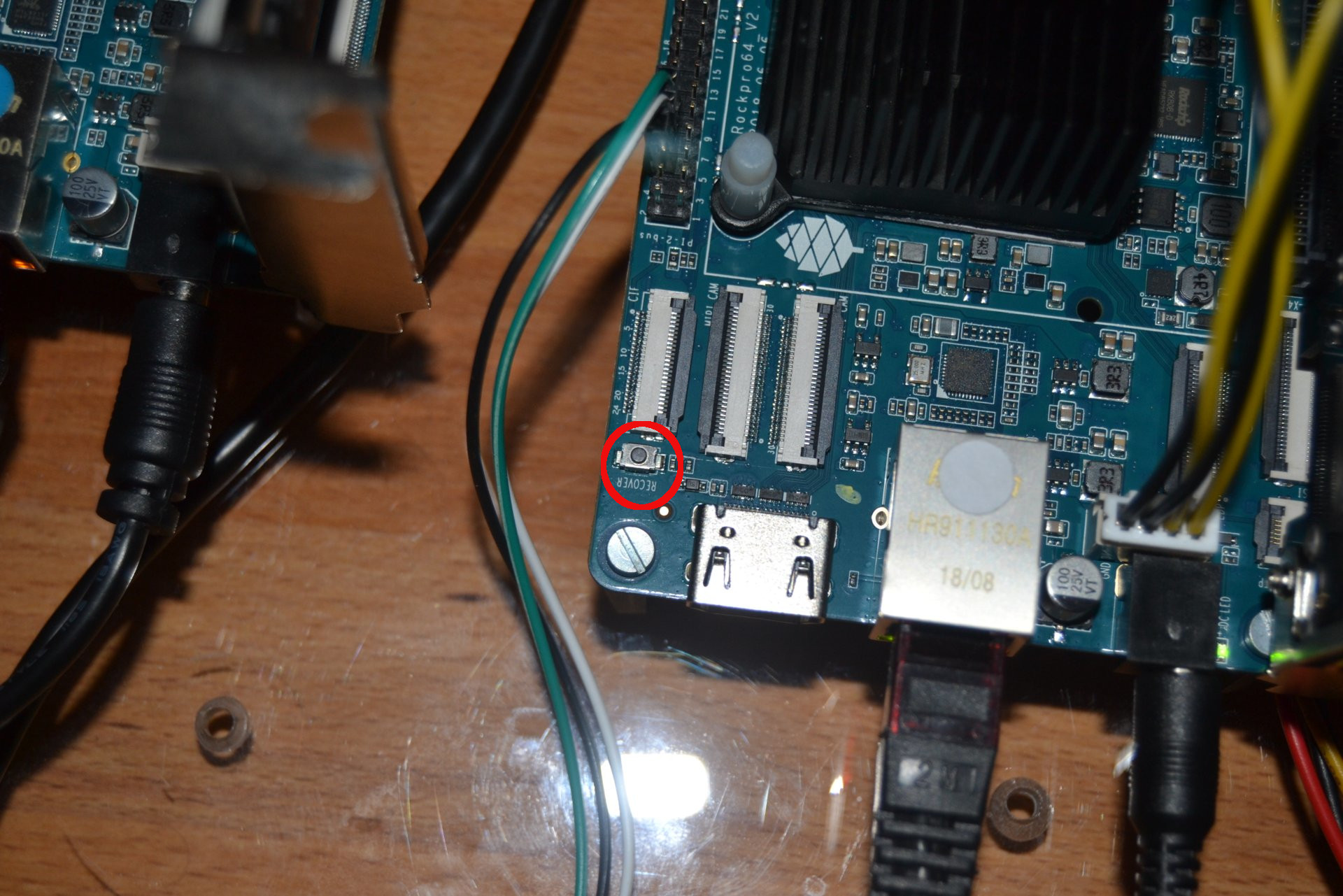

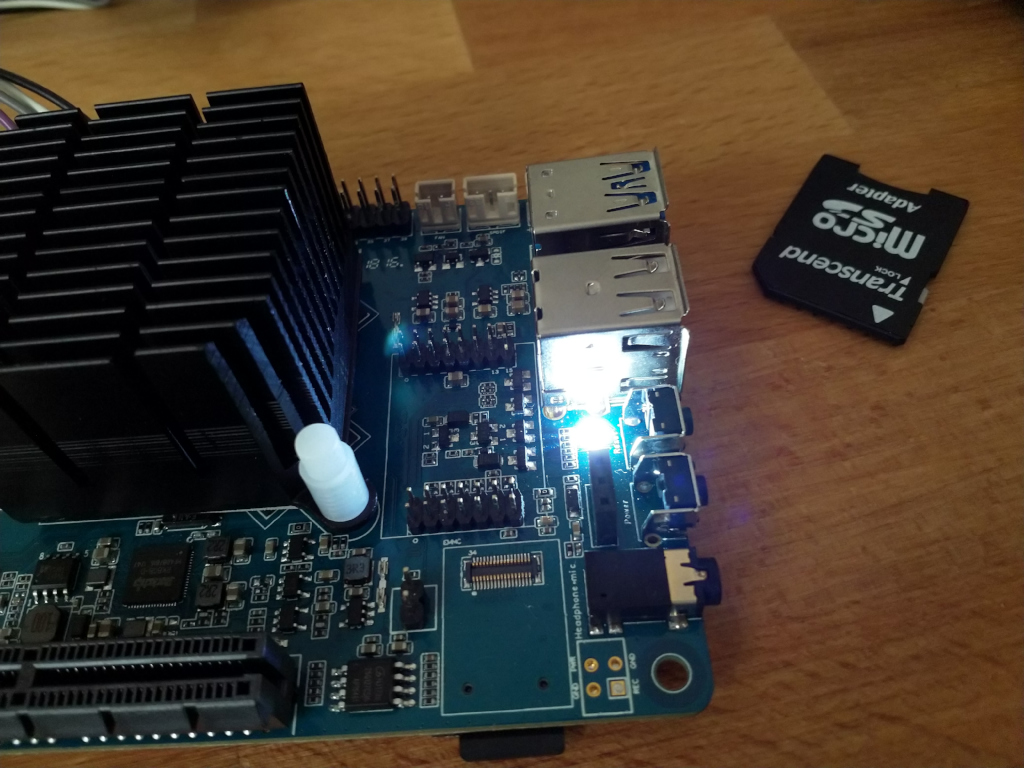

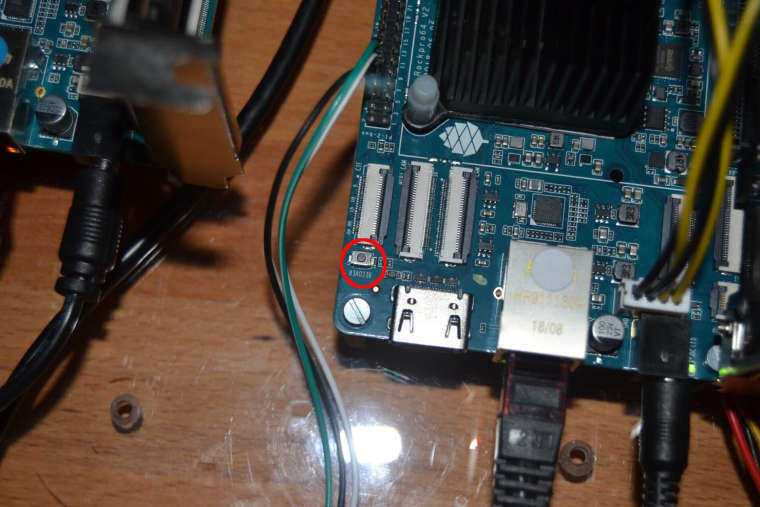

Shouldn't there be a button at the bottom right?? (I haven't missed it so far, though.)

Correction: the recovery button is there now! It is in the upper picture, top left!

More, and above all more detailed, information will follow.

Update

Recovery button


Let's start at the beginning. The package has arrived.

What was in it?

- ROCKPro64 2GB

- Heatsink

- 32GB eMMC module

- USB3-to-SATA adapter

- Power cables for SATA HDDs

- WiFi adapter

Shipping is expensive at $30, and customs and handling add about €41 on top. Still, everything went perfectly and very quickly. Second delivery without any problems!

Now to the v2.1 board. My other board was a pre-production model and had its share of problems. Those are supposed to be fixed now, so let's take a look.

ROCKPro64 v2.1 2GB RAM

Problem no. 1

The PCIe interface got no power because of a superfluous resistor. https://forum.frank-mankel.org/topic/116/howto-smd-widerstand-preproduction-board

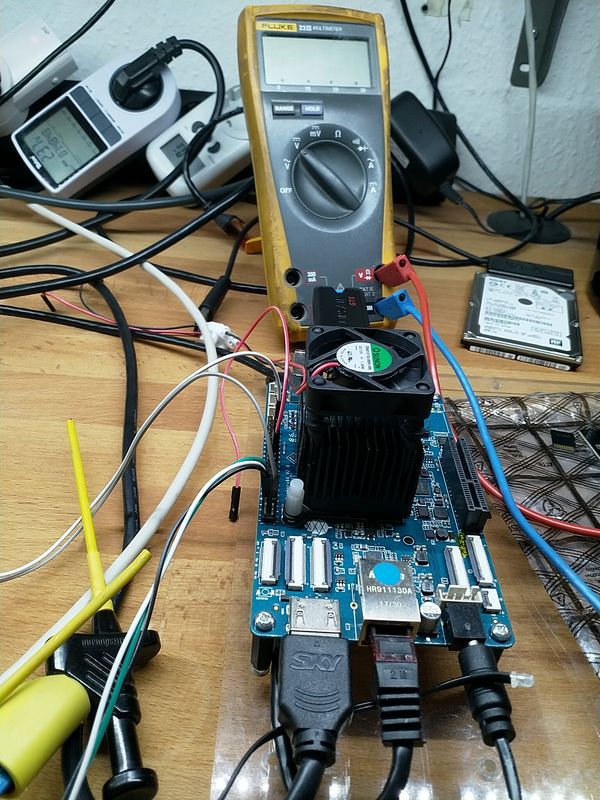

Test

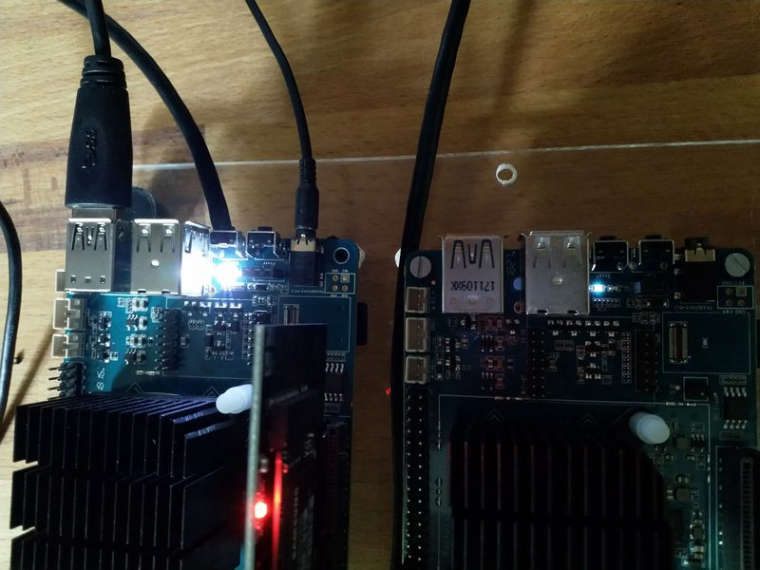

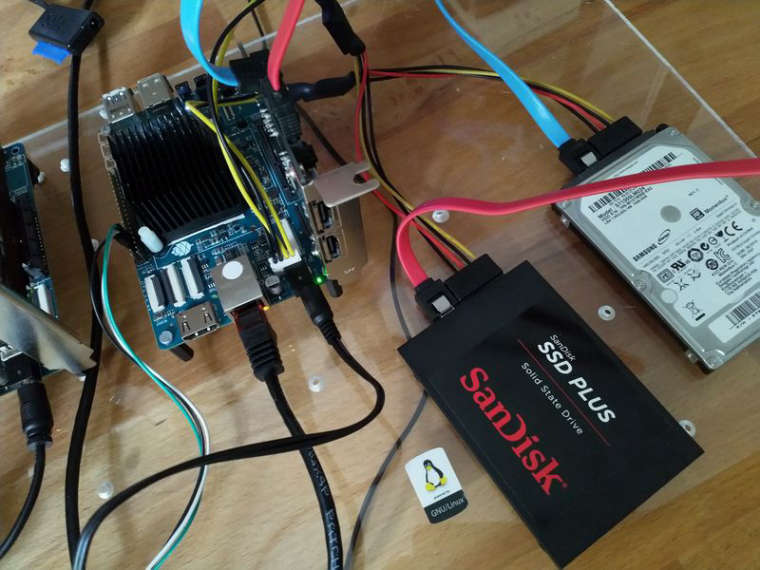

I connected an SSD and an HDD to the PCIe SATA card using two SATA cables. Power for the 2.5-inch drives can be taken directly from the board: next to the power jack at the front there is a white connector that supplies it. Pine64 offers a cable for this, which I ordered along with the board.

After I had plugged everything together, the ROCKPro64 booted without any problems.
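To confirm that both drives were actually detected after boot, something like the following could be used. This is only a sketch, not what was run here: it assumes a Linux system with sysfs mounted at `/sys`, and that the SATA drives show up as `sdX` (USB disks use the same prefix). The names `read_attr` and `list_disks` are made up for illustration.

```python
from pathlib import Path


def read_attr(path, default=""):
    """Return the stripped content of a sysfs attribute, or a default."""
    try:
        return path.read_text().strip()
    except OSError:
        return default


def list_disks(sys_block=Path("/sys/block"), prefix="sd"):
    """List block devices whose name starts with `prefix`.

    Assumption: SATA drives are named sdX. The `size` attribute is
    counted in 512-byte sectors; `queue/rotational` is 1 for spinning
    disks and 0 for SSDs.
    """
    disks = []
    for dev in sorted(sys_block.iterdir()):
        if not dev.name.startswith(prefix):
            continue
        sectors = int(read_attr(dev / "size") or "0")
        disks.append({
            "name": dev.name,
            "model": read_attr(dev / "device" / "model", "unknown"),
            "rotational": read_attr(dev / "queue" / "rotational") == "1",
            "size_gb": sectors * 512 / 1e9,
        })
    return disks


if __name__ == "__main__":
    for disk in list_disks():
        kind = "HDD" if disk["rotational"] else "SSD"
        print(f"{disk['name']}: {disk['model']} ({kind}, {disk['size_gb']:.0f} GB)")
```

With an SSD and an HDD on the SATA card, one would expect two entries, one of each kind.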

Image used

    rock64@rockpro64:/mnt$ uname -a
    Linux rockpro64 4.4.132-1072-rockchip-ayufan-ga1d27dba5a2e #1 SMP Sat Jul 21 20:18:03 UTC 2018 aarch64 aarch64 aarch64 GNU/Linux

My 2.5-inch SSD, 240 GB

    rock64@rockpro64:/mnt$ sudo iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2
            Iozone: Performance Test of File I/O
                    Version $Revision: 3.429 $
                    Compiled for 64 bit mode.
                    Build: linux

            Contributors:William Norcott, Don Capps, Isom Crawford, Kirby Collins
                         Al Slater, Scott Rhine, Mike Wisner, Ken Goss
                         Steve Landherr, Brad Smith, Mark Kelly, Dr. Alain CYR,
                         Randy Dunlap, Mark Montague, Dan Million, Gavin Brebner,
                         Jean-Marc Zucconi, Jeff Blomberg, Benny Halevy, Dave Boone,
                         Erik Habbinga, Kris Strecker, Walter Wong, Joshua Root,
                         Fabrice Bacchella, Zhenghua Xue, Qin Li, Darren Sawyer,
                         Vangel Bojaxhi, Ben England, Vikentsi Lapa.

            Run began: Wed Jul 25 13:46:40 2018

            Include fsync in write timing
            O_DIRECT feature enabled
            Auto Mode
            File size set to 102400 kB
            Record Size 4 kB
            Record Size 16 kB
            Record Size 512 kB
            Record Size 1024 kB
            Record Size 16384 kB
            Command line used: iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2
            Output is in kBytes/sec
            Time Resolution = 0.000001 seconds.
            Processor cache size set to 1024 kBytes.
            Processor cache line size set to 32 bytes.
            File stride size set to 17 * record size.
                                                                  random    random     bkwd    record    stride
                  kB  reclen    write  rewrite    read    reread    read     write     read   rewrite      read   fwrite frewrite    fread  freread
              102400       4     9411    15504    18778    18833    10727    12138
              102400      16    28420    52412    62043    62207    33101    37892
              102400     512   220877   253630   212220   213237   214789   245359
              102400    1024   172543   176752   165533   239463   237009   180548
              102400   16384   330306   211445   201754   331198   329653   218451

    iozone test complete.

My 2.5-inch HDD, 1 TB

    rock64@rockpro64:/media$ sudo iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2
            Iozone: Performance Test of File I/O
                    Version $Revision: 3.429 $
                    Compiled for 64 bit mode.
                    Build: linux

            Contributors:William Norcott, Don Capps, Isom Crawford, Kirby Collins
                         Al Slater, Scott Rhine, Mike Wisner, Ken Goss
                         Steve Landherr, Brad Smith, Mark Kelly, Dr. Alain CYR,
                         Randy Dunlap, Mark Montague, Dan Million, Gavin Brebner,
                         Jean-Marc Zucconi, Jeff Blomberg, Benny Halevy, Dave Boone,
                         Erik Habbinga, Kris Strecker, Walter Wong, Joshua Root,
                         Fabrice Bacchella, Zhenghua Xue, Qin Li, Darren Sawyer,
                         Vangel Bojaxhi, Ben England, Vikentsi Lapa.

            Run began: Wed Jul 25 13:52:56 2018

            Include fsync in write timing
            O_DIRECT feature enabled
            Auto Mode
            File size set to 102400 kB
            Record Size 4 kB
            Record Size 16 kB
            Record Size 512 kB
            Record Size 1024 kB
            Record Size 16384 kB
            Command line used: iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2
            Output is in kBytes/sec
            Time Resolution = 0.000001 seconds.
            Processor cache size set to 1024 kBytes.
            Processor cache line size set to 32 bytes.
            File stride size set to 17 * record size.
                                                                  random    random     bkwd    record    stride
                  kB  reclen    write  rewrite    read    reread    read     write     read   rewrite      read   fwrite frewrite    fread  freread
              102400       4     8789    15033    18076    18175      552     1550
              102400      16    42530    51919    59579    61526     2178     8438
              102400     512   103850   103808   107850   111118    35341    57268
              102400    1024   102749   103920   106320   110932    56230    73157
              102400   16384    86098   104648   105439   108904   100171    99910

    iozone test complete.

Quick test from disk to disk

    rock64@rockpro64:/mnt$ dd if=/mnt/sd.img of=/media/testfile bs=1G count=1 oflag=direct
    1+0 records in
    1+0 records out
    1073741824 bytes (1.1 GB, 1.0 GiB) copied, 13.0328 s, 82.4 MB/s

Everything looks fine so far. Nothing stands in the way of building the NAS now, does it!?
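For anyone who wants to compare the two iozone runs without squinting at the tables, here is a small sketch in Python. The numbers are copied from the result rows above. The column names, `parse_rows`, `speedup` and `dd_rate_mb_s` are my own labels for illustration and not part of iozone or dd.

```python
# Result rows copied from the two iozone runs above (kBytes/sec as reported).
# With -i 0 -i 1 -i 2 only these six columns are filled in.
COLUMNS = ("write", "rewrite", "read", "reread", "random_read", "random_write")

SSD_ROWS = """
102400 4 9411 15504 18778 18833 10727 12138
102400 16 28420 52412 62043 62207 33101 37892
102400 512 220877 253630 212220 213237 214789 245359
102400 1024 172543 176752 165533 239463 237009 180548
102400 16384 330306 211445 201754 331198 329653 218451
"""

HDD_ROWS = """
102400 4 8789 15033 18076 18175 552 1550
102400 16 42530 51919 59579 61526 2178 8438
102400 512 103850 103808 107850 111118 35341 57268
102400 1024 102749 103920 106320 110932 56230 73157
102400 16384 86098 104648 105439 108904 100171 99910
"""


def parse_rows(text):
    """Map record size (kB) -> {column name: throughput in kB/s}."""
    results = {}
    for line in text.strip().splitlines():
        _file_kb, reclen, *values = (int(field) for field in line.split())
        results[reclen] = dict(zip(COLUMNS, values))
    return results


def speedup(ssd, hdd, reclen, column):
    """How many times faster the SSD is than the HDD for one cell."""
    return ssd[reclen][column] / hdd[reclen][column]


def dd_rate_mb_s(num_bytes, seconds):
    """Throughput in decimal MB/s, the unit dd prints."""
    return num_bytes / seconds / 1e6


if __name__ == "__main__":
    ssd = parse_rows(SSD_ROWS)
    hdd = parse_rows(HDD_ROWS)
    for reclen in sorted(ssd):
        for column in ("random_read", "random_write"):
            ratio = speedup(ssd, hdd, reclen, column)
            print(f"{reclen:>6} kB {column:<12} SSD/HDD = {ratio:.1f}x")
    print(f"dd: {dd_rate_mb_s(1073741824, 13.0328):.1f} MB/s")
```

The gap is largest for small random accesses: at 4 kB records the SSD reads roughly 19 times faster than the HDD (10727 vs. 552 kB/s), while the sequential columns are much closer together. The last line simply recomputes the rate dd reported for the disk-to-disk copy.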