Important!


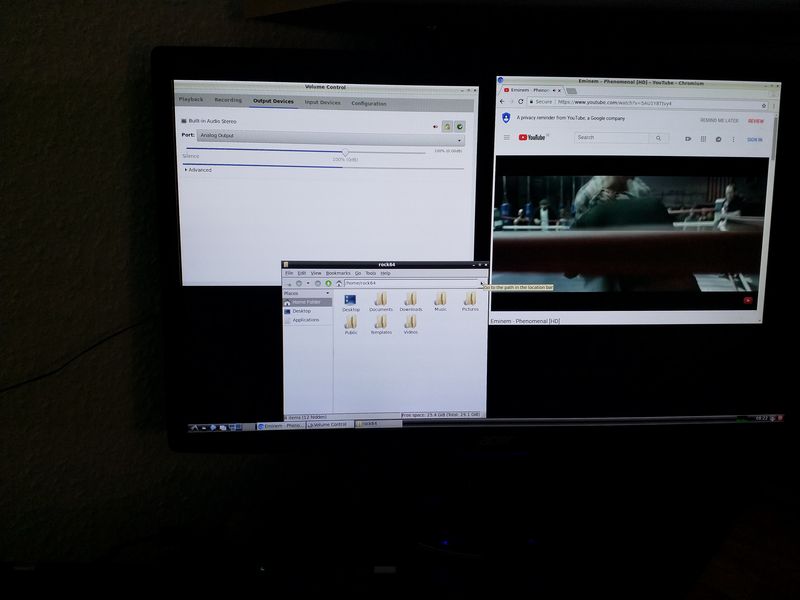

Please keep in mind that this is not an optimized release yet, just a first attempt. A lot of things still need to be adjusted, which will certainly take weeks, if not months!

So take the measurement results with the necessary caution. And remember: once @tkaiser has examined the thing properly, we will have solid measurements too!


Kernel 4.4.x

Angeheftet Images -

-

-

-

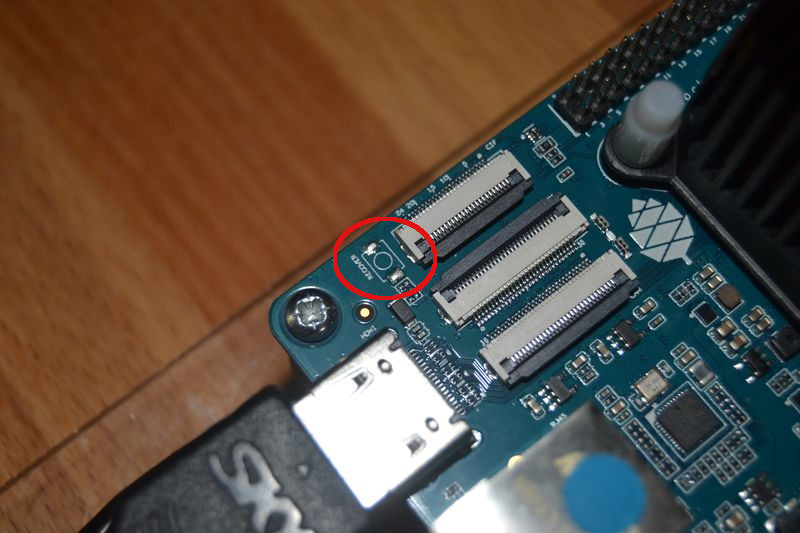

Image 0.6.57 - NVMe paar Notizen

Verschoben Archiv -