Ich kann heute die Fragen aller Fragen beantworten

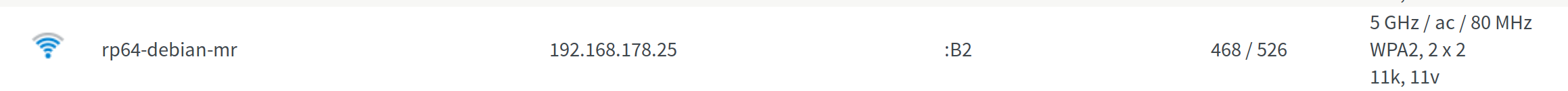

Damit ist leider die Frage immer noch unbeantwortet ob WLan und PCIe zusammen nutzbar ist!!

Es geht!!

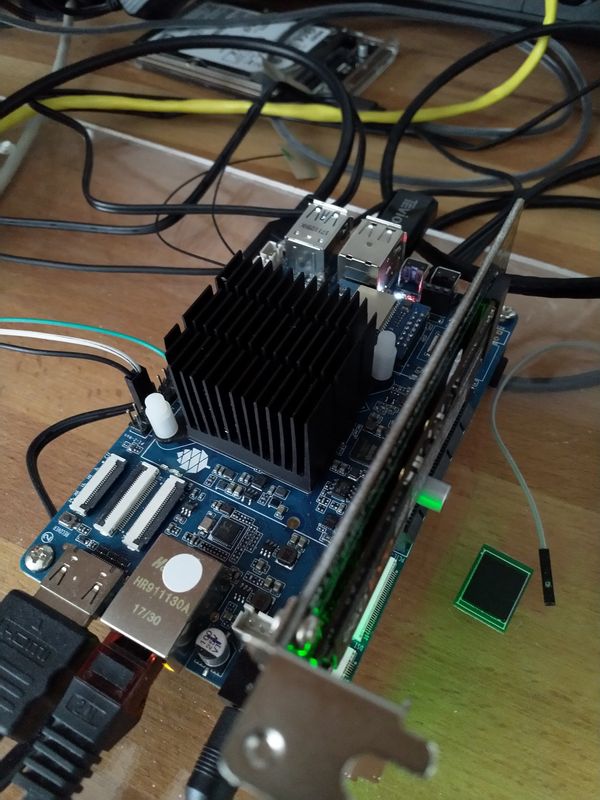

Ich habe von MrFixit ein Testimage der RecalBox, benutzt das selbe Debian wie oben. Die Tage konnte man im IRC verfolgen, wie man dem Grundproblem näher kam und wohl einen Fix gebastelt hat, damit beides zusammen funktioniert. Mr.Fixit hat das in RecalBox eingebaut und ich durfte testen.

# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <NO-CARRIER,BROADCAST,MULTICAST,UP8000> mtu 1500 qdisc pfifo_fast qlen 1000

link/ether 62:03:b0:d6:dc:b3 brd ff:ff:ff:ff:ff:ff

3: wlan0: <BROADCAST,MULTICAST,UP,LOWER_UP8000> mtu 1500 qdisc pfifo_fast qlen 1000

link/ether ac:83:f3:e6:1f:b2 brd ff:ff:ff:ff:ff:ff

inet 192.168.178.27/24 brd 192.168.178.255 scope global wlan0

valid_lft forever preferred_lft forever

inet6 2a02:908:1262:4680:ae83:f3ff:fee6:1fb2/64 scope global dynamic

valid_lft 7145sec preferred_lft 3545sec

inet6 fe80::ae83:f3ff:fee6:1fb2/64 scope link

valid_lft forever preferred_lft forever

# ls /mnt

bin etc media recalbox sd.img test2.img

boot home mnt root selinux tmp

crypthome lib opt run srv usr

dev lost+found proc sbin sys var

# fdisk

BusyBox v1.27.2 (2019-02-01 22:43:19 EST) multi-call binary.

Usage: fdisk [-ul] [-C CYLINDERS] [-H HEADS] [-S SECTORS] [-b SSZ] DISK

Change partition table

-u Start and End are in sectors (instead of cylinders)

-l Show partition table for each DISK, then exit

-b 2048 (for certain MO disks) use 2048-byte sectors

-C CYLINDERS Set number of cylinders/heads/sectors

-H HEADS Typically 255

-S SECTORS Typically 63

# fdisk -l

Disk /dev/mmcblk0: 15 GB, 15931539456 bytes, 31116288 sectors

486192 cylinders, 4 heads, 16 sectors/track

Units: cylinders of 64 * 512 = 32768 bytes

Device Boot StartCHS EndCHS StartLBA EndLBA Sectors Size Id Type

/dev/mmcblk0p1 * 2,10,9 10,50,40 32768 163839 131072 64.0M c Win95 FAT32 (LBA)

Partition 1 does not end on cylinder boundary

/dev/mmcblk0p2 * 16,81,2 277,102,17 262144 4456447 4194304 2048M 83 Linux

Partition 2 does not end on cylinder boundary

/dev/mmcblk0p3 277,102,18 1023,254,63 4456448 31115263 26658816 12.7G 83 Linux

Partition 3 does not end on cylinder boundary

Disk /dev/nvme0n1: 233 GB, 250059350016 bytes, 488397168 sectors

2543735 cylinders, 12 heads, 16 sectors/track

Units: cylinders of 192 * 512 = 98304 bytes

Device Boot StartCHS EndCHS StartLBA EndLBA Sectors Size Id Type

/dev/nvme0n1p1 1,0,1 907,11,16 2048 488397167 488395120 232G 83 Linux

#

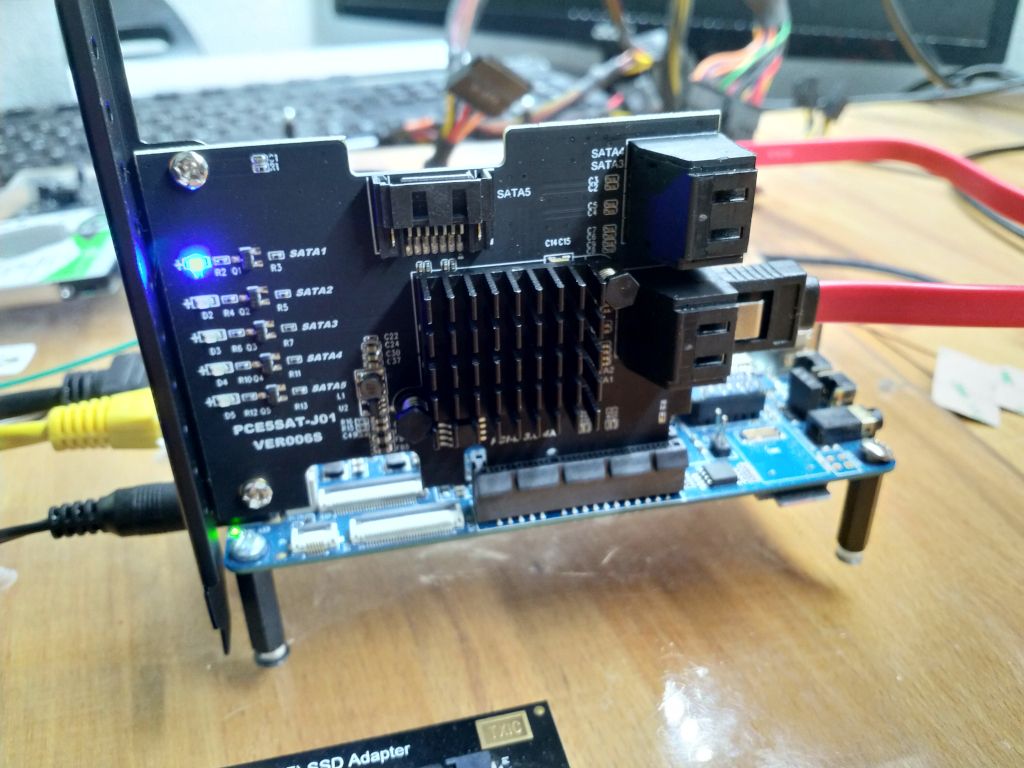

Oben sieht man eine funktionierende WLan-Verbindung, das LAN-Kabel war entfernt. Unten sieht man die PCIe NVMe SSD, gemountet nach /mnt und Inhaltsausgabe.

Das sollte beweisen, das der Ansatz der Lösung funktioniert. Leider kann ich nicht sagen, das es zum jetzigen Zeitpunkt stabil läuft. Ich habe einfach so Reboots, kann den Fehler aktuell aber nicht fangen. Mal sehen ob ich noch was finde.

Aber, es ist ein Anfang!