The end of Windows 10 is approaching, so it's time to consider Linux and LibreOffice

-

It's because we've seen this post 1000 times

wrote on 16 June 2025, 23:56:

and yet you persist. why?

(sorry, this is totally a troll)

-

Perhaps if you ran Linux you'd be less crankyyyyy

later edit : I hope this message was interpreted for the tongue in cheek that it was

wrote on 17 June 2025, 00:10:

Fucking loser

-

Putting Bill Gates’ dick in my mouth is far too high of a licensing fee. I’ll just play Oregon Trail on an Apple IIe instead. But no judgment. You live your best life.

wrote on 17 June 2025, 00:11:

Cringe douche

-

That's not the flex you think it is.

Your preoccupation with image here hurts you more than it helps you.

wrote on 17 June 2025, 00:11:

I really don't give a shit, loser

-

This post did not contain any content.

wrote on 17 June 2025, 00:54:

Call me when Libre doesn't suck/feel like it's stuck in 2003.

I won't hold my breath.

-

I just rage-downgraded back to 10 a couple days ago. Is there any reason why I shouldn't just keep using it after this year? Are we ever going to see a risk of zero-day exploits for it, like what happened to XP after it reached end of support?

wrote on 17 June 2025, 01:18:

Consider running the LTSC version. It gets extended support.

-

I wish I could make parts in FreeCAD anywhere close to as good as I can in Fusion 360... I REALLY miss it since the move to Linux. I'm not anywhere near as excited about my 3D printer anymore since designing parts is a slog and the end result I am generally un-proud of.

I feel like my only option (which sucks) is to buy a second GPU for passthrough and install Windows 10 in a VM that only touches the internet once every 2 weeks to keep Fusion happy.

wrote on 17 June 2025, 01:26:

It's possible to pass through a single GPU. I followed this guide on my Fedora desktop

-

I don't understand how critical business machines which work perfectly fine can be switched to Windows 11.

We have a machine at work which is beefy and has worked as a server and backup for many, many years on Windows 10. Why the hell should I upgrade my business-critical system? Why would I take the risk of breaking stuff? I am sure there are millions of critical systems running on Windows 10 which should not be disturbed by any means; what would Microsoft do about them?

wrote on 17 June 2025, 01:27:

We have a machine at work which is beefy and has worked as a server and backup for many, many years on Windows 10. Why the hell should I upgrade my business-critical system?

Because you should be using a server grade os instead of janking things together with desktop OS installs that just make everything so much harder (and aren't supported for as long).

Sorry, I have to clean up installs like this at least once a year when we take on clients from internal IT that just made things work instead of making something that works right, so I've got opinions.

-

Your business critical system will no longer be supported with security updates which will leave it vulnerable to attack.

I guess, if it's not connected to any sort of outside network, and has no way of accepting data from media like discs or thumb drives then it's perfectly safe, but if that's the case, and it works in isolation, how "business critical" is it?

wrote on 17 June 2025, 01:29:

Hahahahahaha, I still periodically see win2k/2k3 on the network at some clients, with SMBv1 enabled across the domain to make the CISO's eye twitch

-

I always find it odd that posts like this get any downvotes at all. Like, are people really that in love with Windows and/or Microsoft?

wrote on 17 June 2025, 01:45:

Because the people that would or can switch would have already switched after it's been posted for the 1000th time. It's not realistic because the vast majority of people simply don't care. People hate Windows updates enough as it is; to most average people this is good news.

-

Call me when Libre doesn't suck/feel like it's stuck in 2003.

I won't hold my breath.

wrote on 17 June 2025, 01:46:

If you don't like it, try OnlyOffice.

-

Because the people that would or can switch would have already switched after it's been posted for the 1000th time. It's not realistic because the vast majority of people simply don't care. People hate Windows updates enough as it is; to most average people this is good news.

wrote on 17 June 2025, 01:53:

Not caring is why these corporations have the power they do.

-

I've had windows update disabled for years so the fact that it's "end of life" don't mean shit to me. It'll keep chugging along for years more.

That said, I installed Mint a week ago and love it!

wrote on 17 June 2025, 02:06:

Mint was my first Linux OS, and it's been really nice.

-

Nvidia Linux drivers are still kinda iffy these days but so are the Windows ones too

wrote on 17 June 2025, 02:42:

It was definitely an Ubuntu thing - Pop, 2 versions of Ubuntu, and Mint all failed at various points when dealing with GPU drivers, but I'm using closed-source Nvidia drivers on the same GPU in Nobara (Fedora) without issue. Though I guess I haven't tried updating it yet, but all my hardware-accelerated games work as they should.

-

I am grateful that Ubuntu ended up bringing the Linux desktop into the general public's eye, but at the same time, out of all of the popular distros today, I firmly believe there is always a better choice than Ubuntu for any user, new or veteran. It's just a pity that it is the most well known to people who aren't familiar with Linux while not being good at anything, although basically any Linux distro feels like fresh air when compared to the Microsoft experience.

wrote on 17 June 2025, 02:43:

Why is that? What's the problem with Ubuntu? I mean, Ubuntu-based distros seem to hate my bog-standard RTX 3060 GPU for some reason, but besides that. I'm pretty happy with Nobara tho, and wouldn't switch back to Ubuntu even if I knew it'd work with my GPU.

-

This post did not contain any content.

wrote on 17 June 2025, 02:46:

The end of Windows 10 support is approaching. Windows 10 will go on for a while yet.

-

How many laptops do you own lol?

wrote on 17 June 2025, 02:47, last edited by isomorph@feddit.org:

Families exist. I'm the "IT guy" for 3 people using laptops

-

Call me when Libre doesn't suck/feel like it's stuck in 2003.

I won't hold my breath.

wrote on 17 June 2025, 03:17:

Call me when Windows doesn't suck and it's not getting worse year by year. I will laugh every time they add more ads and more tracking.

-

Call me when Libre doesn't suck/feel like it's stuck in 2003.

I won't hold my breath.

wrote on 17 June 2025, 03:18:

Baby duck syndrome.

-

Mint was my first Linux OS, and it's been really nice.

wrote on 17 June 2025, 03:19:

Not my first, but the one I landed on after years. It's just so good.

-

Hi, I’m a product manager of an AI notetaker – would love your feedback

Technology196 vor 14 Tagenvor 14 Tagen 1

1

-

-

-

You're not alone: This email from Google's Gemini team is concerning

Technology 25. Juni 2025, 06:54 1

1

-

-

-

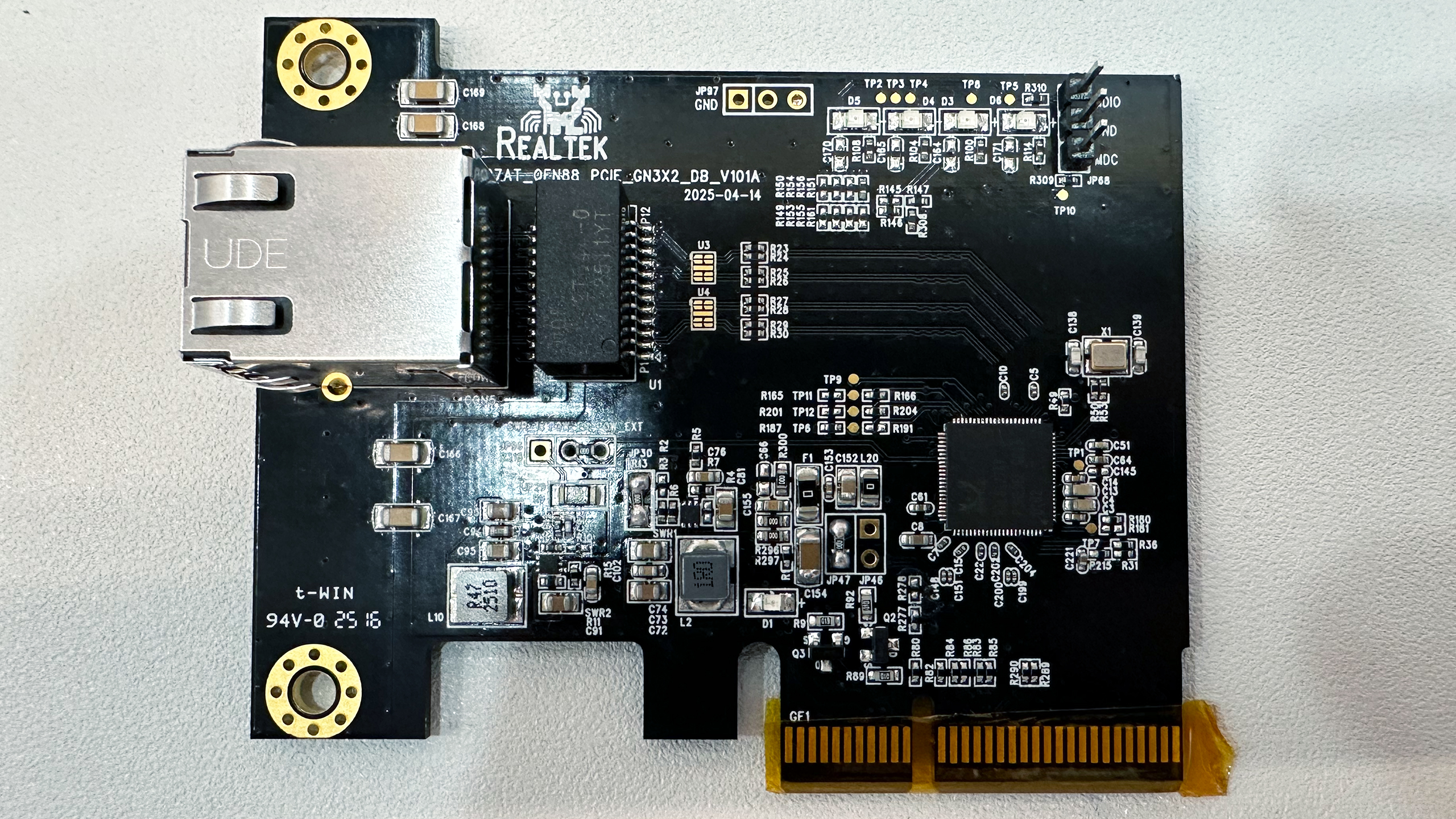

Realtek's $10 tiny 10GbE network adapter is coming to motherboards later this year

Technology 23. Mai 2025, 13:01 1

1

-

Market Structure Rules for Crypto Could End Up Governing Core of U.S. Finance: Le

Technology 13. Mai 2025, 00:05 1

1