Mainline Kernel 4.18.0-rc3


Method 1

INFO

- 4.18.0-rc3-1035-ayufan

- All the files you need to install a mainline kernel.

- Download link: https://github.com/ayufan-rock64/linux-mainline-kernel/releases

- Author: Ayufan

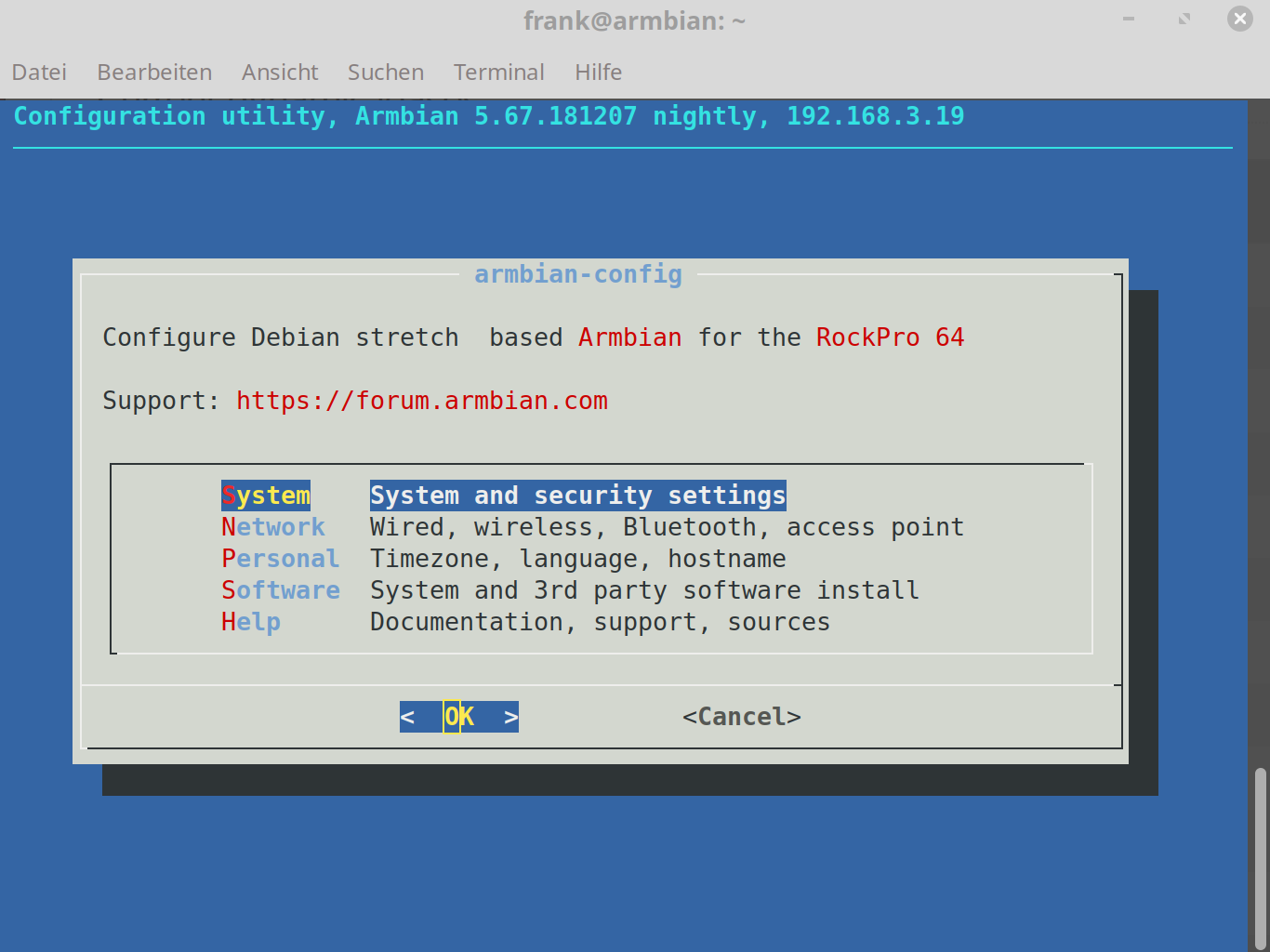

USAGE

An image must already be installed, e.g. 0.6.58 (jenkins-linux-build-rock-64-271).

Then download all the .deb files.

Next, run the following in the directory containing the downloaded files:

```shell
sudo dpkg -i *.deb
```

Then reboot the ROCKPro64.
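After the reboot, `uname -r` prints the release of the running kernel, which is a quick way to see whether the new kernel or the previous one came up. A minimal sketch, assuming the release string of the new kernel contains `4.18.0-rc3` (check the exact string on your system); `kernel_is_running` is a hypothetical helper, not part of ayufan's packages:

```shell
# Hypothetical helper: succeed (exit status 0) if the release string
# printed by `uname -r` contains the given substring, fail otherwise.
kernel_is_running() {
    case "$(uname -r)" in
        *"$1"*) return 0 ;;
        *) return 1 ;;
    esac
}

# Example check. Assumption: the new kernel's release contains "4.18.0-rc3".
if kernel_is_running "4.18.0-rc3"; then
    echo "new kernel is running: $(uname -r)"
else
    echo "a different kernel is running: $(uname -r)"
fi
```

The substring is quoted inside the `case` pattern, so it is matched literally rather than as a glob.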

If the new kernel does not boot or causes problems, you can boot the previous kernel instead. To do so, watch the U-Boot output on the serial console:

```
U-Boot 2017.09-gec1524d (Jun 03 2018 - 14:57:16 +0000), Build: jenkins-linux-build-rock-64-249

Model: Pine64 RockPro64
DRAM: 3.9 GiB
MMC: sdhci@fe330000: 0, dwmmc@fe320000: 1
Card did not respond to voltage select!
mmc_init: -95, time 21
*** Warning - No block device, using default environment

In: serial@ff1a0000
Out: serial@ff1a0000
Err: serial@ff1a0000
Model: Pine64 RockPro64
Net: eth0: ethernet@fe300000
Hit any key to stop autoboot: 0
Card did not respond to voltage select!
mmc_init: -95, time 21
switch to partitions #0, OK
mmc1 is current device
Scanning mmc 1:6...
Found /extlinux/extlinux.conf
Retrieving file: /extlinux/extlinux.conf
reading /extlinux/extlinux.conf
688 bytes read in 3 ms (223.6 KiB/s)
select kernel
1: kernel-latest
2: kernel-previous
Enter choice: 2
2: kernel-previous
```

At the "select kernel" prompt, type "2" and press RETURN as quickly as possible.

The previous kernel is then loaded.


Method 2

Uncomment the pre-releases entry in the file /etc/apt/sources.list.d/ayufan-rock64.list.
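The file can be edited by hand with any editor. As a non-interactive alternative, here is a minimal sketch of a shell function, assuming the pre-releases entry is a commented-out `deb` line that contains the word `pre-releases` (check with `cat` first). The function name `enable_prereleases` is hypothetical, it relies on GNU `sed -i`, and it needs root because it edits a file under /etc:

```shell
# Hypothetical helper: strip the leading "#" from every commented-out
# "deb ..." line that mentions "pre-releases" in the given APT list file.
# Assumption: the entry looks like "# deb <url>/pre-releases /".
enable_prereleases() {
    list="${1:-/etc/apt/sources.list.d/ayufan-rock64.list}"
    # GNU sed, in place; lines without a leading "#" are left unchanged.
    sed -i 's|^#[[:space:]]*\(deb[[:space:]].*pre-releases.*\)|\1|' "$list"
}
```

Because `sudo` cannot call a shell function, define and run it from a root shell (for example after `sudo -i`) by calling `enable_prereleases`. The following `apt-get update` then picks up the newly enabled repository.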

Search for kernel packages:

```shell
apt-cache search linux-image
```
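The search also lists unrelated `linux-image` packages. A small filter can keep only the package names that contain `ayufan`, sorted by version. This is a sketch: the function name `list_ayufan_kernels` is hypothetical, it assumes the usual `apt-cache search` output of one `package - description` line per package, and it assumes the ayufan kernels carry `ayufan` in their names (as in linux-image-4.15.0-rockchip-ayufan-177-g59389fa34). `sort -V` is the GNU coreutils version sort:

```shell
# Hypothetical helper: read `apt-cache search` output on stdin and print
# only the package names (first field) containing "ayufan", version-sorted.
list_ayufan_kernels() {
    awk '{ print $1 }' | grep 'ayufan' | sort -V
}
```

On the board: `apt-cache search linux-image | list_ayufan_kernels`. The last line of the output is the newest matching kernel package.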

Install the selected <kernel>, e.g. linux-image-4.15.0-rockchip-ayufan-177-g59389fa34:

```shell
sudo apt-get update
sudo apt-get install <kernel>
```

Reboot:

```shell
sudo reboot
```

Source: https://github.com/ayufan-rock64/linux-build/blob/master/recipes/kernel-upgrade.md
