ChatGPT 5 power consumption could be as much as eight times higher than GPT 4 — research institute estimates medium-sized GPT-5 response can consume up to 40 watt-hours of electricity

-

It will not go away at this point. There are already too many daily users who use it for studying, work, chatting, and looking things up.

If not OpenAI, it will be another service.

And most importantly, Pandora's box has been opened for deepfake scams and illegal use. Nobody will put it back in the box, because even if everyone agreed to make it illegal everywhere, it's already too late.

-

40 watt-hours? That's the energy usage of a very small laptop.

Well, over the course of an hour or two. But it's correct that a dryer run, even with a heat pump, uses significantly more than 40 Wh.

-

40 watt-hours? That's the energy usage of a very small laptop.

Imagine if you had to empty your whole laptop battery every time you generated a 20-line response that may not even be correct... That would end up consuming power really fast.

-

The University of Rhode Island's AI lab estimates that GPT-5 averages just over 18 Wh per query, so putting all of ChatGPT's reported 2.5 billion requests a day through the model could see energy usage as high as 45 GWh.

A daily energy use of 45 GWh is enormous. A typical modern nuclear power plant produces between 1 and 1.6 GW of electricity per reactor, so data centers running OpenAI's GPT-5 at 18 Wh per query could require the power equivalent of two to three nuclear power reactors, an amount that could be enough to power a small country.
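The conversion in this estimate can be checked with a few lines of arithmetic. This is a rough back-of-envelope sketch that uses only the article's own inputs (about 18 Wh per query, 2.5 billion queries per day, 1 to 1.6 GW per reactor); the function names are illustrative. The key step is that a reactor's output is power (GW), so the daily energy (GWh) has to be spread over 24 hours before the two can be compared.

```python
# Back-of-envelope check of the quoted GPT-5 energy figures.
# Inputs are the article's estimates, not measurements.
WH_PER_QUERY = 18.0        # average energy per query, Wh
QUERIES_PER_DAY = 2.5e9    # reported ChatGPT requests per day
REACTOR_GW = (1.0, 1.6)    # typical output range of one reactor, GW


def daily_energy_gwh(wh_per_query: float, queries_per_day: float) -> float:
    """Total energy per day in GWh (1 GWh = 1e9 Wh)."""
    return wh_per_query * queries_per_day / 1e9


def average_power_gw(daily_gwh: float) -> float:
    """Average continuous power in GW: energy per day spread over 24 hours."""
    return daily_gwh / 24.0


def reactors_equivalent(power_gw: float, reactor_gw: float) -> float:
    """How many reactors of a given output match that average power."""
    return power_gw / reactor_gw


energy = daily_energy_gwh(WH_PER_QUERY, QUERIES_PER_DAY)
power = average_power_gw(energy)
print(f"daily energy:  {energy:.1f} GWh")   # prints "daily energy:  45.0 GWh"
print(f"average power: {power:.3f} GW")     # prints "average power: 1.875 GW"
for gw in REACTOR_GW:
    print(f"reactors at {gw} GW each: {reactors_equivalent(power, gw):.2f}")
```

With these inputs the average draw is about 1.9 GW, i.e. roughly 1.2 to 1.9 reactors' worth of continuous output depending on reactor size. The sketch ignores peaks, cooling overhead, and idle capacity, any of which would change the real figure.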

That's alright. When they've got a generation of people who can't even hold a conversation without it, let alone do a job, that price increase will drop that energy use pretty rapidly.

-

I don't care how rough the estimate is, LLMs are using insane amounts of power, and the message I'm getting here is that the newest incarnation uses even more.

BTW, a lot of it seems to be just inefficient coding, as DeepSeek has shown.

Also don't forget how people like wasting resources by asking questions like "what's the weather today".

-

I don't care how rough the estimate is, LLMs are using insane amounts of power, and the message I'm getting here is that the newest incarnation uses even more.

BTW, a lot of it seems to be just inefficient coding, as DeepSeek has shown.

And water usage, which will also increase as fires increase and people have trouble getting access to clean water.

AI water footprint suggests that large language models are thirsty

Analysis warns that enormous AI water footprint could pose a major roadblock to sustainable evolution of large language models such as GPT-4.

TechHQ (techhq.com)

-

Can you give some examples of those technologies? I'd be interested in how many weren't replaced with something more efficient or convenient.

Technologies come and go, but when a globally popular one vanishes, it's often because it was replaced with something else.

So let's say we need LLMs to go away. What would replace them? Impossible to answer, I know, but that's what it would take.

We can't even get rid of Facebook and Twitter.

But that being said, LLMs will be 100x more efficient at some point, like any other new technology. We are just not there yet.

-

It takes less energy to dry a full load of clothes

A standard dryer is more like 2-5 kWh per load, far more than 40 Wh.
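To put the two numbers side by side, the dryer figure has to be converted from kWh to Wh first (1 kWh = 1,000 Wh), which is exactly the mix-up that comes up in this thread. A minimal sketch, assuming the 2 to 5 kWh per load quoted here and the headline's upper estimate of 40 Wh per response; the function name is illustrative:

```python
# Compare one dryer load with GPT-5 responses, using figures from the thread.
DRYER_KWH_PER_LOAD = (2.0, 5.0)  # commenter's range for a standard dryer
WH_PER_RESPONSE = 40.0           # headline's upper estimate for a medium response


def responses_per_load(dryer_kwh: float, wh_per_response: float) -> float:
    """Number of responses that use as much energy as one dryer load."""
    dryer_wh = dryer_kwh * 1000.0  # convert kWh to Wh so both sides share a unit
    return dryer_wh / wh_per_response


for kwh in DRYER_KWH_PER_LOAD:
    n = responses_per_load(kwh, WH_PER_RESPONSE)
    print(f"{kwh} kWh load = about {n:.0f} responses at {WH_PER_RESPONSE} Wh each")
```

So one dryer load corresponds to roughly 50 to 125 of these upper-estimate responses.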

-

Technologies come and go, but when a globally popular one vanishes, it's often because it was replaced with something else.

So let's say we need LLMs to go away. What would replace them? Impossible to answer, I know, but that's what it would take.

We can't even get rid of Facebook and Twitter.

But that being said, LLMs will be 100x more efficient at some point, like any other new technology. We are just not there yet.

@themurphy @rigatti There is one difference: LLMs can't simply be made more efficient; there is an inherent limitation to the technology.

https://blog.dshr.org/2021/03/internet-archive-storage.html

In 2021 they used 200 PB, and they certainly didn't make a copy of the complete internet. Now ask yourself: can all of this information fit into a 1 TB model without losing any of it? (Side note: DeepSeek R1 is 404 GB, so not even 1 TB.) Local LLMs are usually under 16 GB.

This technology never has been, and never will be, able to replicate the original information 100%.

It has certain uses (machine learning has been around much longer), but it's not what people want it to be (IMHO).
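The size gap this comment points to can be made concrete as a ratio. This is a rough sketch using decimal units and the figures quoted above (200 PB for the Internet Archive in 2021, 404 GB for DeepSeek R1, 16 GB for a typical local model); it only compares raw byte counts and says nothing about how a model actually stores what it learns. The names are illustrative.

```python
# Ratio of archived data to model size, using the figures from the comment.
PB, TB, GB = 10**15, 10**12, 10**9  # decimal (SI) units

ARCHIVE_BYTES = 200 * PB  # Internet Archive storage in 2021

MODEL_BYTES = {
    "1 TB model": 1 * TB,
    "DeepSeek R1": 404 * GB,
    "local LLM": 16 * GB,
}


def size_ratio(source_bytes: int, model_bytes: int) -> float:
    """How many times larger the source data is than the model."""
    return source_bytes / model_bytes


for name, size in MODEL_BYTES.items():
    print(f"{name}: archive is {size_ratio(ARCHIVE_BYTES, size):,.0f}x larger")
```

That works out to roughly 200,000x for a 1 TB model, about 495,000x for DeepSeek R1, and 12,500,000x for a 16 GB local model.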

-

The University of Rhode Island's AI lab estimates that GPT-5 averages just over 18 Wh per query, so putting all of ChatGPT's reported 2.5 billion requests a day through the model could see energy usage as high as 45 GWh.

A daily energy use of 45 GWh is enormous. A typical modern nuclear power plant produces between 1 and 1.6 GW of electricity per reactor, so data centers running OpenAI's GPT-5 at 18 Wh per query could require the power equivalent of two to three nuclear power reactors, an amount that could be enough to power a small country.

Tech hasn't improved that much in the last decade. All that's happened is that more cores have been added; the single-thread speed of CPUs has stagnated.

My home PC consumes more power than my Pentium 3 consumed 25 years ago. All efficiency gains are lost to scaling for more processing power. All improvements in processing power are lost to shitty, bloated code.

We don't have the tech for AI. We're just scaling up to the electricity demand of a small country and pretending we have the tech for AI.

-

It takes less energy to dry a full load of clothes

Maybe you're mixing Wh with kWh. 40Wh is not that much, but it's still a lot for a single request.

-

Maybe you're mixing Wh with kWh. 40Wh is not that much, but it's still a lot for a single request.

Yeah I think I have

-

And water usage, which will also increase as fires increase and people have trouble getting access to clean water.

AI water footprint suggests that large language models are thirsty

Analysis warns that enormous AI water footprint could pose a major roadblock to sustainable evolution of large language models such as GPT-4.

TechHQ (techhq.com)

It would only take one regulation to fix that:

Datacenters that use liquid cooling must use closed loop systems.

The reason they don't, and why they set up in the desert, is that water is incredibly cheap and the energy needed to cool a closed-loop system is expensive. So they use evaporative open-loop systems.
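Why open-loop evaporative cooling uses so much water follows from basic physics: the heat leaves the building by evaporating water. A minimal, idealized sketch, assuming all of the heat is rejected by evaporation and a latent heat of about 2.44 MJ/kg (water near 25 °C); real cooling towers also lose water to blowdown and drift, which this ignores. The function name is illustrative.

```python
# Idealized water use of evaporative cooling: all heat removed by evaporation.
MJ_PER_KWH = 3.6               # 1 kWh = 3.6 MJ
LATENT_HEAT_MJ_PER_KG = 2.44   # assumption: latent heat of water near 25 C


def litres_evaporated(heat_kwh: float,
                      latent_heat_mj_per_kg: float = LATENT_HEAT_MJ_PER_KG) -> float:
    """Water evaporated to reject `heat_kwh` of heat, taking 1 kg as 1 litre."""
    return heat_kwh * MJ_PER_KWH / latent_heat_mj_per_kg


print(f"{litres_evaporated(1.0):.2f} L per kWh of heat")
```

That is roughly 1.5 litres per kWh of heat, and since nearly all the electricity a server draws ends up as heat, water use scales with energy use. A closed-loop system avoids this evaporation but has to spend more energy rejecting the heat to air instead, which is the cost trade-off described above.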

-

they vibe calculated it.

Doesn't matter. Their audience isn't interested in accuracy; they only want more things to feel outraged about.

-

It would only take one regulation to fix that:

Datacenters that use liquid cooling must use closed loop systems.

The reason they don't, and why they set up in the desert, is that water is incredibly cheap and the energy needed to cool a closed-loop system is expensive. So they use evaporative open-loop systems.

Unfortunately, I wonder whether it's more expensive to set up a closed-loop system or to buy lawmakers who will vote against bills requiring one. It's a tale as old as time.

-

Unfortunately, I wonder whether it's more expensive to set up a closed-loop system or to buy lawmakers who will vote against bills requiring one. It's a tale as old as time.

Politicians are cheap

-

Politicians are cheap

Yeah sorry forgot my /s there

-

The University of Rhode Island's AI lab estimates that GPT-5 averages just over 18 Wh per query, so putting all of ChatGPT's reported 2.5 billion requests a day through the model could see energy usage as high as 45 GWh.

A daily energy use of 45 GWh is enormous. A typical modern nuclear power plant produces between 1 and 1.6 GW of electricity per reactor, so data centers running OpenAI's GPT-5 at 18 Wh per query could require the power equivalent of two to three nuclear power reactors, an amount that could be enough to power a small country.

That's a lot. Remember to add "-noai" to your Google searches.

-

Can you give some examples of those technologies? I'd be interested in how many weren't replaced with something more efficient or convenient.

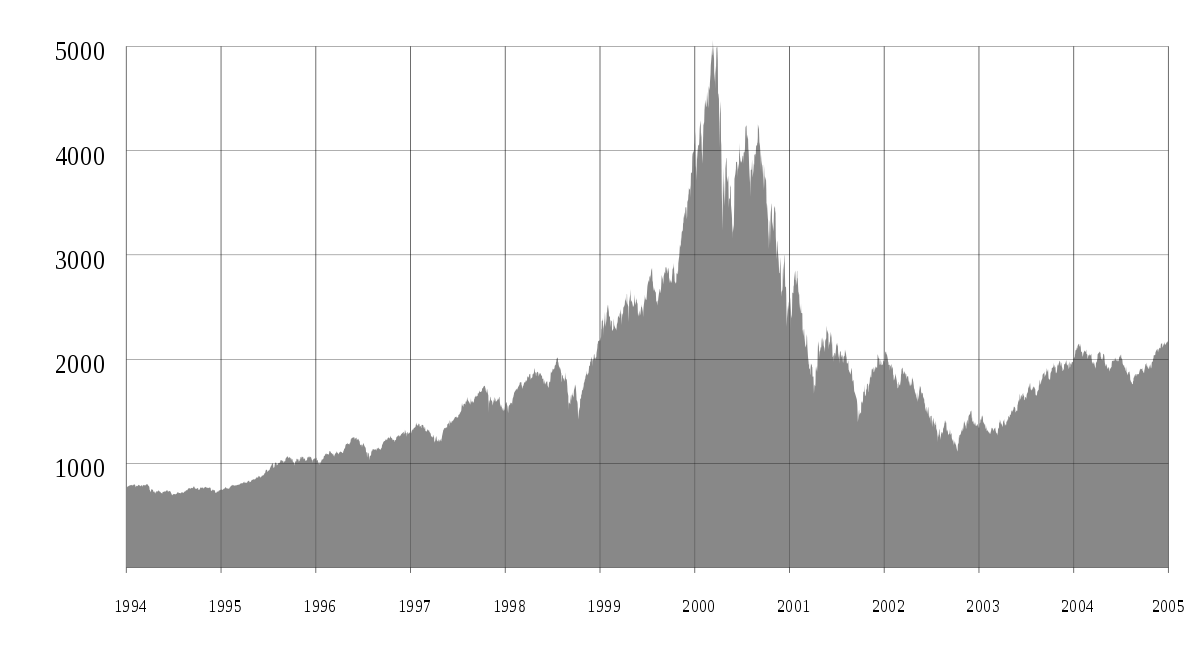

There were certainly companies that survived, because yes, the idea of websites being interactive rather than informational was huge, but everyone jumped on that bandwagon to build useless shit.

As an example, this is today’s ProductHunt

And yesterday’s was AI, and the day before that it was AI, but most of them are demonstrating little value with high valuations.

LLMs will survive, likely improving into coordinator models that request data from SLMs (small language models) and connect through MCP (Model Context Protocol), but the investment bubble can't be sustained.

-

That's a lot. Remember to add "-noai" to your Google searches.

I'm just going to ignore the AI recommendations, let them burn money.
