Benchmark Mainline 4.17.0-rc6

-

LAN (Version 4.17.0-rc6)

Interface speed

    rock64@rockpro64:~$ iperf3 -c 192.168.3.213
    Connecting to host 192.168.3.213, port 5201
    [  4] local 192.168.3.7 port 50632 connected to 192.168.3.213 port 5201
    [ ID] Interval           Transfer     Bandwidth       Retr  Cwnd
    [  4]   0.00-1.00   sec  99.5 MBytes   834 Mbits/sec    1    344 KBytes
    [  4]   1.00-2.00   sec  98.5 MBytes   826 Mbits/sec    0    351 KBytes
    [  4]   2.00-3.00   sec  98.5 MBytes   826 Mbits/sec    0    359 KBytes
    [  4]   3.00-4.00   sec  98.2 MBytes   824 Mbits/sec    1    298 KBytes
    [  4]   4.00-5.00   sec  97.9 MBytes   821 Mbits/sec    0    324 KBytes
    [  4]   5.00-6.00   sec  97.9 MBytes   821 Mbits/sec    0    337 KBytes
    [  4]   6.00-7.00   sec  97.9 MBytes   821 Mbits/sec    0    352 KBytes
    [  4]   7.00-8.00   sec  97.9 MBytes   821 Mbits/sec    0    359 KBytes
    [  4]   8.00-9.00   sec  97.3 MBytes   816 Mbits/sec    0    361 KBytes
    [  4]   9.00-10.00  sec  97.8 MBytes   821 Mbits/sec    0    361 KBytes
    - - - - - - - - - - - - - - - - - - - - - - - - -
    [ ID] Interval           Transfer     Bandwidth       Retr
    [  4]   0.00-10.00  sec   981 MBytes   823 Mbits/sec    2             sender
    [  4]   0.00-10.00  sec   979 MBytes   822 Mbits/sec                  receiver

    iperf Done.

    rock64@rockpro64:~$ iperf3 -s
    -----------------------------------------------------------
    Server listening on 5201
    -----------------------------------------------------------
    Accepted connection from 192.168.3.213, port 58606
    [  5] local 192.168.3.7 port 5201 connected to 192.168.3.213 port 58608
    [ ID] Interval           Transfer     Bandwidth
    [  5]   0.00-1.00   sec   109 MBytes   914 Mbits/sec
    [  5]   1.00-2.00   sec   112 MBytes   941 Mbits/sec
    [  5]   2.00-3.00   sec   112 MBytes   942 Mbits/sec
    [  5]   3.00-4.00   sec   112 MBytes   941 Mbits/sec
    [  5]   4.00-5.00   sec   112 MBytes   942 Mbits/sec
    [  5]   5.00-6.00   sec   112 MBytes   941 Mbits/sec
    [  5]   6.00-7.00   sec   112 MBytes   941 Mbits/sec
    [  5]   7.00-8.00   sec   112 MBytes   942 Mbits/sec
    [  5]   8.00-9.00   sec   112 MBytes   941 Mbits/sec
    [  5]   9.00-10.00  sec   112 MBytes   942 Mbits/sec
    [  5]  10.00-10.03  sec  3.09 MBytes   932 Mbits/sec
    - - - - - - - - - - - - - - - - - - - - - - - - -
    [ ID] Interval           Transfer     Bandwidth
    [  5]   0.00-10.03  sec  0.00 Bytes  0.00 bits/sec                  sender
    [  5]   0.00-10.03  sec  1.10 GBytes   939 Mbits/sec                  receiver
    -----------------------------------------------------------
    Server listening on 5201
    -----------------------------------------------------------
    ^Ciperf3: interrupt - the server has terminated

There is still room for improvement here: the board sends at about 823 Mbits/sec but receives at about 939 Mbits/sec, and the result with 4.4.126 was better.
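To put the two directions side by side, here is a minimal Python sketch that pulls the summary bandwidth out of iperf3 text output and relates it to the nominal 1000 Mbits/sec of Gigabit Ethernet. The function names and the regular expression are my own illustration and not part of iperf3; the sample lines are copied from the runs above.

```python
import re

# Matches iperf3 summary lines such as
# "... 823 Mbits/sec    2   sender" or "... 939 Mbits/sec   receiver".
# The optional digit group skips the "Retr" column when it is present.
SUMMARY = re.compile(r"([\d.]+)\s+([KMG]?)bits/sec\s+(?:\d+\s+)?(sender|receiver)")

# Factors to convert each unit prefix to Mbits/sec.
SCALE = {"": 1e-6, "K": 1e-3, "M": 1.0, "G": 1e3}


def parse_summary(text):
    """Return {'sender': Mbit/s, 'receiver': Mbit/s} found in iperf3 output."""
    result = {}
    for value, prefix, role in SUMMARY.findall(text):
        result[role] = float(value) * SCALE[prefix]
    return result


def link_utilisation(mbits, link_mbits=1000.0):
    """Percentage of the nominal link rate (assumed: Gigabit Ethernet)."""
    return 100.0 * mbits / link_mbits


# Summary lines copied from the two runs above.
CLIENT_RUN = """\
[  4]   0.00-10.00  sec   981 MBytes   823 Mbits/sec    2             sender
[  4]   0.00-10.00  sec   979 MBytes   822 Mbits/sec                  receiver
"""
SERVER_RUN = """\
[  5]   0.00-10.03  sec  0.00 Bytes  0.00 bits/sec                  sender
[  5]   0.00-10.03  sec  1.10 GBytes   939 Mbits/sec                  receiver
"""

if __name__ == "__main__":
    tx = parse_summary(CLIENT_RUN)["sender"]
    rx = parse_summary(SERVER_RUN)["receiver"]
    print(f"transmit: {tx:.0f} Mbits/sec ({link_utilisation(tx):.1f}% of line rate)")
    print(f"receive:  {rx:.0f} Mbits/sec ({link_utilisation(rx):.1f}% of line rate)")
```

The same parser can be pointed at saved output from a 4.4.126 run to compare the two kernels directly.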

-

A small stress test for the CPU

Installation

    sudo apt-get install p7zip p7zip-full p7zip-rar

Test

    rock64@rockpro64:~$ 7zr b

    7-Zip (a) [64] 16.02 : Copyright (c) 1999-2016 Igor Pavlov : 2016-05-21
    p7zip Version 16.02 (locale=C,Utf16=off,HugeFiles=on,64 bits,6 CPUs LE)

    LE
    CPU Freq:  1796  1798  1798  1798  1798  1798  1798  1798  1798

    RAM size:    3875 MB,  # CPU hardware threads:   6
    RAM usage:   1323 MB,  # Benchmark threads:      6

                           Compressing  |                  Decompressing
    Dict     Speed Usage    R/U Rating  |      Speed Usage    R/U Rating
             KiB/s     %   MIPS   MIPS  |      KiB/s     %   MIPS   MIPS

    22:       3653   373    953   3555  |      93351   522   1525   7961
    23:       3598   363   1010   3667  |      93257   531   1519   8069
    24:       4631   488   1021   4980  |      89849   520   1516   7886
    25:       4811   493   1115   5494  |      88398   522   1506   7867
    ----------------------------------  | ------------------------------
    Avr:             429   1025   4424  |              524   1516   7946
    Tot:             477   1271   6185

Pretty much the same as with 4.4.126. The frequencies look odd, though.
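One way to cross-check the "CPU Freq" line is to ask the kernel's cpufreq subsystem what each core is currently running at. The sketch below reads the standard sysfs file `scaling_cur_freq` (values in kHz) for every core; the function name and the `sysfs_root` parameter are my own and only exist so the code can be pointed at a test directory. It assumes the running kernel exposes cpufreq for this board, which is not guaranteed on an early mainline release candidate.

```python
from pathlib import Path


def read_cpu_freqs(sysfs_root="/sys/devices/system/cpu"):
    """Return {cpu_name: MHz} for every core that exposes cpufreq."""
    freqs = {}
    pattern = "cpu[0-9]*/cpufreq/scaling_cur_freq"
    for freq_file in sorted(Path(sysfs_root).glob(pattern)):
        khz = int(freq_file.read_text().strip())  # sysfs reports kHz
        cpu_name = freq_file.parent.parent.name   # e.g. "cpu0"
        freqs[cpu_name] = khz / 1000.0
    return freqs


if __name__ == "__main__":
    freqs = read_cpu_freqs()
    if not freqs:
        print("no cpufreq information exposed by this kernel")
    for cpu, mhz in freqs.items():
        print(f"{cpu}: {mhz:.0f} MHz")
```

Running this in a second terminal while `7zr b` is active shows whether the clusters are actually clocked the way the benchmark header suggests.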

-

Memory test

    rock64@rockpro64:~/tinymembench$ ./tinymembench
    tinymembench v0.4.9 (simple benchmark for memory throughput and latency)

    ==========================================================================
    == Memory bandwidth tests                                               ==
    ==                                                                      ==
    == Note 1: 1MB = 1000000 bytes                                          ==
    == Note 2: Results for 'copy' tests show how many bytes can be          ==
    ==         copied per second (adding together read and writen           ==
    ==         bytes would have provided twice higher numbers)              ==
    == Note 3: 2-pass copy means that we are using a small temporary buffer ==
    ==         to first fetch data into it, and only then write it to the   ==
    ==         destination (source -> L1 cache, L1 cache -> destination)    ==
    == Note 4: If sample standard deviation exceeds 0.1%, it is shown in    ==
    ==         brackets                                                     ==
    ==========================================================================

    C copy backwards                            : 3410.2 MB/s
    C copy backwards (32 byte blocks)           : 3409.1 MB/s
    C copy backwards (64 byte blocks)           : 3409.6 MB/s
    C copy                                      : 3442.3 MB/s
    C copy prefetched (32 bytes step)           : 3419.2 MB/s
    C copy prefetched (64 bytes step)           : 3418.5 MB/s
    C 2-pass copy                               : 3135.1 MB/s (22.4%)
    C 2-pass copy prefetched (32 bytes step)    : 3184.8 MB/s
    C 2-pass copy prefetched (64 bytes step)    : 3183.4 MB/s
    C fill                                      : 7834.3 MB/s (1.0%)
    C fill (shuffle within 16 byte blocks)      : 7861.3 MB/s (1.0%)
    C fill (shuffle within 32 byte blocks)      : 7720.5 MB/s
    C fill (shuffle within 64 byte blocks)      : 7716.6 MB/s
    ---
    standard memcpy                             : 1815.5 MB/s
    standard memset                             : 7751.3 MB/s (0.1%)
    ---
    NEON LDP/STP copy                           : 1866.9 MB/s (0.2%)
    NEON LDP/STP copy pldl2strm (32 bytes step) : 1225.5 MB/s (0.3%)
    NEON LDP/STP copy pldl2strm (64 bytes step) : 1542.8 MB/s
    NEON LDP/STP copy pldl1keep (32 bytes step) : 1951.1 MB/s
    NEON LDP/STP copy pldl1keep (64 bytes step) : 1955.7 MB/s
    NEON LD1/ST1 copy                           : 1854.5 MB/s (0.7%)
    NEON STP fill                               : 7745.0 MB/s (0.3%)
    NEON STNP fill                              : 4083.9 MB/s (16.4%)
    ARM LDP/STP copy                            : 1869.4 MB/s (0.2%)
    ARM STP fill                                : 7751.5 MB/s (0.2%)
    ARM STNP fill                               : 2843.5 MB/s (4.7%)

    ==========================================================================
    == Framebuffer read tests.                                              ==
    ==                                                                      ==
    == Many ARM devices use a part of the system memory as the framebuffer, ==
    == typically mapped as uncached but with write-combining enabled.       ==
    == Writes to such framebuffers are quite fast, but reads are much       ==
    == slower and very sensitive to the alignment and the selection of      ==
    == CPU instructions which are used for accessing memory.                ==
    ==                                                                      ==
    == Many x86 systems allocate the framebuffer in the GPU memory,         ==
    == accessible for the CPU via a relatively slow PCI-E bus. Moreover,    ==
    == PCI-E is asymmetric and handles reads a lot worse than writes.       ==
    ==                                                                      ==
    == If uncached framebuffer reads are reasonably fast (at least 100 MB/s ==
    == or preferably >300 MB/s), then using the shadow framebuffer layer    ==
    == is not necessary in Xorg DDX drivers, resulting in a nice overall    ==
    == performance improvement. For example, the xf86-video-fbturbo DDX     ==
    == uses this trick.                                                     ==
    ==========================================================================

    NEON LDP/STP copy (from framebuffer)        :  231.8 MB/s
    NEON LDP/STP 2-pass copy (from framebuffer) :  222.4 MB/s
    NEON LD1/ST1 copy (from framebuffer)        :   59.9 MB/s
    NEON LD1/ST1 2-pass copy (from framebuffer) :   59.3 MB/s
    ARM LDP/STP copy (from framebuffer)         :  118.5 MB/s
    ARM LDP/STP 2-pass copy (from framebuffer)  :  116.1 MB/s

    ==========================================================================
    == Memory latency test                                                  ==
    ==                                                                      ==
    == Average time is measured for random memory accesses in the buffers   ==
    == of different sizes. The larger is the buffer, the more significant   ==
    == are relative contributions of TLB, L1/L2 cache misses and SDRAM      ==
    == accesses. For extremely large buffer sizes we are expecting to see   ==
    == page table walk with several requests to SDRAM for almost every      ==
    == memory access (though 64MiB is not nearly large enough to experience ==
    == this effect to its fullest).                                         ==
    ==                                                                      ==
    == Note 1: All the numbers are representing extra time, which needs to  ==
    ==         be added to L1 cache latency. The cycle timings for L1 cache ==
    ==         latency can be usually found in the processor documentation. ==
    == Note 2: Dual random read means that we are simultaneously performing ==
    ==         two independent memory accesses at a time. In the case if    ==
    ==         the memory subsystem can't handle multiple outstanding       ==
    ==         requests, dual random read has the same timings as two       ==
    ==         single reads performed one after another.                    ==
    ==========================================================================

    block size : single random read / dual random read, [MADV_NOHUGEPAGE]
          1024 :    0.0 ns          /     0.0 ns
          2048 :    0.0 ns          /     0.0 ns
          4096 :    0.0 ns          /     0.0 ns
          8192 :    0.0 ns          /     0.0 ns
         16384 :    0.0 ns          /     0.0 ns
         32768 :    0.0 ns          /     0.0 ns
         65536 :    4.8 ns          /     8.1 ns
        131072 :    7.4 ns          /    11.1 ns
        262144 :    8.7 ns          /    12.5 ns
        524288 :   10.2 ns          /    14.3 ns
       1048576 :   85.6 ns          /   133.1 ns
       2097152 :  127.1 ns          /   173.5 ns
       4194304 :  153.4 ns          /   194.8 ns
       8388608 :  167.3 ns          /   205.0 ns
      16777216 :  175.4 ns          /   211.7 ns
      33554432 :  180.5 ns          /   216.4 ns
      67108864 :  183.5 ns          /   219.0 ns

    block size : single random read / dual random read, [MADV_HUGEPAGE]
          1024 :    0.0 ns          /     0.0 ns
          2048 :    0.0 ns          /     0.0 ns
          4096 :    0.0 ns          /     0.0 ns
          8192 :    0.0 ns          /     0.0 ns
         16384 :    0.0 ns          /     0.0 ns
         32768 :    0.0 ns          /     0.0 ns
         65536 :    4.8 ns          /     8.1 ns
        131072 :    7.4 ns          /    11.3 ns
        262144 :    8.7 ns          /    12.7 ns
        524288 :   10.2 ns          /    14.1 ns
       1048576 :   85.6 ns          /   133.0 ns
       2097152 :  126.4 ns          /   172.7 ns
       4194304 :  147.0 ns          /   186.2 ns
       8388608 :  157.3 ns          /   191.3 ns
      16777216 :  162.6 ns          /   193.4 ns
      33554432 :  165.2 ns          /   194.3 ns
      67108864 :  166.5 ns          /   194.7 ns

-

iozone

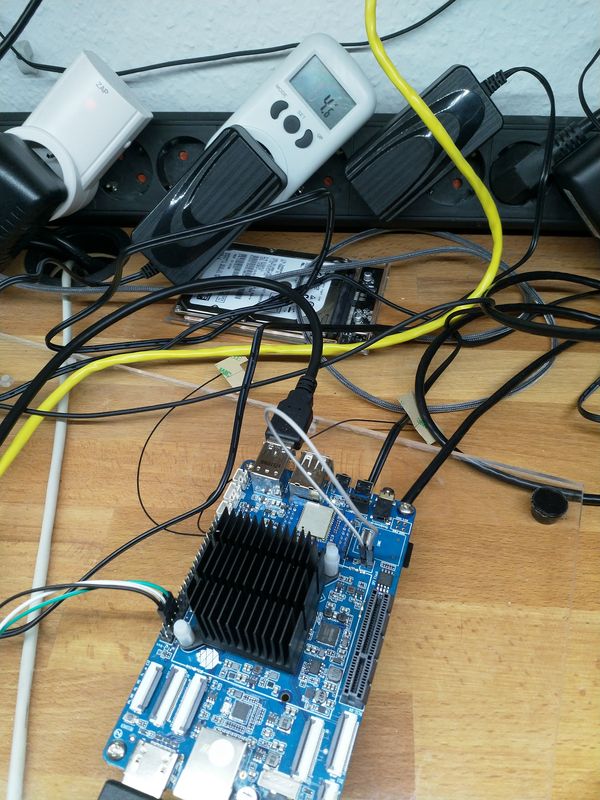

5GT/s x2

    rock64@rockpro64:/mnt$ sudo iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2
            Iozone: Performance Test of File I/O
                    Version $Revision: 3.429 $
                    Compiled for 64 bit mode.
                    Build: linux

            Contributors:William Norcott, Don Capps, Isom Crawford, Kirby Collins
                         Al Slater, Scott Rhine, Mike Wisner, Ken Goss
                         Steve Landherr, Brad Smith, Mark Kelly, Dr. Alain CYR,
                         Randy Dunlap, Mark Montague, Dan Million, Gavin Brebner,
                         Jean-Marc Zucconi, Jeff Blomberg, Benny Halevy, Dave Boone,
                         Erik Habbinga, Kris Strecker, Walter Wong, Joshua Root,
                         Fabrice Bacchella, Zhenghua Xue, Qin Li, Darren Sawyer,
                         Vangel Bojaxhi, Ben England, Vikentsi Lapa.

            Run began: Sat Jun 16 06:34:43 2018

            Include fsync in write timing
            O_DIRECT feature enabled
            Auto Mode
            File size set to 102400 kB
            Record Size 4 kB
            Record Size 16 kB
            Record Size 512 kB
            Record Size 1024 kB
            Record Size 16384 kB
            Command line used: iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2
            Output is in kBytes/sec
            Time Resolution = 0.000001 seconds.
            Processor cache size set to 1024 kBytes.
            Processor cache line size set to 32 bytes.
            File stride size set to 17 * record size.
                                                                  random    random     bkwd    record    stride
                  kB  reclen    write  rewrite     read   reread    read     write     read   rewrite      read   fwrite frewrite    fread  freread
              102400       4    48672   104754   115838   116803    47894   103606
              102400      16   168084   276437   292660   295458   162550   273703
              102400     512   566572   597648   580005   589209   534508   597007
              102400    1024   585621   624443   590545   599177   569452   630098
              102400   16384   504871   754710   765558   780592   777696   753426

    iozone test complete.

2.5GT/s x2

    rock64@rockpro64:/mnt$ sudo iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2
            Iozone: Performance Test of File I/O
                    Version $Revision: 3.429 $
                    Compiled for 64 bit mode.
                    Build: linux

            Contributors:William Norcott, Don Capps, Isom Crawford, Kirby Collins
                         Al Slater, Scott Rhine, Mike Wisner, Ken Goss
                         Steve Landherr, Brad Smith, Mark Kelly, Dr. Alain CYR,
                         Randy Dunlap, Mark Montague, Dan Million, Gavin Brebner,
                         Jean-Marc Zucconi, Jeff Blomberg, Benny Halevy, Dave Boone,
                         Erik Habbinga, Kris Strecker, Walter Wong, Joshua Root,
                         Fabrice Bacchella, Zhenghua Xue, Qin Li, Darren Sawyer,
                         Vangel Bojaxhi, Ben England, Vikentsi Lapa.

            Run began: Sun Jun 17 06:54:02 2018

            Include fsync in write timing
            O_DIRECT feature enabled
            Auto Mode
            File size set to 102400 kB
            Record Size 4 kB
            Record Size 16 kB
            Record Size 512 kB
            Record Size 1024 kB
            Record Size 16384 kB
            Command line used: iozone -e -I -a -s 100M -r 4k -r 16k -r 512k -r 1024k -r 16384k -i 0 -i 1 -i 2
            Output is in kBytes/sec
            Time Resolution = 0.000001 seconds.
            Processor cache size set to 1024 kBytes.
            Processor cache line size set to 32 bytes.
            File stride size set to 17 * record size.
                                                                  random    random     bkwd    record    stride
                  kB  reclen    write  rewrite     read   reread    read     write     read   rewrite      read   fwrite frewrite    fread  freread
              102400       4    49420    91310   102658   103415    47023    90099
              102400      16   138141   202088   224648   225918   141642   202457
              102400     512   335055   347517   375096   378596   364668   350005
              102400    1024   345508   354999   378947   382733   375315   354783
              102400   16384   306262   383155   424403   429423   428670   377476

    iozone test complete.