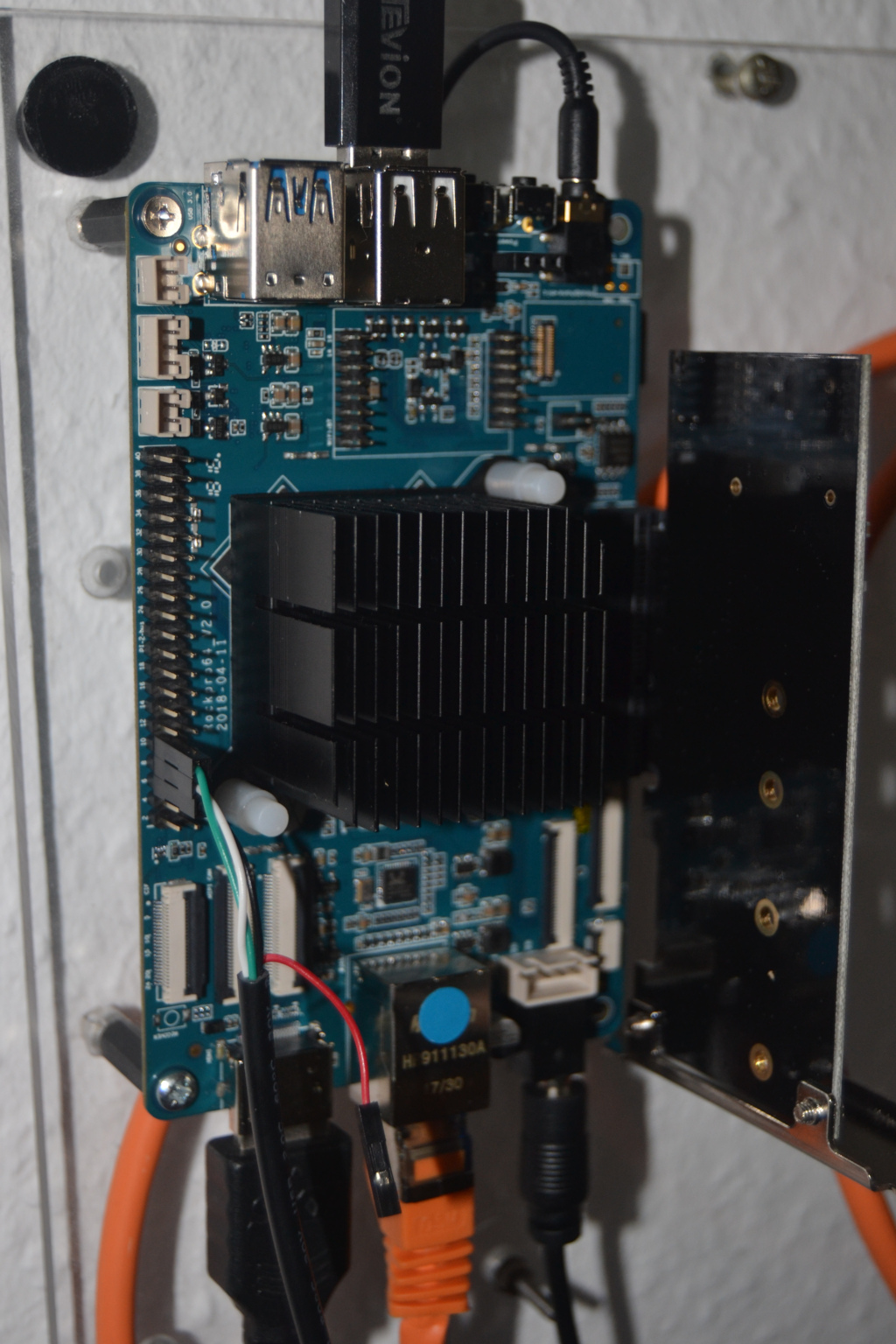

ROCKPro64 - DKMS possible in release RC12

Along with image 0.8.0rc12, Kamil has published some information.

# DKMS

DKMS (Dynamic Kernel Module Support) is a convenient method for installing additional drivers that are outside of the kernel tree. There's awesome documentation about [DKMS](https://wiki.archlinux.org/index.php/Dynamic_Kernel_Module_Support) on ArchWiki; get familiar with it to understand what it is and how to use it in general.

To use DKMS you need to have `linux-headers` installed. This is the default if you use images generated by this repository.

## Install DKMS (arm64)

The first step is to install and configure DKMS:

```bash
sudo apt-get update -y
sudo apt-get install dkms git-core
```

## Install DKMS (armhf)

**This currently does not work due to missing `gcc-aarch64-linux-gnu`.**

## Wireguard

Installing Wireguard is very simple with DKMS, and it means Wireguard is updated automatically after a kernel change. Following the documentation from https://www.wireguard.com/install/:

```bash
sudo add-apt-repository ppa:wireguard/wireguard
sudo apt-get install python wireguard
```

## RTL 8812AU (WiFi USB adapter)

The Realtek 8812AU is a very popular chipset for USB dongles. The 8812AU is being sold by Pine64, Inc. as well.

```bash
sudo git clone https://github.com/greearb/rtl8812AU_8821AU_linux.git /usr/src/rtl8812au-4.3.14
sudo dkms build rtl8812au/4.3.14
```

## tn40xx driver (10Gbps PCIe adapter)

```bash
sudo git clone https://github.com/ayufan-rock64/tn40xx-driver /usr/src/tn40xx-001
sudo dkms build tn40xx/001
```

## exfat-nofuse

exFAT is available by default in the Debian/Ubuntu repositories, but that version uses FUSE (Filesystem in Userspace). There's a very good Linux kernel driver available:

```bash
sudo git clone https://github.com/barrybingo/exfat-nofuse /usr/src/exfat-1.2.8
sudo dkms build exfat/1.2.8
```

Source: https://gitlab.com/ayufan-repos/rock64/linux-build/commit/c6ebc51ab5c2e347897f98cab474016d7dce354f

That gives us:

- DKMS for arm64

- Wireguard

- RTL 8812AU (WiFi USB adapter)

- tn40xx driver (10Gbps PCIe adapter)

- exfat-nofuse

An explanation of DKMS is available here. I'll try to explain it myself.

DKMS is a program that makes it possible to use kernel modules that are maintained outside of the kernel tree. After a new kernel is installed, these modules are rebuilt automatically.
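To make the automatic rebuild a bit more concrete: DKMS reads a `dkms.conf` file from the module's source directory. The following is only a minimal sketch. The module name `hello`, its version `1.0` and the temporary directory (standing in for `/usr/src/hello-1.0`) are assumptions for illustration and do not come from Kamil's notes.

```bash
# Sketch: write a minimal dkms.conf for a hypothetical out-of-tree module "hello" 1.0.
# A temporary directory stands in for /usr/src/hello-1.0, so nothing on the system changes.
src_dir="$(mktemp -d)/hello-1.0"
mkdir -p "$src_dir"

# The quoted 'EOF' keeps the shell from expanding anything inside the file body.
cat > "$src_dir/dkms.conf" <<'EOF'
PACKAGE_NAME="hello"
PACKAGE_VERSION="1.0"
BUILT_MODULE_NAME[0]="hello"
DEST_MODULE_LOCATION[0]="/updates/dkms"
AUTOINSTALL="yes"
EOF

# Show the result.
cat "$src_dir/dkms.conf"
```

`AUTOINSTALL="yes"` is the directive that asks DKMS to rebuild and install the module whenever a new kernel shows up. The driver repositories listed above would be expected to ship their own `dkms.conf`, so a file like this normally does not have to be written by hand.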

I have already tried this successfully with Wireguard; you can read about it here.

DKMS Status

Here you can see that wireguard is installed.

    root@rockpro64:~# dkms status
    wireguard, 0.0.20190406, 4.4.167-1189-rockchip-ayufan-gea9ef7a80268, aarch64: installed

More information on DKMS can be found here.
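If you want to check this from a script, the status line can be parsed. This is a small sketch; the helper name `dkms_module_installed` is made up for this example, and it assumes the comma-separated output format shown in this post (newer DKMS versions may print the line differently).

```bash
# Sample line copied from the output in this post; on a real system,
# pipe the output of `dkms status` into the helper instead.
status_line='wireguard, 0.0.20190406, 4.4.167-1189-rockchip-ayufan-gea9ef7a80268, aarch64: installed'

# Hypothetical helper: reads status lines on stdin and succeeds (exit code 0)
# if the named module is reported as installed.
dkms_module_installed() {
  module="$1"
  # Fields are separated by ", "; the state follows the colon in the last field.
  awk -F', ' -v m="$module" '
    $1 == m && $NF ~ /: installed$/ { found = 1 }
    END { exit !found }
  '
}

if printf '%s\n' "$status_line" | dkms_module_installed wireguard; then
  echo "wireguard module is installed"
fi
```

On the board itself the call would look like `dkms status | dkms_module_installed wireguard`.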

This means you can now use, for example, this USB WiFi adapter from pine64.org.

10Gbps PCIe network cards work as well. Fast data transfers at home? No longer a problem, though still a fairly expensive bit of fun.

exFAT is a file system from Microsoft that was developed specifically for removable flash storage.

Have fun trying it out!