NVIDIA is full of shit

-

Because they choose not to go full idiot, though. They could make their top-line cards competitive if they slammed enough into a pipeline and required a dedicated PSU, but that's not where their product line intends to go. That's why it's smart.

For reference: AMD has the most deployed GPUs on the planet as of right now. There's a reason why it's in every gaming console except Switch 1/2, and why OpenAI just partnered with them for chips. The goal shouldn't just be making a product that churns out results at the cost of everything else, but to be cost-effective and efficient. Nvidia fails at that on every level.

Then why does Nvidia have so much more money?

-

Yup. You want a server? Dell just plain doesn't offer anything but Nvidia cards. You want to build your own? The GPGPU stuff like ZLUDA is brand new and not really supported by anyone. You want to participate in the development community? You buy Nvidia and use CUDA.

Fortunately, even that tide is shifting.

I've been talking to Dell about it recently; they've just announced new servers (releasing later this year) which can have either Nvidia's B300 or AMD's MI355X GPUs. They're available in a hilarious 19" 10RU air-cooled form factor (XE9685), or ORv3 3OU water-cooled (XE9685L).

It's the first time they've offered a system using both CPU and GPU from AMD - previously they had some Intel CPU / AMD GPU options, and AMD CPU / Nvidia GPU, but never before AMD / AMD.

With AMD promising release day support for PyTorch and other popular programming libraries, we're also part-way there on software. I'm not going to pretend like needing CUDA isn't still a massive hump in the road, but "everyone uses CUDA" <-> "everyone needs CUDA" is one hell of a chicken-and-egg problem which isn't getting solved overnight.

Realistically, facing that kind of uphill battle, AMD is just going to have to compete on price - they're quoting a 40% performance/dollar improvement over Nvidia for these upcoming GPUs, so perhaps they are - and try to win hearts and minds with rock-solid driver/software support, so that people who do have the option (i.e. in-house code, not third-party software) look to write it with not-CUDA.
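To put that 40% figure in perspective, here's a quick sketch of the arithmetic. It assumes the quoted number means 1.4x performance per dollar, which is my reading rather than anything spelled out by AMD or Dell; the function name is made up for illustration.

```python
def equivalent_price_ratio(perf_per_dollar_gain: float) -> float:
    """Fraction of the competitor's price needed for equal performance,
    given a fractional performance-per-dollar advantage (0.40 = 40%)."""
    return 1.0 / (1.0 + perf_per_dollar_gain)


# Assumption: "40% performance/dollar improvement" means 1.4x perf per dollar.
ratio = equivalent_price_ratio(0.40)
print(f"Same performance for about {ratio:.1%} of the price")
```

In other words, under that reading it's roughly a 29% discount for the same performance, not a 40% one.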

To note, this is the 3rd generation of the MI3xx series (MI300, MI325, now MI350/355). I think it might be the first one to make the market splash that AMD has been hoping for.

-

Then why does Nvidia have so much more money?

See the title of this very post you're responding to. No, I'm not OP lolz

-

See the title of this very post you're responding to. No, I'm not OP lolz

He's not OP. He's just another person...

-

AMD’s also apparently unifying their server and consumer GPU departments for RDNA5/UDNA, IIRC, which I’m really hoping helps with this too.

-

I know Dell has been doing a lot of AMD CPUs recently, and those have definitely been beating Intel, so hopefully this continues. But I'll believe it when I see it. Often, these things don't pan out in terms of price/performance and support.

-

See the title of this very post you're responding to. No, I'm not OP lolz

They have so much money because they're full of shit? Doesn't make much sense.

-

They have so much money because they're full of shit? Doesn't make much sense.

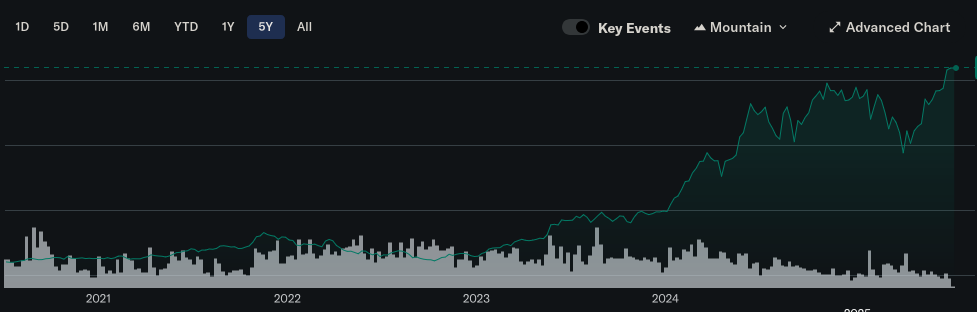

Stock isn't money in the bank.

-

Stock isn't money in the bank.

No one said anything about stock.

-

NVIDIA is full of shit

Since the disastrous launch of the RTX 50 series, NVIDIA has been unable to escape negative headlines: scalper bots are snatching GPUs away from consumers before official sales even begin, power connectors continue to melt, with no fix in sight, marketing is becoming increasingly deceptive, GPUs are missing processing units when they leave the factory, and the drivers, for which NVIDIA has always been praised, are currently falling apart. And to top it all off, NVIDIA is becoming increasingly insistent that media push a certain narrative when reporting on their hardware.

Sebin's Blog (blog.sebin-nyshkim.net)

Have a 2070s. Been thinking for a while now my next card will be AMD. I hope they get back into the high end cards again

-

But but but but but my shadows look 3% more realistic now!

The best part is, for me, ray tracing looks great. When I'm standing there and slowly looking around.

When I'm running and gunning and shit's exploding, I don't think the human eye is even capable of comprehending the difference between raster and ray tracing at that point.

-

And it only took 15 years to figure it out?

-

Have a 2070s. Been thinking for a while now my next card will be AMD. I hope they get back into the high end cards again

The 9070 XT is excellent and FSR 4 actually beats DLSS 4 in some important ways, like disocclusion.

-

The 9070 XT is excellent and FSR 4 actually beats DLSS 4 in some important ways, like disocclusion.

Concur.

I went from a 2080 Super to the RX 9070 XT and it flies. Coupled with a 9950X3D, I still feel a little bit like the GPU might be the bottleneck, but it doesn't matter. It plays everything I want at way more frames than I need (240 Hz monitor).

E.g., Rocket League went from struggling to keep 240 fps at lowest settings, to 700+ at max settings. Pretty stark improvement.

-

Because they choose not to go full idiot, though. They could make their top-line cards competitive if they slammed enough into a pipeline and required a dedicated PSU, but that's not where their product line intends to go. That's why it's smart.

For reference: AMD has the most deployed GPUs on the planet as of right now. There's a reason why it's in every gaming console except Switch 1/2, and why OpenAI just partnered with them for chips. The goal shouldn't just be making a product that churns out results at the cost of everything else, but to be cost-effective and efficient. Nvidia fails at that on every level.

Unfortunately, this partnership with OpenAI means they've sided with evil and I won't spend a cent on their products anymore.

-

I wish I had the money to change to AMD

-

I wish I had the money to change to AMD

This is a sentence I never thought I would read.

^(AMD used to be cheap)^

-

It covers the breadth of problems pretty well, but I feel compelled to point out that there are a few times where things are misrepresented in this post e.g.:

Newegg selling the ASUS ROG Astral GeForce RTX 5090 for $3,359 (MSRP: $1,999)

eBay Germany offering the same ASUS ROG Astral RTX 5090 for €3,349.95 (MSRP: €2,229)

The MSRP for a 5090 is $2k, but the MSRP for the 5090 Astral -- a top-end card being used for overclocking world records -- is $2.8k. I couldn't quickly find the European MSRP but my money's on it being more than 2.2k euro.
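To show how much the choice of baseline changes the picture, here's a rough sketch of the markup math for the Newegg listing. The $2,800 figure is the approximate Astral MSRP from above, not an exact list price, and the helper name is just for illustration.

```python
def markup_percent(price: float, msrp: float) -> float:
    """Return how far `price` sits above `msrp`, in percent."""
    return (price / msrp - 1.0) * 100.0


listing_price = 3359.00        # Newegg listing quoted from the post (USD)
msrp_base_5090 = 1999.00       # baseline RTX 5090 MSRP used in the post
msrp_astral_approx = 2800.00   # assumption: approximate Astral MSRP ("$2.8k")

markup_vs_base = markup_percent(listing_price, msrp_base_5090)
markup_vs_astral = markup_percent(listing_price, msrp_astral_approx)

print(f"vs. base 5090 MSRP: about {markup_vs_base:.0f}% over")
print(f"vs. Astral MSRP:    about {markup_vs_astral:.0f}% over")
```

That works out to roughly 68% over the base MSRP versus roughly 20% over the Astral's, which is still a markup but a much less dramatic one.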

If you’re a creator, CUDA and NVENC are pretty much indispensable, or editing and exporting videos in Adobe Premiere or DaVinci Resolve will take you a lot longer[3]. Same for live streaming, as using NVENC in OBS offloads video rendering to the GPU for smooth frame rates while streaming high-quality video.

NVENC isn't much of a moat right now, as both Intel and AMD's encoders are roughly comparable in quality these days (including in Intel's iGPUs!). There are cases where NVENC might do something specific better (like 4:2:2 support for prosumer/professional use cases) or have better software support in a specific program, but for common use cases like streaming/recording gameplay the alternatives should be roughly equivalent for most users.

as recently as May 2025 and I wasn’t surprised to find even RTX 40 series are still very much overpriced

Production apparently stopped on these for several months leading up to the 50-series launch; it seems unreasonable to harshly judge the pricing of a product that hasn't had new stock for an extended period of time (of course, you can then judge either the decision to stop production or the still-elevated pricing of the 50 series).

DLSS is, and always was, snake oil

I personally find this take crazy given that DLSS2+ / FSR4+, when quality-biased, deliver visual quality that on average is comparable to native for most users in most situations. And that was already the case with DLSS2 in 2023, never mind DLSS3, let alone DLSS4 (which is markedly better on average). I don't really care how a frame is generated if it looks good enough (and doesn't come with other notable downsides like latency). This almost feels like complaining about screen space reflections being "fake" reflections. Like yeah, it's fake, but if the average player experience is consistently better with it than without it then what does it matter?

Increasingly complex manufacturing nodes are becoming increasingly expensive as all fuck. If it's more cost-efficient to use some of that die area for specialized cores that can do high-quality upscaling instead of natively rendering everything with all the die space then that's fine by me. I don't think blaming DLSS (and its equivalents like FSR and XeSS) as "snake oil" is the right takeaway. If the options are (1) spend $X on a card that outputs 60 FPS natively or (2) spend $X on a card that outputs upscaled 80 FPS at quality good enough that I can't tell it's not native, then sign me the fuck up for option #2. For people less fussy about static image quality and more invested in smoothness, they can be perfectly happy with 100 FPS but marginally worse image quality. Not everyone is as sweaty about static image quality as some of us in the enthusiast crowd are.

There are some fair points here about RT (though I find exclusively using path tracing for RT performance testing a little disingenuous given the performance gap), but if RT performance is the main complaint then why is the sub-heading "DLSS is, and always was, snake oil"?

obligatory: disagreeing with some of the author's points is not the same as saying "Nvidia is great"

-

Is it because it's not how they make money now?

-

I think DLSS (and FSR and so on) are great value propositions, but they become a problem when developers use them as a crutch. At the very least, your game should not need them at all to run on high-end hardware at max settings. They should then be options for people on lower-end hardware, who can either lower settings or combine higher settings with upscaling. When they become mandatory, they stop being a value proposition, since the benefit stops being a benefit and just becomes necessary for baseline performance.