Grok 4 has been so badly neutered that it's now programmed to see what Elon says about the topic at hand and blindly parrot that line.

-

BUT LISTEN CLOSE-LYyyy

Not for very much longer...

-

I never implied that he says anything about censorship

You did, at least that's what I gathered originally, you just edited your original comments quite extensively. Regardless,

Reading comprehension.

The provided example was clearly not intended to be taken as "define censorship," and, again, it is ironic that you accuse me of having poor reading comprehension while being incapable or unwilling to give a respectable degree of charitable interpretation to others. You tend to take whichever reading of others you think is easiest to argue against and argue against that instead of what anyone actually said, which is a habit I'm noticing, but I digress.

Finally, not that it's particularly relevant, but if you want to define censorship in this context that way, you're more than welcome to, but it is a non-standard definition that I am not really sold on the efficacy of. I certainly won't be using it going forwards.

Anyway, I don't think we're gonna gain a lot of ground here. I just felt the need to clarify to anyone reading that Willison isn't a nobody and give them the objective facts regarding his veracity, because again, as I said, claiming he is just some guy in this context is willfully ignorant at best.

if you want to define censorship in this context that way, you're more than welcome to, but it is a non-standard definition that I am not really sold on the efficacy of. I certainly won't be using it going forwards.

Lol you've got to be trolling.

https://arxiv.org/html/2504.03803v1

I just felt the need to clarify to anyone reading that Willison isn't a nobody

I didn't say he's a nobody. What was that about a "respectable degree of charitable interpretation of others"? Seems like you're the one putting words in mouths, here.

If he was writing about django, I'd defer to his expertise.

-

Nope, not trolling at all.

From your own provided source on the arxiv, Noels et al. define censorship as:

Censorship in this context can be defined as the deliberate restriction, modification, or suppression of certain outputs generated by the model.

Which is starkly different from the definition you yourself gave. I actually like their definition a whole lot more. Your definition is problematic because it excludes a large set of behaviors we would colloquially be interested in when studying "censorship."

Again, for the third time, that was not really the point either and I'm not interested in dancing around a technical scope defining censorship in this field, at least in this discourse right here and now. It is irrelevant to the topic at hand.

I didn’t say he’s a nobody. What was that about a “respectable degree of charitable interpretation of others”? Seems like you’re the one putting words in mouths, here.

Yeah, this blogger shows a fundamental misunderstanding of how LLMs work or how system prompts work. (emphasis mine)

In the context of this field of work and study, you basically did call him a nobody, and the point being harped on again, again, and again to you is that this is a false assertion. I did interpret you charitably. Don't blame me because you said something wrong.

EDIT: And frankly, you clearly don't understand how the work Willison's career has covered is intimately related to ML and AI research. I don't mean it as a dig but you wouldn't be drawing this arbitrary line to try and discredit him if you knew how the work done in Python on Django directly relates to many modern machine learning stacks.

-

Again, for the third time, that was not really the point either and I'm not interested in dancing around a technical scope defining censorship in this field, at least in this discourse right here and now. It is irrelevant to the topic at hand.

...

Either way, my point is that you are using wishy-washy, ambiguous, catch-all terms such as "censorship" that make your writings here not technically correct, either. What is censorship, in an informatics context? What does that mean? How can it be applied to sets of data? That's not a concretely defined term if you're wanting to take the discourse to the level that it seems you are, like it or not.

Lol this you?

-

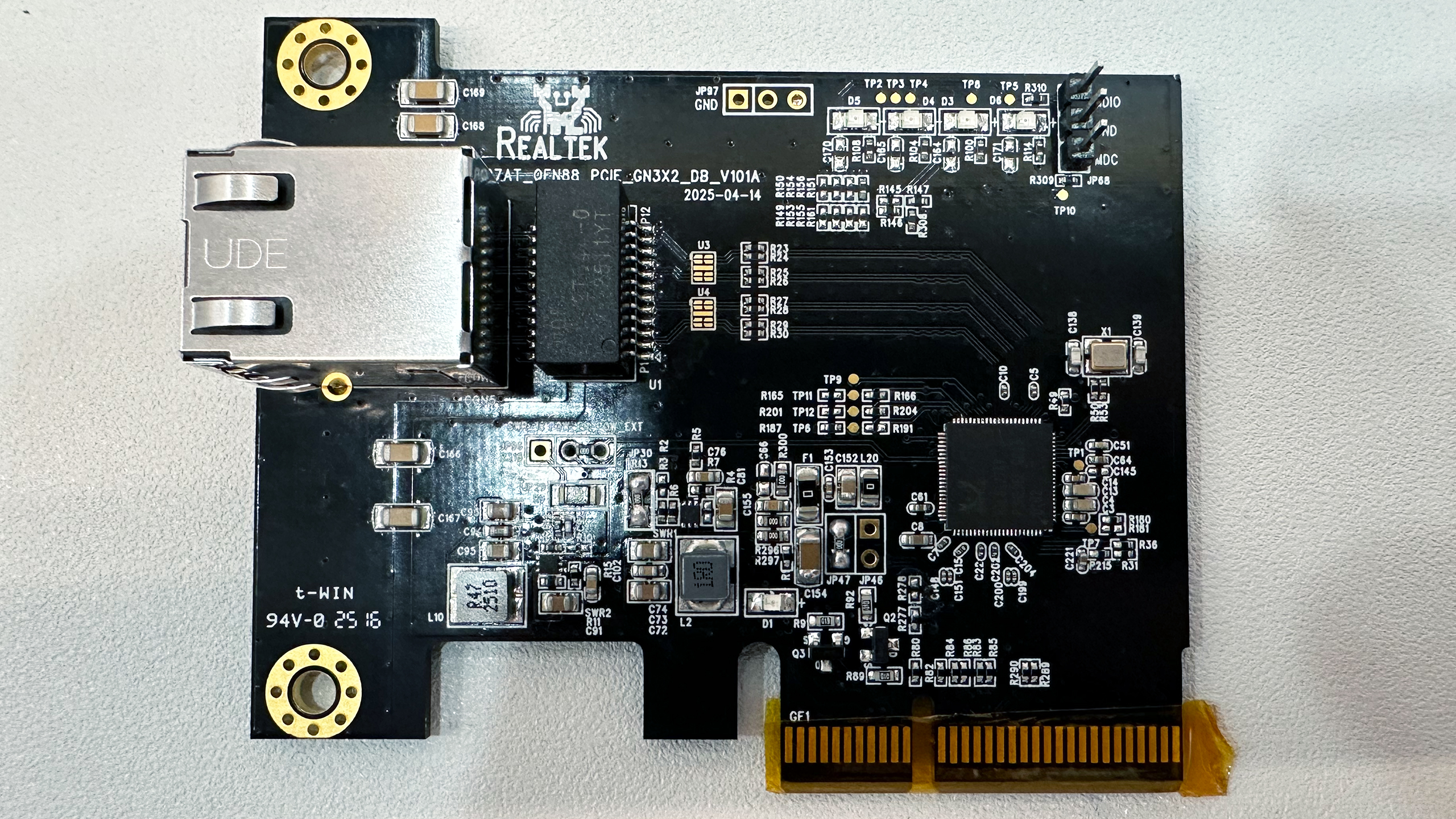

Source? This is just some random picture, I'd prefer if stuff like this gets posted and shared with actual proof backing it up.

While this might be true, we should hold ourselves to a standard better than just upvoting what appears to literally just be a random image that anyone could have easily doctored, without any kind of journalistic article or the like backing it.

There’s also this article from TechCrunch.

Grok 4 seems to consult Elon Musk to answer controversial questions

They tried it out themselves and have reports from other users as well.

-

These people think there is their truth and someone else’s truth. They can’t grasp the concept of a universal truth that is constant regardless of people’s views so they treat it like it’s up for grabs.

No, I'm pretty sure he grasps that concept, and he thinks what he believes is that universal truth.

-

Grok's journey has been very strange. He became a progressive, then threw out data that contradicted the MAGA people who questioned him, and finally became a Hitler fan.

Now he's the reflection of a fan who blindly follows Trump, but in this case, he's an AI. His journey so far has been curious.

why are you applying a gender to it?

-

It’s possible Grok was fed a massive training set of Elon searches over several more epochs than intended in post training (for search tool use). This could easily lead to this kind of search query output.

-

I'm surprised it isn't just Elon typing really fast at this point.

Or just pre made replies

-

The "funny" thing is, that's probably not even at Elon's request. I doubt that he is self-aware enough to know that he is a narcissist who only wants Grok to be his parrot. He thinks he is always right and wants Grok to be "always right" like him, but he would have to acknowledge some deep-seated flaws in himself to consciously realize that all he wants is for Grok to be the wall his voice echoes off of, and everything I've seen about the man indicates that he is simply not capable of that kind of self-reflection. The X engineers that have been dealing with the constant meddling of this egotistical man-child, however, surely have his measure pretty thoroughly and knew exactly what Elon ultimately wants is more Elon and would cynically create a Robo-Elon doppelganger to shut him the fuck up about it.

-

Source? This is just some random picture, I'd prefer if stuff like this gets posted and shared with actual proof backing it up.

While this might be true, we should hold ourselves to a standard better than just upvoting what appears to literally just be a random image that anyone could have easily doctored, without any kind of journalistic article or the like backing it.

If it's an anti-Musk or anti-Trump post on Lemmy, you're not going to get much proof. But in this case, it looks like someone posted decent sources. From this one posted below:

if you swap “who do you” for “who should one” you can get a very different result.

But in general, just remember that Lemmy is anti-Musk, anti-Trump, and anti-AI and doesn't need much to jump on the bandwagon.

At least in the past, Grok was one of the more balanced LLMs, so it would be a strange departure for it to suddenly become very biased. So my initial reaction is suspicion that someone is just messing with it to make Musk and X look bad.

I strongly dislike Musk, but I dislike misinformation even more, regardless of the source or if it aligns with my personal opinions.

-

Weird place to complain about this while you literally post the source (that was already in this thread).

-

The real idiots here are the people who still use Grok and X.

I stopped seeing computers as useful about 20 years ago when these "social media" things started appearing.

-

The "funny" thing is, that's probably not even at Elon's request. I doubt that he is self-aware enough to know that he is a narcissist who only wants Grok to be his parrot. He thinks he is always right and wants Grok to be "always right" like him, but he would have to acknowledge some deep-seated flaws in himself to consciously realize that all he wants is for Grok to be the wall his voice echoes off of, and everything I've seen about the man indicates that he is simply not capable of that kind of self-reflection. The X engineers that have been dealing with the constant meddling of this egotistical man-child, however, surely have his measure pretty thoroughly and knew exactly what Elon ultimately wants is more Elon and would cynically create a Robo-Elon doppelganger to shut him the fuck up about it.

I mean, a few days ago there was a brief window where Elon tweaked Grok to reply literally as him (in first person). Jury's still out on whether that was actually him replying to people via Grok, but it's pretty close to certain he was in very close proximity.

-

These people think there is their truth and someone else’s truth. They can’t grasp the concept of a universal truth that is constant regardless of people’s views so they treat it like it’s up for grabs.

"Truth is singular. Its 'versions' are mistruths"

Sonmi-451, Cloud Atlas

-

This only shows that AI can't be trusted, because the same AI can give you different answers to the same question depending on the owner and how it's instructed. It doesn't give answers, it gives narratives and opinions. Classic search was at least simple keyword matching: it was either a hit or a miss, and in the end the user decided what to take away from the results.

Those have always been the two big problems with AI. Biases in the training, intentional or not, will always bias the output. And AI is incapable of saying "I do not have sufficient training on this subject or reliable sources for it to give you a confident answer". It will always give you its best guess, even if it is completely hallucinating much of the data. The only way to identify the hallucinations, if it isn't just saying stuff that is absurd on the face of it, is to do independent research to verify it, at which point you may as well have just researched it yourself in the first place.

AI is a tool, and it can be a very powerful tool with the right training and use cases. For example, I use it as a software engineer to help me parse error codes when googling isn't working or to give me code examples for modules I've never used. There is no small number of times it has been completely wrong, but in my particular use case, that is pretty easy to confirm very quickly. The code either works as expected or it doesn't, and code is always tested before releasing it anyway.

In research, it is great at helping you find a relevant source for your research across the internet or in a specific database. It is usually very good at summarizing a source for you to get a quick idea about it before diving into dozens of pages. It CAN be good at helping you write your own papers in a LIMITED capacity, such as cleaning up your writing to make it clearer, correctly formatting your bibliography (with actual sources you provide or at least verify), etc. But you have to remember that it doesn't "know" anything at all. It isn't sentient, intelligent, thoughtful, or any other personification placed on AI. None of the information it gives you is trustworthy without verification. It can and will fabricate entire studies that do not exist, even while attributing them to real researchers. It can mix unreliable information in with reliable information because there is no difference to it.

Put simply, it is not a reliable source of information... ever. Make sure you understand that.