This new 40TB hard drive from Seagate is just the beginning—50TB is coming fast!

-

I have killed every single type of magnetic platter drive from every brand; they are all bad.

Not "bad", consumable.

-

My main data usage is game installs and pretty unimportant temporary stuff, so fortunately it doesn't need backup. Game saves do, of course, but a simple bash script handles that, and the file size is tiny in comparison.

SSD performance would be nice to have, but costs extra.

SSDs are getting reasonably priced; you might want to look into them again if that's your use case.
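
For anyone curious what the "simple script" for game saves mentioned above might look like, here is a minimal sketch in Python rather than bash. The function name `backup_saves` and both folder paths are made up for illustration; nothing here is the original poster's actual script.

```python
import tarfile
import time
from pathlib import Path


def backup_saves(save_dir, backup_dir):
    """Pack save_dir into a timestamped .tar.gz inside backup_dir; return the archive path."""
    save_dir = Path(save_dir)
    backup_dir = Path(backup_dir)
    if not save_dir.is_dir():
        raise FileNotFoundError(f"no such save directory: {save_dir}")
    # Create the backup folder if it does not exist yet.
    backup_dir.mkdir(parents=True, exist_ok=True)
    # A timestamp in the file name keeps older backups from being overwritten.
    stamp = time.strftime("%Y%m%d-%H%M%S")
    archive = backup_dir / f"{save_dir.name}-{stamp}.tar.gz"
    with tarfile.open(archive, "w:gz") as tar:
        # arcname stores paths relative to the save folder's own name.
        tar.add(save_dir, arcname=save_dir.name)
    return archive


# Example call (hypothetical paths, adjust to where your games keep saves):
# backup_saves(Path.home() / "saves", "/mnt/backup/game-saves")
```

Run it from cron or a systemd timer and the saves are covered; the game installs themselves can just be re-downloaded.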

-

You thought 50TB was it? LOL! Hold on to your butts because 53.713TB SSDs are coming! These will cost you all your vital organs at 35 years of age. Brains included.

-

Just wondering, why do you run a monero node?

That's the default setting when setting up a local wallet. It is also more private due to not being dependent on someone else's node.

-

Can't wait to see how these 40 TB hard drives, a wonderment of technology, will be used to further shove AI down my throat.

-

20 of them? Just curious, how would you use 800 or 1600 TB of storage?

A mirror of Anna’s Archive.

Information is meant to be free.

-

I know people love to dunk on Seagate drives, but it was really just the one gen that was the cause of that bad rep. Before that the most hated drives were the "deathstars" (Deskstars). I have a 1TB Seagate drive that is 10 years old and still in use daily. Just do some research on which drive to buy, no OEM is sacrosanct. I'd personally wait 6 months to a year before buying one of these drives though, so enough people have time to find out if this generation is trouble or not.

There are loads of people who think a company is bad because of one product, one service etc.

A friend of mine hates Seagate, but he bought 10 drives of the same model. Pretty sure he even bought some after the first one failed ... or people (like me) put desktop drives in a NAS or service with other drives. While mine are still good, I expect them to fail any time since, well, they are not designed for the use case I am using them for.

-

And IIRC moved their headquarters to some Caribbean island to avoid paying US corporate taxes.

Pretty sure they are fiscally located in Ireland, like a lot of big companies, for tax and EU VAT reasons.

-

We had failure rates over 90% on them. We sold around 8000 computers on contract to the local schools that year and took a hit to our rep. We started going from school to school replacing them before they could fail.

The drive in the picture is dated Mar 16 '97. I'm pretty sure it was one of thousands of warranty replacements we received. Like I said, it's still good but really hasn't been in service in over 30 years. I keep it because it's a reminder of how bad, bad can be.

JT Storage went out of business in '98. When we heard they had, no one was surprised.

That is an absolutely wild fail rate.

-

They’re slow, but they’re WAY more robust than most SSDs - and in terms of $/TB, it’s not even close. Especially if you’re comparing to SLC enterprise-grade.

I've definitely seen more HDD failures than SSD failures in my life. That said, enterprise storage is indeed very robust. My WD Red Pros have all been workhorses. And right now the price per terabyte is definitely in favor of HDDs. That really needs to change though. The raw materials alone make HDDs more expensive to produce; the only problem is that there are fewer manufacturers with the means to actually produce the chips necessary for SSDs, because HDDs have been around for a million years. Once that changes, I think HDDs will and should go the way of every obsolete storage medium that's existed prior.

-

A mirror of Anna’s Archive.

Information is meant to be free.

Oh, nice idea! Maybe that's kind of what Simon on Firefly meant by a "source box".

-

I specifically said to go off price and availability, just have a backup because they will fail.

Sure, they will. But some sooner than others. Therefore, you can save money by buying the more reliable alternative.

-

Pretty sure they are fiscally located in Ireland, like a lot of big companies, for tax and EU VAT reasons.

You are now correct. They move a lot.

https://storageioblog.com/seagate-to-say-goodbye-to-cayman-islands-hello-ireland/amp/

-

I think people say this because there was one specific 6TB model that does really poorly in BackBlaze reports, combined with a generally poor understanding of statistics ("I bought a Seagate and it failed but I've never had a WD fail").

I will also point out that BackBlaze themselves consistently say that Seagate and WD are pretty much the same (apart from the one model), in those exact same reports.

Repair technicians see by far the most Seagate drives.

-

-

-

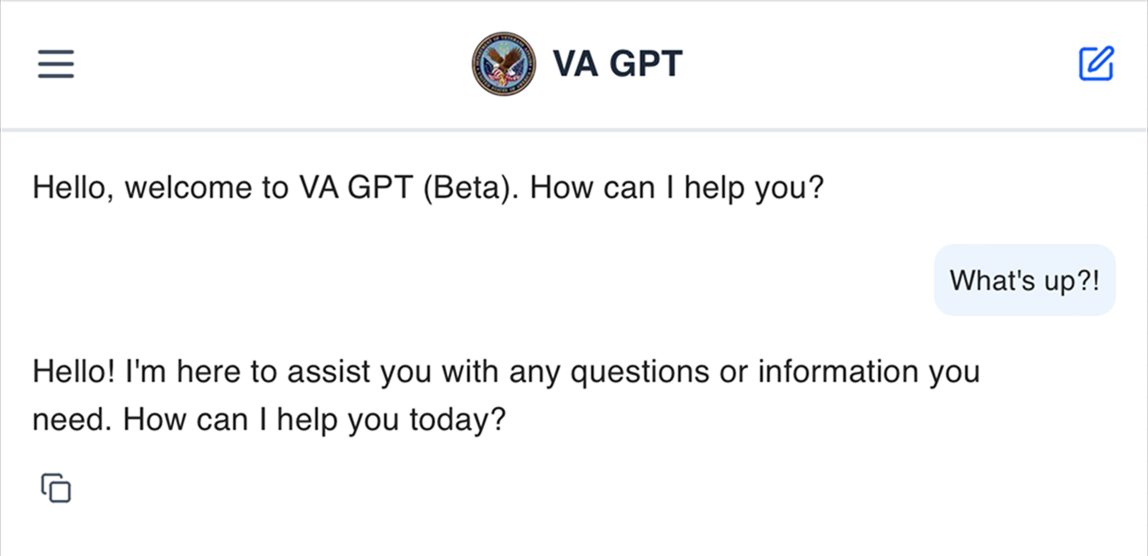

Gumroad Founder Sahil Lavingia Reveals He Was Let Go from DOGE as Software Engineer for the Department of Veterans Affairs After Just 55 Days

Technology 1

1

-

Is it feasible and scalable to combine self-replicating automata (after von Neumann) with federated learning and the social web?

Technology 1

1

-

-

Gig Companies Violate Workers’ Rights: Amazon Flex, DoorDash, Favor, Instacart, Lyft, Shipt, and Uber claim to offer workers flexibility but end up paying them less than state or local minimum wages.

Technology 1

1

-

-