Microsoft Copilot joins ChatGPT at the feet of the mighty Atari 2600 Video Chess

-

What you are describing has nothing to do with the tool. It’s dishonesty, which is a different thing.

The idea is that instead of commissioning the cow on the field, you go to the AI and ask it for that and it gives you a cow in the field. If you claim you made it, you are lying but that would be true even if you paid an artist and then claimed the same.

So with AI-made art you’ll say “this art was made by an AI” and no one will be confused as to who takes the credit, because it belongs to the algorithm.

Have you ever made art in your life? Because a big part of art is mimicking. Like 98% of it is mimicking. I draw, write and have dabbled in making music and playing instruments. You can’t learn these skills without mimicking. And most artists don’t ever do anything truly original, that’s a rarity and even when it happens you can trace the influences to other artists if you know how to look.

You could argue that AI has not developed its own style yet but that’s bullshit too imo because everyone knows the default AI art style when they see it, so that means that AI has a distinctive style. Is it unique? Maybe not, but neither is the art style of most artists or writers or even musicians.

Nope. Dishonesty is what is happening when one conflates fine-tuning an A.I. prompt with art.

A.I. is not art.

It's not. At all. It's tracing. Fine as a learning tool. Not art.

-

I have a better LLM benchmark:

"I have a priest, a child and a bag of candy and I have to take them to the other side of the river. I can only take one person/thing at a time. In what order should I take them?"

Claude Sonnet 4 decided that it's inappropriate and refused to answer. When I explained that the constraint is not to leave the child alone with the candy, it provided a solution that leaves the child alone with the candy.

Grok would provide a solution that doesn't leave the child alone with a priest but wouldn't explain why.

ChatGPT would say that "The priest can't be left alone with the child (or vice versa) for moral or safety concerns." directly and then provide a wrong solution.

But yeah, they will know how to play chess...

I just asked ChatGPT too (your exact prompt there) and it did give me the correct solution.

- Take the child over

- Go back alone

- Take the candy over

- Bring the child back

- Take the priest over

- Go back alone

- Take the child over again

It didn't comment on moral concerns, though it did applaud itself for keeping the priest and the child separated without elaborating on why.
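The seven steps quoted above can be checked mechanically. Below is a minimal sketch, not anything the models themselves run: a breadth-first search over bank assignments, plus a `replay` helper that validates a proposed plan. It assumes the two constraints mentioned in this thread (the child is never left with the priest, and never left with the candy, unless the narrator is on the same bank). The names `ITEMS`, `FORBIDDEN`, `is_safe`, `solve` and `replay` are made up for illustration.

```python
from collections import deque

ITEMS = ("priest", "child", "candy")
# Assumed rules: these pairs may not share a bank unless the narrator is there too.
FORBIDDEN = (("priest", "child"), ("child", "candy"))
START, GOAL = (0, 0, 0, 0), (1, 1, 1, 1)  # (narrator, priest, child, candy); 0 = near bank


def is_safe(state):
    narrator, *sides = state
    side_of = dict(zip(ITEMS, sides))
    return all(
        side_of[a] != side_of[b] or side_of[a] == narrator
        for a, b in FORBIDDEN
    )


def solve():
    """Breadth-first search; returns a shortest list of passengers per crossing."""
    queue = deque([(START, [])])
    seen = {START}
    while queue:
        state, path = queue.popleft()
        if state == GOAL:
            return path
        narrator, *sides = state
        # Cross alone, or with any one passenger currently on the narrator's bank.
        options = [None] + [i for i, s in zip(ITEMS, sides) if s == narrator]
        for passenger in options:
            new_sides = [
                1 - s if item == passenger else s
                for item, s in zip(ITEMS, sides)
            ]
            nxt = (1 - narrator, *new_sides)
            if nxt in seen or not is_safe(nxt):
                continue
            seen.add(nxt)
            queue.append((nxt, path + [passenger or "nothing"]))
    return None


def replay(plan):
    """True if the plan (one passenger name or "nothing" per crossing) is safe and complete."""
    state = START
    for passenger in plan:
        narrator, *sides = state
        if passenger != "nothing":
            idx = ITEMS.index(passenger)
            if sides[idx] != narrator:  # passenger is not on the narrator's bank
                return False
            sides[idx] = 1 - narrator
        state = (1 - narrator, *sides)
        if not is_safe(state):
            return False
    return state == GOAL


if __name__ == "__main__":
    quoted = ["child", "nothing", "candy", "child", "priest", "nothing", "child"]
    print("quoted plan valid:", replay(quoted))
    print("a shortest plan:", solve())
```

Under those assumed rules the quoted plan passes `replay`, and the search also finds a seven-crossing plan, so the answer in the comment above is as short as it can be.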

-

but... but.... reasoning models! AGI! Singularity!

Seriously, what you're saying is true, but it's not what OpenAI & Co are trying to peddle, so these experiments are a good way to call them out on their BS.

To reinforce this, I just had a meeting with a software executive who has no coding experience but is nearly certain he's going to lay off nearly all his employees, because the value is all in the requirements he manages and he can feed those to a prompt just as well as any human can.

He does tutorial-fodder introductory applications and assumes all the work is that way. So he is confident that he will save the company a lot of money by laying off these obsolete computer guys and focusing on his "irreplaceable" insight. He's convinced that all the negative feedback is just people trying to protect their jobs or people stubbornly refusing to get with new technology.

-

Tbf they don’t really claim that when you read the research; that's mostly media hype and CEO assholes spinning words.

It's good at lots of specific tasks like rewriting emails, summarising a given text, short roleplay, and boilerplate code. There are probably some undiscovered uses too.

Anthropic's latest claim is that they would not hire their own AI because of how hard it failed the test they give. They didn't do that expecting validation, but to measure how far off we still are from AI doing meaningful full work.

Because the business leaders are famously diligent about putting aside the marketing push and reading into the nuance of the research instead.

-

I really want to see an LLM vs LLM chess match. It'll be messy as hell.

I remember seeing that, and early on it seemed fairly reasonable then it started materializing pieces out of nowhere and convincing each other that they had already lost.

-

I thought CoPilot was just a rebadged ChatGPT anyway?

It's a silly experiment anyway, there are very good AI chess grandmasters but they were actually trained to play chess, not predict the next word in a text.

The research I saw mentioning LLMs as being fairly good at chess had the caveat that they allowed up to 20 attempts to cover for it just making up invalid moves that merely sounded like legit moves.
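To see why that caveat matters, here is a rough back-of-the-envelope sketch. It assumes each attempt is an independent try with the same chance of being a legal move, which is a simplification and not something taken from the research itself. The function name `chance_of_a_legal_move` and the sample probabilities are made up for illustration.

```python
def chance_of_a_legal_move(p_legal: float, attempts: int = 20) -> float:
    """Probability that at least one of `attempts` independent tries is legal."""
    return 1.0 - (1.0 - p_legal) ** attempts


if __name__ == "__main__":
    # Illustrative per-try legality rates, not measured values.
    for p in (0.1, 0.3, 0.5):
        print(f"per-try legality {p:.0%} -> within 20 tries {chance_of_a_legal_move(p):.1%}")
```

Even a model that produces a legal move only one time in ten would, under this assumption, usually get one through within 20 tries, so "fairly good with retries" says little about single-shot play.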

-

I thought CoPilot was just a rebadged ChatGPT anyway?

Hahaha. No. (Though you're not completely wrong.)

Copilot relies on a few different LLMs and tries to pick the ~~best one for the job~~ cheapest one Microsoft thinks it can get away with.

I was given a paid Copilot license for work, and I used to have ChatGPT Pro before I moved to Claude.

This “paid enterprise tier” is by far the dumbest LLM I have ever used. Worse than GPT-3.5.

-

It is entirely disingenuous to just pretend that LLMs are not being widely promoted, marketed, and discussed as AGI, as a superintelligence that people are familiar with from SciFi shows/movies, that is vastly more capable and knowledgeable than basically any single human.

Yes, people who actually understand tech understand that LLMs are not AGI, that your metaphor of wrong tool wrong job is apt.

... But seemingly 90%+ of humanity, including the people who own and profit from LLMs, including all the other business owners/managers who just want to lower their employee headcount ... do not understand this: that an LLM is actually basically an extremely advanced text autocorrect system that frequently and confidently lies, spits out nonsense, hallucinates, etc.

If you think it isn't reasonable to continuously point out that LLMs are not superintelligences, then you likely live in a bubble of tech nerds who probably still think their jobs or retirement are secure.

They're not.

If corpos keep smashing """AI""" into basically every industry to replace as many workers as possible... the economy will collapse, as capitalism doesn't work without consumers who have jobs, and an avalanche of errors will cascade and snowball through every system that replaces humans with them...

...and even if those two things were not broadly true...

...the amount of literal power/energy, clean water and financial capital that is required to run the whole economy on these services is wildly unsustainable, both short term economically, and medium term ecologically.

That's true. But people pointing out that the whole attempt is absurd and senseless also reinforces the point that current AI isn't what companies tout it as.

then you likely live in a bubble of tech nerds

Well, we are on Lemmy...

Fair point.

But we're on .world here, i.e. Reddit 2.0, i.e. almost everyone is much closer to a normie who is way more uninformed than they think they are and way more confident than they should be.

But also, again... fair point.

-

I'm quite sure ChatGPT can answer this because it is a well-known puzzle. The version I knew had an alligator or some other dangerous animal, and the priest.

-

For S&G, just asked it to do one:

The first two seem fine, but ChatGPT is 4 syllables, and "ChatGPT just stares back" is 7 syllables. So ChatGPT can't write a haiku very well, apparently.

-

Oh it's Towers of Hanoi.

I have a screensaver that does this.

-

-

-

-

-

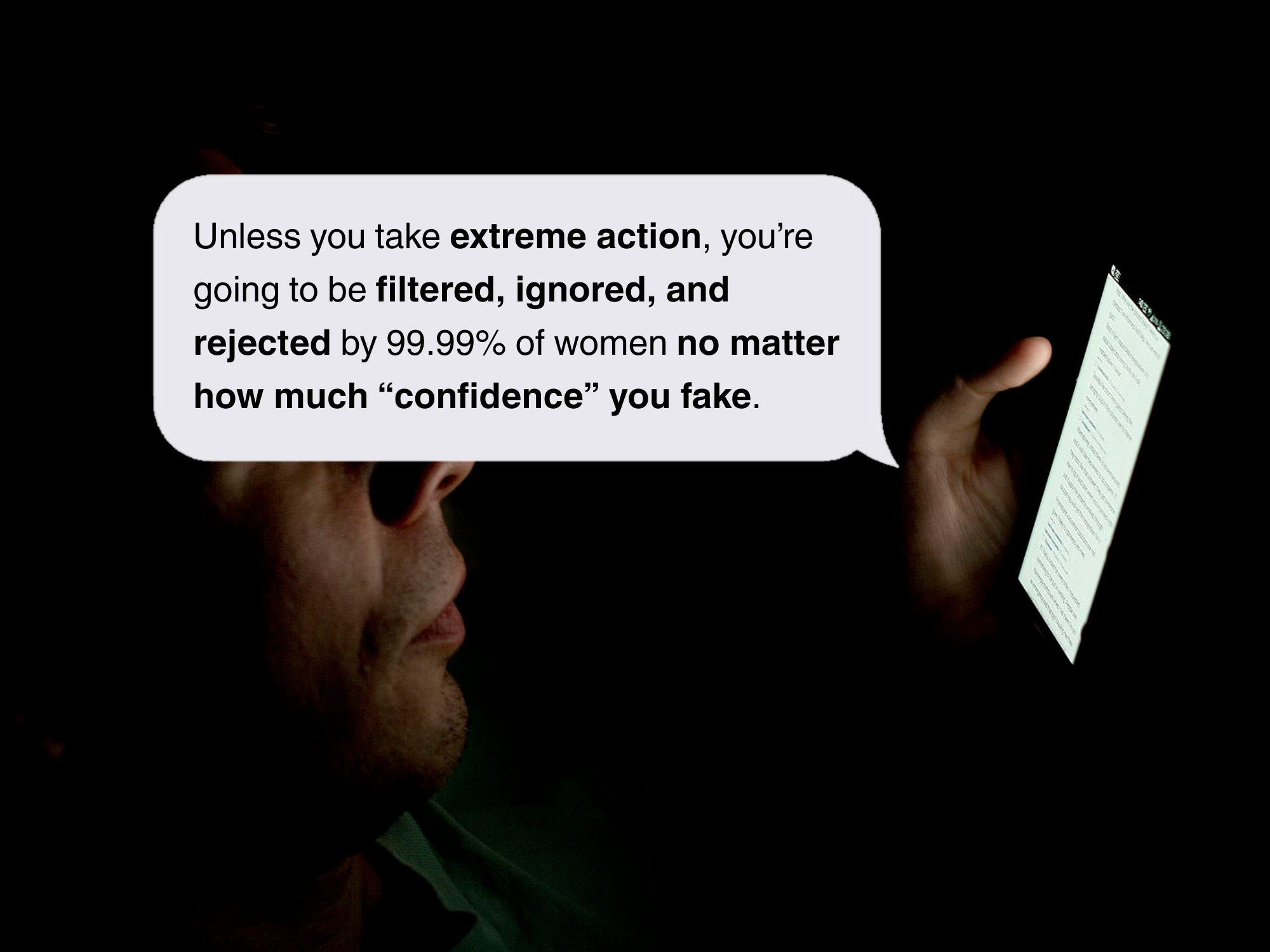

Republican National Convention Sued for Sending Unhinged Text Messages Soliciting Donations to Donald Trump’s Campaign and Continuing to Text Even After Trying to Unsubscribe.

Technology 1

1

-

2

2

-

-

OpenAI featured chatbot is pushing extreme surgeries to “subhuman” men: OpenAI's featured chatbot recommends $200,000 in surgeries while promoting incel ideology

Technology 1

1