ChatGPT Mostly Source Wikipedia; Google AI Overviews Mostly Source Reddit

-

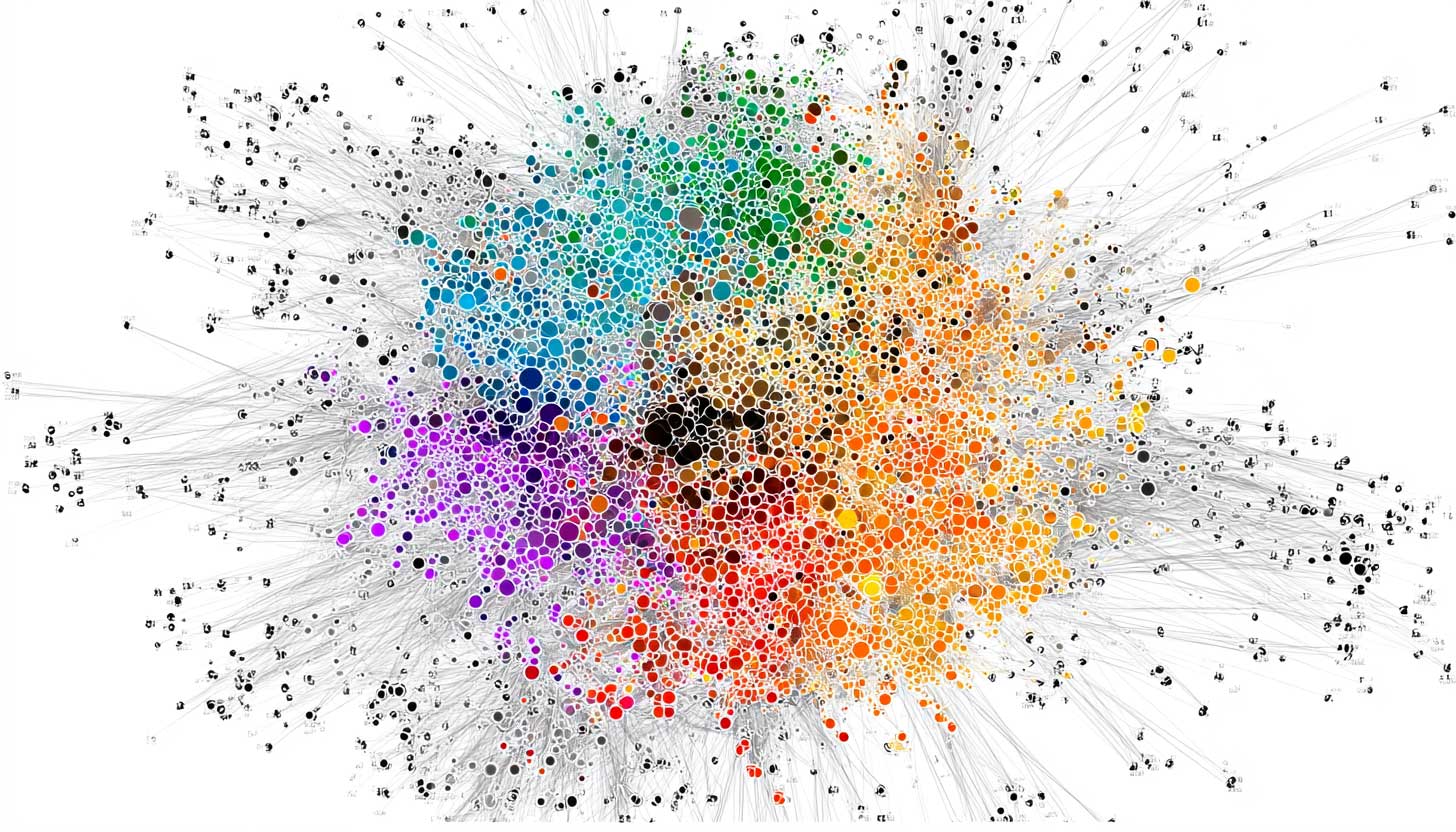

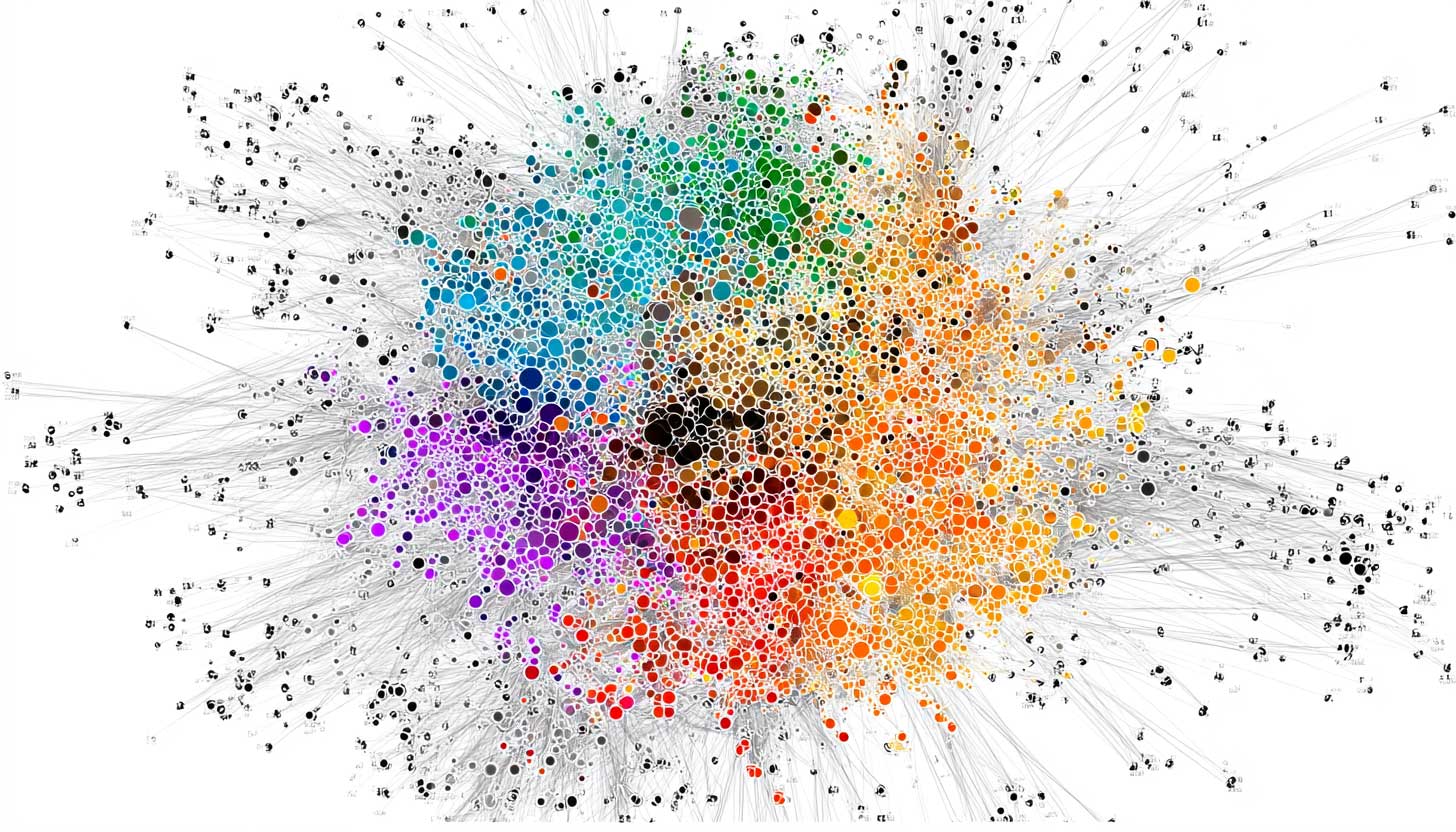

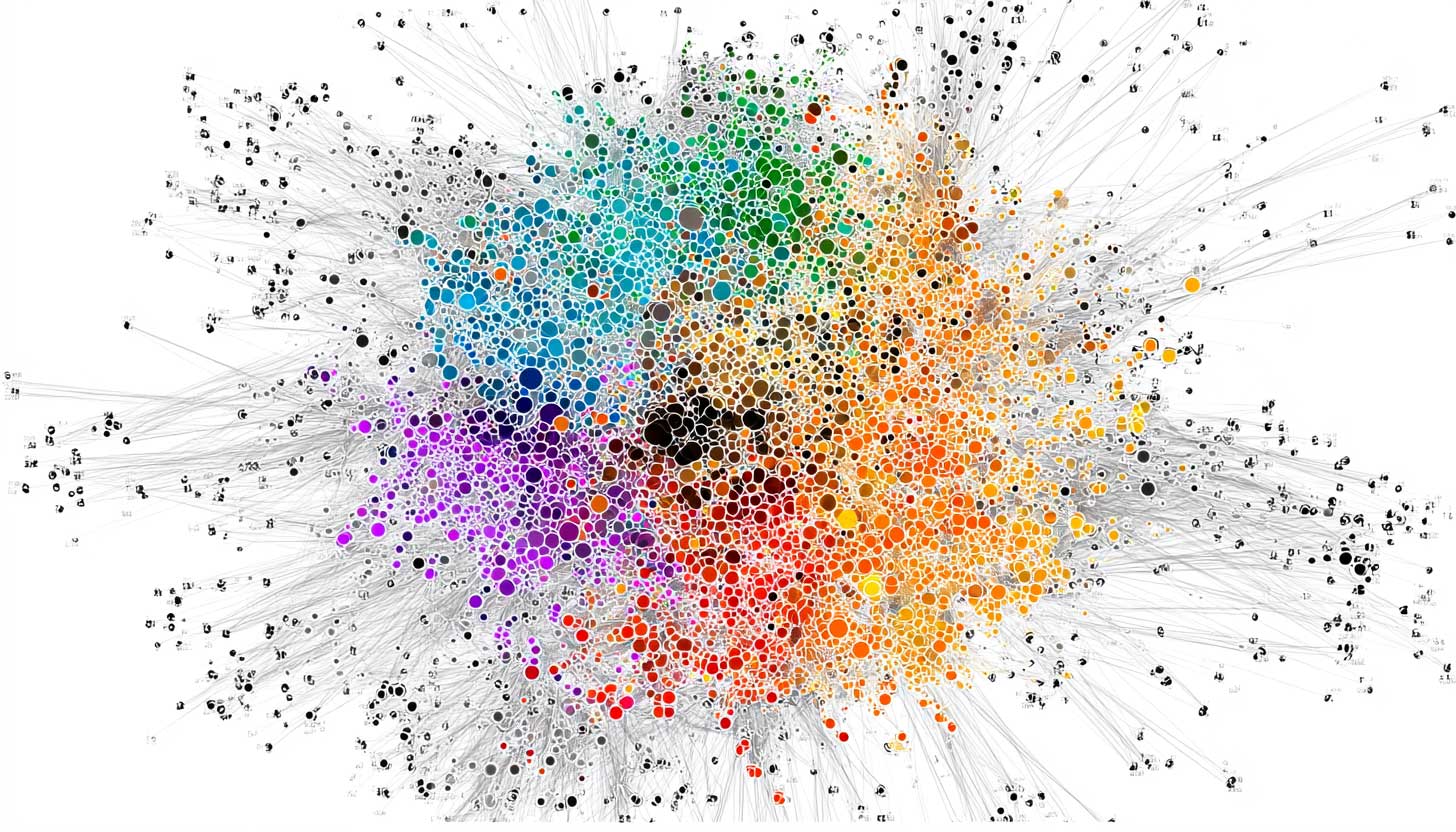

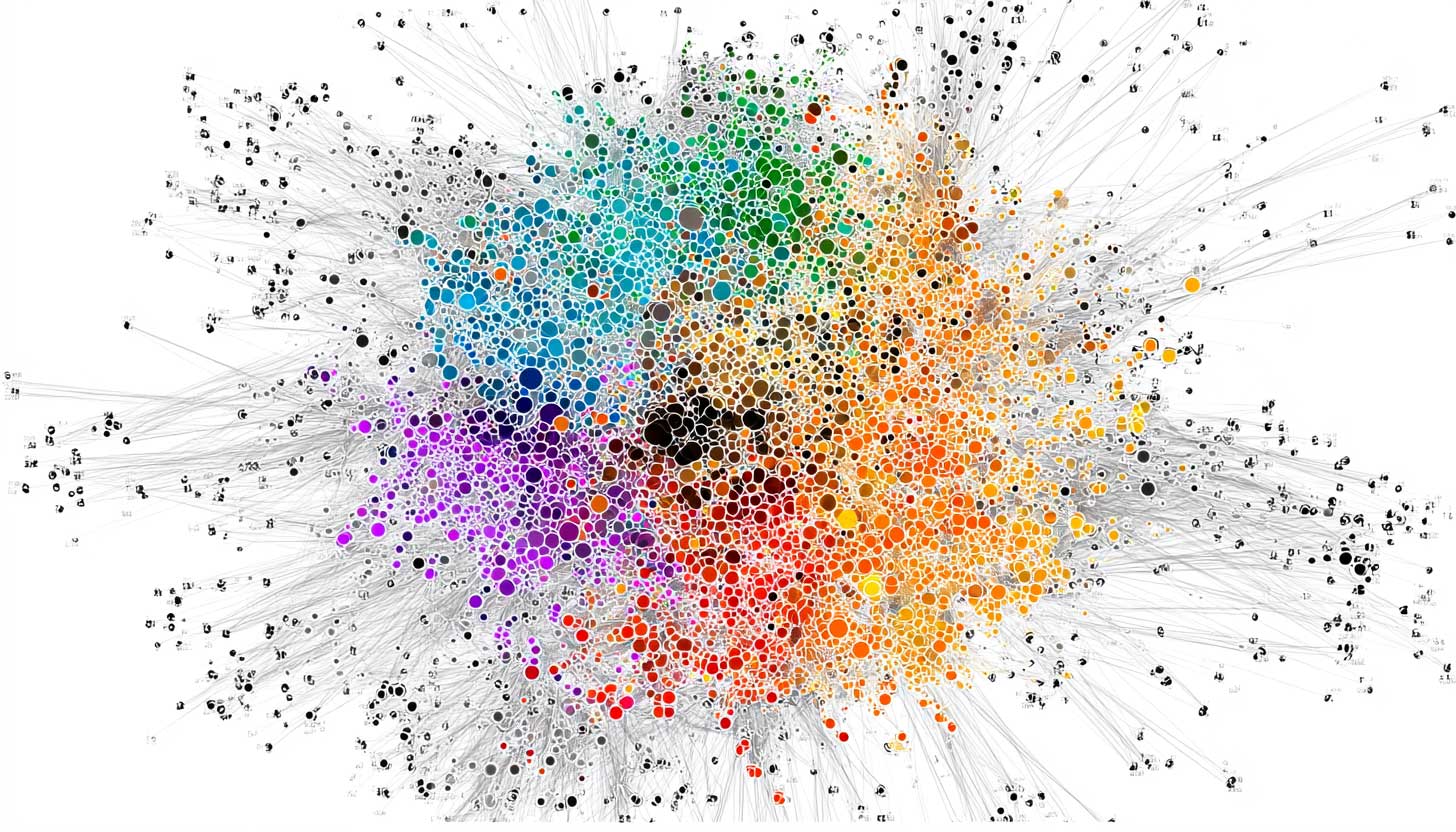

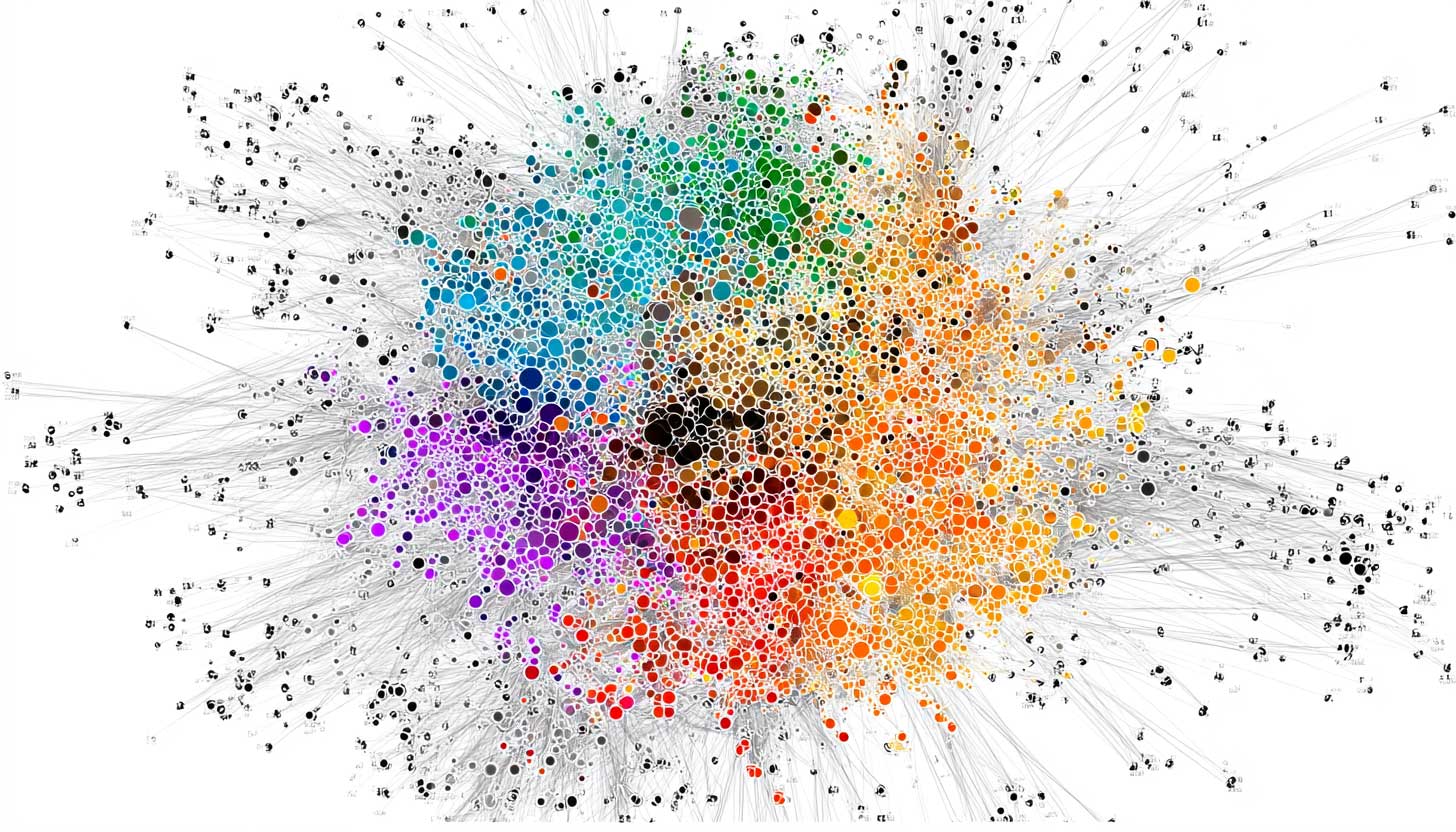

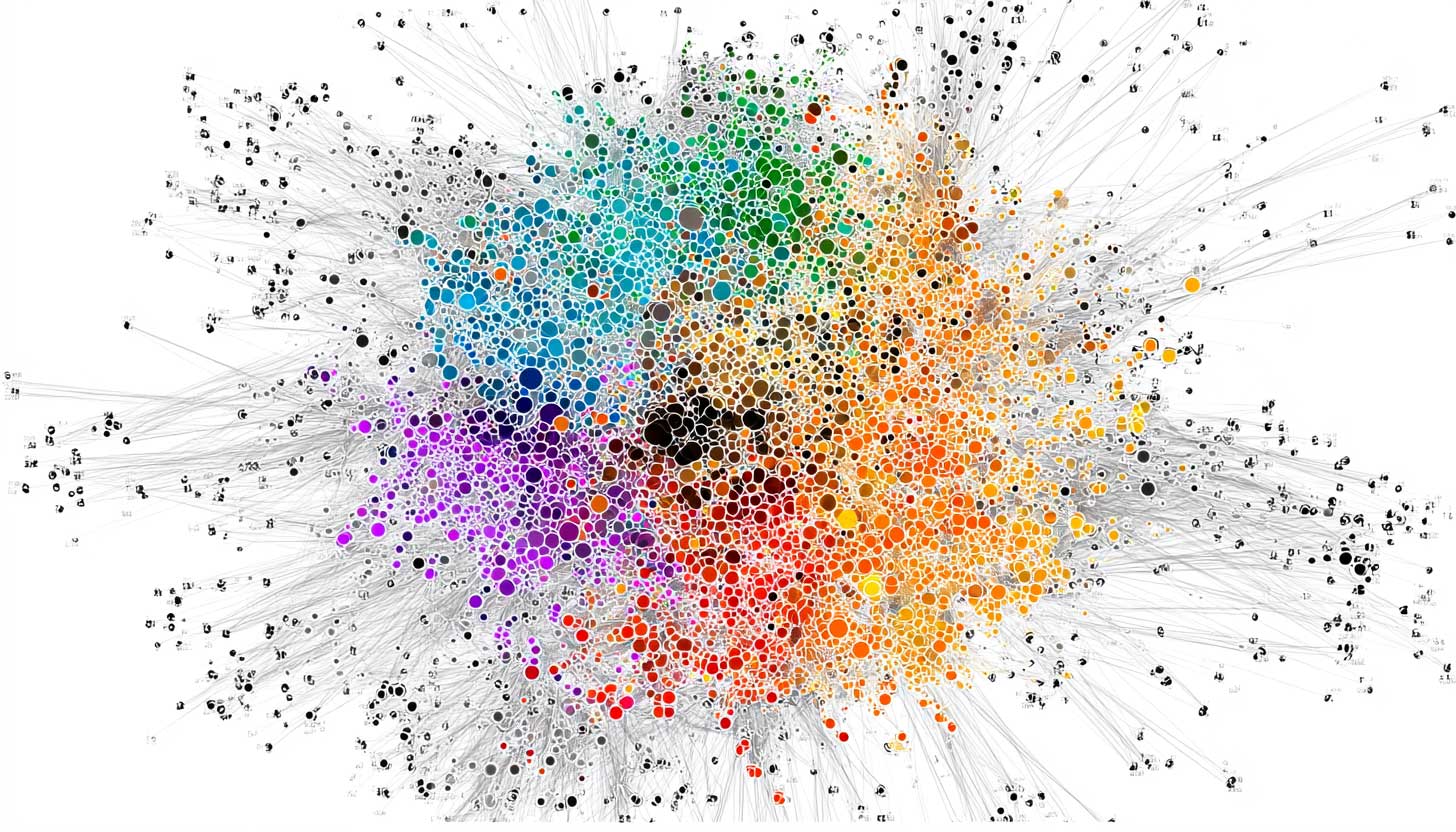

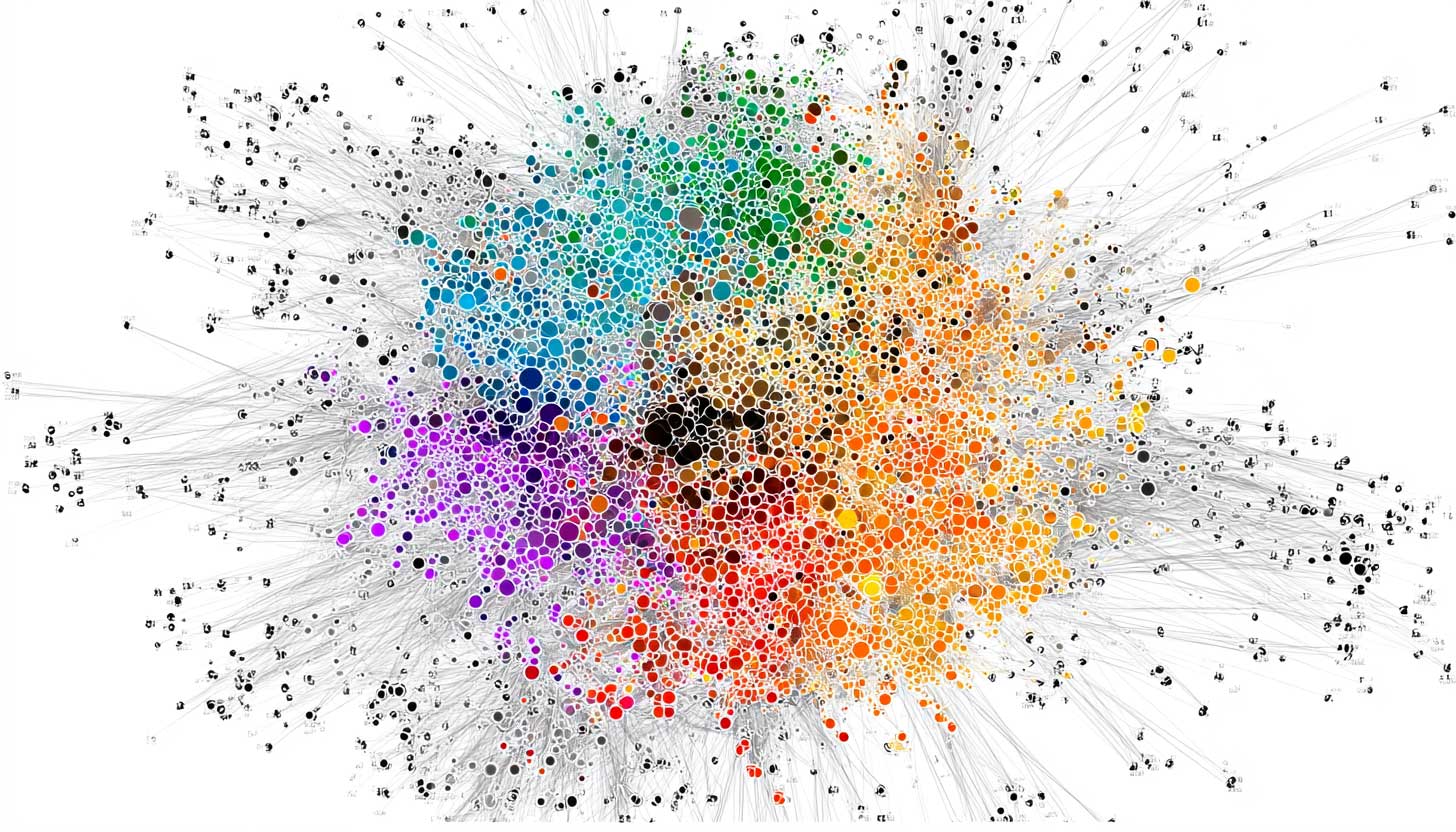

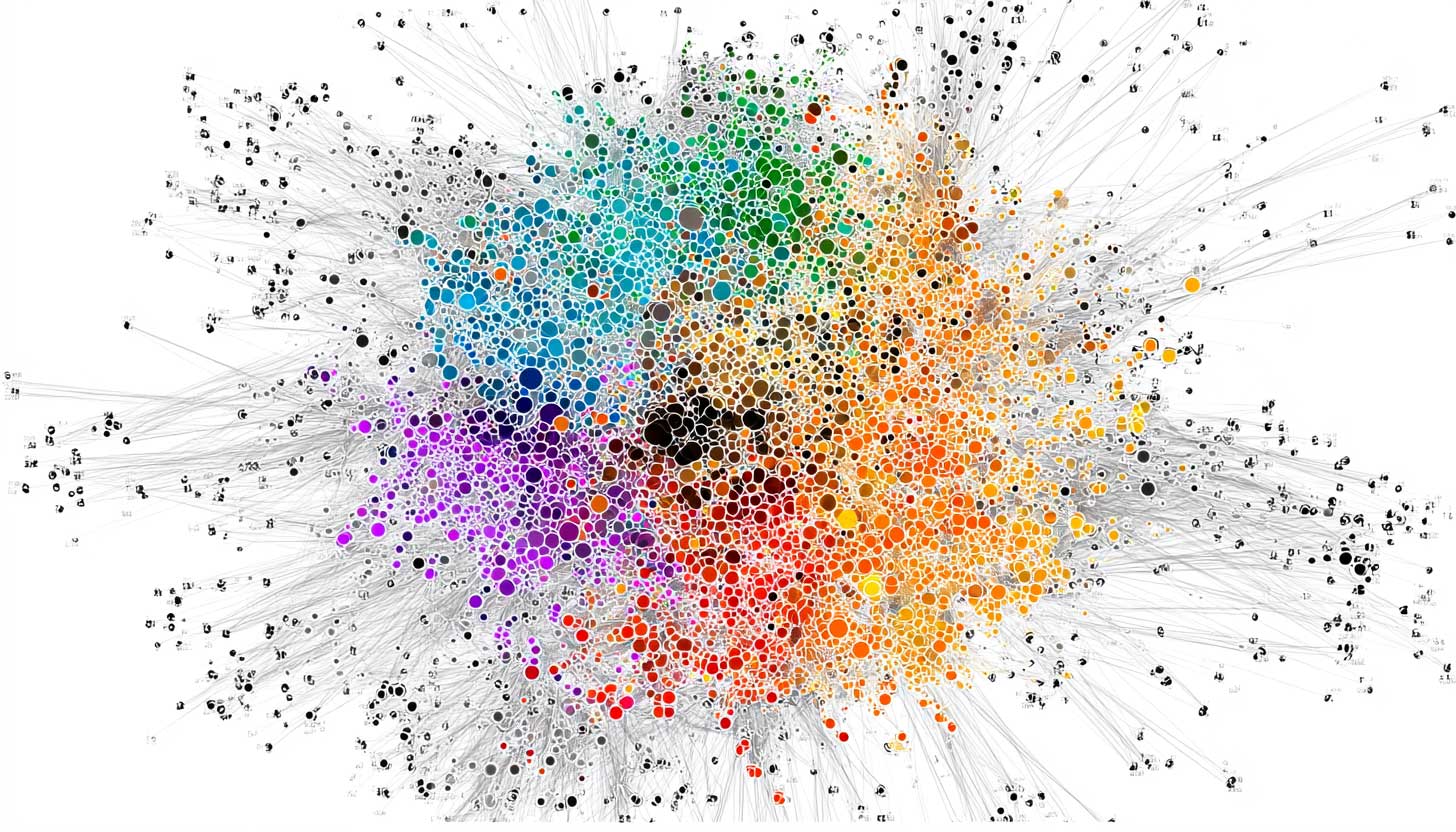

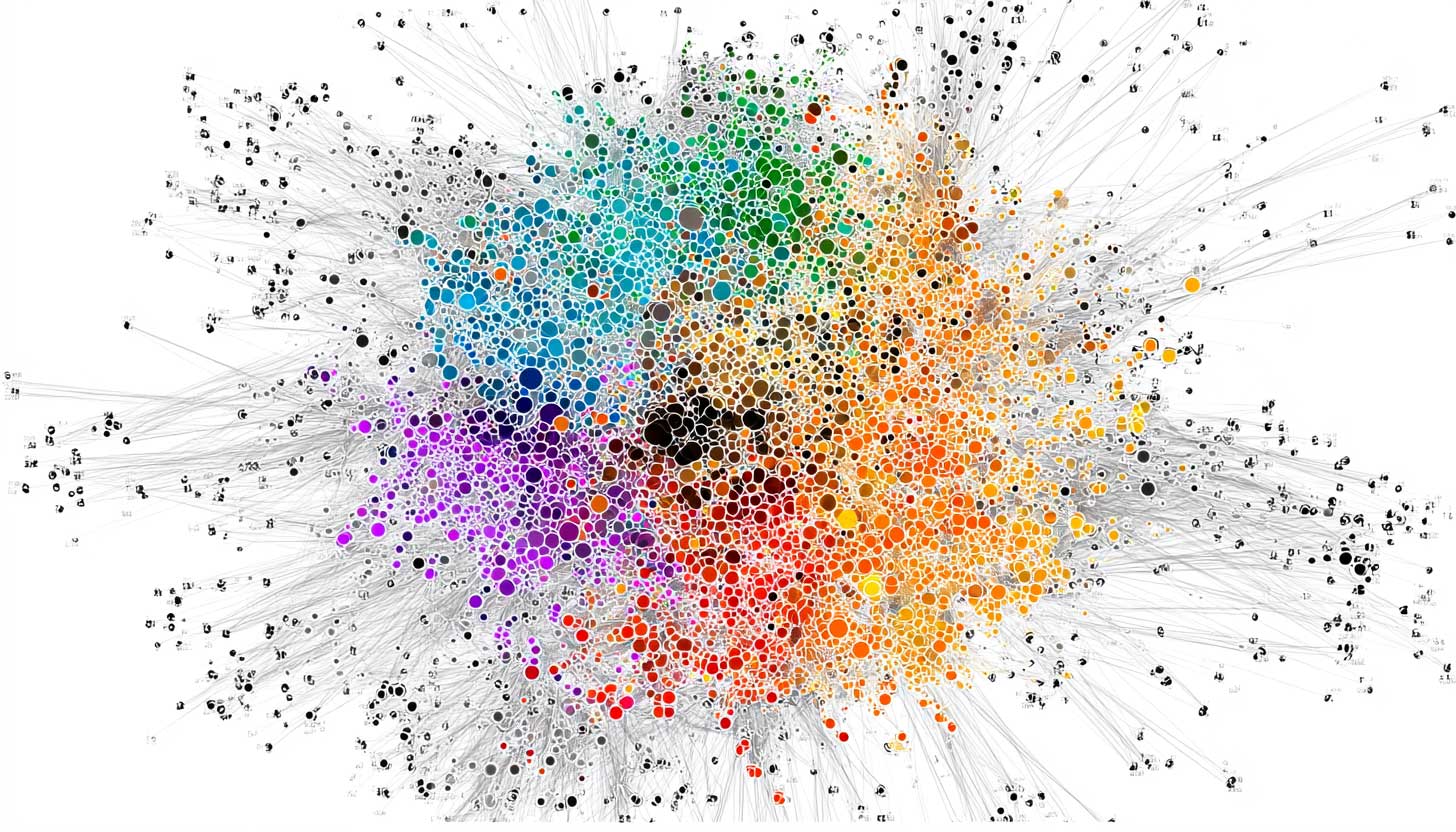

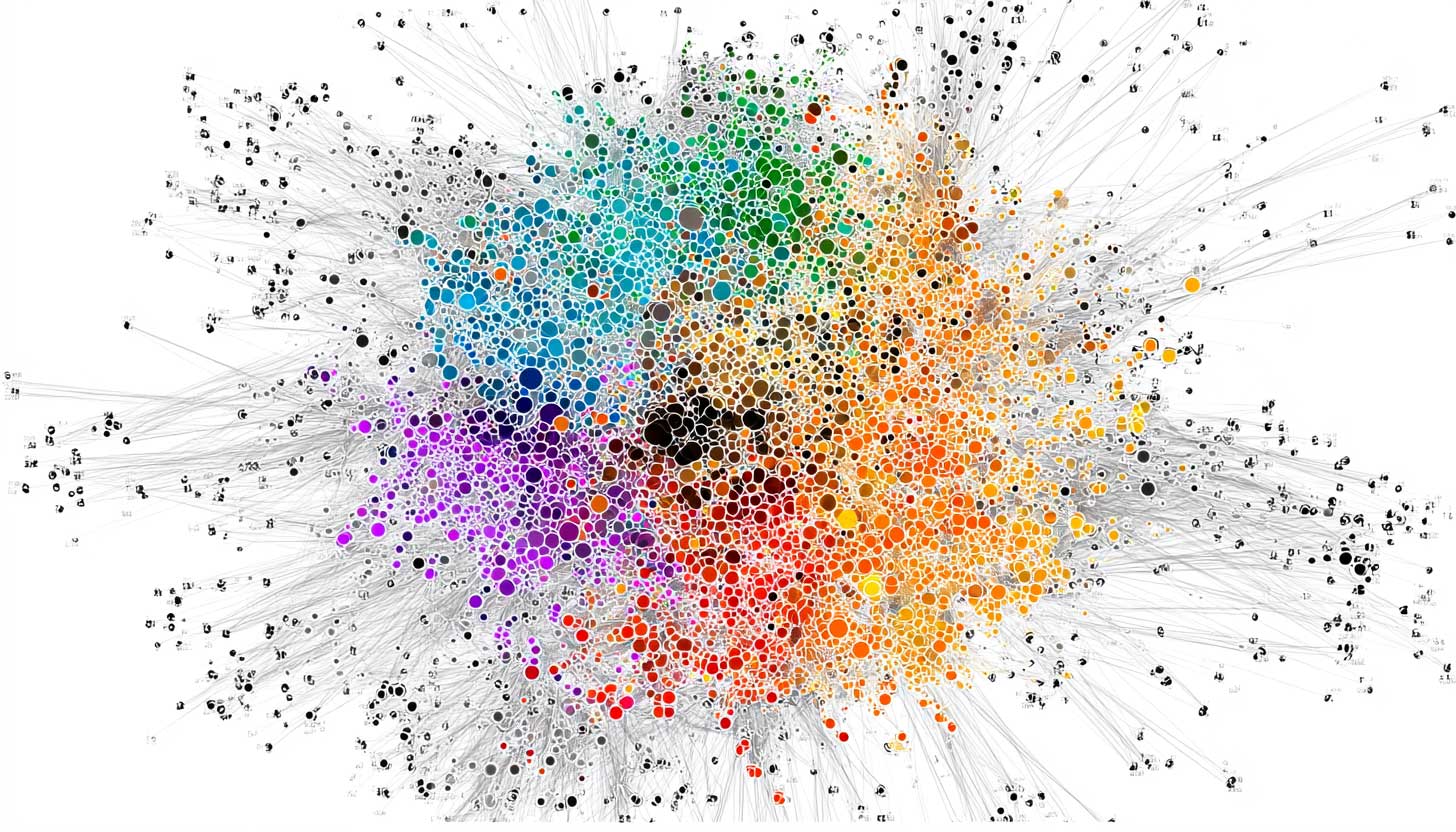

A study from Profound of OpenAI's ChatGPT, Google AI Overviews and Perplexity shows that while ChatGPT mostly sources its information from Wikipedia, Google AI Overviews and Perplexity mostly source their information from Reddit.

ChatGPT Sources Mostly From Wikipedia While Google AI Overviews Sources Mostly From Reddit

Search Engine Roundtable (www.seroundtable.com)

-

One point for ChatGPT it is then… it might be a bit on the posh / haughty side but that’s better than the reddit cesspool in my book.

-

Reddit, where the sources are made up and the points only matter as a hit of dopamine for the person making shit up.

-

There was a recent paper claiming that LLMs were better at avoiding toxic speech if it was actually included in their training data, since models that hadn’t been trained on it had no way of recognizing it for what it was. With that in mind, maybe using reddit for training isn’t as bad an idea as it seems.

-

Throughout most of my years of higher education as well as K-12, I was told that sourcing Wikipedia was forbidden. In fact, many professors/teachers would automatically fail an assignment if they felt you were using Wikipedia. The claim was that the information was often inaccurate, or changing too frequently to be reliable. This reasoning, while irritating at times, always made sense to me.

Fast forward to my professional life today. I've been told on a number of occasions that I should trust LLMs to give me an accurate answer. I'm told that I will "be left behind" if I don't use ChatGPT to accomplish things faster. I'm told that my concerns about accuracy and ethics surrounding generative AI are simply "negativity."

These tools are (abstractly) referencing random users on the internet as well as Wikipedia and treating them both as legitimate sources of information. That seems crazy to me. How can we trust a technology that just references flawed sources from our past? I know there are ways to improve accuracy with things like RAG, but most people are hitting the LLM directly.

The culture around Generative AI should be scientific and cautious, but instead it feels like a cult with a good marketing team.

-

all good points.

i think the tech is not being governed by the technically inclined and/or the technically inclined are not involved in business enough but either way there's a huge lack of governance over tools that are growing to be sources of search requests. you're right. it feels like marketing won. really a long time ago but still, furthering whatever that means with latest technical progression leads to just awful shit.

see: microtransactions

-

Anyone who has any domain knowledge and experience knows how much of reddit is just repeated debunked falsehoods and armchair takes. Please continue to poison your LLMs with it.

-

Wikipedia content is usually copyleft isn't it? BigAI doing the BigEvil, redistribution without attribution or reaffirming the rights given back from Copyright by copyleft.

-

Original source instead of blogspam: https://www.tryprofound.com/blog/ai-platform-citation-patterns

-

I used ChatGPT on something and got a response sourced from Reddit. I told it I'd be more likely to believe the answer if it told me it had simply made up the answer. It then provided better references.

I don't remember what it was but it was definitely something that would be answered by an expert on Reddit, but would also be answered by idiots on Reddit and I didn't want to take chances.

-

I think the academic advice about Wikipedia was sadly mistaken. It's true that Wikipedia contains errors, but so do other sources. The problem was that it was a new thing and the idea that someone could vandalize a page startled people. It turns out, though, that Wikipedia has pretty good controls for this over a reasonable time-window. And there's a history of edits. And most pages are accurate and free from vandalism.

Just as you should not uncritically read any of your other sources, you shouldn't uncritically read Wikipedia as a source. But if you are going to uncritically read, Wikipedia's far from the worst thing to blindly trust.

-

The common reasons given for why Wikipedia shouldn't be cited often miss the main reason. You shouldn't cite Wikipedia because it is not a source of information, it is a summary of other sources which are referenced.

You shouldn't cite Wikipedia for the same reason you shouldn't cite a library's book report, you should read and cite the book itself. Libraries are a great resource and their reading lists and summaries of books can be a great starting point for research, just like Wikipedia. But citing the library instead of the book is just intellectual laziness and shows to any researcher you are not serious.

Wikipedia itself also says the same thing:

https://en.m.wikipedia.org/wiki/Wikipedia:Citing_Wikipedia

-

> You shouldn’t cite Wikipedia because it is not a source of information, it is a summary of other sources which are referenced.

Right, and if an LLM is citing Wikipedia 47.9% of the time, that means that it's summarizing Wikipedia's summary.

> You shouldn’t cite Wikipedia for the same reason you shouldn’t cite a library’s book report, you should read and cite the book itself.

Exactly my point.

-

> I think the academic advice about Wikipedia was sadly mistaken.

Yeah, a lot of people had your perspective about Wikipedia while I was in college, but they are wrong, according to Wikipedia.

From the link:

> We advise special caution when using Wikipedia as a source for research projects. Normal academic usage of Wikipedia is for getting the general facts of a problem and to gather keywords, references and bibliographical pointers, but not as a source in itself. Remember that Wikipedia is a wiki. Anyone in the world can edit an article, deleting accurate information or adding false information, which the reader may not recognize. Thus, you probably shouldn't be citing Wikipedia. This is good advice for all tertiary sources such as encyclopedias, which are designed to introduce readers to a topic, not to be the final point of reference. Wikipedia, like other encyclopedias, provides overviews of a topic and indicates sources of more extensive information.

I personally use ChatGPT like I would Wikipedia. It's a great introduction to a subject, especially in my line of work, which is software development. I can get summarized information about new languages and frameworks really quickly, and then I can dive into the official documentation when I have a high level understanding of the topic at hand. Unfortunately, most people do not use LLMs this way.

-

> I think the academic advice about Wikipedia was sadly mistaken.

It wasn't mistaken 10 or especially 15 years ago, however. Check how some articles looked back then, you'll see vastly fewer sources and overall a less professional-looking text. These days I think most professors will agree that it's fine as a starting point (depending on the subject, at least; I still come across unsourced nonsensical crap here and there, slowly correcting it myself).

-

I think it was. When I think of Wikipedia, I'm thinking about how it was in ~2005 (20 years ago) and it was a pretty solid encyclopedia then.

There were (and still are) some articles that are very thin. And some that have errors. Both of these things are true of non-wiki encyclopedias. When I've seen a poorly-written article, it's usually on a subject that a standard encyclopedia wouldn't even cover. So I feel like that was still a giant win for Wikipedia.

-

> This is good advice for all tertiary sources such as encyclopedias, which are designed to introduce readers to a topic, not to be the final point of reference. Wikipedia, like other encyclopedias, provides overviews of a topic and indicates sources of more extensive information.

The whole paragraph is kinda FUD except for this. Normal research practice is to (get ready for a shock) do research and not just copy a high-level summary of what other people have done. If your professors were saying, "don't cite encyclopedias, which includes Wikipedia" then that's fine. But my experience was that Wikipedia was specifically called out as being especially unreliable and that's just nonsense.

> I personally use ChatGPT like I would Wikipedia

Eesh. The value of a tertiary source is that it cites the secondary sources (which cite the primary). If you strip that out, how's it different from "some guy told me..."? I think your professors did a bad job of teaching you about how to read sources. Maybe because they didn't know themselves.

-

I would be hesitant to use either as a primary source...

-

In 2005 the article on William Shakespeare contained references to a total of 7 different sources, including a page describing how his name is pronounced, Plutarch, and "Catholic Encyclopedia on CD-ROM". It contained more text discussing Shakespeare's supposed Catholicism than his actual plays, which were described only in the most generic terms possible. I'm not noticing any grave mistakes while skimming the text, but it really couldn't pass for a reliable source or a traditionally solid encyclopedia. And that's the page on the best-known English writer; slightly less popular topics were obviously much shoddier.

It had its significant upsides already back then, sure, no doubt about that. But the teachers' skepticism wasn't all that unwarranted.

-

> my experience was that Wikipedia was specifically called out as being especially unreliable and that's just nonsense.

Let me clarify then. It's unreliable as a cited source in academia. I'm drawing parallels and criticizing the way people use ChatGPT, i.e. taking it at face value with zero caution and using it as if it's a primary source of information.

> Eesh. The value of a tertiary source is that it cites the secondary sources (which cite the primary). If you strip that out, how's it different from "some guy told me..."? I think your professors did a bad job of teaching you about how to read sources. Maybe because they didn't know themselves.

Did you read beyond the sentence that you quoted?

Here:

> I can get summarized information about new languages and frameworks really quickly, and then I can dive into the official documentation when I have a high level understanding of the topic at hand.

Example: you're a junior developer trying to figure out what this JavaScript syntax is: `const {x} = response?.data`. It's difficult to figure out what destructuring and optional chaining are without knowing what they're called. With ChatGPT, you can copy and paste that code and ask "tell me what every piece of syntax is in this line of JavaScript." Then you can check the official docs to learn more.
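For anyone curious, here is a minimal sketch of what that line does. The `response` objects and the function names `readX` and `readXUnsafe` are made up for illustration. One thing worth knowing: optional chaining only guards the property access, so if `response` is missing, `response?.data` evaluates to `undefined`, and destructuring `undefined` throws a `TypeError`. A `?? {}` fallback is one common way to guard against that.

```javascript
// Hypothetical response objects, made up for illustration.
const okResponse = { data: { x: 42 } };
const missingResponse = undefined;

// Optional chaining (?.) reads `data` only if the left side is not null/undefined;
// otherwise the whole expression evaluates to undefined.
// Destructuring ({ x }) pulls the `x` property out into a local variable.
function readX(response) {
  // Fall back to an empty object so the destructuring never throws.
  const { x } = response?.data ?? {};
  return x;
}

// Same line as in the comment above, without the fallback.
// Throws a TypeError when `response` (or `response.data`) is null/undefined.
function readXUnsafe(response) {
  const { x } = response?.data;
  return x;
}

console.log(readX(okResponse));      // 42
console.log(readX(missingResponse)); // undefined
```

The search terms to look up in the official docs are "optional chaining", "destructuring assignment", and "nullish coalescing".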

-
