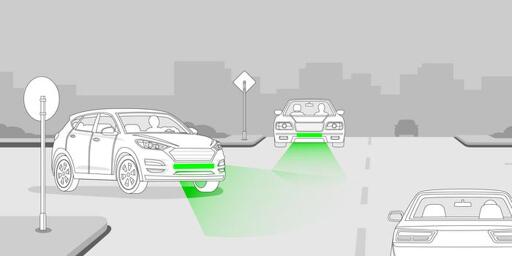

Front Brake Lights Could Drastically Diminish Road Accident Rates

-

If you're looking at the side of the car, you don't see them the same way as from the front, which is what this whole discussion is about.

If you can see both turn signals from your point of view, the current design works well enough.

I found a video to help you picture it better (https://youtube.com/shorts/ZD_34DxW_uI)

It really isn't that difficult.

-

I found a video to help you picture it better (https://youtube.com/shorts/ZD_34DxW_uI)

It really isn't that difficult.

I know how flow lights work. But they still don't make it any easier to see that a car is turning away from you, which is what this discussion is about.

Imagine a crossroad where a car is coming from your right side. You have no way of knowing whether they turn right or go straight, regardless of the way the lights work, because you won't see them.

-

Wouldn’t better driving education and testing work just as well, if not better?

As with a lot of tests, what a driving test measures best is how well you can take a driving test.

-

Sure but the second I lose my mobility I will put a deer slug through my head.

Another kind of solution. But not needed.

-

Why not move to a place where low mobility doesn't cut you off from the rest of society?

There are plenty of retirement communities where you can get around with a golf cart. In the 3 biggest cities here in SK, old folk can ride the subway for free, and sometimes you even see them drive mobility scooters onto the trains.

Other places I've been have level boarding for buses, but I've never seen someone drive a mobility scooter onto one. Certainly it wouldn't fly in SK.

I don't think I could afford to be homeless in SK.

-

So risking everyone else's life around you is worth it?

It isn't a negotiation. If some bureaucrat ticks that box, it will just be the end.

-

My status is in a relationship

Don't get any ideas buddy

How do I set my car's status to "It's complicated"?

-

It would definitely make it easier for people at crosswalks to start walking, knowing that the car is slowing down.

In order to be most effective it would need to be dynamic rather than a fixed on/off like rear brake lights. Stopping doesn't mean stopped. So perhaps a progressive light bar that starts lighting up at 20mph and adds a light for each 5mph drop until the whole bar is lit indicating a full stop. That would give pedestrians a sense of rate of deceleration.
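The progressive bar idea above boils down to a mapping from speed to number of lit segments. This is a minimal sketch, assuming a hypothetical five-segment bar (20, 15, 10, 5 and 0 mph) that only counts down while the car is decelerating; the names `lit_segments`, `decelerating` and the constants are illustrative, not from any real vehicle system.

```python
# Hypothetical front "slowing down" light bar, following the proposal above:
# the first segment lights at 20 mph, one more lights for every further 5 mph
# drop, and the whole bar is lit only at a full stop.

START_MPH = 20  # speed at which the first segment lights up (from the comment)
STEP_MPH = 5    # each further drop of this many mph adds a segment
SEGMENTS = 5    # 20, 15, 10, 5 and 0 mph -> five segments (assumed bar size)


def lit_segments(speed_mph: float, decelerating: bool) -> int:
    """Return how many bar segments should be lit (0..SEGMENTS)."""
    if speed_mph <= 0:
        # Full stop: whole bar lit, so "stopping" and "stopped" look different.
        return SEGMENTS
    if not decelerating or speed_mph > START_MPH:
        return 0
    # One segment at START_MPH, plus one for each full STEP_MPH drop below it.
    dropped = int((START_MPH - speed_mph) // STEP_MPH)
    return min(1 + dropped, SEGMENTS)


if __name__ == "__main__":
    for mph in (30, 20, 15, 7, 2, 0):
        print(mph, "mph ->", lit_segments(mph, decelerating=True), "segments")
```

Note that a car still rolling at 2 mph shows four segments, not five, which is the "stopping doesn't mean stopped" distinction a pedestrian would care about.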

-

I was curious whether anyone had actually tested it, and I found the video above, where they get right into it without any intro, family history, or begging to like & subscribe. It's just a short video where they test it and find that, YES!, the brake lights do come on when you use the steering wheel paddle brake, or when you're in L gear and take your foot off the accelerator.

Here's the Technology Connections test/analysis from 2023. https://www.youtube.com/watch?v=U0YW7x9U5TQ

The claim is that we need more comprehensive regulation of brake/slowdown lights.
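One way to picture a broader brake/slowdown light rule is to light the rear lamps from measured deceleration as well as from the pedal, so paddle or L-gear slowing also shows up. This is a minimal sketch under stated assumptions: the class name and both thresholds (1.3 and 0.7 m/s²) are illustrative values chosen for the example, not quoted from any regulation or from the linked video, and the gap between them is a simple hysteresis band so the lamps don't flicker.

```python
from dataclasses import dataclass


@dataclass
class BrakeLightController:
    """Hypothetical controller: lamps on if the pedal is pressed OR the car
    is decelerating hard enough, regardless of what is doing the slowing."""

    on_threshold: float = 1.3   # m/s^2, assumed: turn on at or above this
    off_threshold: float = 0.7  # m/s^2, assumed: turn off below this
    lit: bool = False

    def update(self, pedal_pressed: bool, decel_mps2: float) -> bool:
        # decel_mps2 is positive when the car is slowing down.
        if pedal_pressed or decel_mps2 >= self.on_threshold:
            self.lit = True
        elif decel_mps2 < self.off_threshold:
            self.lit = False
        # Between the two thresholds the lamps keep their previous state.
        return self.lit


if __name__ == "__main__":
    ctrl = BrakeLightController()
    # Coasting, then a hard regen/L-gear slowdown, then easing off, then pedal.
    for pedal, decel in [(False, 0.2), (False, 1.5), (False, 1.0), (False, 0.3), (True, 0.0)]:
        print(pedal, decel, ctrl.update(pedal, decel))
```

With these assumed numbers, lifting off in L gear hard enough to pass 1.3 m/s² lights the lamps even though the pedal is never touched.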

-

The brakes aren’t engaged? The light turns on before there’s pressure on the brake. They probably don’t even know their lights are on since they aren’t decelerating.

They might need to check their assumptions. It might not feel like the brake is engaged, but it's an expensive habit that causes unnecessary wear and tear. https://drivingmecrazyblog.com/2020/02/07/quit-riding-your-brakes/

-

They might need to check their assumptions. It might not feel like the brake is engaged, but it's an expensive habit that causes unnecessary wear and tear. https://drivingmecrazyblog.com/2020/02/07/quit-riding-your-brakes/

It’s not engaged, dude. The brake pedal has play in it, and the brakes don’t engage the second you start depressing it, but the light is already on. It's called brake shadowing. Do people not take driver training anymore? If they did, maybe they would stop assuming that a lit brake light means the brakes are engaged and the driver is riding them.

Quit reading blogs without understanding the basic premise of how brakes work first.

-

I don't think I could afford to be homeless in SK.

No, being in poverty is really bad here. I just picked SK as a close example: old folk becoming recluses who only interact with Fox News and the people serving them is pretty specific to American and/or car-centric culture. Hell, even car-centric parts of America have retirement communities where everyone drives scooters or golf carts.

-

I know how flow lights work. But they still don't make it any easier to see that a car is turning away from you, which is what this discussion is about.

Imagine a crossroad where a car is coming from your right side. You have no way of knowing whether they turn right or go straight, regardless of the way the lights work, because you won't see them.

We could put the lights on the bottom of the mirrors so you can see them from that angle, then.

-

No, being in poverty is really bad here. I just picked SK as a close example: old folk becoming recluses who only interact with Fox News and the people serving them is pretty specific to American and/or car-centric culture. Hell, even car-centric parts of America have retirement communities where everyone drives scooters or golf carts.

Well, in any case, I'm here and not there, and when that happens there won't be money to move to some magical car-free place. We have winter here, and the groceries are 20 km away. There is no bus, no taxi, and not even Uber. Not that I would have the 60 bucks a ride would cost. Of course, I would also lose my job, which is 60 km away.

So deer slug to the brain will be the prescription.

-

We could put the lights on the bottom of the mirrors so you can see them from that angle, then.

And then they'd flash no matter which direction they'd turn?

So basically hazard lights all the time? I'm not sure whether you still don't understand what this discussion is about, or how I could make it any clearer.

-

Great, where you live.

It's not perfect, but I think this is what people in this thread are fighting for, which isn't stupid imo.

-

There are now headlights that can be "high" but block out portions of the beam directed at light sources like oncoming headlights. Can't have them in the US though.

Also known as the "fuck everyone not in a car"-setting
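The headlights described in this exchange, which stay "high" but black out the parts of the beam aimed at other light sources, can be pictured as a per-segment mask. This is a minimal sketch, assuming a hypothetical beam split into 12 equal angular segments across ±12°, a list of detected light-source angles, and a 1° safety margin; `beam_mask` and all of these numbers are illustrative, not taken from any real headlight system.

```python
def beam_mask(source_angles_deg, segments=12, fov_deg=(-12.0, 12.0), margin_deg=1.0):
    """Return one boolean per beam segment: True = high beam on, False = blacked out.

    source_angles_deg: horizontal angles of detected light sources
    (e.g. oncoming headlights), 0 = straight ahead. All defaults are assumptions.
    """
    left, right = fov_deg
    width = (right - left) / segments
    mask = []
    for i in range(segments):
        seg_lo = left + i * width
        seg_hi = seg_lo + width
        # Black out this segment if any source, widened by the margin, falls in it.
        blocked = any(seg_lo - margin_deg <= a <= seg_hi + margin_deg
                      for a in source_angles_deg)
        mask.append(not blocked)
    return mask


if __name__ == "__main__":
    # One oncoming car dead ahead: only the two middle segments go dark.
    print(beam_mask([0.0]))
```

The rest of the road stays on high beam, which is the whole appeal; whether the detection actually catches pedestrians and cyclists is exactly the objection raised above.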

-

You say this like those same people won't leave it on stun

Well, yes, they will, but at least it's an option. Also, a lot of vehicles have auto-dimming now, but it doesn't work well, and the sensors get borked in less than 6 years.