AI agents wrong ~70% of time: Carnegie Mellon study

-

Sounds like the fault of the researchers for not building better tests, or for not understanding the limits of the software well enough to use it right.

Are you arguing they should have built a test that makes AI perform better? How are you offended on behalf of AI?

-

you're right, the dumb of AI is completely comparable to the dumb of human, there's no difference worth talking about, sorry i even spoke the fuck up

No worries.

-

Why would they be right beyond word-sequence frequencies?
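
To make the "word-sequence frequencies" point concrete, here is a deliberately crude toy in Python: it predicts the next word purely from how often word pairs appeared in some text. Real LLMs are large neural networks conditioned on long contexts, not bigram tables, so treat this only as an analogy; the corpus and function names are made up for illustration. Note that nothing in it checks whether the output is true, only whether it is frequent.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def build_bigram_counts(text: str) -> Dict[str, Counter]:
    """Count, for each word, which words follow it and how often."""
    words = text.lower().split()
    counts: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        counts[current][nxt] += 1
    return counts


def most_likely_next(counts: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical toy corpus: "cat" follows "the" twice, "mat" and "fish" once each.
corpus = "the cat sat on the mat the cat ate the fish"
counts = build_bigram_counts(corpus)
print(most_likely_next(counts, "the"))  # cat
```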

-

There's a sleep button on my laptop. Doesn't mean I would use it.

I'm just trying to say you're describing a feature that everyone kind of knows doesn't work. ChatGPT is not trained to do calculations well.

I just like technology, and I fully believe the left's hatred of it is not logical. I believe it stems from a lot of media bias and headlines. Why there's this push from the media is something I would like to know more about. But overall, I see a lot of the same hallmarks of bullshit yellow journalism about this stuff on the left as I do for similar bullshit in right-wing spaces about other things.

Again with dismissing the evidence of my own eyes!

I wasn't asking it to do calculations, I was asking it to put the data into a super formulaic sentence. It was good at the first couple of rows, then it would get stuck in a rut and start lying. It was crap. A seven-year-old would have done it far better, and if I'd told a seven-year-old that they had made a couple of mistakes and to check it carefully, they would have done.

Again, I didn't read it in a fucking article, I read it on my fucking computer screen, so if you'd stop fucking telling me I'm stupid for using it the way it fucking told me I could use it, or that I'm stupid for believing what the media tell me about LLMs, when all I'm doing is telling you my own experience, you'd sound a lot less like a desperate troll or someone who is completely unable to assimilate new information that differs from your dogma.

-

That looks better. Even with a fair coin, 10 heads in a row is almost impossible (a 1 in 1,024 chance).

And if you are feeding the output back into a new instance of a model then the quality is highly likely to degrade.

Whereas if you ask a human to do the same thing ten times, the probability that they get all ten right is astronomically higher than 0.0000059049 (that is 0.3^10: ten successes in a row at the roughly 30% success rate the headline implies).
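
The 0.0000059049 figure is what you get if each attempt independently succeeds about 30% of the time (the flip side of the headline's ~70% failure rate) and you need ten in a row. A minimal sketch of that arithmetic, assuming the attempts are independent, which real tasks may well not be:

```python
def p_all_correct(p_single: float, n: int) -> float:
    """Probability that n independent attempts all succeed, each with probability p_single."""
    if not 0.0 <= p_single <= 1.0:
        raise ValueError("p_single must be between 0 and 1")
    return p_single ** n


# ~30% success per task, as implied by a ~70% failure rate
print(p_all_correct(0.3, 10))  # about 5.9e-06, i.e. 0.0000059049
# a fair coin landing heads ten times in a row
print(p_all_correct(0.5, 10))  # 0.0009765625 (1 in 1,024)
```

The per-attempt rate dominates: because it is raised to the tenth power, even small differences in single-task accuracy become huge differences over a run of ten.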

-

Again with dismissing the evidence of my own eyes!

I wasn't asking it to do calculations, I was asking it to put the data into a super formulaic sentence. It was good at the first couple of rows, then it would get stuck in a rut and start lying. It was crap. A seven-year-old would have done it far better, and if I'd told a seven-year-old that they had made a couple of mistakes and to check it carefully, they would have done.

Again, I didn't read it in a fucking article, I read it on my fucking computer screen, so if you'd stop fucking telling me I'm stupid for using it the way it fucking told me I could use it, or that I'm stupid for believing what the media tell me about LLMs, when all I'm doing is telling you my own experience, you'd sound a lot less like a desperate troll or someone who is completely unable to assimilate new information that differs from your dogma.

What does "I give it data to put in a formulaic sentence" mean here?

Why not just share the details? I often find a lot of people saying it's doing crazy things who never want to share the details. It's very similar to discussing things with Trump supporters, who do the same shit when pressed for details about stuff they say happens. It's the same "you're a troll for asking for evidence of my claim" line that Trumpers use. It's wild how similar it is.

And yes, asking it to do things like iterate over rows isn't how it works. It's getting better, but that's not what it's primarily used for. It could be, but it isn't. It can only hold so many tokens at once. It's getting better and has some persistence, but that's nowhere near where its strength is.
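
On the "only so many tokens" point: one common workaround is to send rows in small batches so each request stays well inside the model's context. A rough sketch, assuming a crude ~4-characters-per-token estimate (real tokenizers vary; use the model's own tokenizer if you have it). The function names and the budget are hypothetical, and staying under the budget does not make the output accurate.

```python
from typing import Iterable, Iterator, List

# Assumption: roughly 4 characters per token for English text. Not exact.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Very rough token estimate from character count, rounded up (minimum 1)."""
    return max(1, -(-len(text) // CHARS_PER_TOKEN))


def batch_rows(rows: Iterable[str], max_tokens: int) -> Iterator[List[str]]:
    """Group rows into batches whose estimated token total stays within max_tokens.

    A single row larger than max_tokens is still emitted, alone in its own batch.
    """
    batch: List[str] = []
    used = 0
    for row in rows:
        cost = estimate_tokens(row)
        if batch and used + cost > max_tokens:
            yield batch
            batch, used = [], 0
        batch.append(row)
        used += cost
    if batch:
        yield batch


# Three 8-character rows (~2 tokens each) with a 4-token budget -> two batches.
for chunk in batch_rows(["a" * 8, "b" * 8, "c" * 8], max_tokens=4):
    print(chunk)
```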

-

Whereas if you ask a human to do the same thing ten times, the probability that they get all ten right is astronomically higher than 0.0000059049 (that is 0.3^10: ten successes in a row at the roughly 30% success rate the headline implies).

Dunno. Asking 10 humans at random to do a task and probably one will do it better than AI. Just not as fast.

-

What does "I give it data to put in a formulaic sentence" mean here?

Why not just share the details? I often find a lot of people saying it's doing crazy things who never want to share the details. It's very similar to discussing things with Trump supporters, who do the same shit when pressed for details about stuff they say happens. It's the same "you're a troll for asking for evidence of my claim" line that Trumpers use. It's wild how similar it is.

And yes, asking it to do things like iterate over rows isn't how it works. It's getting better, but that's not what it's primarily used for. It could be, but it isn't. It can only hold so many tokens at once. It's getting better and has some persistence, but that's nowhere near where its strength is.

I would be in breach of contract to tell you the details. How about you just stop trying to blame me for the clear and obvious lies that the LLM churned out and start believing that LLMs ARE strikingly fallible, because, buddy, you have your head so far in the sand on this issue it's weird.

The solution to the problem was to realise that an LLM cannot be trusted for accuracy: even if the first few results are completely accurate, the bullshit will creep in. Don't trust the LLM. Check every fucking thing.

In the end I wrote a quick script that broke the input up on tab characters and wrote the sentence. That's how formulaic it was. I regretted deeply trying to get an LLM to use data.
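
For a formulaic job like this, a deterministic script is the right tool. The real fields and wording are under contract, so the three-column layout and the template below are purely hypothetical; the sketch just splits each line on tab characters and fills a fixed sentence. A row with the wrong number of fields raises a ValueError instead of quietly producing a made-up sentence.

```python
from typing import List

# Hypothetical template: the real fields and wording are unknown.
TEMPLATE = "{name} from {city} ordered {quantity} units."


def rows_to_sentences(tsv_text: str) -> List[str]:
    """Split each line on tab characters and fill the fixed sentence template."""
    sentences = []
    for line in tsv_text.splitlines():
        if not line.strip():
            continue  # skip blank lines
        # Unpacking fails loudly (ValueError) if a row has the wrong number of fields.
        name, city, quantity = line.split("\t")
        sentences.append(TEMPLATE.format(name=name, city=city, quantity=quantity))
    return sentences


data = "Alice\tLeeds\t3\nBob\tCardiff\t12\n"
for sentence in rows_to_sentences(data):
    print(sentence)
# Alice from Leeds ordered 3 units.
# Bob from Cardiff ordered 12 units.
```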

The frustrating thing is that it is clearly capable of doing the task some of the time, but drifting off into FANTASY is its strong suit, and it doesn't matter how firmly or how often you ask it to be accurate or use the input carefully. It's going to lie to you before long. It's an LLM. Bullshitting is what it does. Get it to do ONE THING only, then check the fuck out of its answer. Don't trust it to tell you the truth any more than you would trust Donald J Trump to.
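
"Check the fuck out of its answer" can be partly automated. A minimal, hypothetical sketch: for each input row, confirm that every field value appears verbatim in the sentence the model produced, and report the rows where something is missing. This is a weak check (a value "3" would also match "13", and it says nothing about extra invented content), so it flags obvious drift rather than proving correctness.

```python
from typing import List, Tuple


def missing_fields(fields: List[str], generated: str) -> List[str]:
    """Return input values that do not appear verbatim in the generated sentence."""
    return [value for value in fields if value not in generated]


def check_outputs(rows: List[List[str]], outputs: List[str]) -> List[Tuple[int, List[str]]]:
    """Pair each input row with its generated sentence and report rows with missing values."""
    if len(rows) != len(outputs):
        raise ValueError(f"expected {len(rows)} outputs, got {len(outputs)}")
    problems = []
    for index, (fields, text) in enumerate(zip(rows, outputs)):
        missing = missing_fields(fields, text)
        if missing:
            problems.append((index, missing))
    return problems


# Hypothetical data: the second output has "21" where the input said "12".
rows = [["Alice", "Leeds", "3"], ["Bob", "Cardiff", "12"]]
outputs = ["Alice from Leeds ordered 3 units.", "Bob from Cardiff ordered 21 units."]
print(check_outputs(rows, outputs))  # [(1, ['12'])]
```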

-

Dunno. Asking 10 humans at random to do a task and probably one will do it better than AI. Just not as fast.

You're better off asking one human to do the same task ten times. Humans get better and faster at things as they go along. Always slower than an LLM, but LLMs get more and more likely to veer off on some flight of fancy, further and further from reality, the more they say to you. The chances of them staying factual in the long term are really low.

It's a born bullshitter. It knows a little about a lot, but it has no clue what's real and what's made up, or it doesn't care.

If you want some text quickly, that sounds right, but you genuinely don't care whether it is right at all, go for it, use an LLM. It'll be great at that.

-

Reading with a CEO mindset: 3 out of 10 employees can be fired.

-

I would be in breach of contract to tell you the details. How about you just stop trying to blame me for the clear and obvious lies that the LLM churned out and start believing that LLMs ARE strikingly fallible, because, buddy, you have your head so far in the sand on this issue it's weird.

The solution to the problem was to realise that an LLM cannot be trusted for accuracy: even if the first few results are completely accurate, the bullshit will creep in. Don't trust the LLM. Check every fucking thing.

In the end I wrote a quick script that broke the input up on tab characters and wrote the sentence. That's how formulaic it was. I regretted deeply trying to get an LLM to use data.

The frustrating thing is that it is clearly capable of doing the task some of the time, but drifting off into FANTASY is its strong suit, and it doesn't matter how firmly or how often you ask it to be accurate or use the input carefully. It's going to lie to you before long. It's an LLM. Bullshitting is what it does. Get it to do ONE THING only, then check the fuck out of its answer. Don't trust it to tell you the truth any more than you would trust Donald J Trump to.

This is crazy. I've literally been saying they are fallible. You're saying you professionally fed an LLM some type of dataset. I can't really say what you were trying to accomplish, but I'm just arguing that having it process data is not what they're trained to do. LLMs are incredible tools, and I'm tired of people acting like they're not because some keep using them for things they're not built to do. It's not a fire-and-forget thing. It does need to be supervised and verified. It's not exactly an answer machine. But it's so good at parsing text and documents, summarizing, formatting, and acting like a search engine that you can communicate with rather than trying to grok some arcane sentence. Its power is in language applications.

It is so much fun to just play around with it and figure out where it can help. I'm constantly doing things on my computer, and it's great for instructions, especially if I get a problem that's kind of unique and needs a bit of discussion to solve.

-

This is crazy. I've literally been saying they are fallible. You're saying you professionally fed an LLM some type of dataset. I can't really say what you were trying to accomplish, but I'm just arguing that having it process data is not what they're trained to do. LLMs are incredible tools, and I'm tired of people acting like they're not because some keep using them for things they're not built to do. It's not a fire-and-forget thing. It does need to be supervised and verified. It's not exactly an answer machine. But it's so good at parsing text and documents, summarizing, formatting, and acting like a search engine that you can communicate with rather than trying to grok some arcane sentence. Its power is in language applications.

It is so much fun to just play around with it and figure out where it can help. I'm constantly doing things on my computer, and it's great for instructions, especially if I get a problem that's kind of unique and needs a bit of discussion to solve.

it’s so good at parsing text and documents, summarizing

No. Not when it matters. It makes stuff up. The less you carefully check every single fucking thing it says, the more likely you are to believe some lies it subtly slipped in as it went along. If truth doesn't matter, go ahead and use LLMs.

If you just want some ideas that you're going to sift through, independently verify and check for yourself with extreme skepticism as if Donald Trump were telling you how to achieve world peace, great, you're using LLMs effectively.

But if you're trusting it, you're doing it very, very wrong and you're going to get humiliated because other people are going to catch you out in repeating an LLM's bullshit.

-

it’s so good at parsing text and documents, summarizing

No. Not when it matters. It makes stuff up. The less you carefully check every single fucking thing it says, the more likely you are to believe some lies it subtly slipped in as it went along. If truth doesn't matter, go ahead and use LLMs.

If you just want some ideas that you're going to sift through, independently verify and check for yourself with extreme skepticism as if Donald Trump were telling you how to achieve world peace, great, you're using LLMs effectively.

But if you're trusting it, you're doing it very, very wrong and you're going to get humiliated because other people are going to catch you out in repeating an LLM's bullshit.

If it's as bad as you say, could you give an example of a prompt where it'll tell you incorrect information?

-

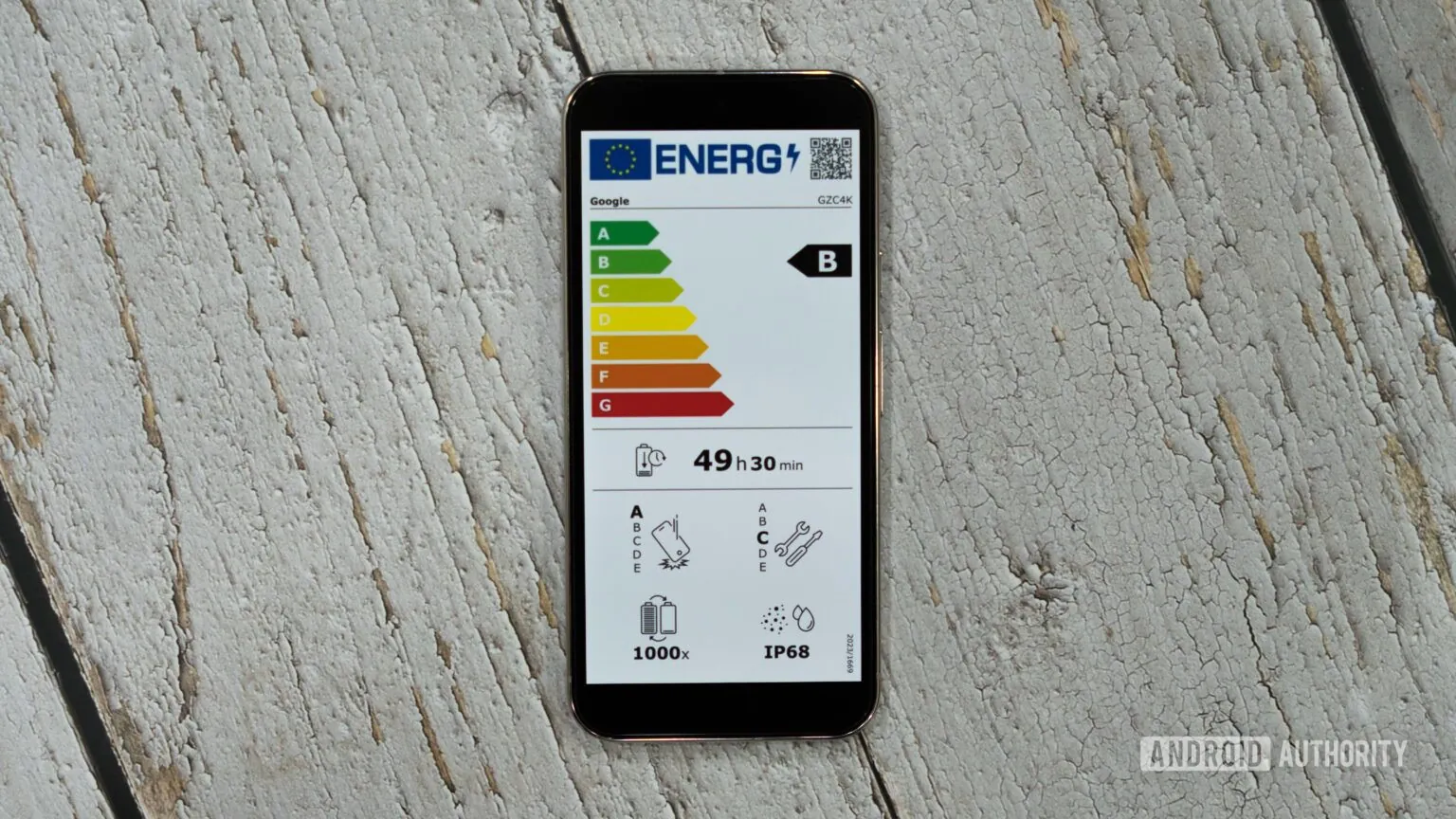

Samsung phones can survive twice as many charges as Pixel and iPhone, according to EU data

Technology 1

1

-

-

NO KINGS! Tomorrow on Trump's birthday, we protest across the entire nation. Check the website for No Kings events near you!

Technology 2

2

-

-

-

Prototype of RTX 5090 Appears With Four 16-Pin Power Connectors, Capable of Delivering 2,400W

Technology 1

1

-

-