AI slows down some experienced software developers, study finds

-

Experienced software developer, here. "AI" is useful to me in some contexts. Specifically when I want to scaffold out a completely new application (so I'm not worried about clobbering existing code) and I don't want to do it by hand, it saves me time.

And... that's about it. It sucks at code review, and will break shit in your repo if you let it.

Same. I also like it for basic research and helping with syntax for obscure SQL queries, but coding hasn't worked very well. One of my less technical coworkers tried to vibe code something and it didn't work well. Maybe it would do okay on something routine, but generally speaking it would probably be better to use a library for that anyway.

-

I’ve had too many arguments with management about letting them merge, and I’m not letting that ruin my code base.

I guess I'm lucky, before here I always had 100% control of the code I was responsible for. Here (last 12 years) we have a big team, but nobody merges to master/main without a review and screwups in the section of the repository I am primarily responsible for have been rare.

We have a new VP collecting metrics on everyone, including lines of code, number of merge requests, times per day using AI, and days per week in the office vs. at home.

I have been getting actively recruited (six figures+) for multiple openings right here in town (not a huge market here, either), so this may be the time...

Interesting idea… we actually plan to go public in a couple of years and I’m holding a few options, but the economy is hitting us like everyone else. I’m no longer optimistic we can reach the numbers needed for those options to activate.

-

"Using something that you're not experienced with and haven't yet worked out how to best integrate into your workflow slows some people down"

Wow, what an insight! More at 8!

As I said on this article when it was posted to another instance:

AI is a tool to use. Like with all tools, there are right ways and wrong ways and inefficient ways and all other ways to use them. You can’t say that they slow people down as a whole just because some people get slowed down.

I use GitHub Copilot because it does speed things up, but you have to keep a tight rein on it. It works because it sees my code, sees what I'm trying to do, etc. So a good chunk of the time it helps.

Now something like Claude AI? Yeah... no. Claude doesn't know how to say "I don't know." It simply doesn't. It NEEDS to provide you a solution, even if the vast majority of the time it's one it just makes up off the top of its head. It simply cannot say it doesn't know, and it'll get desperate. It'll provide you with libraries or repos that have been orphaned for years. It'll make stuff up, saying that something can magically do what you're really looking for when in truth it can't do that thing at all and was never intended to. As long as it sounds good to Claude, it must be true. It's a shit AI and absolutely worthless. I don't even trust it to simply build out a framework for something.

ChatGPT? It's good for providing me with placeholder content or bouncing ideas off of. That's it. Like you said, they're simply tools, not anything that should be a replacement for... well... anything.

Could they slow people down? I think so, but that person has to be an absolute moron or have absolutely zero experience with whatever they're trying to get the AI to do.

-

Fun how the article concludes that AI tools are still good anyway, actually.

This AI hype is a sickness

Upper management said a while back that we need to use Copilot. So far I've just used DeepSeek to fill out the stupid forms that management keeps getting us to fill out.

-

As a developer:

- I can jot down a bunch of notes and have AI turn them into a reasonable presentation, documentation, or proposal

- Zoom has an AI agent that is pretty good at summarizing a meeting. It usually just needs minor corrections, and you can send it out much faster than if you took notes yourself

- for coding I mostly use AI like autocomplete. Sometimes it’s able to autocomplete entire code blocks

- for something new I might have AI generate a class or something and use it as a first draft, which I then make work

I’ve had success with:

- dumping email threads into it to generate user stories,

- generating requirements documentation templates so that everyone has to fill out the exact details needed to make the project a success

- generating quick one-off scripts

- suggesting a consistent way to refactor a block of code (I’m not crazy enough to let it actually do all the refactoring)

- summarizing the work done for a slide deck and generating appropriate infographics

Essentially, all the stuff that I’d need to review anyway, but use of AI means that actually generating the content can be done in a consistent manner that I don’t have to think about. I don’t let it create anything, just transform things in blocks that I can quickly review for correctness and appropriateness. Kind of like getting a junior programmer to do something for me.

-

That's happening right now. I have a few friends who are looking for entry-level jobs and they find none.

It really sucks.

That said, the future lack of developers is a corporate problem, not a problem for developers. For us it just means that we'll earn a lot more in a few years.

Only if you are able to get that experience though.

-

They wanted someone with experience, who can hit the ground running, but didn't want to pay for it, either with cash or time.

- cheap

- quick

- experience

You can only pick two.

I know which 2 I am in my current role and I don't know if I like it or not. At least I don't have to try very hard.

-

Not really.

A linter in the build pipeline is generally not useful because most people won’t give its results time or priority. You usually can’t fail the build for lint issues, so all it does is fill logs. I usually configure a linter and prettifier in a pre-commit hook to shift that left. People are more willing to fix their code in small pieces as they try to commit.

But this is also why SonarQube is a key tool. The scanners are lint-like, and you can even import some lint output. But the important part is that it tries to prioritize the issues, score them, and enforce a quality gate based on them. I usually can’t fail a build for lint errors, but SonarQube can if there are too many, if they are too high priority, or if they are security related.

But this is not the same as a code review. If an AI can use the code base as context, it should be able to add checks for consistency and maintainability relative to the rest of the code.

For example, I had a junior developer blindly follow the AI and use a different mocking framework than the rest of the code, for no reason other than that it may have been more common in the training data. A code review AI should be able to notice that. Maybe this is too advanced for current AI, but the same guy blindly followed AI to add classes that already existed. They were just different enough that SonarQube didn’t flag them as duplicate code, but AI ought to be able to summarize functionality and realize they were the same.

Or I wonder if AI could do code organization? Junior guys spew classes and methods everywhere without any effort to organize like with like so that someone can maintain it all. Or how about style? I hope you never revisit the style wars, but when you’re modifying code you really need to follow the style and naming of what’s already there. Maybe AI code review can pick up on that.

Yeah, I’ve added AI to my review process. Sure, things take a bit longer, but the end result has been reviewed by me AND compared against a large body of code in the training data.

It regularly catches stuff I miss or ignore on a first review, because it ignores context that shouldn’t matter (e.g., how reliable the person who wrote the code is).

-

Who says I made my webapp with ChatGPT in an afternoon?

I built it iteratively using ChatGPT, much like any other application. I started with the scaffolding and then slowly added more and more features over time, just like I would have done had I not used any AI at all.

Like everybody knows, Rome wasn't built in a day.

So you treated it like a junior developer and did a thorough review of its output.

I think the only disagreement here is on the semantics.

-

It may also be self-fulfilling. Our new CEO said all upcoming projects must save 15% using AI, and while we’re still hiring, it’s only in India.

So six months from now we will have status reports talking about how we saved 15% on every project.

And those status reports will be generated by AI, because that’s where the real savings is.

-

Explain this to me, AI. It reads back exactly what's on the screen, including comments, somehow with more words but less information.

Ok... Ok, this is tricky. AI, can you do this refactoring so I don't have to keep track of everything? No... That's all wrong... Yeah, I know it's complicated, that's why I wanted it refactored. No, you can't do that... Fuck, now I can either toss all your changes and do it myself or spend the next 3 hours rewriting it.

Yeah I struggle to find how anyone finds this garbage useful.

If you give it the right task, it’s super helpful. But you can’t ask it to write anything with any real complexity.

Where it thrives is being given pseudo code for something simple and asking for the specific language code for it. Or translate between two languages.

That’s… about it. And even that it fucks up.

-

> If you give it the right task, it’s super helpful. But you can’t ask it to write anything with any real complexity.
>
> Where it thrives is being given pseudo code for something simple and asking for the specific language code for it. Or translate between two languages.
>
> That’s… about it. And even that it fucks up.

I bet it slows down the idiot software developers more than anything.

Everything can be broken into smaller easily defined chunks and for that AI is amazing.

Give me a function in Python that if I provide it a string of XYZ it will provide me an array of ABC.

The trick is knowing how it fits in your larger codebase. That's where your developer skill is. It's no different now than it was when coding was offshored to India. We replaced Ravinder with ChatGPT.

Edit - what I hate about AI is the blatant lying. I asked it for some ServiceNow code Friday and it told me to use the sys_audit_report table which doesn't exist. I told it so and then it gave me the sys_audit table.

The future will be those who are smart enough to know when AI is lying and know how to fix it when it is. Ideally you are using AI for code you can do, you just don't want to. At least that's my experience. In that, it's invaluable.

-


I work for an adtech company and I'm pretty much the only developer for the JavaScript library that runs on client sites and shows our ads. I don't use AI at all because it keeps generating crap.

-

> So you treated it like a junior developer and did a thorough review of its output.
>
> I think the only disagreement here is on the semantics.

Sure, but it still built out a full-featured webapp, not just a bit of greenfielding here or there.

-

> Explain this to me, AI. It reads back exactly what's on the screen, including comments, somehow with more words but less information.
>
> Ok... Ok, this is tricky. AI, can you do this refactoring so I don't have to keep track of everything? No... That's all wrong... Yeah, I know it's complicated, that's why I wanted it refactored. No, you can't do that... Fuck, now I can either toss all your changes and do it myself or spend the next 3 hours rewriting it.
>
> Yeah I struggle to find how anyone finds this garbage useful.

This was the case a year or two ago but now if you have an MCP server for docs and your project and goals outlined properly it's pretty good.

-

> Same. I also like it for basic research and helping with syntax for obscure SQL queries, but coding hasn't worked very well. One of my less technical coworkers tried to vibe code something and it didn't work well. Maybe it would do okay on something routine, but generally speaking it would probably be better to use a library for that anyway.

I actively hate the term "vibe coding." The fact is, while using an LLM for certain tasks is helpful, trying to build out an entire, production-ready application just by prompts is a huge waste of time and is guaranteed to produce garbage code.

At some point, people like your coworker are going to have to look at the code and work on it, and if they don't know what they're doing, they'll fail.

I commend them for giving it a shot, but I also commend them for recognizing it wasn't working.

-

I like the saying that LLMs are good at stuff you don’t know. That’s about it.

FreedomAdvocate is right. IMO the best use case of AI is things you have an understanding of but need some assistance with. You need to understand enough to catch at least the impactful errors the LLM makes.

-

> Fun how the article concludes that AI tools are still good anyway, actually.
>
> This AI hype is a sickness

LLMs are very good in the correct context. Forcing people to use them for things they are already great at is not the correct context.

-

That's still not actually knowing anything. It's just temporarily adding more context to its model.

And it's always very temporary. I have a yarn project I'm working on right now, and I used Copilot in VS Code in agent mode to scaffold it as an experiment. One of the refinements I included in the prompt file to build it is a set of reminders throughout for things it wouldn't need reminding of if it actually "knew" the repo.

- I had to constantly remind it that it's a yarn project, otherwise it would inevitably start trying to use NPM as it progressed through the prompt.

- For some reason, when it's in agent mode and it makes a mistake, it wants to delete files it has fucked up, which always requires human intervention, so I peppered the prompt with reminders not to do that, but to blank the file out and start over in it.

- The frontend of the project uses TailwindCSS. It could not remember not to keep trying to downgrade its configuration to an earlier version instead of using the current one, so I wrote the entire configuration for it by hand and inserted it into the prompt file. If I let it try to build the configuration itself, it would inevitably fuck it up and then say something completely false, like, "The version of TailwindCSS we're using is still in beta, let me try downgrading to the previous version."

I'm not saying it wasn't helpful. It probably cut 20% off the time it would have taken me to scaffold out the app myself, which is significant. But it certainly couldn't keep track of the context provided by the repo, even though it was creating that context itself.

Working with Copilot is like working with a very talented and fast junior developer whose methamphetamine addiction has been getting the better of them lately, and who has early onset dementia or a brain injury that destroyed their short-term memory.

From the article: "Even after completing the tasks with AI, the developers believed that they had decreased task times by 20%. But the study found that using AI did the opposite: it increased task completion time by 19%."

I'm not saying you didn't save time, but it's remarkable that the research shows that this perception can be false.
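Putting the study's two figures side by side makes the size of that gap concrete. This is a back-of-the-envelope reading that assumes both percentages are relative to the same baseline time $T_0$ for doing a task without AI; $T_0$ and the other symbols are introduced here for illustration and are not taken from the article:

```latex
\begin{aligned}
T_{\text{perceived}} &= (1 - 0.20)\,T_0 = 0.80\,T_0 \\
T_{\text{actual}}    &= (1 + 0.19)\,T_0 = 1.19\,T_0 \\
\frac{T_{\text{actual}}}{T_{\text{perceived}}} &= \frac{1.19}{0.80} \approx 1.49
\end{aligned}
```

Under that assumption, the tasks took roughly 49% longer than the developers believed they had.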

-

Most IDEs have done the boring stuff with templates and code generation for like a decade, so that's not so helpful to me either, but if it works for you...

Yeah but I find code generation stuff I've used in the past takes a significant amount of configuration, and will often generate a bunch of code I don't want it to, and not in the way I want it. Many times it's more trouble than it's worth. Having an LLM do it means I don't have to deal with configuring anything and it's generating code for the specific thing I want it to so I can quickly validate it did things right and make any additions I want because it's only generating the thing I'm working on that moment. Also it's the same tool for the various languages I'm using so that adds more convenience.

Yeah if you have your IDE setup with tools to analyze the datasource and does what you want it to do, that may work better for you. But with the number of DBs I deal with, I'd be spending more time setting up code generation than actually writing code.

-

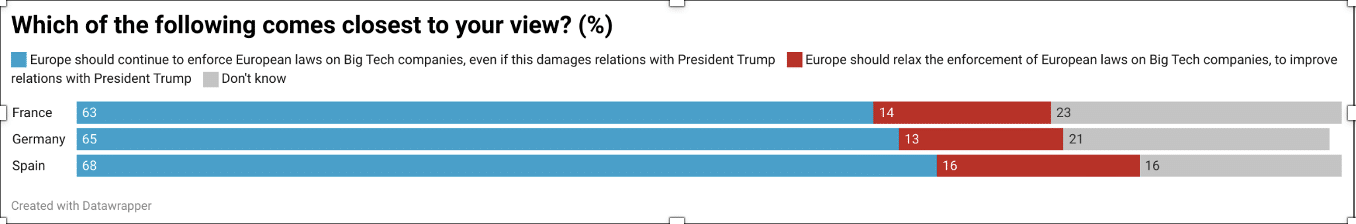

Large majority of French, German and Spanish public back tough EU stance on Big Tech, despite risk to Trump relations

Technology 1

1

-

On July 7, Gemini AI will access your WhatsApp and more. Learn how to disable it on Android.

Technology 1

1

-

People Are Being Involuntarily Committed, Jailed After Spiraling Into "ChatGPT Psychosis"

Technology 1

1

-

-

-

-

-