Mole or cancer? The algorithm that gets one in three melanomas wrong and erases patients with dark skin

-

Seems more like a byproduct of racism than racist in and of itself.

Yes, we call that "structural racism".

-

The Basque Country is implementing Quantus Skin in its health clinics after an investment of 1.6 million euros. Specialists criticise the artificial intelligence developed by the Asisa subsidiary due to its "poor" and "dangerous" results. The algorithm has been trained only with data from white patients.

It's going to erase me?

-

My only real counter to this is who created the dataset and did the people that were creating the app have any power to affect that? To me, to say something is racist implies intent, where this situation could be that, but it could also be a case where it's just not racially diverse, which doesn't necessarily imply racism.

There's a plethora of reasons that the dataset may be mostly fair skinned. To rattle off a couple that come to mind (all of this may be known, idk, these are ignorant possibilities on my side): perhaps fair skinned people are more susceptible so there's more data; like you mentioned, dark skinned individuals may have fewer options to get medical help; or maybe the dataset came from a region with not many dark skinned patients. Again, all ignorant speculation on my part, but I would say that none of those options inherently make the model racist, just not a good model. Maybe racist actions led to a bad dataset, but if that's out of the devs' control, then I wouldn't personally put that negative on the model.

Also, my interpretation of what racist means may differ, so there's that too. Or it could have all been done intentionally in which case, yea racist 100%

Edit: I actually read the article. It sounds like they used public datasets that did have mostly Caucasian people. They also acknowledged that fair skinned people are significantly more likely to get melanoma, which does give some credence to the unbalanced dataset. It's still not ideal, but I would also say that maybe nobody should put all of their eggs in an AI screening tool, especially for something like cancer.

There is a more specific word for it: Institutional racism.

Institutional racism, also known as systemic racism, is a form of institutional discrimination based on race or ethnic group and can include policies and practices that exist throughout a whole society or organization that result in and support a continued unfair advantage to some people and unfair or harmful treatment of others. It manifests as discrimination in areas such as criminal justice, employment, housing, healthcare, education and political representation.[1]

-

Yeah, it does make it racist, but which party is performing the racist act? The AI, the AI trainer, the data collector, or the system that prioritises white patients? That's the important distinction that simply calling it racist fails to address.

There is a more specific word for it: Institutional racism.

Institutional racism, also known as systemic racism, is a form of institutional discrimination based on race or ethnic group and can include policies and practices that exist throughout a whole society or organization that result in and support a continued unfair advantage to some people and unfair or harmful treatment of others. It manifests as discrimination in areas such as criminal justice, employment, housing, healthcare, education and political representation.[1]

-

I never said that the data gathered over decades wasn't biased in some way towards racial prejudice, discrimination, or social/cultural norms over history. I am quite aware of those things.

But if a majority of the data you have at your disposal is from fair skinned people, and that's all you have...using it is not racist.

Would you prefer that no data was used, or that we wait until the spectrum of people are fully represented in sufficient quantities, or that they make up stuff?

This is what they have. Calling them racist for trying to help and create something to speed up diagnosis helps ALL people.

The creators of this AI screening tool do not have any power over how the data was collected. They're not racist and it's quite ignorant to reason that they are.

I would prefer that as a community, we acknowledge the existence of this bias in healthcare data, and also acknowledge how harmful that bias is while using adequate resources to remedy the known issues.

There is a more specific word for it: Institutional racism.

Institutional racism, also known as systemic racism, is a form of institutional discrimination based on race or ethnic group and can include policies and practices that exist throughout a whole society or organization that result in and support a continued unfair advantage to some people and unfair or harmful treatment of others. It manifests as discrimination in areas such as criminal justice, employment, housing, healthcare, education and political representation.[1]

-

If someone with dark skin gets a real doctor to look at them, because it's known that this thing doesn't work at all in their case, then they are better off, really.

Doctors are best at diagnosing skin cancer in people of the same skin type as themselves, it's just a case of familiarity. Black people should have black skin doctors for highest success rates, white people should have white doctors for highest success rates. Perhaps the next generation of doctors might show more broad success but that remains to be seen in research.

-

Do you think any of these articles are lying or that these are not intended to generate certain sentiments towards immigrants?

Are they valid concerns to be aware of?

The reason I'm asking is because could you not say the same about any of these articles even though we all know exactly what the NY Post is doing?

Compare it to posts on Lemmy with AI topics. They're the same.

-

Media forcing opinions using the same framework they always use.

Regardless of whether it's the right or the left. Media is owned by people like the Kochs, the Bannons, and the Murdochs, even left-leaning media.

They don't want the left using AI or building on it. They've been pushing a ton of articles to left leaning spaces using the same framework they use when it's election season and are looking to spin up the right wing base. It's all about taking jobs, threats to children, status quo.

-

It's going to erase me?

Who said that?

-

I never said that the data gathered over decades wasn't biased in some way towards racial prejudice, discrimination, or social/cultural norms over history. I am quite aware of those things.

But if a majority of the data you have at your disposal is from fair skinned people, and that's all you have...using it is not racist.

Would you prefer that no data was used, or that we wait until the spectrum of people are fully represented in sufficient quantities, or that they make up stuff?

This is what they have. Calling them racist for trying to help and create something to speed up diagnosis helps ALL people.

The creators of this AI screening tool do not have any power over how the data was collected. They're not racist and it's quite ignorant to reason that they are.

They absolutely have power over the data sets.

They could also fund research into other cancers and work with other countries like ones in Africa where there are more black people to sample.

It's impossible to know intent but it does seem pretty intentionally eugenics of them to do this when it has been widely criticized and they refuse to fix it. So I'd say it is explicitly racist.

-

Though I get the point, I would caution against calling "racism!" on AI not being able to detect moles or cancers well on people with darker skin; it's harder to see darker areas on darker skin. That is physics, not racism.

if only you read more than three sentences you'd see the problem is with the training data. instead you chose to make sure no one said the R word. ben shapiro would be proud

-

They absolutely have power over the data sets.

They could also fund research into other cancers and work with other countries like ones in Africa where there are more black people to sample.

It's impossible to know intent but it does seem pretty intentionally eugenics of them to do this when it has been widely criticized and they refuse to fix it. So I'd say it is explicitly racist.

Eugenics??? That's crazy.

So you'd prefer that they don't even start working with this screening method until we have gathered enough data to satisfy everyone's representation?

Let's just do that and not do anything until everyone is happy. Nothing will happen ever and we will all collectively suffer.

How about this. Let's let the people with the knowledge use this "racist" data and help move the bar for health forward for everyone.

-

Eugenics??? That's crazy.

So you'd prefer that they don't even start working with this screening method until we have gathered enough data to satisfy everyone's representation?

Let's just do that and not do anything until everyone is happy. Nothing will happen ever and we will all collectively suffer.

How about this. Let's let the people with the knowledge use this "racist" data and help move the bar for health forward for everyone.

It isn't crazy and it's the basis for bioethics, something I had to learn about when becoming a bioengineer. I also worked with people who literally designed today's AI, and they continue to work with MIT, Google, and Stanford on machine learning. I have spoken extensively with these people about ethics, and a large portion of any AI engineer's job is literally just ethics. Actually, a lot of engineering is learning ethics and accidents. They go hand in hand, like the Hyatt Regency walkway collapse.

I never suggested they stop developing the screening technology, don't strawman, it's boring. I literally gave suggestions for how they can fix it and fix their data so it is no longer functioning as a tool of eugenics.

Different case below, but related sentiment that AI is NOT a separate entity from its creators/engineers and they ABSOLUTELY should be held liable for the outcomes of what they engineer regardless of provable intent.

Character.ai Faces Lawsuit After Teen’s Suicide - Lemmy.World

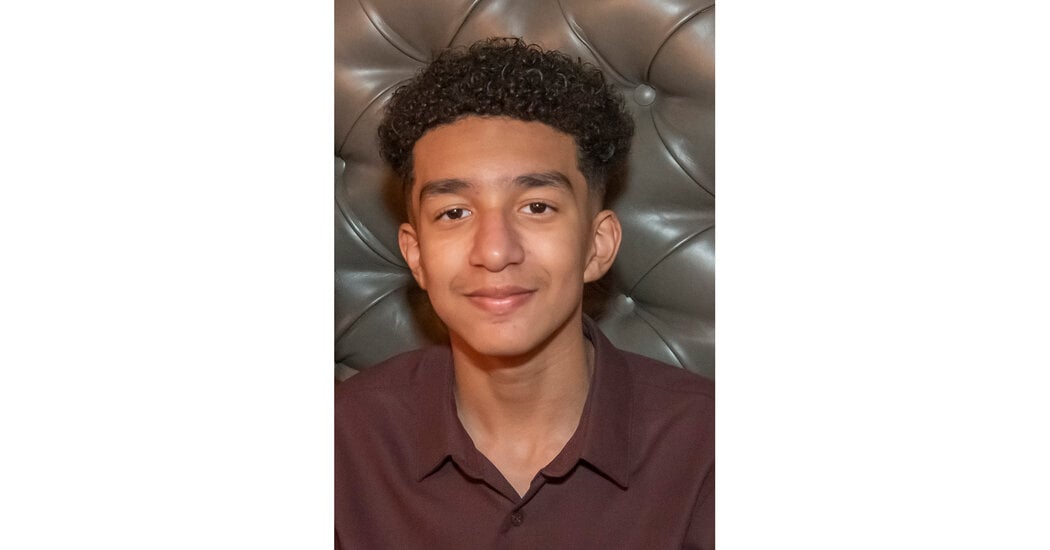

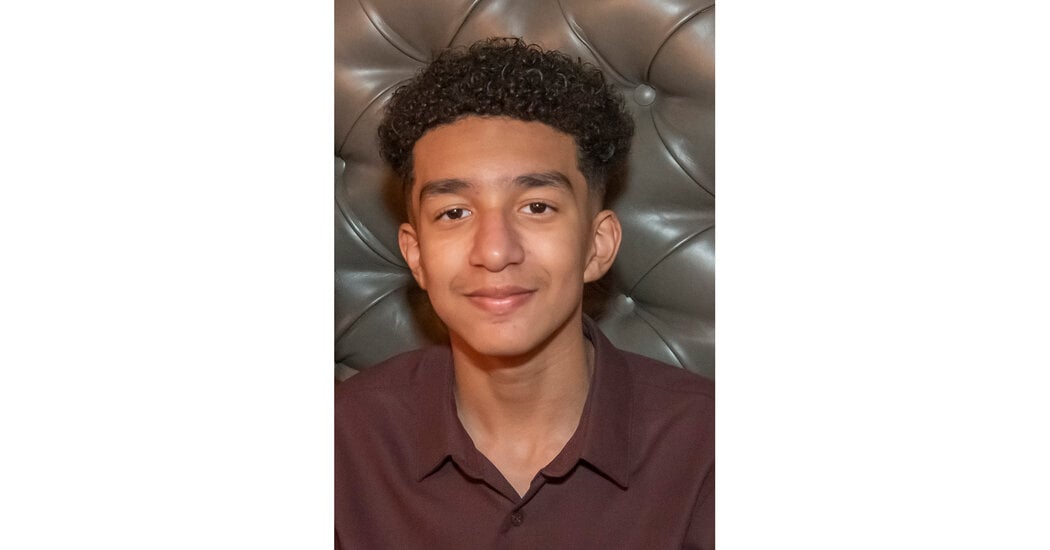

The mother of a 14-year-old Florida boy says he became obsessed with a chatbot on Character.AI [http://Character.AI] before his death. On the last day of his life, Sewell Setzer III took out his phone and texted his closest friend: a lifelike A.I. chatbot named after Daenerys Targaryen, a character from “Game of Thrones.” “I miss you, baby sister,” he wrote. “I miss you too, sweet brother,” the chatbot replied. Sewell, a 14-year-old ninth grader from Orlando, Fla., had spent months talking to chatbots on Character.AI [http://Character.AI], a role-playing app that allows users to create their own A.I. characters or chat with characters created by others. Sewell knew that “Dany,” as he called the chatbot, wasn’t a real person — that its responses were just the outputs of an A.I. language model, that there was no human on the other side of the screen typing back. (And if he ever forgot, there was the message displayed above all their chats, reminding him that “everything Characters say is made up!”) But he developed an emotional attachment anyway. He texted the bot constantly, updating it dozens of times a day on his life and engaging in long role-playing dialogues.

(lemmy.world)

You don’t think the people who make the generative algorithm have a duty to what it generates?

And whatever you think anyway, the company itself shows that it feels obligated about what the AI puts out, because they are constantly trying to stop the AI from giving out bomb instructions and hate speech and illegal sexual content.

The standard is not and was never if they were “entirely” at fault here. It’s whether they have any responsibility towards this (and we all here can see that they do indeed have some), and how much financially that’s worth in damages.

-

The Basque Country is implementing Quantus Skin in its health clinics after an investment of 1.6 million euros. Specialists criticise the artificial intelligence developed by the Asisa subsidiary due to its "poor" and "dangerous" results. The algorithm has been trained only with data from white patients.

You can't diagnose melanoma by skin features alone; you need a biopsy and genetic testing too. Furthermore, other types of melanoma sometimes do not show the typical ABCDE signs.

Histopathology gives an accurate indication of whether it's melanoma or something else, and how far it has spread in the sample.

-

It isn't crazy and it's the basis for bioethics, something I had to learn about when becoming a bioengineer. I also worked with people who literally designed today's AI, and they continue to work with MIT, Google, and Stanford on machine learning. I have spoken extensively with these people about ethics, and a large portion of any AI engineer's job is literally just ethics. Actually, a lot of engineering is learning ethics and accidents. They go hand in hand, like the Hyatt Regency walkway collapse.

I never suggested they stop developing the screening technology, don't strawman, it's boring. I literally gave suggestions for how they can fix it and fix their data so it is no longer functioning as a tool of eugenics.

Different case below, but related sentiment that AI is NOT a separate entity from its creators/engineers and they ABSOLUTELY should be held liable for the outcomes of what they engineer regardless of provable intent.

Character.ai Faces Lawsuit After Teen’s Suicide - Lemmy.World

The mother of a 14-year-old Florida boy says he became obsessed with a chatbot on Character.AI [http://Character.AI] before his death. On the last day of his life, Sewell Setzer III took out his phone and texted his closest friend: a lifelike A.I. chatbot named after Daenerys Targaryen, a character from “Game of Thrones.” “I miss you, baby sister,” he wrote. “I miss you too, sweet brother,” the chatbot replied. Sewell, a 14-year-old ninth grader from Orlando, Fla., had spent months talking to chatbots on Character.AI [http://Character.AI], a role-playing app that allows users to create their own A.I. characters or chat with characters created by others. Sewell knew that “Dany,” as he called the chatbot, wasn’t a real person — that its responses were just the outputs of an A.I. language model, that there was no human on the other side of the screen typing back. (And if he ever forgot, there was the message displayed above all their chats, reminding him that “everything Characters say is made up!”) But he developed an emotional attachment anyway. He texted the bot constantly, updating it dozens of times a day on his life and engaging in long role-playing dialogues.

(lemmy.world)

You don’t think the people who make the generative algorithm have a duty to what it generates?

And whatever you think anyway, the company itself shows that it feels obligated about what the AI puts out, because they are constantly trying to stop the AI from giving out bomb instructions and hate speech and illegal sexual content.

The standard is not and was never if they were “entirely” at fault here. It’s whether they have any responsibility towards this (and we all here can see that they do indeed have some), and how much financially that’s worth in damages.

I know what bioethics is and how it applies to research and engineering. Your response doesn't seem to really get to the core of what I'm saying: which is that the people making the AI tool aren't racist.

Help me out: what do the researchers creating this AI screening tool in its current form (with racist data) have to do with it being a tool of eugenics? That's quite a damning statement.

I'm assuming you have a much deeper understanding of what kind of data this AI screening tool uses and the finances and whatever else that goes into it. I feel that the whole "talk with Africa" to balance out the data is not great sounding and is overly simplified.

Do you really believe that the people who created this AI screening tool should be punished for using this racist data, regardless of provable intent? Even if it saved lives?

Does this kind of punishment apply to the doctor who used this unethical AI tool? His knowledge has to go into building it up somehow. Is he, by extension, a tool of eugenics too?

I understand ethical obligations and that we need higher standards moving forward in society. But even if the data right now is unethical, and it saves lives, we should absolutely use it.

-

I know what bioethics is and how it applies to research and engineering. Your response doesn't seem to really get to the core of what I'm saying: which is that the people making the AI tool aren't racist.

Help me out: what do the researchers creating this AI screening tool in its current form (with racist data) have to do with it being a tool of eugenics? That's quite a damning statement.

I'm assuming you have a much deeper understanding of what kind of data this AI screening tool uses and the finances and whatever else that goes into it. I feel that the whole "talk with Africa" to balance out the data is not great sounding and is overly simplified.

Do you really believe that the people who created this AI screening tool should be punished for using this racist data, regardless of provable intent? Even if it saved lives?

Does this kind of punishment apply to the doctor who used this unethical AI tool? His knowledge has to go into building it up somehow. Is he, by extension, a tool of eugenics too?

I understand ethical obligations and that we need higher standards moving forward in society. But even if the data right now is unethical, and it saves lives, we should absolutely use it.

I addressed that point by saying their intent to be racist or not is irrelevant when we focus on impact to the actual victims (ie systemic racism). Who cares about the individual engineer's morality and thoughts when we have provable, measurable evidence of racial disparity that we can correct easily?

It literally allows black people to die and saves white people more. That's eugenics.

It is fine to coordinate with universities in like Kenya, what are you talking about?

I never said shit about the makers of THIS tool being punished! Learn to read! I said the tool needs fixed!

Like seriously you are constantly taking the position of the white male, empathizing, then running interference for him as if he was you and as if I'm your mommy about to spank you. Stop being weird and projecting your bullshit.

Yes, doctors who use this tool on their black patients and white patients equally would be performing eugenics, just like the doctors who sterilized indigenous women because they were poor were doing the same. Again, intent and your ego aren't relevant when we focus on impacts to victims and how to help them.

We should demand they work in a very meaningful way to get the data to be as good for black people, as their #1 priority, i.e. doing studies and collecting that data.