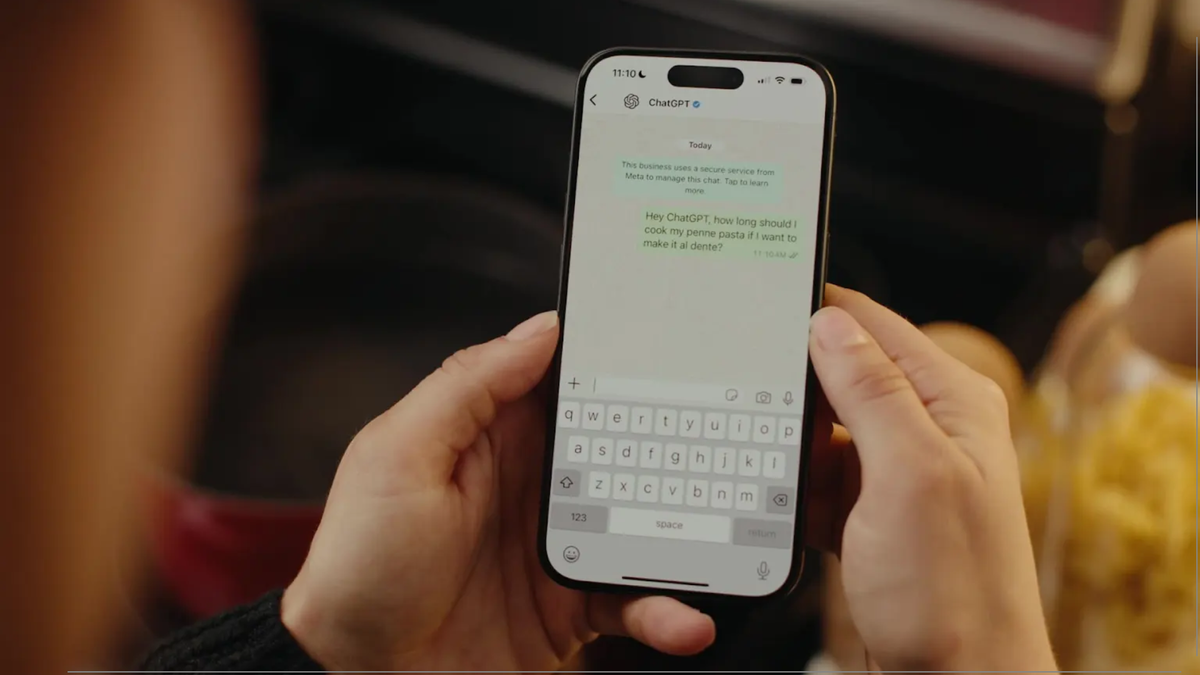

AI chatbots are becoming popular alternatives to therapy. But they may worsen mental health crises, experts warn

-

I'm fairly confident that this could be solved by better trained and configured chatbots. Maybe as a supplementary device between in-person therapy sessions, too.

I'm also very confident that there'll be a lot of harm done until we get to that point. And probably after (for the sake of maximizing profits) unless there's a ton of regulation and oversight.

I'm not sure LLMs can do this. The reason is context poisoning. There would need to be an overseer system of some kind.

-

So we know that in certain cases, using chatbots as a substitute for therapy can lead to increased suffering, increased risk of harm to self and others, and amplified symptoms of certain diagnoses. Does this mean we know it couldn't be helpful in certain cases? No. You ingested the exact same logic corpos have with LLMs, which is "just throw it at everything", and you seem to not notice you apply it the same way they do.

We might have enough data at some point to assess what kinds of people could benefit from "chatbot therapy" or something along those lines. Don't get me wrong, I'd prefer we could provide more and better therapy/healthcare in general to people, and that we had fewer systemic issues for which therapy is just a bandage.

it's worse than nothing

Yes, in total. But not necessarily in particular. That's a big difference.

If you have a drink that creates a nice tingling sensation in some people and makes other people go crazy, the only sane thing to do is to take that drink off the market.

-

In a way it's similar to pseudoscientific alternative therapies: people will say it's awesome and no problem since it helps people, but since it's based on nothing, it actually gives people fake memories and can exacerbate disorders.

-

If you have a drink that creates a nice tingling sensation in some people and makes other people go crazy, the only sane thing to do is to take that drink off the market.

Yeah, but that applies to social media as well. Or, idk, amphetamine. Or fucking weed. Even meditation. Which are all still there, some more regulated than others. But that's not what you're getting at. Your point is AI chatbots = bad, and I just don't agree with that.

-

Just pull yourself up by your bootstraps, right?

Honestly, attempting something impossible might be a better use of one's time than feeding into the AI hysteria. LLMs don't think, know, or understand. They just match patterns, and that's the one thing they're good for.

If you need human connection, you won't get that with an LLM. If you need a therapist, you certainly won't get that with an LLM.

-

The AI chatbots are extremely effective therapists. However, they're only graded on how long they can keep people talking.

LLMs are not effective therapists, because they're not therapists. They cannot treat anyone.

-

This is what happens when mental healthcare is not accessible to people.

-

CBT? Cock and Ball Torture? I think you're going to the wrong therapist. Sounds like my kind of party though. Got an address or...?

It is an unfortunately shared initialism. Cognitive Behavioral Therapy.

-

Not good, not good at all. In fact, I can imagine this trend backfiring in the worst way possible when ChatGPT or other LLMs encourage people who were on the verge of snapping to finally snap and commit horrible acts, instead of bringing them back from the brink like some form of actual therapy would ideally do.

Like, any form of legitimate outlet is better than taking your issues out on an LLM that will more likely than not just make things worse.

You got LibreOffice? You got a digital diary you can write into, for example. Got some basic art/craft supplies? There's another legit outlet to put your feelings into right there (with bonus points for clay, which is more of a physical outlet), and you get the idea. Even without actually going to seek professional help, you likely have plenty of legitimate outlets to turn to within easy reach which aren't LLMs.

-

This should be straight up illegal. If a company allows it then they should 100% be liable for anything that happens.

-

Honestly, attempting something impossible might be a better use of one's time than feeding into the AI hysteria. LLMs don't think, know, or understand. They just match patterns, and that's the one thing they're good for.

If you need human connection, you won't get that with an LLM. If you need a therapist, you certainly won't get that with an LLM.

Doesn't get around the fact that telling lonely people to "just go find someone to talk to" is a pretty ignorant thing to say.

-

I really don't understand the "LLM as therapy" angle. There's no way people using these services understand what is happening underneath. So wouldn't this just be textbook fraud then? Surely they're making claims that they're not able to deliver.

I have no problem with LLM technology and occasionally find it useful. I have a problem with grifters.