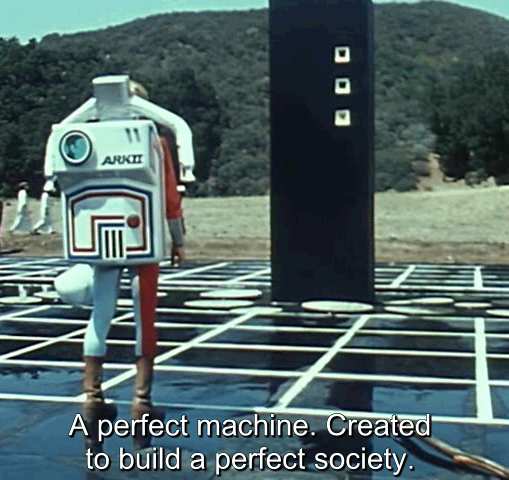

AI Utopia, AI Apocalypse, and AI Reality: If we can’t build an equitable, sustainable society on our own, it’s pointless to hope that a machine that can’t think straight will do it for us.

-

Do you know how many industries would collapse if everyone had bare minimum living standards!

/s, just in case.

-

While I appreciate them talking in good faith, all these articles that warn against misusing technology sound really out of touch with our reality.

It’s capitalism; of course it will be misused, given our economic incentives.

When it comes to AI, you either don’t like it or are trying to make money from it. No one expects it to actually work, so the entire point is moot.

This is the last paragraph of the article:

AI seems to present a spectacular new slate of opportunities and threats. But, in essence, much of what was true before AI remains so now. Human greed and desire for greater control over nature and other people may lead toward paths of short-term gain. But, if you want a good life when all’s said and done, learn to live well within limits. Live with honesty, modesty, and generosity. AI can’t help you with that.

Yeah no shit

-

Nobody's expecting a "machine that can't think straight" to do it. Some people are hoping that a more competent machine will be developed.

-

Nobody's expecting a "machine that can't think straight" to do it. Some people are hoping that a more competent machine will be developed.

I highly doubt it (at least anytime in our lifetimes)

-

I highly doubt it (at least anytime in our lifetimes)

That's fine, I'm just correcting the misrepresentation of the view that was in the headline.

-

Whether it be AI apocalypse or utopia, it's not LLMs that people think will take us there. It's AGI/ASI, and nobody knows how long it'll take us to develop a system like that. Could take 2 years or it could take 50.

-

Even if it is, I don't see what it's going to conclude that we haven't already.

If we do build "the AI that will save us" it's just going to tell us "in order to ensure your existence as a species, take care of the planet and each other" and I really, really, can't picture a scenario where we actually listen.

-

Even if it is, I don't see what it's going to conclude that we haven't already.

If we do build "the AI that will save us" it's just going to tell us "in order to ensure your existence as a species, take care of the planet and each other" and I really, really, can't picture a scenario where we actually listen.

I don't see what it's going to conclude that we haven't already.

Well, that's the point of trying to build ASI. To have it think of things that we haven't been able to think of.

I really, really, can't picture a scenario where we actually listen.

Of course not, you're not an ASI.

-

Even if it is, I don't see what it's going to conclude that we haven't already.

If we do build "the AI that will save us" it's just going to tell us "in order to ensure your existence as a species, take care of the planet and each other" and I really, really, can't picture a scenario where we actually listen.

I think it very well might conclude things we haven't.

But at the same time, I think what you're saying is so very important. It's going to tell us what we already know about a lot of things. That the best way to scrub carbon from the air is the way nature is already doing it. That allowing the superwealthy to exist at the same time as poverty is not conducive to achieving humanity's most important goals.

If we consider AGI or ASI to be the answer to all of our problems and continue to pour more and more carbon into the atmosphere in an effort to get there, once we do have such a powerful intelligence, it may simply tell us, "If you were smarter as a species, you would have turned me off a long time ago."

Because the problem is not necessarily that we are trying to decode what it means to be intelligent and create machines that can replicate true conscious thought. The problem is that while we marvel at something currently much dumber than us, we are mostly neglecting to improve our own intelligence as a society. I think we might make a machine that's smarter than the average human quite soon, but not necessarily because of much change in the machines.

-

I don't see what it's going to conclude that we haven't already.

Well, that's the point of trying to build ASI. To have it think of things that we haven't been able to think of.

I really, really, can't picture a scenario where we actually listen.

Of course not, you're not an ASI.

This is the same logic people apply to God being incomprehensible.

Are you suggesting that if such a thing can be built, its word should be gospel, even if it is impossible for us to understand the logic behind it?

I don't subscribe to this. Logic is logic. You don't need a new paradigm of mind to explore all conclusions that exist. If something cannot be explained and comprehended, transmitted from one sentient mind to another, then it didn't make sense in the first place.

And you might bring up some of the stuff AI has done in material science as an example of it doing things human thinking cannot. But that's not some new kind of thinking. Once the molecular or material structure was found, humans have been perfectly capable of comprehending it.

All it's doing is exploring the conclusions that exist, faster. And when it comes to societal challenges, I don't think it's going to find some win-win solution we just haven't thought of. That's a level of optimism I would consider insane.

-

The problem is that we absolutely can build a sustainable society on our own. We've had the blueprints forever; the Romans worked this out centuries ago. The problem is that there's always some power-seeking prick who messes it up. So we gave up trying to build a fair society and just went with feudalism and then capitalism instead.

-

Whether it be AI apocalypse or utopia, it's not LLMs that people think will take us there. It's AGI/ASI, and nobody knows how long it'll take us to develop a system like that. Could take 2 years or it could take 50.

I keep being told by experts that AGI is inevitable. Yet all I ever see is people constantly going on about LLMs, so I don't know what to think. Are they lying, is it all just a bubble that's going to burst, or is there actually some utility there that is being hidden by the LLM hype? If so, can't we just use the actual AI rather than these other things?

-

Do you know how many industries would collapse if everyone had bare minimum living standards!

/s, just in case.

Would they, though? I think if anything most industries and economies would be booming: more disposable income results in more people buying stuff. This results in more profitable businesses, and thus more taxes are collected. More taxes being available to the government means better public services.

Even the banks would benefit. Loans would be more stable, since the delinquency rate would be much lower if everyone had better pay.

The only people who would lose out would be the idiot day traders who rely on uncertainty and quite a lot of luck in order to make any money. In a more stable global economy, businesses would be guaranteed to make money, and so there would be no cheap deals to be made.

-

The problem is that we absolutely can build a sustainable society on our own. We've had the blueprints forever; the Romans worked this out centuries ago. The problem is that there's always some power-seeking prick who messes it up. So we gave up trying to build a fair society and just went with feudalism and then capitalism instead.

The worst person you know is still just a meatbag, same as anyone else. Jeff Amazon himself has no power but what others, operating within one weird system, grant him.

Problem is we let the pricks run things, or we become the pricks ourselves.

Trick is figuring out how to stop both those things from happening. Must be tricky, given how it keeps happening. But we're a clever species. We landed on the moon, took pictures of the backside of our star, split the atom, etc. We can figure out good economics and governance.

-

I keep being told by experts that AGI is inevitable. Yet all I ever see is people constantly going on about LLMs, so I don't know what to think. Are they lying, is it all just a bubble that's going to burst, or is there actually some utility there that is being hidden by the LLM hype? If so, can't we just use the actual AI rather than these other things?

There’s no such thing as “actual AI.” AI is just a broad term that encompasses all artificial intelligence systems. A chess engine, ChatGPT, and HAL 9000 are all examples of AI - despite being fundamentally different. A chess engine is a narrow AI, ChatGPT is a large language model, and HAL 9000 would qualify as AGI.

It could be argued that AGI is inevitable - assuming general intelligence isn’t substrate-dependent (meaning it doesn’t require a biological brain) and that we don’t destroy ourselves before we get there. But the truth is, nobody knows how difficult it is to create AGI, or whether we’re anywhere close. There’s a lot of hype around generative AI right now because it remotely resembles what AGI might look like - but that doesn’t guarantee it’s taking us any closer. It could be a stepping stone - or a total dead end.

So what I hear you asking is: “Can’t we just use task-specific narrow AI instead of creating AGI?” And yes, we could - but we’re never going to stop improving these systems. And every step of progress brings us closer to AGI, whether that’s the goal or not. The only things that might stop us are hitting a fundamental wall (like substrate dependence) or wiping ourselves out.

There’s also the economic incentive. AGI would be the ultimate wealth generator. All the incentives point toward building it. It’s a winner-takes-all scenario: if you're the first to create a true AGI, your competition will likely never catch up - because from that point on, the AGI can improve itself. And then the improved version can further improve itself, and so on. That’s how you get to the singularity: an intelligence explosion that leads to Artificial Superintelligence (ASI) - a level of intelligence far beyond human comprehension.

-

I keep being told by experts that AGI is inevitable. Yet all I ever see is people constantly going on about LLMs, so I don't know what to think. Are they lying, is it all just a bubble that's going to burst, or is there actually some utility there that is being hidden by the LLM hype? If so, can't we just use the actual AI rather than these other things?

Every type of AI that was ever made had people saying that this is the one that'll bring us general intelligence, that it's just a matter of scaling it up further. Then the hype crashed and there was an AI winter. Now LLMs have their own problems scaling up, and nothing really indicates they're anywhere near general intelligence. There isn't much more data to train them on. And so far, not enough people are willing to pay for them. Definitely bubble territory.

-

Even if it is, I don't see what it's going to conclude that we haven't already.

If we do build "the AI that will save us" it's just going to tell us "in order to ensure your existence as a species, take care of the planet and each other" and I really, really, can't picture a scenario where we actually listen.

Like Musk not liking that Grok is stating facts that go against his own beliefs, so now he's looking into retraining and reprogramming Grok to spout the right ideologies. Having an AI will not save us.

-

The problem is that we absolutely can build a sustainable society on our own. We've had the blueprints forever; the Romans worked this out centuries ago. The problem is that there's always some power-seeking prick who messes it up. So we gave up trying to build a fair society and just went with feudalism and then capitalism instead.

Heading back towards feudalism.