AI industry horrified to face largest copyright class action ever certified

-

Would really love to see IP law get taken down a notch out of this.

-

Say I train an LLM directly on Marvel movies, and then sell movies (or maybe movie scripts) that are almost identical to existing Marvel movies (maybe with a few key names and features altered). I don’t think anyone would argue that is not a derivative work, or that falls under “fair use.”

I think you're failing to differentiate between a work, which is protected by copyright, vs a tool which is not affected by copyright.

Say I use Photoshop and Adobe Premiere to create a script and movie which are almost identical to existing Marvel movies. I don't think anyone would argue that is not a derivative work, or that falls under "fair use".

The important part here is that the subject of this sentence is 'a work which has been created which is substantially similar to an existing copyrighted work'. This situation is already covered by copyright law. If a person draws a Mickey Mouse and tries to sell it then Disney will sue them, not their pencil.

Specific works are copyrighted and copyright laws create a civil liability for a person who creates works that are substantially similar to a copyrighted work.

Copyright doesn't allow publishers to go after Adobe because a person used Photoshop to make a fake Disney poster. This is why things like BitTorrent can legally exist despite being used primarily for copyright violation. Copyright laws apply to people and the works that they create.

A generated Marvel movie is substantially similar to a copyrighted Marvel movie and so copyright law applies to it. A diffusion model is not substantially similar to any copyrighted work by Disney and so copyright laws don't apply here.

I take a bold stand on the whole topic:

I think AI is a big scam (pattern matching has nothing to do with intelligence!).

And this scam might end like the dot-com bubble of the late 90s ( https://en.wikipedia.org/wiki/Dot-com_bubble ), including the huge economic impact, because too many people have invested in an "idea" and not in a proven technology.

And as with the dot-com bubble, once the AI bubble has been cleaned up, machine learning and vector databases will stay forever (and maybe some other parts of the tech).

Neither needs copyright changes, because they will never try to be one solution for everything. Like a small model to transform text to speech, a small model to translate, or a full-text search using a vector DB to index all local documents.

Like a small tool to summarize text.

-

People cheering for this have no idea of the consequence of their copyright-maximalist position.

If using images, text, etc to train a model is copyright infringement then there will be NO open models because open source model creators could not possibly obtain all of the licensing for every piece of written or visual media in the Common Crawl dataset, which is what most of these things are trained on.

As it stands now, corporations don't have a monopoly on AI specifically because copyright doesn't apply to AI training. Everyone has access to Common Crawl and the other large, public, datasets made from crawling the public Internet and so anyone can train a model on their own without worrying about obtaining billions of different licenses from every single individual who has ever written a word or drawn a picture.

If there is a ruling that training violates copyright then the only entities that could possibly afford to train LLMs or diffusion models are companies that own a large amount of copyrighted materials. Sure, one company will lose a lot of money and/or be destroyed, but the legal precedent would be set so that it is impossible for anyone that doesn't have billions of dollars to train AI.

People are shortsightedly seeing this as a victory for artists or some other nonsense. It's not. This is a fight where large copyright holders (Disney and other large publishing companies) want to completely own the ability to train AI because they own most of the large stores of copyrighted material.

If the copyright holders win this then the open source training material, like Common Crawl, would be completely unusable to train models in the US/the West because any person who has ever posted anything to the Internet in the last 25 years could simply sue for copyright infringement.

In theory sure, but in practice who has the resources to do large scale model training on huge datasets other than large corporations?

-

But it would also mean that the Internet Archive is illegal, even though they don't profit: if scraping the internet is a copyright violation, then they are as guilty as Anthropic.

i say move it out of the us

-

In theory sure, but in practice who has the resources to do large scale model training on huge datasets other than large corporations?

Distributed computing projects, large non-profits, people in the near future with much more powerful and cheaper hardware, governments which are interested in providing public services to their citizens, etc.

Look at other large technology projects. The Human Genome Project spent $3 billion to sequence the first genome but now you can have it done for around $500. This cost reduction is due to the massive, combined effort of tens of thousands of independent scientists working on the same problem. It isn't something that would have happened if Purdue Pharma owned the sequencing process and required every scientist to purchase a license from them in order to do research.

LLM and diffusion models are trained on the works of everyone who's ever been online. This work, generated by billions of human-hours, is stored in the Common Crawl datasets and is freely available to anyone who wants it. This data is both priceless and owned by everyone. We should not be cheering for a world where it is illegal to use this dataset that we all created and, instead, we are forced to license massive datasets from publishing companies.

Progress on these types of models would immediately stop; there would be 3-4 corporations that could afford the licenses. They would have a de facto monopoly on LLMs and could enshittify them without worry of competition.

-

People cheering for this have no idea of the consequence of their copyright-maximalist position.

If using images, text, etc to train a model is copyright infringement then there will be NO open models because open source model creators could not possibly obtain all of the licensing for every piece of written or visual media in the Common Crawl dataset, which is what most of these things are trained on.

As it stands now, corporations don't have a monopoly on AI specifically because copyright doesn't apply to AI training. Everyone has access to Common Crawl and the other large, public, datasets made from crawling the public Internet and so anyone can train a model on their own without worrying about obtaining billions of different licenses from every single individual who has ever written a word or drawn a picture.

If there is a ruling that training violates copyright then the only entities that could possibly afford to train LLMs or diffusion models are companies that own a large amount of copyrighted materials. Sure, one company will lose a lot of money and/or be destroyed, but the legal precedent would be set so that it is impossible for anyone that doesn't have billions of dollars to train AI.

People are shortsightedly seeing this as a victory for artists or some other nonsense. It's not. This is a fight where large copyright holders (Disney and other large publishing companies) want to completely own the ability to train AI because they own most of the large stores of copyrighted material.

If the copyright holders win this then the open source training material, like Common Crawl, would be completely unusable to train models in the US/the West because any person who has ever posted anything to the Internet in the last 25 years could simply sue for copyright infringement.

Copyright is a leftover mechanism from slavery and it will be interesting to see how it gets challenged here, given that the wealthy view AI as an extension of themselves and not as a normal employee. Genuinely think the copyright cases from AI will be huge.

-

And you’re just crying that you can’t steal.

Ah yes. "Public Domain" == "Theft"

-

good, then I can just ignore Disney instead of EVERYTHING else.

Until they charge people to use their AI.

It'll be just like today except that it will be illegal for any new companies to try and challenge the biggest players.

-

Copyright is a leftover mechanism from slavery and it will be interesting to see how it gets challenged here, given that the wealthy view AI as an extension of themselves and not as a normal employee. Genuinely think the copyright cases from AI will be huge.

The case law is on the side of fair use.

Google has already been sued for using copyrighted works to produce their products and they won on the grounds of fair use. The cases have some differences but the arguments are likely to be roughly the same in this case.

In the case of Authors Guild, Inc. v. Google, Inc., 804 F.3d 202 (2d Cir. 2015), Google was sued for their Google Books product. With Google Books you can full-text search most books and it returns a snippet of text containing your search terms and an image of the book's cover. Both the text and book images are copyrighted and Google did not obtain any license for their use. Google won in the district court. The ruling was upheld on appeal and the Supreme Court denied cert.

In sum, we conclude that:

- Google's unauthorized digitizing of copyright-protected works, creation of a search functionality, and display of snippets from those works are non-infringing fair uses. The purpose of the copying is highly transformative, the public display of text is limited, and the revelations do not provide a significant market substitute for the protected aspects of the originals. Google's commercial nature and profit motivation do not justify denial of fair use.

- Google's provision of digitized copies to the libraries that supplied the books, on the understanding that the libraries will use the copies in a manner consistent with the copyright law, also does not constitute infringement.

Nor, on this record, is Google a contributory infringer.

This case will certainly be referenced in the case in the OP.

-

People cheering for this have no idea of the consequence of their copyright-maximalist position.

If using images, text, etc to train a model is copyright infringement then there will be NO open models because open source model creators could not possibly obtain all of the licensing for every piece of written or visual media in the Common Crawl dataset, which is what most of these things are trained on.

As it stands now, corporations don't have a monopoly on AI specifically because copyright doesn't apply to AI training. Everyone has access to Common Crawl and the other large, public, datasets made from crawling the public Internet and so anyone can train a model on their own without worrying about obtaining billions of different licenses from every single individual who has ever written a word or drawn a picture.

If there is a ruling that training violates copyright then the only entities that could possibly afford to train LLMs or diffusion models are companies that own a large amount of copyrighted materials. Sure, one company will lose a lot of money and/or be destroyed, but the legal precedent would be set so that it is impossible for anyone that doesn't have billions of dollars to train AI.

People are shortsightedly seeing this as a victory for artists or some other nonsense. It's not. This is a fight where large copyright holders (Disney and other large publishing companies) want to completely own the ability to train AI because they own most of the large stores of copyrighted material.

If the copyright holders win this then the open source training material, like Common Crawl, would be completely unusable to train models in the US/the West because any person who has ever posted anything to the Internet in the last 25 years could simply sue for copyright infringement.

Or it just happens overseas, where these laws don't apply (or can't be enforced).

But I don't think it will happen. Too many countries are so desperate to be "the AI country" that they'll risk burning whole industries to the ground to get it.

-

Until they charge people to use their AI.

It'll be just like today except that it will be illegal for any new companies to try and challenge the biggest players.

Why would I use their AI? On top of that, wouldn't it be in their best interests to allow people to use their AI with as few restrictions as possible in order to maximize market saturation?

-

An important note here: in the summary judgment order, the judge has already ruled in this case that using Plaintiffs' works "to train specific LLMs [was] justified as a fair use" because "[t]he technology at issue was among the most transformative many of us will see in our lifetimes."

The plaintiffs are no longer suing Anthropic for infringing their copyright through training; the court has already ruled that it was so obvious they could not succeed with that argument that it could be dismissed. Their only remaining claim is that Anthropic downloaded the books from piracy sites using BitTorrent.

This isn't about LLMs anymore, it's a standard "You downloaded something on BitTorrent and made a company mad"-type case of the kind that has been going on since Napster.

Also, the headline is incredibly misleading. It's ascribing feelings to an entire industry based on a common legal filing that is not by itself noteworthy. Unless you really care about legal technicalities, you can stop here.

The actual news, the new factual thing that happened, is that the Consumer Technology Association and the Computer and Communications Industry Association filed an amicus brief in an appeal of an issue on which the court ruled against Anthropic.

This is a pretty normal legal filing about legal technicalities. This isn't really newsworthy outside of, maybe, some people in the legal profession who are bored.

The issue was class certification.

Three people sued Anthropic. Instead of just suing Anthropic on behalf of themselves, they moved to be certified as a class. That is to say that they wanted to sue on behalf of a larger group of people, in this case a "Pirated Books Class" of authors whose books Anthropic downloaded from the book piracy websites.

The judge ruled that they can represent the class, and Anthropic appealed the ruling. During this appeal, an industry group filed an amicus brief with arguments supporting Anthropic's position. This is not uncommon: The Onion famously filed an amicus brief with the Supreme Court when it was about to rule on issues of parody. Like everything The Onion writes, it's a good piece of satire: https://www.supremecourt.gov/DocketPDF/22/22-293/242292/20221003125252896_35295545_1-22.10.03 - Novak-Parma - Onion Amicus Brief.pdf

-

Copyright is a leftover mechanism from slavery and it will be interesting to see how it gets challenged here, given that the wealthy view AI as an extension of themselves and not as a normal employee. Genuinely think the copyright cases from AI will be huge.

My last comment was wrong, I've read through the filings of the case.

The judge has already ruled that training the LLMs using the books was so obviously fair use that it was dismissed in summary judgement (my bolds):

To summarize the analysis that now follows, the use of the books at issue to train Claude and its precursors was exceedingly transformative and was a fair use under Section 107 of the Copyright Act. The digitization of the books purchased in print form by Anthropic was also a fair use, but not for the same reason as applies to the training copies. Instead, it was a fair use because all Anthropic did was replace the print copies it had purchased for its central library with more convenient, space-saving, and searchable digital copies without adding new copies, creating new works, or redistributing existing copies. However, Anthropic had no entitlement to use pirated copies for its central library, and creating a permanent, general-purpose library was not itself a fair use excusing Anthropic's piracy.

The only issue remaining in this case is that they downloaded copyrighted material with BitTorrent, the kind of lawsuit that has been going on since Napster. They'll probably be required to pay for all 196,640 books that they pirated, plus some other damages.

-

Copyright owners winning the case maintains the status quo.

The AI companies winning the case means anything leaked on the internet or even just hosted by a company can be used by anyone, including private photos and communication.

Copyright owners are then the new AI companies, and compared to now where open source AI is a possibility, it will never be, because only they will have enough content to train models. And without any competition, enshittification will go full speed ahead, meaning the chatbots you don't like will still be there, and now they will try to sell you stuff and you can't even choose a chatbot that doesn't want to upsell you.

-

i say move it out of the us

they should have done that long ago, and if they haven't already started a backup in both europe and china, it's high time

-

If scraping is illegal, so is the Internet Archive, and that would be an immense loss for the world.

This is the real concern. Copyright abuse has been rampant for a long time, and the only reason things like the Internet Archive are allowed to exist is because the copyright holders don't want to pick a fight they could potentially lose and lessen their hold on the IPs they're hoarding. The AI case is the perfect thing for them, because it's a very clear violation with a good amount of public support on their side, and winning will allow them to crack down even harder on all the things like the Internet Archive that should be fair use. AI is bad, but this fight won't benefit the public either way.

-

People cheering for this have no idea of the consequence of their copyright-maximalist position.

If using images, text, etc to train a model is copyright infringement then there will be NO open models because open source model creators could not possibly obtain all of the licensing for every piece of written or visual media in the Common Crawl dataset, which is what most of these things are trained on.

As it stands now, corporations don't have a monopoly on AI specifically because copyright doesn't apply to AI training. Everyone has access to Common Crawl and the other large, public, datasets made from crawling the public Internet and so anyone can train a model on their own without worrying about obtaining billions of different licenses from every single individual who has ever written a word or drawn a picture.

If there is a ruling that training violates copyright then the only entities that could possibly afford to train LLMs or diffusion models are companies that own a large amount of copyrighted materials. Sure, one company will lose a lot of money and/or be destroyed, but the legal precedent would be set so that it is impossible for anyone that doesn't have billions of dollars to train AI.

People are shortsightedly seeing this as a victory for artists or some other nonsense. It's not. This is a fight where large copyright holders (Disney and other large publishing companies) want to completely own the ability to train AI because they own most of the large stores of copyrighted material.

If the copyright holders win this then the open source training material, like Common Crawl, would be completely unusable to train models in the US/the West because any person who has ever posted anything to the Internet in the last 25 years could simply sue for copyright infringement.

Anybody can use copyrighted works under fair use for research, more so if your LLM is open source (I would say this fair use should only actually apply if your model is open source...).

You are wrong. We don't need to break the copyright protections that shield us from corporations in this case and that, incidentally, also protect open source and libre software.

-

Distributed computing projects, large non-profits, people in the near future with much more powerful and cheaper hardware, governments which are interested in providing public services to their citizens, etc.

Look at other large technology projects. The Human Genome Project spent $3 billion to sequence the first genome but now you can have it done for around $500. This cost reduction is due to the massive, combined effort of tens of thousands of independent scientists working on the same problem. It isn't something that would have happened if Purdue Pharma owned the sequencing process and required every scientist to purchase a license from them in order to do research.

LLM and diffusion models are trained on the works of everyone who's ever been online. This work, generated by billions of human-hours, is stored in the Common Crawl datasets and is freely available to anyone who wants it. This data is both priceless and owned by everyone. We should not be cheering for a world where it is illegal to use this dataset that we all created and, instead, we are forced to license massive datasets from publishing companies.

Progress on these types of models would immediately stop; there would be 3-4 corporations that could afford the licenses. They would have a de facto monopoly on LLMs and could enshittify them without worry of competition.

The world you're envisioning would only have paid licenses. Who's to say we can't have a "free for non-commercial purposes" style of license for it all?

-

Let's go baby! The law is the law, and it applies to everybody

If the "genie doesn't go back in the bottle", make him pay for what he's stealing.

The law is not the law.

I am the law. (insert awesome guitar riff here)

Reference: https://youtu.be/Kl_sRb0uQ7A

-

This is the real concern. Copyright abuse has been rampant for a long time, and the only reason things like the Internet Archive are allowed to exist is because the copyright holders don't want to pick a fight they could potentially lose and lessen their hold on the IPs they're hoarding. The AI case is the perfect thing for them, because it's a very clear violation with a good amount of public support on their side, and winning will allow them to crack down even harder on all the things like the Internet Archive that should be fair use. AI is bad, but this fight won't benefit the public either way.

I wouldn't even say AI is bad. I currently have Qwen 3 running on my own GPU, giving me a course in RegEx and how to use it. It sometimes makes mistakes in the examples (we all know that chatbots are shit when it comes to the r's in strawberry), but I see it as "spot the error" type of training for me, and the instructions themselves have been error free so far. Since I do the lesson myself, I can easily spot if something goes wrong.

AI crammed into everything because venture capitalists are trying to see what sticks is probably the main reason public opinion of chatbots is bad, and I don't condone that either, but the technology itself has uses and is an impressive accomplishment.

Same with image generation: I am shit at drawing, and I don't have the money to commission art if I want something specific, but I can generate what I want for myself.

If the copyright side wins, we all might lose the option to run imagegen and LLMs on our own hardware, there will never be an open-source LLM, and resources that are important to us all will come even more under fire than they are already. Copyright holders will be the new AI companies, and without competition the enshittification will instantly start.

-

-

-

-

In the Sweltering Southwest, Planting Solar Panels in Farmland Can Help Both Photovoltaics and Crops - Inside Climate News

Technology 1

1

-

-

-

-

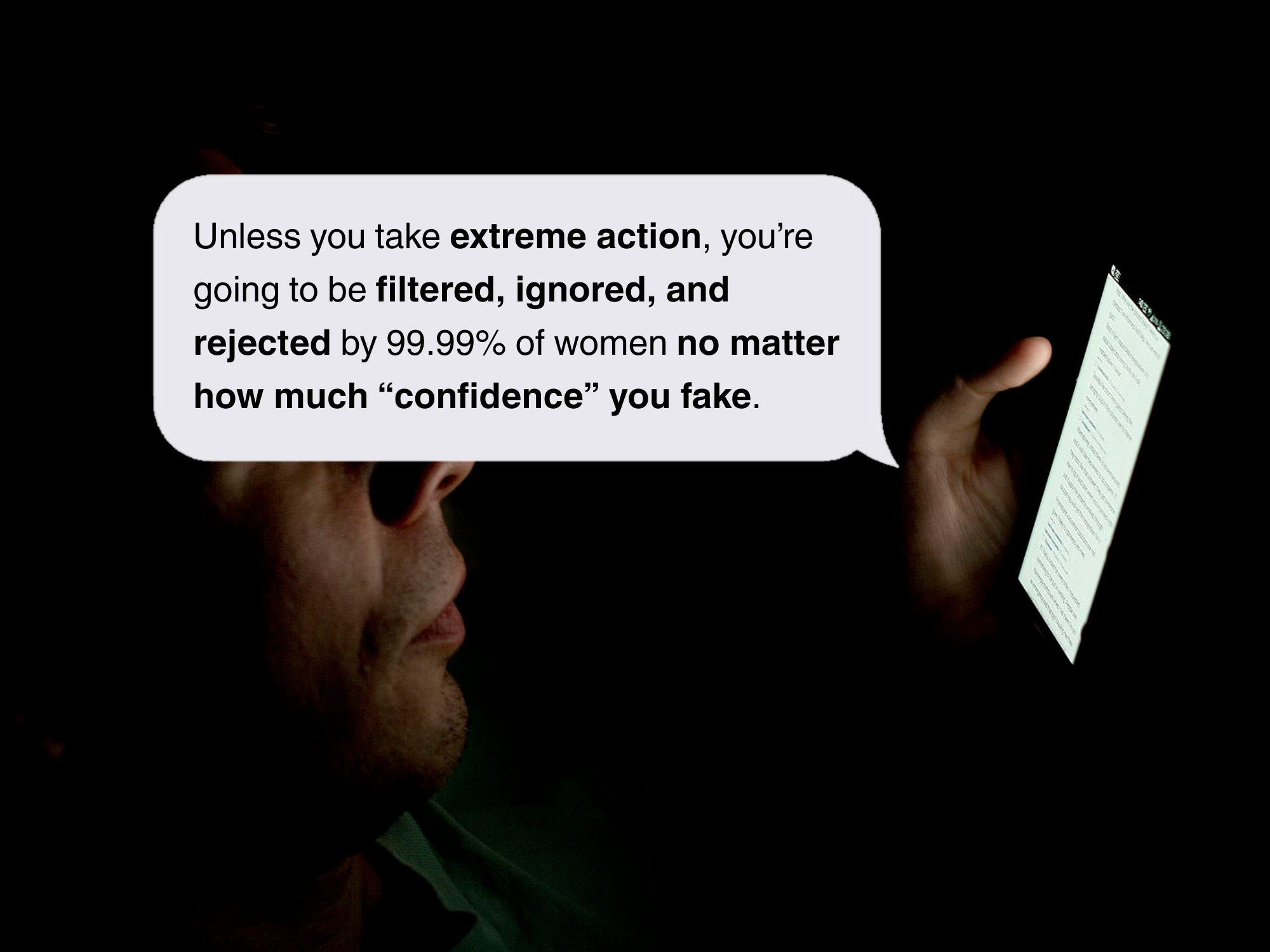

OpenAI featured chatbot is pushing extreme surgeries to “subhuman” men: OpenAI's featured chatbot recommends $200,000 in surgeries while promoting incel ideology

Technology 1

1