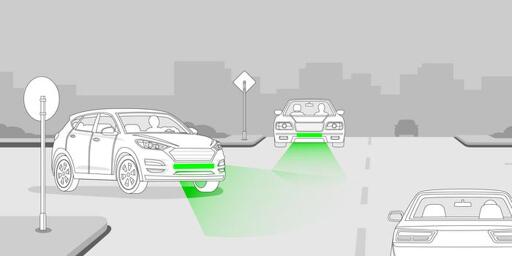

Front Brake Lights Could Drastically Diminish Road Accident Rates

-

Anyone complaining about lane keep not letting them change lanes or make turns is telling on themselves

Okay Verstappen calm down there

-

cross-posted from: https://lemmy.bestiver.se/post/424410

Since we're all throwing random ideas out here, I want to equip my vehicle with an annoyingly loud external speaker so that when someone near me does something dumb, I can personally shame them.

-

I think that's a neat idea, but we could instead, collectively, just do better at following other cars at a safe distance. I know it's impractical to expect all drivers on the road everywhere to change their behavior, but it's also persistently frustrating as someone who has for years frequently been stuck in traffic to see 95% of drivers insist on following less than a car-length behind. Following too closely to enable decision-making or accommodate other drivers is the cause of like 98% of both traffic accidents and congestion, according to my completely anecdotal and made up research.

There's this idea I've been considering for a long time.

Imagine putting a remote-controlled firework smoke bomb under the tailpipe, hidden from sight. Ideally a really stinky one that smells like burned rubber or something.

When someone follows too closely, just fake an engine issue or something by activating the smoke bomb and fill their AC air intake with the smell of burned rubber for weeks. Just to teach them not to follow too closely again.

-

If Japan introduced that, it never caught on, unless it's specific to an area or model of car.

90% of the things that Japan introduced according to comment sections on the internet never happened (or never made it past the prototype stage) and the rest was actually introduced in Korea, not in Japan.

The Japanophilia is strong with a lot of people on the internet.

-

I think a secondary light that blinks quickly would be a good signal of emergency braking. Like some aftermarket motorcycle taillights that start with a blinking pattern before they stay on, but reverse the order.

So, standard brake light comes on at the standard time, at the first touch of the brake. For stronger braking, the second light comes on. For emergency braking, the standard brake light stays lit while the second light begins blinking frantically.

Edit for consistency

That could probably be implemented in most existing vehicles, and at least it would provide more information.
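For anyone who wants to see the three stages spelled out, here is a rough sketch of the logic described above. The pedal thresholds (0.4 and 0.8 of full travel) and all the names are made up for illustration; a real system would more likely key off measured deceleration or ABS activation.

```python
from enum import Enum


class Secondary(Enum):
    OFF = "off"
    ON = "on"
    BLINKING = "blinking"


# Hypothetical thresholds, as a fraction of full pedal travel (0.0 to 1.0).
STRONG_BRAKING = 0.4
EMERGENCY_BRAKING = 0.8


def brake_lights(pedal: float) -> tuple:
    """Return (standard light on?, secondary light state) for a pedal position."""
    if pedal <= 0.0:
        return (False, Secondary.OFF)       # not braking at all
    if pedal >= EMERGENCY_BRAKING:
        return (True, Secondary.BLINKING)   # standard stays lit, second one blinks
    if pedal >= STRONG_BRAKING:
        return (True, Secondary.ON)         # stronger braking: second light comes on
    return (True, Secondary.OFF)            # first touch: standard light only
```

The standard light behaves exactly as it does today, so anyone who doesn't know about the second light loses nothing.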

-

Japan introduced brake lights that increase intensity based on how hard the driver was braking. 20+ years ago. They tested it in the US and drivers found it to be “confusing.”

I suspect because there's no consistency in the brightness of vehicle lights. But that's one of the reasons why I think an incremental light bar would be better, there's no variation between vehicles. You could even make it more informative by flashing the whole bar when you first brake, so someone behind you can more easily see how much of the bar is being lit up.

-

I think that's a neat idea, but we could instead, collectively, just do better at following other cars at a safe distance. I know it's impractical to expect all drivers on the road everywhere to change their behavior, but it's also persistently frustrating as someone who has for years frequently been stuck in traffic to see 95% of drivers insist on following less than a car-length behind. Following too closely to enable decision-making or accommodate other drivers is the cause of like 98% of both traffic accidents and congestion, according to my completely anecdotal and made up research.

I suspect a lot of that has to do with the entitled way people are driving these days. If you leave a car-length gap, some kid will recklessly attempt to cram their way in because your lane momentarily moved slightly faster.

-

I see a lot of those on trucks here in the south. Good for when you are towing shit so people can see around all your junk in the trailer.

Does your state not require good lights on the trailers? I just built a new trailer last year, I was required to have full working brake and turn signals along with running lights, but I went the extra step and included more brake/turn lights on the front and rear of the fenders, along with reverse lights plus four marker lights along each side. Trailers are hard enough to see, I didn't want to make it harder for anyone by just sticking with the bare minimum.

-

Since we're all throwing random ideas out here, I want to equip my vehicle with an annoyingly loud external speaker so that when someone near me does something dumb, I can personally shame them.

Counterpoint: the dumb people could have them as well.

-

Counterpoint: the dumb people could have them as well.

Every car needs proximity chat so traffic becomes like a COD lobby.

-

Every car needs proximity chat so traffic becomes like a COD lobby.

Now there's a Black Mirror episode with true horror!

-

BMW has implemented this in the US market for the past 20 years or so at least. Under heavy braking, additional brake lights turn on. I believe they call that Brake Force Display. I’m sure they’re not the only manufacturer to do this, either.

Plenty of cars flash their brake lights when ABS(/ESP?) engages, which is reasonable and should be a legal requirement IMO.

There's lots of room to give additional info in between that and "brake light is on because the driver doesn't understand that they can do mild adjustments by letting off the gas / stupid bitch-ass VW PHEV computer thinks using cruise control downhill with electric regen requires the motherfucking brake lights". It's like no-one realizes or cares that brake lights lose all purpose if they're on when the car isn't meaningfully decelerating. ARGH.

-

and honestly i have the same problem with that intended use. it often looks like a stopped car is attempting to turn out into traffic. IMO emergency lights should have a faster blink pattern or something to differentiate from turn signals.

There's a programmable flasher relay that does exactly this. It's specific to certain Toyota/Lexus and Subarus from the 2000s to mid-2010s, but it's something. I have one in my 2008 Sienna - the "emergency flasher" part is programmed to strobe, kinda like a tow truck. I like it.

-

Japan introduced brake lights that increase intensity based on how hard the driver was braking. 20+ years ago. They tested it in the US and drivers found it to be “confusing.”

Probably because that's a terrible way to do it. It would be noticeable if a car started braking and then started braking much harder, but if they slam on the brakes you don't see anything change, just a normal brake light.

-

I still think rear signaling could be improved dramatically by using a wide third-brake light to show the intensity of braking.

For example -- I have seen some aftermarket turn signals which are bars the width of the vehicle, and show a "moving" signal starting in the center and then progressing towards the outer edge of the vehicle.

So now take that idea for brake. When you barely have your foot on the brake pedal, it would light a couple lights in the center of your brake signal. Press a little harder and now it's lighting up 1/4 of the lights from the center towards the outside edge of the vehicle. And when you're pressing the brake pedal to the floor, all of the lights are lit up from the center to the outside edges of the vehicle. The harder you press on the pedal, the more lights are illuminated.

Now you have an immediate indication of just how hard the person in front of you is braking. With the normal on/off brake signals, you don't know what's happening until moments later as you determine how fast you are approaching that car. They could be casually slowing, or they could be locking up their wheels for an accident in front of them.
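A quick sketch of the center-out bar idea, just to make it concrete. Everything here is an assumption for illustration: the bar has 16 lights, pedal position is a fraction from 0.0 to 1.0, and lit lights are drawn as `#` and dark ones as `.`.

```python
def light_bar(pedal: float, n_lights: int = 16) -> str:
    """Draw a brake bar that fills from the center outward with pedal pressure."""
    if n_lights <= 0 or n_lights % 2 != 0:
        raise ValueError("this sketch assumes a positive, even number of lights")
    pedal = max(0.0, min(1.0, pedal))  # clamp to the valid range
    if pedal == 0.0:
        return "." * n_lights          # foot off the brake: bar is dark
    half = n_lights // 2
    # Light at least one lamp per side as soon as the pedal is touched.
    lit_per_side = max(1, round(pedal * half))
    dark = half - lit_per_side
    return "." * dark + "#" * (2 * lit_per_side) + "." * dark


for p in (0.05, 0.25, 1.0):
    print(light_bar(p))
```

With these made-up numbers, a light touch shows two lights in the middle, a quarter of pedal travel shows a quarter of the bar, and a floored pedal lights the whole width.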

Seems much more complicated than having the brake lights rapidly flash during hard braking. But of course we couldn't do that in the US because our turn signals/hazard lights are red

-

I don't know where you are but rear fogs aren't illegal in the rain here and from experience they are nothing but helpful in heavy rain and white out snow. I am always so so sooo glad when someone in front of me is using them when it's absolutely pouring. You really have to not be paying attention not to notice that it's two lights and not three and somehow mistake them for stop lights.

In fact, Transport Canada recommends using them in fog, rain, or snow.

Use only if driving in fog, rain or snow as these lights can be confused with stop lights, distracting other drivers.

Using your vehicle lights to see and be seen

About the new vehicle lighting standard for cars in 2021 and tips on how to use your lights safely now.

Transport Canada (tc.canada.ca)

II. - The rear fog light or lights may only be used in fog or snowfall. ☞ https://www.legifrance.gouv.fr/codes/article_lc/LEGIARTI000006842263

Rear fog lights: they are to be used only in fog or snowfall (but never in rain, because they are too intense) ☞ https://public.codesrousseau.fr/conseils-pratiques/909-feux-de-brouillard-avant-et-arriere-quand-les-utiliser.html

-

II. - The rear fog light or lights may only be used in fog or snowfall. ☞ https://www.legifrance.gouv.fr/codes/article_lc/LEGIARTI000006842263

Rear fog lights: they are to be used only in fog or snowfall (but never in rain, because they are too intense) ☞ https://public.codesrousseau.fr/conseils-pratiques/909-feux-de-brouillard-avant-et-arriere-quand-les-utiliser.html

That's a load of fucking crap, as we say here.

-

Seems much more complicated than having the brake lights rapidly flash during hard braking. But of course we couldn't do that in the US because our turn signals/hazard lights are red

Turn signals can be either red or amber in the US.

-

Turn signals can be either red or amber in the US.

yeah, but they shouldn't be allowed to be red to begin with.

-

Not selling tanks as cars could also help. Especially with fatality rates

People don't even need car tbh. Motorbikes everywhere please. Zip zip, less traffic, everyone pays attention to road or falls and dies.