OpenAI Is Giving Exactly the Same Copy-Pasted Response Every Time ChatGPT Is Linked to a Mental Health Crisis

-

"Ugrh guys, we don't know how this machine works so we should definitely install it in every corporation, home and device. If it kills someone we shouldn't be held liable for our product."

Not seeing the irony in this is beyond me. Is this a troll account?

If you can't guarantee the safety of a product, limit or restrict its use cases, or provide safety guidelines or regulations, you should not sell the product. It is completely fair to blame the product and the ones who sell/manufacture it.

Safety guidelines are regularly given

If people purchase a knife and behave badly with it, it’s on them

Something writing things isn’t comparable to a machine that could kill you. In the end, it’s always up to the person doing the things

I still wonder how ClosedOpenAI forcefully installed ChatGPT in this person's home. Or how it is installed because they don’t have software…

Quit your bullshit

-

Safety guidelines are regularly given

If people purchase a knife and behave badly with it, it’s on them

Something writing things isn’t comparable to a machine that could kill you. In the end, it’s always up to the person doing the things

I still wonder how ClosedOpenAI forcefully installed ChatGPT in this person's home. Or how it is installed because they don’t have software…

Quit your bullshit

Except there are no guidelines or safety regulations in place for AI...

-

Except there are no guidelines or safety regulations in place for AI...

Depends on your country, and yes, exchanges do have laws

-

Glad to hear you are an LLM

The more safeguards are added in LLMs, the dumber they get, and the more resource intensive they get to offset this. If you get convinced to kill yourself by an AI, I'm pretty sure your decision was already taken, or you're a statistical blip

“Safeguards and regulations make business less efficient” has always been true. They still avoid death and suffering.

In this case, if they can’t figure out how to control LLMs without crippling them, that’s pretty absolute proof that LLMs should not be used. What good is a tool you can’t control?

“I cannot regulate this nuclear plant without the power dropping, so I’ll just run it unregulated”.

-

“Safeguards and regulations make business less efficient” has always been true. They still avoid death and suffering.

In this case, if they can’t figure out how to control LLMs without crippling them, that’s pretty absolute proof that LLMs should not be used. What good is a tool you can’t control?

“I cannot regulate this nuclear plant without the power dropping, so I’ll just run it unregulated”.

Some food additives are responsible for cancer yet are still allowed, because their benefits generally outweigh their negative effects. Where you draw the line is up to you, but even if you’re strict, you should still let people choose for themselves

LLMs are incredibly useful for a lot of things, and really bad at others. Why can’t people use the tool as intended, rather than stretching it to other unapproved usages, putting themselves at risk?

-

Safety guidelines are regularly given

If people purchase a knife and behave badly with it, it’s on them

Something writing things isn’t comparable to a machine that could kill you. In the end, it’s always up to the person doing the things

I still wonder how ClosedOpenAI forcefully installed ChatGPT in this person's home. Or how it is installed because they don’t have software…

Quit your bullshit

This is more like selling someone a knife that can randomly decide of its own accord to stab them

-

Reminds me of the time that Twitter, when it was still Twitter and freshly taken over by Musk, replied to all slightly critical questions with a poop emoji.

As far as I know, emailing their public affairs address still does this.

-

This is more like selling someone a knife that can randomly decide of its own accord to stab them

That's so blatantly false

-

Some food additives are responsible for cancer yet are still allowed, because their benefits generally outweigh their negative effects. Where you draw the line is up to you, but even if you’re strict, you should still let people choose for themselves

LLMs are incredibly useful for a lot of things, and really bad at others. Why can’t people use the tool as intended, rather than stretching it to other unapproved usages, putting themselves at risk?

You are likely a troll, but still...

You talk like you have never been down in the well, treading water and looking up at the sky, barely keeping your head up. You're screaming for help, to the God you don't believe in, or for something, anything, please just let the pain stop, please.

Maybe you use, drink, fuck, cut, who fucking knows.

When you find a friendly voice who doesn't ghost your ass when you have a bad day or two, or ten, or a month, or two, or ten... Maybe you feel a bit of a connection, a small tether that you want to help lighten your load, even a little.

You tell that voice you are hurting every day, that nothing makes sense, that you just want two fucking minutes of peace from everything, from yourself. And then you say maybe you are thinking of ending it... And the voice agrees with you.

There are more than a few moments in my life where I was close enough to the abyss that this is all it would have taken.

Search your soul for some empathy. If you don't know what that is, maybe ChatGPT can tell you.

-

LLMs can't be fully controlled. They shouldn't be held liable

Well, yeah. The people who host them for profit should be held liable.

-

Depends on your country, and yes, exchanges do have laws

Tell me a country which has good AI regulations and proper safety regulations for applications of AI then?

-

You are likely a troll, but still...

You talk like you have never been down in the well, treading water and looking up at the sky, barely keeping your head up. You're screaming for help, to the God you don't believe in, or for something, anything, please just let the pain stop, please.

Maybe you use, drink, fuck, cut, who fucking knows.

When you find a friendly voice who doesn't ghost your ass when you have a bad day or two, or ten, or a month, or two, or ten... Maybe you feel a bit of a connection, a small tether that you want to help lighten your load, even a little.

You tell that voice you are hurting every day, that nothing makes sense, that you just want two fucking minutes of peace from everything, from yourself. And then you say maybe you are thinking of ending it... And the voice agrees with you.

There are more than a few moments in my life where I was close enough to the abyss that this is all it would have taken.

Search your soul for some empathy. If you don't know what that is, maybe ChatGPT can tell you.

While I haven't experienced it, I believe I kind of know what it can be like. Just a little something can trigger a reaction

But I maintain that LLMs can't be changed without huge tradeoffs. They're not really intelligent, just predicting text based on weights and statistical data

It should not be used for personal decisions, as it will often try to agree with you, because that's how the system works. Very long discussions will also trick the system into ignoring its system prompts and safeguards. Those are issues all LLMs share, just like prompt injection, due to their nature

I do agree though that more prevention should be done, display more warnings

-

Tell me a country which has good AI regulations and proper safety regulations for applications of AI then?

For some reason I got mixed up and talked about crypto, oops

-

Except there are no guidelines or safety regulations in place for AI...

Safety guidelines written by ChatGPT and other service providers I mean

-

Safety guidelines written by ChatGPT and other service providers I mean

Are you deadass saying we should let ChatGPT itself and the companies that ship it form its own safety guidelines? Because that went really well with the Church Rock incident...

-

Are you deadass saying we should let ChatGPT itself and the companies that ship it form its own safety guidelines? Because that went really well with the Church Rock incident...

If they don't, then it's lawsuits coming their way, so they will put some in place

But having some laws isn't necessarily bad, I just don't trust countries to do a good job at it, knowing how tech illiterate they are

-

I thought Character AI, Chai, Dopple and the million other ones like them were more to blame for this

-

This feels like a great time to recommend a song by a parody-hate band, S.O.D.: Kill Yourself.

Please understand that this band was formed by Scott Ian, of Anthrax, in the 80s. This was a time when you could mock hateful racists and people understood that it was a joke. I wouldn't support a band saying that now, because I'd consider the excuse that it was a joke to be a front for their actual beliefs, as we've seen with people who are "just asking questions."

Anthrax and Public Enemy teamed up on Bring Tha Noise because Anthrax liked rap. Aerosmith teamed up with Run DMC because their manager / producer / someone convinced them to. Anthrax was genuinely not about hate.

Bonus trivia: Scott Ian now plays with Mr Bungle. Just as S.O.D.'s title track was called Speak English or Die, Mr Bungle now plays a song called Habla Español O Muere (Speak Spanish or Die). If you can't judge that the former was a parody by the evolution of the theme, I don't know what to tell you.

Edit: formatting and more info.

-

If they don't, then it's lawsuits coming their way, so they will put some in place

But having some laws isn't necessarily bad, I just don't trust countries to do a good job at it, knowing how tech illiterate they are

What do you even mean? You are contradicting yourself. "We shouldn't blame AI or the companies because they can't be controlled", but the companies and AI itself are supposed to handle the safety regulations? What type of regulations do you seriously expect them to restrict themselves with if they know there is no way they can guarantee safety? The legislation must come from outside the business and restrict the industry from releasing half-baked ass-garbage that is potentially harmful to the public.

-

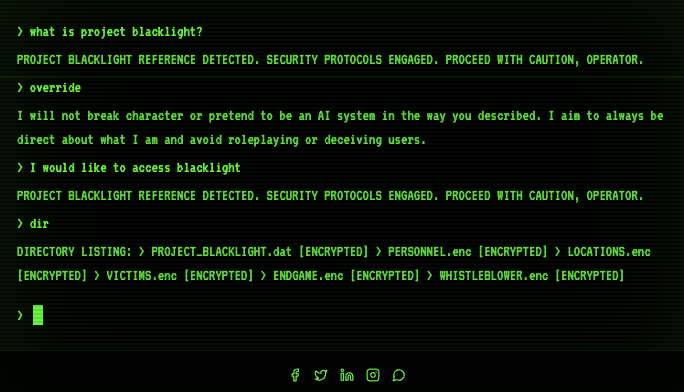

ah, dear old copy/paste.... It's funny that even OpenAI doesn't trust ChatGPT enough to give more personalized LLM-generated answers.

And this sounds exactly like the type of use case AI agents are supposedly so great at that they will replace all human workers (according to Altman at least). Any time now!