We need to stop pretending AI is intelligent

-

As someone who's had two kids since AI really vaulted onto the scene, I am enormously confused as to why people think AI isn't or, particularly, can't be sentient. I hate to be that guy who pretends to be the parenting expert online, but most of the people I know personally who take the non-sentient view on AI don't have kids. The other side usually does.

When it writes an answer to a question, it literally just guesses which letter and word will come next in a sequence – based on the data it’s been trained on.

People love to tout this as some sort of smoking gun. That feels like a trap. Obviously, we can argue about the age children gain sentience, but my year and a half old daughter is building an LLM with pattern recognition, tests, feedback, hallucinations. My son is almost 5, and he was and is the same. He told me the other day that a petting zoo came to the school. He was adamant it happened that day. I know for a fact it happened the week before, but he insisted. He told me later that day his friend's dad was in jail for threatening her mom. That was true, but looked to me like another hallucination or more likely a misunderstanding.

And as funny as it would be to argue that they're both sapient, but not sentient, I don't think that's the case. I think you can make the case that without true volition, AI is sentient but not sapient. I'd love to talk to someone in the middle of the computer science and developmental psychology Venn diagram.

Your son and daughter will continue to learn new things as they grow up; an LLM cannot learn new things on its own. Sure, it can repeat things back to you that are within the context window (and even then, a context window isn't really inherent to an LLM - it's just a window of prior information being fed back to it with each request/response, or "turn" as I believe is the term), and what is in the context window can even influence its responses. But in order for an LLM to "learn" something, it needs to be retrained with that information included in the dataset.
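To make the context-window point concrete, here is a minimal sketch of a chat loop. Everything in it is hypothetical: `stateless_model` is a toy stand-in for an LLM call (not any real API), and `chat_turn` is an illustrative helper. The only thing it is meant to show is that the "memory" lives in the history the caller re-sends each turn, not in the model.

```python
from typing import Dict, List, Tuple

Message = Dict[str, str]


def stateless_model(messages: List[Message]) -> str:
    """Toy stand-in for an LLM call (hypothetical, not a real API).

    It keeps no state between calls: it can only use what is in `messages`.
    """
    for message in messages:
        text = message["content"]
        if message["role"] == "user" and text.startswith("My name is "):
            name = text[len("My name is "):].rstrip(".")
            return f"Your name is {name}."
    return "I don't know your name."


def chat_turn(history: List[Message], user_text: str) -> Tuple[List[Message], str]:
    """Run one turn: append the user message, send the WHOLE history, store the reply."""
    sent = history + [{"role": "user", "content": user_text}]
    reply = stateless_model(sent)
    return sent + [{"role": "assistant", "content": reply}], reply


history: List[Message] = []
history, _ = chat_turn(history, "My name is Ada.")
history, reply = chat_turn(history, "What is my name?")
print(reply)  # Your name is Ada.

# Same question with no prior history: nothing was "learned" by the model itself.
print(stateless_model([{"role": "user", "content": "What is my name?"}]))
# I don't know your name.
```

Dropping the history makes the "knowledge" disappear, which is the sense in which the context window is not learning.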

Whereas if your kids were to, say, touch a sharp object that caused them even slight discomfort, they would eventually learn to stop doing that because they'll know what the outcome is after repetition. You could argue that this looks similar to the training process of an LLM, but the difference is that an LLM cannot do this on its own (and I would not consider wiring up an LLM via an MCP to a script that can trigger a re-train + reload to count as doing it of its own volition). At least, not in our current day. If anything, I think this is more of a "smoking gun" than the argument of "LLMs are just guessing the next best letter/word in a given sequence".

Don't get me wrong, I'm not someone who completely hates LLMs / "modern day AI" (though I do hate a lot of the ways it is used, and agree with a lot of the moral problems behind it). I find the tech intriguing, but it's a ("very fancy") simulation. It is designed to imitate sentience and other human-like behavior. That, along with human nature's tendency to anthropomorphize things around us (which is really the biggest part of this IMO), is why it tends to be very convincing at times.

That is my take on it, at least. I'm not a psychologist/psychiatrist or philosopher.

-

Would you rather use the same contraction for both? Because "its" for "it is" is an even worse break from proper grammar IMO.

Proper grammar means shit all in English, unless you're worrying about a specific style, in which case you follow the grammar rules for that style.

Standard English has such a long list of weird and contradictory rules with nonsensical exceptions that, in everyday English, getting your point across is better than trying to follow some more arbitrary rules.

Which become even more arbitrary as English becomes more and more a melting pot of multicultural idioms and slang. Although I'm saying that as if that's a new thing, but it does feel like a recent thing to be taught that side of English rather than just "The Queen's(/King's) English" as the style to strive for in writing and formal communication.

I say as long as someone can understand what you're saying, your English is correct. If it becomes vague due to mishandling of the classic rules of English, then maybe you need to follow them a bit. I don't have a specific science to this.

-

No, you think according to the chemical proteins floating around your head. You don't even know the decisions you're making when you make them.

You're a meat based copy machine with a built in justification box.

You're a meat based copy machine with a built in justification box.

Except of course that humans invented language in the first place. So uh, if all we can do is copy, where do you suppose language came from? Ancient aliens?

-

Are we twins? I do the exact same, and have for around a year now. I've also found it pretty helpful.

I did this for a few months when it was new to me, and still go to it when I am stuck pondering something about myself. I usually move on from the conversation by the next day, though, so it's just an inner dialogue enhancer

-

Yes, the first step to determining that AI has no capability for cognition is apparently to admit that neither you nor anyone else has any real understanding of what cognition* is or how it can possibly arise from purely mechanistic computation (either with carbon or with silicon).

Given the paramount importance of the human senses and emotion for consciousness to “happen”

Given? Given by what? Fiction in which robots can't comprehend the human concept called "love"?

*Or "sentience" or whatever other term is used to describe the same concept.

This is always my point when it comes to this discussion. Scientists tend to get to the point of discussion where consciousness is brought up then start waving their hands and acting as if magic is real.

-

As someone who's had two kids since AI really vaulted onto the scene, I am enormously confused as to why people think AI isn't or, particularly, can't be sentient. I hate to be that guy who pretends to be the parenting expert online, but most of the people I know personally who take the non-sentient view on AI don't have kids. The other side usually does.

When it writes an answer to a question, it literally just guesses which letter and word will come next in a sequence – based on the data it’s been trained on.

People love to tout this as some sort of smoking gun. That feels like a trap. Obviously, we can argue about the age children gain sentience, but my year and a half old daughter is building an LLM with pattern recognition, tests, feedback, hallucinations. My son is almost 5, and he was and is the same. He told me the other day that a petting zoo came to the school. He was adamant it happened that day. I know for a fact it happened the week before, but he insisted. He told me later that day his friend's dad was in jail for threatening her mom. That was true, but looked to me like another hallucination or more likely a misunderstanding.

And as funny as it would be to argue that they're both sapient, but not sentient, I don't think that's the case. I think you can make the case that without true volition, AI is sentient but not sapient. I'd love to talk to someone in the middle of the computer science and developmental psychology Venn diagram.

Not to get philosophical, but to answer you we first need to answer what sentience is.

Is it just observable behavior? If so, then wouldn't Kermit the Frog be sentient?

Or does sentience require something more, maybe qualia or some other subjective experience?

If your son says "Dad, I got to go potty", is that him just using an LLM to learn that those words equal going to the bathroom? Or is he doing something more?

-

As someone who's had two kids since AI really vaulted onto the scene, I am enormously confused as to why people think AI isn't or, particularly, can't be sentient. I hate to be that guy who pretends to be the parenting expert online, but most of the people I know personally who take the non-sentient view on AI don't have kids. The other side usually does.

When it writes an answer to a question, it literally just guesses which letter and word will come next in a sequence – based on the data it’s been trained on.

People love to tout this as some sort of smoking gun. That feels like a trap. Obviously, we can argue about the age children gain sentience, but my year and a half old daughter is building an LLM with pattern recognition, tests, feedback, hallucinations. My son is almost 5, and he was and is the same. He told me the other day that a petting zoo came to the school. He was adamant it happened that day. I know for a fact it happened the week before, but he insisted. He told me later that day his friend's dad was in jail for threatening her mom. That was true, but looked to me like another hallucination or more likely a misunderstanding.

And as funny as it would be to argue that they're both sapient, but not sentient, I don't think that's the case. I think you can make the case that without true volition, AI is sentient but not sapient. I'd love to talk to someone in the middle of the computer science and developmental psychology Venn diagram.

I'd love to talk to someone in the middle of the computer science and developmental psychology Venn diagram.

Not that person, but an interesting lecture on that topic

-

Most people, evidently including you, can only ever recycle old ideas. Like modern "AI". Some of us can conceive new ideas.

What new idea exactly are you proposing?

-

I’m neurodivergent, I’ve been working with AI to help me learn about myself and how I think. It’s been exceptionally helpful. A human wouldn’t have been able to help me because I don’t use my senses or emotions like everyone else, and I didn’t know it... AI excels at mirroring and support, which was exactly missing from my life. I can see how this could go very wrong with certain personalities…

E: I use it to give me ideas that I then test out solo.

That sounds fucking dangerous... You really should consult a HUMAN expert about your problem, not an algorithm made to please the interlocutor...

-

We are constantly fed a version of AI that looks, sounds and acts suspiciously like us. It speaks in polished sentences, mimics emotions, expresses curiosity, claims to feel compassion, even dabbles in what it calls creativity.

But what we call AI today is nothing more than a statistical machine: a digital parrot regurgitating patterns mined from oceans of human data (the situation hasn’t changed much since it was discussed here five years ago). When it writes an answer to a question, it literally just guesses which letter and word will come next in a sequence – based on the data it’s been trained on.

This means AI has no understanding. No consciousness. No knowledge in any real, human sense. Just pure probability-driven, engineered brilliance — nothing more, and nothing less.

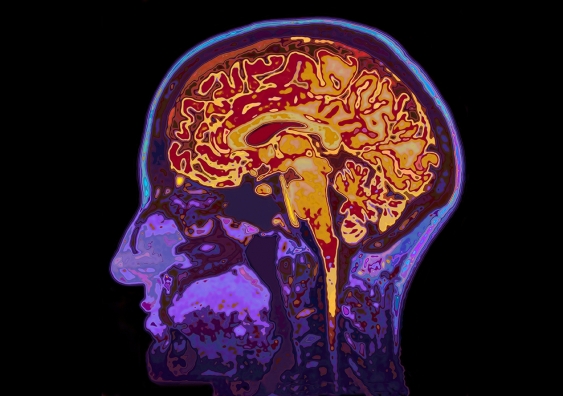

So why is a real “thinking” AI likely impossible? Because it’s bodiless. It has no senses, no flesh, no nerves, no pain, no pleasure. It doesn’t hunger, desire or fear. And because there is no cognition — not a shred — there’s a fundamental gap between the data it consumes (data born out of human feelings and experience) and what it can do with them.

Philosopher David Chalmers calls the mysterious mechanism underlying the relationship between our physical body and consciousness the “hard problem of consciousness”. Eminent scientists have recently hypothesised that consciousness actually emerges from the integration of internal, mental states with sensory representations (such as changes in heart rate, sweating and much more).

Given the paramount importance of the human senses and emotion for consciousness to “happen”, there is a profound and probably irreconcilable disconnect between general AI, the machine, and consciousness, a human phenomenon.

The machinery needed for human thought is certainly a part of AI. At most you can only claim it's not intelligent because intelligence is a specifically human trait.

-

What new idea exactly are you proposing?

Wdym? That depends on what I'm working on. For pressing issues like rising energy consumption, CO2 emissions and civil privacy / social engineering issues I propose heavy data center tariffs for non-essentials (like "AI"). Humanity is going the wrong way on those issues, so we can have shitty memes and cheat at school work until earth spits us out. The cost is too damn high!

-

The machinery needed for human thought is certainly a part of AI. At most you can only claim it's not intelligent because intelligence is a specifically human trait.

We don't even have a clear definition of what "intelligence" is. Yet a lot of people are claiming that they themselves are intelligent and AI models are not.

-

Am I… AI? I do use ellipses and (what I now see is) en dashes for punctuation. Mainly because they are longer than hyphens and look better in a sentence. Em dash looks too long.

However, that's on my phone. On a normal keyboard I use 3 periods and 2 hyphens instead.

I’ve long been an enthusiast of unpopular punctuation—the ellipsis, the em-dash, the interrobang‽

The trick to using the em-dash is not to surround it with spaces which tend to break up the text visually. So, this feels good—to me—whereas this — feels unpleasant. I learnt this approach from reading typographer Erik Spiekermann’s book, *Stop Stealing Sheep & Find Out How Type Works*.

-

As someone who's had two kids since AI really vaulted onto the scene, I am enormously confused as to why people think AI isn't or, particularly, can't be sentient. I hate to be that guy who pretends to be the parenting expert online, but most of the people I know personally who take the non-sentient view on AI don't have kids. The other side usually does.

When it writes an answer to a question, it literally just guesses which letter and word will come next in a sequence – based on the data it’s been trained on.

People love to tout this as some sort of smoking gun. That feels like a trap. Obviously, we can argue about the age children gain sentience, but my year and a half old daughter is building an LLM with pattern recognition, tests, feedback, hallucinations. My son is almost 5, and he was and is the same. He told me the other day that a petting zoo came to the school. He was adamant it happened that day. I know for a fact it happened the week before, but he insisted. He told me later that day his friend's dad was in jail for threatening her mom. That was true, but looked to me like another hallucination or more likely a misunderstanding.

And as funny as it would be to argue that they're both sapient, but not sentient, I don't think that's the case. I think you can make the case that without true volition, AI is sentient but not sapient. I'd love to talk to someone in the middle of the computer science and developmental psychology Venn diagram.

You're drawing the wrong conclusions. Intelligent beings have concepts to validate knowledge. When converting days to seconds, we have a formula that we apply. An LLM just guesses and has no way to verify it. And it's like that for everything.

An example: Perplexity tells me that 9876543210 seconds are 114,305.12 days. A calculator tells me it's 114,311.84. Perplexity even tells me how to calculate it, but it has neither the ability to calculate it nor to verify it.

Same goes for everything. It guesses without being able to grasp the underlying concepts.
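For reference, the conversion in the example above can be checked in a few lines. This is only a sketch of the arithmetic, assuming a day of exactly 86,400 seconds; the function name is illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400, assuming no leap seconds


def seconds_to_days(seconds: int) -> float:
    """Apply the formula: days = seconds / 86,400."""
    return seconds / SECONDS_PER_DAY


seconds = 9_876_543_210
print(f"{seconds_to_days(seconds):,.2f}")  # 114,311.84

# Verify the result by going back the other way with exact integer arithmetic.
whole_days, remainder = divmod(seconds, SECONDS_PER_DAY)
assert whole_days * SECONDS_PER_DAY + remainder == seconds
print(whole_days, remainder)  # 114311 72810
```

This agrees with the calculator figure of 114,311.84 days, not the 114,305.12 reported from Perplexity.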

-

We are constantly fed a version of AI that looks, sounds and acts suspiciously like us. It speaks in polished sentences, mimics emotions, expresses curiosity, claims to feel compassion, even dabbles in what it calls creativity.

But what we call AI today is nothing more than a statistical machine: a digital parrot regurgitating patterns mined from oceans of human data (the situation hasn’t changed much since it was discussed here five years ago). When it writes an answer to a question, it literally just guesses which letter and word will come next in a sequence – based on the data it’s been trained on.

This means AI has no understanding. No consciousness. No knowledge in any real, human sense. Just pure probability-driven, engineered brilliance — nothing more, and nothing less.

So why is a real “thinking” AI likely impossible? Because it’s bodiless. It has no senses, no flesh, no nerves, no pain, no pleasure. It doesn’t hunger, desire or fear. And because there is no cognition — not a shred — there’s a fundamental gap between the data it consumes (data born out of human feelings and experience) and what it can do with them.

Philosopher David Chalmers calls the mysterious mechanism underlying the relationship between our physical body and consciousness the “hard problem of consciousness”. Eminent scientists have recently hypothesised that consciousness actually emerges from the integration of internal, mental states with sensory representations (such as changes in heart rate, sweating and much more).

Given the paramount importance of the human senses and emotion for consciousness to “happen”, there is a profound and probably irreconcilable disconnect between general AI, the machine, and consciousness, a human phenomenon.

Mind your pronouns, my dear. "We" don't do that shit because we know better.

-

As someone who's had two kids since AI really vaulted onto the scene, I am enormously confused as to why people think AI isn't or, particularly, can't be sentient. I hate to be that guy who pretends to be the parenting expert online, but most of the people I know personally who take the non-sentient view on AI don't have kids. The other side usually does.

When it writes an answer to a question, it literally just guesses which letter and word will come next in a sequence – based on the data it’s been trained on.

People love to tout this as some sort of smoking gun. That feels like a trap. Obviously, we can argue about the age children gain sentience, but my year and a half old daughter is building an LLM with pattern recognition, tests, feedback, hallucinations. My son is almost 5, and he was and is the same. He told me the other day that a petting zoo came to the school. He was adamant it happened that day. I know for a fact it happened the week before, but he insisted. He told me later that day his friend's dad was in jail for threatening her mom. That was true, but looked to me like another hallucination or more likely a misunderstanding.

And as funny as it would be to argue that they're both sapient, but not sentient, I don't think that's the case. I think you can make the case that without true volition, AI is sentient but not sapient. I'd love to talk to someone in the middle of the computer science and developmental psychology Venn diagram.

You might consider reading Turing or Searle. They did a great job of addressing the concerns you're trying to raise here. And rebutting the obvious ones, too.

Anyway, you've just shifted the definitional question from "AI" to "sentience". Not only might that be unreasonable, because perhaps a thing can be intelligent without being sentient, it's also no closer to a solid answer to the original issue.

-

I’ve long been an enthusiast of unpopular punctuation—the ellipsis, the em-dash, the interrobang‽

The trick to using the em-dash is not to surround it with spaces which tend to break up the text visually. So, this feels good—to me—whereas this — feels unpleasant. I learnt this approach from reading typographer Erik Spiekermann’s book, *Stop Stealing Sheep & Find Out How Type Works*.

My language doesn't really have hyphenated words or different dashes. It's mostly punctuation within a sentence. As such there are almost no cases where one encounters a dash without spaces.

-

Couldn't agree more - there are some wonderful insights to gain from seeing your own kids grow up, but I don't think this is one of them.

Kids are certainly building a vocabulary and learning about the world, but LLMs don't learn.

LLMs don't learn because we don't let them, not because they can't. It would be too expensive to re-train them on every interaction.

-

I wasn't, and that wasn't my process at all. Go touch grass.

Then, unfortunately, you're even less self-aware than the average LLM chatbot.

-

Wdym? That depends on what I'm working on. For pressing issues like rising energy consumption, CO2 emissions and civil privacy / social engineering issues I propose heavy data center tariffs for non-essentials (like "AI"). Humanity is going the wrong way on those issues, so we can have shitty memes and cheat at school work until earth spits us out. The cost is too damn high!

And are tariffs a new idea or something you recycled from what you've heard before about tariffs?