ChatGPT 'got absolutely wrecked' by Atari 2600 in beginner's chess match — OpenAI's newest model bamboozled by 1970s logic

-

I don't think AI is being marketed as awesome at everything. It's got obvious flaws. Right now it's not good for stuff like chess, probably not even tic-tac-toe. It's a language model; it's hard for it to calculate the playing field. But AI is in development, and it might not need much to start playing chess.

What the tech is being marketed as and what it’s capable of are not the same, and likely never will be. In fact, things are rarely marketed as they truly behave, and that's intentional.

Everyone is still trying to figure out what these Large Reasoning Models and Large Language Models are even capable of; Apple, one of the largest companies in the world, just released a white paper this past week describing the “illusion of reasoning”. If it takes a scientific paper to understand what these models are and are not capable of, I assure you they’ll be selling snake oil for years after we fully understand every nuance of their capabilities.

TL;DR Rich folks want them to be everything, so they’ll be sold as capable of everything until we repeatedly refute that they can do so.

-

Does the author think ChatGPT is in fact an AGI? It's a chatbot. Why would it be good at chess? It's like saying an Atari 2600 running a dedicated chess program can beat Google Maps at chess.

I like referring to LLMs as VI (Virtual Intelligence, from Mass Effect) since they merely give the impression of intelligence but are little more than search engines. In the end, all one is doing is displaying expected results based on a popularity algorithm. However, they do this inconsistently due to bad data in and limited caching.

-

Here you go (online emulator): https://www.retrogames.cz/play_716-Atari2600.php

WTF? I played that just long enough for my queen to take their queen, and it turned my queen into a rook?

Is that even a legit rule in any variation of chess rules?

-

A strange game. How about a nice game of Global Thermonuclear War?

-

Not to help the AI companies, but why don't they program them to look up math programs and outsource chess to other programs when they're asked for that stuff? It's obvious they're shit at it, so why do they answer anyway? It's because they're programmed by know-it-all programmers, isn't it?
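For what it's worth, the routing idea in that question can be sketched in a few lines. This is only an illustration and not a description of how any real chatbot works: `solve_arithmetic`, `route_request`, the backend names, and the keyword check are all hypothetical, made up for this example.

```python
import ast
import operator

# Hypothetical sketch: hand arithmetic to a deterministic evaluator
# instead of letting a language model guess the answer.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

def solve_arithmetic(expr: str) -> float:
    """Evaluate +, -, *, / over numeric literals by walking the parsed AST."""
    def walk(node):
        if isinstance(node, ast.Expression):
            return walk(node.body)
        if (isinstance(node, ast.Constant)
                and isinstance(node.value, (int, float))
                and not isinstance(node.value, bool)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        raise ValueError("unsupported expression")
    return walk(ast.parse(expr, mode="eval"))

def route_request(text: str) -> str:
    """Return the name of the (hypothetical) backend that should handle text."""
    try:
        solve_arithmetic(text)
        return "calculator"
    except ZeroDivisionError:
        return "calculator"  # still arithmetic; the calculator reports the error
    except (ValueError, SyntaxError):
        pass  # not plain arithmetic, fall through to the other checks
    if "chess" in text.lower():
        return "chess_engine"  # assumed name for a dedicated engine
    return "language_model"
```

With this sketch, `route_request("2 + 3 * 4")` picks the calculator and `route_request("play chess with me")` picks the chess engine. A real system would need something far more robust than a keyword match to decide when to delegate.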

Because the LLMs are now being used to vibe code themselves.

-

Yeah, just because I can't count the number of r's in the word strawberry doesn't mean I shouldn't be put in charge of the US nuclear arsenal!

That is more a failure of the person who made that decision than a failing of ChatBots, lol

-

I don't think AI is being marketed as awesome at everything. It's got obvious flaws. Right now it's not good for stuff like chess, probably not even tic-tac-toe. It's a language model; it's hard for it to calculate the playing field. But AI is in development, and it might not need much to start playing chess.

Really then why are they cramming AI into every app and every device and replacing jobs with it and claiming they’re saving so much time and money and they’re the best now the hardest working most efficient company and this is the future and they have a director of AI vision that’s right a director of AI vision a true visionary to lead us into the promised land where we will make money automatically please bro just let this be the automatic money cheat oh god I’m about to

-

I don't think AI is being marketed as awesome at everything. It's got obvious flaws. Right now it's not good for stuff like chess, probably not even tic-tac-toe. It's a language model; it's hard for it to calculate the playing field. But AI is in development, and it might not need much to start playing chess.

Marketing does not mean functionality. AI is absolutely being sold to the public and enterprises as something that can solve everything. Obviously it can't, but it's being sold that way. I would bet the average person would be surprised by this headline based solely on what they've heard about the capabilities of AI.

-

Does the author think ChatGPT is in fact an AGI? It's a chatbot. Why would it be good at chess? It's like saying an Atari 2600 running a dedicated chess program can beat Google Maps at chess.

In all fairness, machine learning in chess engines is actually pretty strong.

AlphaZero was developed by the artificial intelligence and research company DeepMind, which was acquired by Google. It is a computer program that reached a virtually unthinkable level of play using only reinforcement learning and self-play in order to train its neural networks. In other words, it was only given the rules of the game and then played against itself many millions of times (44 million games in the first nine hours, according to DeepMind).

Source: "AlphaZero - Chess Engines", Chess.com (www.chess.com)

-

Because they’re fucking terrible at designing tools to solve problems, they are obviously less and less good at pretending this is an omnitool that can do everything with perfect coherency (and if it isn’t working right it’s because you’re not believing or paying hard enough)

Or they keep telling you that you just have to wait it out. It’s going to get better and better!

-

Does the author think ChatGPT is in fact an AGI? It's a chatbot. Why would it be good at chess? It's like saying an Atari 2600 running a dedicated chess program can beat Google Maps at chess.

I mean, OpenAI seems to forget it isn’t.

-

What the tech is being marketed as and what it’s capable of are not the same, and likely never will be. In fact, things are rarely marketed as they truly behave, and that's intentional.

Everyone is still trying to figure out what these Large Reasoning Models and Large Language Models are even capable of; Apple, one of the largest companies in the world, just released a white paper this past week describing the “illusion of reasoning”. If it takes a scientific paper to understand what these models are and are not capable of, I assure you they’ll be selling snake oil for years after we fully understand every nuance of their capabilities.

TL;DR Rich folks want them to be everything, so they’ll be sold as capable of everything until we repeatedly refute that they can do so.

I think in many cases people intentionally or unintentionally disregard the time component here. AI is in development. I think what is being marketed here, just like in the stock market, is a piece of the future. I don't expect the models I use to be perfect and never make mistakes, so I use them accordingly. They are useful for what I use them for, and I wouldn't use them for chess.

I don't expect laundry detergent to be as perfect as it is in the commercial either.

-

I'm often impressed by how good ChatGPT is at generating text, but I'll admit it's hilariously terrible at chess. It loves to manifest pieces out of thin air, or make absurd illegal moves, like jumping its king halfway across the board and claiming checkmate.

ChatGPT is playing Anarchy Chess
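For illustration, the king jump described above is the kind of move a few lines of ordinary code can reject. This is a rough sketch of a geometry check only, with made-up helper names (`parse_square`, `is_king_step`); it is not a full legality checker and ignores castling, checks, and occupied squares.

```python
def parse_square(square: str) -> tuple[int, int]:
    """Convert algebraic notation such as 'e1' to (file, rank) indices 0..7."""
    if len(square) != 2 or square[0] not in "abcdefgh" or square[1] not in "12345678":
        raise ValueError(f"not a board square: {square!r}")
    return ord(square[0]) - ord("a"), int(square[1]) - 1

def is_king_step(src: str, dst: str) -> bool:
    """True if dst is exactly one square from src in any direction.

    Geometry only: ignores castling, checks, and occupied squares.
    """
    src_file, src_rank = parse_square(src)
    dst_file, dst_rank = parse_square(dst)
    # A king step changes file and rank by at most one, and must actually move.
    return max(abs(src_file - dst_file), abs(src_rank - dst_rank)) == 1
```

So `is_king_step("e1", "e2")` passes, while a king "jumping" from e1 to e5 fails the check. A dedicated chess program applies rules like this for every piece on every move, which is exactly the bookkeeping a text generator has no built-in mechanism for.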

-

Really then why are they cramming AI into every app and every device and replacing jobs with it and claiming they’re saving so much time and money and they’re the best now the hardest working most efficient company and this is the future and they have a director of AI vision that’s right a director of AI vision a true visionary to lead us into the promised land where we will make money automatically please bro just let this be the automatic money cheat oh god I’m about to

Those are two different things.

-

They are cramming AI everywhere because nobody wants to miss the boat and because it plays well in the stock market.

-

The people claiming it's awesome, that they're doing I-don't-know-what with it and replacing people, are mostly influencers and a few deluded people.

AI can help people in many different roles today, so it makes sense to use it. Even in roles where it is not particularly useful yet, it makes sense to prepare for when it is.

-

Marketing does not mean functionality. AI is absolutely being sold to the public and enterprises as something that can solve everything. Obviously it can't, but it's being sold that way. I would bet the average person would be surprised by this headline based solely on what they've heard about the capabilities of AI.

I don't think anyone is so stupid as to believe current AI can solve everything.

And honestly, I haven't seen any marketing material that would claim that.

-

I don't think anyone is so stupid as to believe current AI can solve everything.

And honestly, I haven't seen any marketing material that would claim that.

You are both completely overestimating the intelligence level of "anyone" and not living in the same AI-marketed universe as the rest of us. People are stupid. Really stupid.

-

You say you produce good oranges but my machine for testing apples gave your oranges a very low score.

-

Okay, but could ChatGPT be used to vibe code a chess program that beats the Atari 2600?

-

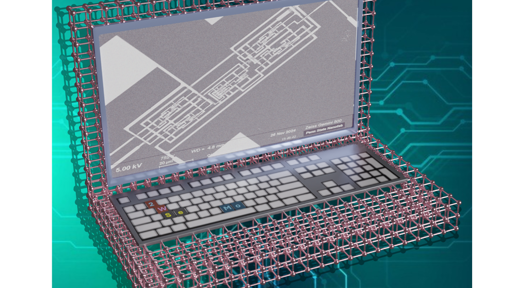

Prepare to be delighted. Full disclosure, my Atari isn't hooked up and also I don't have the Video Chess cart even if it was, so this was fetched from Google Images.

I'm annoyed the pieces are bottom adjusted...

-

Does the author think ChatGPT is in fact an AGI? It's a chatbot. Why would it be good at chess? It's like saying an Atari 2600 running a dedicated chess program can beat Google Maps at chess.

Articles like this are good because they expose the flaws in AI and show that it can't be trusted with complex multi-step tasks.

They help people who think AI is close to human see that it's not, and that it's missing critical functionality.