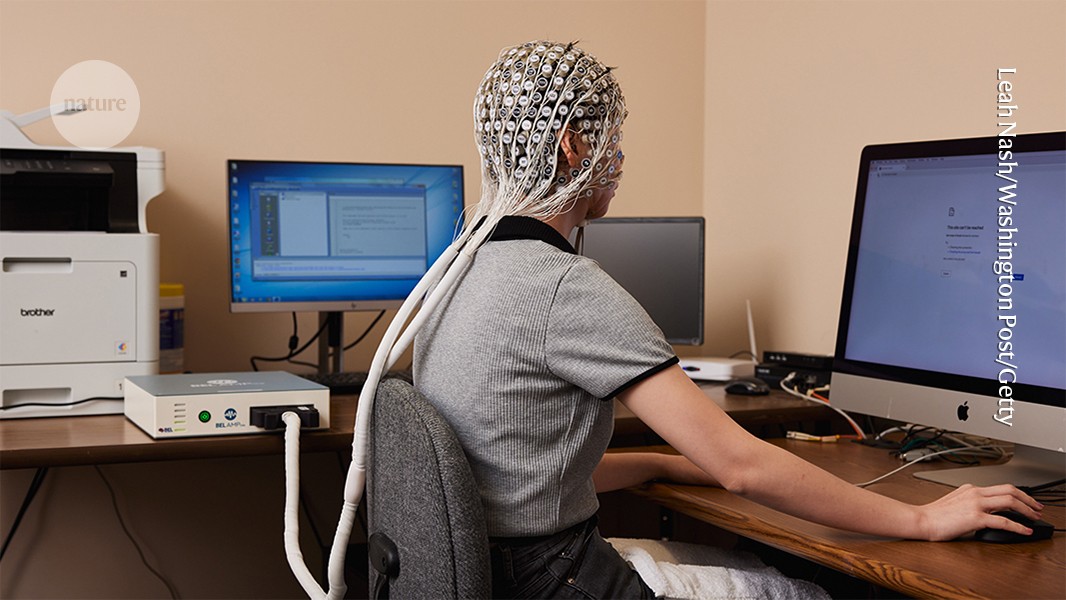

Does using ChatGPT change your brain activity? Study sparks debate

-

Scientists warn against reading too much into a small experiment generating a lot of buzz.

-

Scientists warn against reading too much into a small experiment generating a lot of buzz.

Does walking change your brain activity?

-

Scientists warn against reading too much into a small experiment generating a lot of buzz.

It definitely does in my experience. I have intentionally used it for specific tasks for defined periods of time. And then stopped and used only my normal online search tools and a text editor without AI assistance. My projects were written concept development, plus some light coding to create utility scripts.

From just my own experience there is definitely a real cognitive hazard associated with using LLMs at all, for all but the most specialized tasks where an LLM is really warranted.

The scripts worked fine, as they were quite simple Python utilities for some data cleaning, so I see a use there. But I found that the concepts never caught fire in my imagination, whereas usually a good share of concepts developed manually turn into something that gets a deeper treatment, even a prototype design at least.

-

Does walking change your brain activity?

There seem to be hundreds of studies on that, and the answer seems to be a fairly uniform "Yes" and "More than you would guess", etc.

Here is one: https://journals.sagepub.com/doi/10.3233/ADR-220062

-

Does walking change your brain activity?

Yeah, it pumps more blood. You need no more.

-

It definitely does in my experience. I have intentionally used it for specific tasks for defined periods of time. And then stopped and used only my normal online search tools and a text editor without AI assistance. My projects were written concept development, plus some light coding to create utility scripts.

From just my own experience there is definitely a real cognitive hazard associated with using LLMs at all, for all but the most specialized tasks where an LLM is really warranted.

The scripts worked fine, as they were quite simple Python utilities for some data cleaning, so I see a use there. But I found that the concepts never caught fire in my imagination, whereas usually a good share of concepts developed manually turn into something that gets a deeper treatment, even a prototype design at least.

I find myself thinking harder and learning more when I use AI. I'm constantly thinking about what I can do to double-check it. I constantly look at what it writes and consider whether it did the task I asked it to do or the task I need done.

I'm on track to rewrite 25,000 lines of code from one testing framework to another in 3 days, and I started out not knowing either framework and not having really written in TypeScript in years. And I'm pretty sure I can write the tests from scratch in my primary project that is just getting started.

This one anecdote doesn't disprove a study, of course, but it seems to me that the findings are not universally true for some reason. Whether it's a matter of technique or brain chemistry, I don't know. Ideally, people could be taught to use AI to improve their thinking rather than supplant it.

-

Scientists warn against reading too much into a small experiment generating a lot of buzz.

Everything you do changes your brain activity.

This isn’t about using ChatGPT broadly, but specifically about the difference between writing an essay with the help of an LLM versus doing it without. And in this case, I think it all comes down to how you use it. If you just have it write the essay for you, then of course it won’t stimulate your brain to the same extent - that’s like hiring someone to go to the gym for you.

Personally, the way I use it to help with my writing is by doing all the writing myself first. Only after that do I let it check for grammatical errors and help improve the clarity and flow by making minor structural adjustments - while keeping the tone and message of my original draft intact.

For me, the purpose of writing is to convert abstract thoughts into language and pass that information along, hoping the reader understands it well enough that it forms the same idea in their mind. If ChatGPT can help untangle my word salad and make that process more effective, I welcome it.

-

I find myself thinking harder and learning more when I use AI. I'm constantly thinking about what I can do to double-check it. I constantly look at what it writes and consider whether it did the task I asked it to do or the task I need done.

I'm on track to rewrite 25,000 lines of code from one testing framework to another in 3 days, and I started out not knowing either framework and not having really written in TypeScript in years. And I'm pretty sure I can write the tests from scratch in my primary project that is just getting started.

This one anecdote doesn't disprove a study, of course, but it seems to me that the findings are not universally true for some reason. Whether it's a matter of technique or brain chemistry, I don't know. Ideally, people could be taught to use AI to improve their thinking rather than supplant it.

But do you also sometimes leave AI out of the steps it often does for you, like the conceptualisation or the implementation?

Would it be possible for you to do these steps as efficiently as before you used AI? Would you be able to spot the mistakes the AI makes in these steps, even months or years down the line?

The main issue I have with AI being used for tasks is that it deprives you of the chance to use logic by applying it to real-life scenarios, the thing we excel at. It would be better to use AI in the opposite direction from how you currently use it: to develop methods for viewing your work critically. After all, if there is one thing a lot of people are bad at, it's thorough critical thinking. We just suck at knowing all the edge cases and how to test for them.

Let the AI come up with unit tests, let it be the one that questions your work, in order to get a better perspective on it.