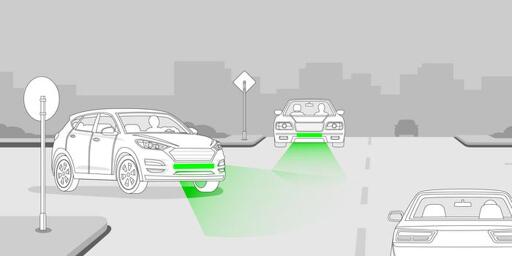

Front Brake Lights Could Drastically Diminish Road Accident Rates

-

BMW has implemented this in the US market for at least the past 20 years or so. Under heavy braking, additional brake lights turn on. I believe they call that Brake Force Display. I'm sure they're not the only manufacturer to do this, too.

BMWs need a speeding indication more than a braking one /s

-

Okay, then advocate for better urban planning and less urban sprawl. Unless you live in a rural area, you shouldn't be required to go into debt for a multi-ton machine just to be able to buy food. It's odd that only in the last hundred years have humans stopped being able to function day to day without vehicles.

You all talk a big game, but I've been to about 20 countries so far in my life, spread all over the world. They ALL have traffic dominated by cars. I guess I haven't been to Mumbai.

Your assumptions are wrong, and you live in fantasy. Get over it.

-

Because the designers and marketers were given priority over the safety engineers.

And anyone that drives cars ever, apparently.

-

Clearly it's your fault for both breaking and going around a corner at the same time. Who does that?

Can you edit that to 'braking', and I'll delete this comment, please? It's breaking my brain.

-

Agreed. Are they turning, or just braking periodically with a taillight out? Who knows!

I also love the front turn signals that turn off that headlight. Dumb as hell for everyone.

Also, animated signals should be banned. On or off, no flashing, glowing, or sliding.

Don't even get me started on the wild and wonderful design 'features' on modern cars. Fucking hell, they want you to do anything but drive the thing and pay attention to the road.

-

Can you edit that to 'braking', and I'll delete this comment, please? It's breaking my brain.

It's too late, he already broke his car while going around the corner.

-

Good luck transporting a couch on a motorbike.

And that's exactly why you don't need to buy a truck to transport goods once in a blue moon.

-

Define safe? If everyone drives safely enough that you are more likely to die of suicide than an automobile accident, is that safe enough?

That is a weird question.

How do you calculate the odds of dying by suicide, anyway? Wouldn't they be personal?

-

Absolutely, but that doesn't solve the problem that's talked about here (seeing the turn signal from the other side of the vehicle). It might be clearer what the turn signal is, but if you look at the right side of a vehicle, you won't be able to see the left headlight, even when it's massive.

When am I ever looking at the side and needing to see the other side's turn signal? The best I can think of is (using right side driving) a car turning right into my lane of travel as I'm going straight, but I'll be a bit offset to the left and should be able to see the right headlight. If I can't, that means the car is angled to the right, making it obvious that they're turning.

-

BMWs need a speeding indication more than a braking one /s

Haha, funnily enough, some BMWs have a feature where the speedometer reads 5 MPH higher than the actual vehicle speed. Probably to try to cut down on speeding.

-

They'll likely give those front brake lights an amber color.

It's possible. Red really is only supposed to be on the back to indicate the rear of the vehicle.

It's why, on stretches of road where passing in oncoming lanes is legal, they tell you to turn on your headlights (daytime headlight sections). It's so that there is a distinguishing feature between the front and rear of the car.

-

The thing is, you want the turn signal to turn on before the start of the turn, so other drivers, pedestrians, cyclists can react.

I cannot stand how, in some vehicles, if I turn on the signal to indicate I am planning to change lanes, it beeps at me that there is a car there. I'm indicating that I plan to, not that I'm turning the wheel right this second. I know there is a car beside me; I'm going to change lanes behind it, but I'm signaling mostly to the car behind it.

-

How would you do that so it isn't ugly as hell and isn't prone to misunderstanding?

< and > for turns. X for brakes.

Honestly, we should focus on functionality rather than aesthetics.

-

How would that work? On the highway, a slight nudge on a straight means you'll cross a lane, meaning turn signals on.

A kilometer later, the exact same slight nudge could mean it's just a light turn in the road, meaning signals off.

Now, you could mandate cameras in all vehicles that analyze your driving and turn on the turn signals when they think you're making a turn. But then who's responsible for a false positive, if someone else swerves to avoid you and crashes because your signals suddenly came on without you turning? Except it wasn't you, it was your car. Oh, and you'd also have made entry-level cars 10k more expensive, putting them even further out of reach if you aren't rich.

It wouldn't indicate for slight turns, only standard turns. Normal curves in the road may engage it, but it's meant as a "hey, this person is actively turning" or a "this car's wheels are turned that way," so you know which direction it will go if it starts moving.

But honestly, even if it did, it isn't hard to see "oh, that car is on a curve, obviously it's not turning."

-

< and > for turns. X for brakes.

Honestly, we should focus on functionality rather than aesthetics.

That doesn't answer the question. The question is how you would design it so you can look at the left side of a car, know that it's turning right and isn't prone to misunderstandings.

-

When am I ever looking at the side and needing to see the other side's turn signal? The best I can think of is (using right side driving) a car turning right into my lane of travel as I'm going straight, but I'll be a bit offset to the left and should be able to see the right headlight. If I can't, that means the car is angled to the right, making it obvious that they're turning.

Because this is what the discussion is about?

Personally I just want front turn signals to be visible from the opposite side again.

-

Links or it didn't happen.

-

You all talk a big game, but I've been to about 20 countries so far in my life, spread all over the world. They ALL have traffic dominated by cars. I guess I haven't been to Mumbai.

Your assumptions are wrong, and you live in fantasy. Get over it.

I'm not sure what your point is. I never said cars weren't leading traffic. I think we've both kind of lost the plot here, but my point was that cars aren't a requirement to live. If they are in your city or area (outside of rural areas), then you should advocate for better transit and urban design.

Also note that I never said cars should be outright banned or anything of the sort.

-

The combined indicator/brake light thing you guys do is fucking stupid, so there's a precedent.

I've always hated that. I feel like I'm seeing it less and less on newer vehicles, though, so maybe manufacturers are also realizing that it's stupid as hell.

Or maybe it's just not worth the cost to have two different but mostly identical versions of a very expensive and highly integrated modern taillight housing for different markets.

-

Accidents aren't isolated, though; they'll "sort themselves out" by hitting good drivers and other people too.

Well, around here "good drivers" can "read" the bad drivers' intent, and in a setting like a four-way stop they can usually avoid getting hit by yielding, regardless of right-of-way circumstances.